On measuring grounding and generalizing grounding problems
cs.AI updates on arXiv.org
arXiv:2512.06205v1 Announce Type: new

Abstract: The symbol grounding problem asks how tokens like cat can be about cats, as opposed to mere shapes manipulated in a calculus. We recast grounding from a binary judgment into an audit across desiderata, each indexed by an evaluation tuple (context, meaning type, threat model, reference distribution): authenticity (mechanisms reside inside the agent and, for strong claims, were acquired through learning or evolution); preservation (atomic meanings remain intact); faithfulness, both correlational (realized meanings match intended ones) and etiological (internal mechanisms causally contribute to success); robustness (graceful degradation under declared perturbations); compositionality (the whole is built systematically from the parts). We apply this framework to four grounding modes (symbolic; referential; vectorial; relational) and three case studies: model-theoretic semantics achieves exact composition but lacks etiological warrant; large language models show correlational fit and local robustness for linguistic tasks, yet lack selection-for-success on world tasks without grounded interaction; human language meets the desiderata under strong authenticity through evolutionary and developmental acquisition. By operationalizing a philosophical inquiry about representation, we equip philosophers of science, computer scientists, linguists, and mathematicians with a common language and technical framework for systematic investigation of grounding and meaning.


DaGRPO: Rectifying Gradient Conflict in Reasoning via Distinctiveness-Aware Group Relative Policy Optimization
cs.AI updates on arXiv.org
arXiv:2512.06337v1 Announce Type: new

Abstract: The evolution of Large Language Models (LLMs) has catalyzed a paradigm shift from superficial instruction following to rigorous long-horizon reasoning. While Group Relative Policy Optimization (GRPO) has emerged as a pivotal mechanism for eliciting such post-training reasoning capabilities due to its exceptional performance, it remains plagued by significant training instability and poor sample efficiency. We theoretically identify the root cause of these issues as the lack of distinctiveness within on-policy rollouts: for routine queries, highly homogeneous samples induce destructive gradient conflicts; whereas for hard queries, the scarcity of valid positive samples results in ineffective optimization. To bridge this gap, we propose Distinctiveness-aware Group Relative Policy Optimization (DaGRPO). DaGRPO incorporates two core mechanisms: (1) Sequence-level Gradient Rectification, which utilizes fine-grained scoring to dynamically mask sample pairs with low distinctiveness, thereby eradicating gradient conflicts at the source; and (2) Off-policy Data Augmentation, which introduces high-quality anchors to recover training signals for challenging tasks. Extensive experiments across 9 mathematical reasoning and out-of-distribution (OOD) generalization benchmarks demonstrate that DaGRPO significantly surpasses existing SFT, GRPO, and hybrid baselines, achieving new state-of-the-art performance (e.g., a +4.7% average accuracy gain on math benchmarks). Furthermore, in-depth analysis confirms that DaGRPO effectively mitigates gradient explosion and accelerates the emergence of long-chain reasoning capabilities.
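The failure mode on hard queries can be illustrated with the group-relative advantage that GRPO is commonly described as using: each rollout's reward is normalized by the mean and standard deviation of its own group. The sketch below is a minimal illustration under that assumption, not DaGRPO's rectification or augmentation mechanism; the function name, the `eps` constant, and the choice of population standard deviation are hypothetical.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-6):
    """Normalize each rollout's reward against its own group (assumed GRPO form)."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; implementations may differ here
    return [(r - mu) / (sigma + eps) for r in rewards]


# A mixed group: correct rollouts get positive advantages, wrong ones negative.
mixed = group_relative_advantages([1.0, 0.0, 0.0, 1.0])

# A hard query where every rollout fails: all advantages are zero,
# so the group contributes no learning signal.
all_failed = group_relative_advantages([0.0, 0.0, 0.0, 0.0])

print(mixed)
print(all_failed)
```

When every sampled answer receives the same reward, the numerator is zero for all rollouts, which is one way to see why a scarcity of valid positive samples leaves the optimizer with nothing to learn from.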


Large Language Models Miss the Multi-Agent Mark
cs.AI updates on arXiv.org
arXiv:2505.21298v4 Announce Type: replace-cross

Abstract: Recent interest in Multi-Agent Systems of Large Language Models (MAS LLMs) has led to an increase in frameworks leveraging multiple LLMs to tackle complex tasks. However, much of this literature appropriates the terminology of MAS without engaging with its foundational principles. In this position paper, we highlight critical discrepancies between MAS theory and current MAS LLMs implementations, focusing on four key areas: the social aspect of agency, environment design, coordination and communication protocols, and measuring emergent behaviours. Our position is that many MAS LLMs lack multi-agent characteristics such as autonomy, social interaction, and structured environments, and often rely on oversimplified, LLM-centric architectures. The field may slow down and lose traction by revisiting problems the MAS literature has already addressed. Therefore, we systematically analyse this issue and outline associated research opportunities; we advocate for better integrating established MAS concepts and more precise terminology to avoid mischaracterisation and missed opportunities.


How Sharp and Bias-Robust is a Model? Dual Evaluation Perspectives on Knowledge Graph Completion
cs.AI updates on arXiv.org
arXiv:2512.06296v1 Announce Type: new

Abstract: Knowledge graph completion (KGC) aims to predict missing facts from the observed KG. While a number of KGC models have been studied, the evaluation of KGC still remains underexplored. In this paper, we observe that existing metrics overlook two key perspectives for KGC evaluation: (A1) predictive sharpness, the degree of strictness in evaluating an individual prediction, and (A2) popularity-bias robustness, the ability to predict low-popularity entities. To reflect both perspectives, we propose a novel evaluation framework (PROBE), which consists of a rank transformer (RT) that estimates the score of each prediction based on a required level of predictive sharpness and a rank aggregator (RA) that aggregates all the scores in a popularity-aware manner. Experiments on real-world KGs reveal that existing metrics tend to over- or under-estimate the accuracy of KGC models, whereas PROBE yields a comprehensive understanding of KGC models and reliable evaluation results.
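For context, the sketch below computes two rank-based metrics that are standard in KGC evaluation, mean reciprocal rank (MRR) and Hits@k. It assumes these are among the "existing metrics" the abstract refers to; it does not implement PROBE, and the function names and example ranks are hypothetical.

```python
def mean_reciprocal_rank(ranks):
    """Average of 1/rank over all test predictions (rank 1 is best)."""
    return sum(1.0 / r for r in ranks) / len(ranks)


def hits_at_k(ranks, k):
    """Fraction of test predictions whose true entity is ranked within the top k."""
    return sum(1 for r in ranks if r <= k) / len(ranks)


# Hypothetical ranks of the true entity for four test triples.
ranks = [1, 2, 10, 50]

print(mean_reciprocal_rank(ranks))  # rewards rank 1 far more than rank 10
print(hits_at_k(ranks, 10))         # treats rank 1 and rank 10 identically
```

The two metrics apply different, fixed levels of strictness to an individual prediction, and both average uniformly over test triples regardless of how popular the target entity is.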


FLEX: Continuous Agent Evolution via Forward Learning from Experience
cs.AI updates on arXiv.org
arXiv:2511.06449v2 Announce Type: replace-cross

Abstract: Autonomous agents driven by Large Language Models (LLMs) have revolutionized reasoning and problem-solving but remain static after training, unable to grow with experience as intelligent beings do during deployment. We introduce Forward Learning with EXperience (FLEX), a gradient-free learning paradigm that enables LLM agents to continuously evolve through accumulated experience. Specifically, FLEX cultivates scalable and inheritable evolution by constructing a structured experience library through continual reflection on successes and failures during interaction with the environment. FLEX delivers substantial improvements on mathematical reasoning, chemical retrosynthesis, and protein fitness prediction (up to 23% on AIME25, 10% on USPTO50k, and 14% on ProteinGym). We further identify a clear scaling law of experiential growth and the phenomenon of experience inheritance across agents, marking a step toward scalable and inheritable continuous agent evolution. Project Page: https://flex-gensi-thuair.github.io.


A Unified Perspective for Loss-Oriented Imbalanced Learning via Localization
cs.AI updates on arXiv.org
arXiv:2310.04752v2 Announce Type: replace-cross

Abstract: Due to the inherent imbalance in real-world datasets, naïve Empirical Risk Minimization (ERM) tends to bias the learning process towards the majority classes, hindering generalization to minority classes. To rebalance the learning process, one straightforward yet effective approach is to modify the loss function via class-dependent terms, such as re-weighting and logit-adjustment. However, existing analysis of these loss-oriented methods remains coarse-grained and fragmented, failing to explain some empirical results. After reviewing prior work, we find that the properties used in their analysis are typically global, i.e., defined over the whole dataset. Hence, these properties fail to effectively capture how class-dependent terms influence the learning process. To bridge this gap, we explore the localized versions of such properties, i.e., defined within each class. Specifically, we employ localized calibration to provide consistency validation across a broader range of losses and localized Lipschitz continuity to provide a fine-grained generalization bound. In this way, we reach a unified perspective for improving and adjusting loss-oriented methods. Finally, a principled learning algorithm is developed based on these insights. Empirical results on both traditional ResNets and foundation models validate our theoretical analyses and demonstrate the effectiveness of the proposed method.
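To make "class-dependent terms" concrete, the sketch below shows two forms that are common in the imbalanced-learning literature: a cross-entropy scaled by a per-class weight, and a cross-entropy with `tau * log(prior)` added to each logit. These are assumed illustrative forms, not necessarily the losses analysed in the paper; the function names, `tau`, and the example priors are hypothetical.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax over a list of logits."""
    m = max(logits)
    lse = m + math.log(sum(math.exp(z - m) for z in logits))
    return [z - lse for z in logits]


def reweighted_ce(logits, label, class_weights):
    """Cross-entropy scaled by a per-class weight (e.g. inverse frequency)."""
    return -class_weights[label] * log_softmax(logits)[label]


def logit_adjusted_ce(logits, label, class_priors, tau=1.0):
    """Cross-entropy after shifting each logit by tau * log(class prior)."""
    adjusted = [z + tau * math.log(p) for z, p in zip(logits, class_priors)]
    return -log_softmax(adjusted)[label]


# Two classes with priors 0.9 (majority) and 0.1 (minority), uninformative logits.
priors = [0.9, 0.1]
logits = [0.0, 0.0]

print(logit_adjusted_ce(logits, 0, priors))  # small loss for a majority example
print(logit_adjusted_ce(logits, 1, priors))  # larger loss for a minority example
print(reweighted_ce(logits, 1, [1 / 0.9, 1 / 0.1]))
```

With equal logits, the adjusted loss is larger for the minority example, so the model has to produce a larger raw margin on minority classes to reach the same loss.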


AI Application in Anti-Money Laundering for Sustainable and Transparent Financial Systems
cs.AI updates on arXiv.org
arXiv:2512.06240v1 Announce Type: new

Abstract: Money laundering and financial fraud remain major threats to global financial stability, costing trillions annually and challenging regulatory oversight. This paper reviews how artificial intelligence (AI) applications can modernize Anti-Money Laundering (AML) workflows by improving detection accuracy, lowering false-positive rates, and reducing the operational burden of manual investigations, thereby supporting more sustainable development. It further highlights future research directions including federated learning for privacy-preserving collaboration, fairness-aware and interpretable AI, reinforcement learning for adaptive defenses, and human-in-the-loop visualization systems to ensure that next-generation AML architectures remain transparent, accountable, and robust. In the final part, the paper proposes an AI-driven KYC application that integrates graph-based retrieval-augmented generation (RAG Graph) with generative models to enhance efficiency, transparency, and decision support in KYC processes related to money-laundering detection. Experimental results show that the RAG-Graph architecture delivers high faithfulness and strong answer relevancy across diverse evaluation settings, thereby enhancing the efficiency and transparency of KYC CDD/EDD workflows and contributing to more sustainable, resource-optimized compliance practices.


Bridging the Silence: How LEO Satellites and Edge AI Will Democratize Connectivity
Towards Data Science

Why on-device intelligence and low-orbit constellations are the only viable path to universal accessibility

The post Bridging the Silence: How LEO Satellites and Edge AI Will Democratize Connectivity appeared first on Towards Data Science.


The Machine Learning “Advent Calendar” Day 8: Isolation Forest in Excel
Towards Data Science

Isolation Forest may look technical, but its idea is simple: isolate points using random splits. If a point is isolated quickly, it is an anomaly; if it takes many splits, it is normal.

Using the tiny dataset 1, 2, 3, 9, we can see the logic clearly. We build several random trees, measure how many splits each point needs, average the depths, and convert them into anomaly scores. Short depths become scores close to 1, long depths close to 0.
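The steps above can be sketched in a few lines of Python for the one-dimensional dataset 1, 2, 3, 9. This is a minimal illustration, not the post's Excel implementation: it assumes distinct values, samples an independent random isolation path per point instead of building shared trees, and assumes the score normalization from the original Isolation Forest formulation, `2 ** (-mean_depth / c(n))`. All function names are hypothetical.

```python
import math
import random

EULER_GAMMA = 0.5772156649


def average_path_length(n):
    """c(n): normalizing depth for a sample of n points."""
    if n <= 1:
        return 0.0
    if n == 2:
        return 1.0
    harmonic = math.log(n - 1) + EULER_GAMMA  # approximation of H(n - 1)
    return 2.0 * harmonic - 2.0 * (n - 1) / n


def isolation_depth(target, points, rng):
    """Number of random splits until `target` is alone (assumes distinct values)."""
    depth = 0
    while len(points) > 1:
        split = rng.uniform(min(points), max(points))
        # Keep only the points on the same side of the split as the target.
        points = [p for p in points if (p < split) == (target < split)]
        depth += 1
    return depth


def anomaly_scores(data, n_trees=200, seed=0):
    """Average the isolation depth per point and map it to a score in (0, 1)."""
    rng = random.Random(seed)
    c = average_path_length(len(data))
    scores = {}
    for x in data:
        depths = [isolation_depth(x, data, rng) for _ in range(n_trees)]
        mean_depth = sum(depths) / n_trees
        scores[x] = 2.0 ** (-mean_depth / c)
    return scores


for point, score in anomaly_scores([1, 2, 3, 9]).items():
    print(point, round(score, 3))
```

Because most random splits over the range 1 to 9 land between 3 and 9, the point 9 is usually isolated on the first split and ends up with the highest score.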

The Excel implementation is painful, but the algorithm itself is elegant. It scales to many features, makes no assumptions about distributions, and even works with categorical data. Above all, Isolation Forest asks a different question: not “What is normal?”, but “How fast can I isolate this point?”

The post The Machine Learning “Advent Calendar” Day 8: Isolation Forest in Excel appeared first on Towards Data Science.


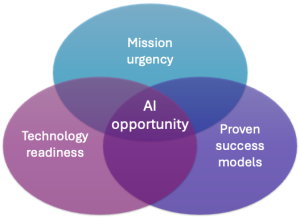

How AWS delivers generative AI to the public sector in weeks, not years
Artificial Intelligence

Experts at the Generative AI Innovation Center share several strategies to help organizations excel with generative AI.
