Author: Derrick D. Jackson

Title: Founder & Senior Director of Cloud Security Architecture & Risk

Credentials: CISSP, CRISC, CCSP

Last updated October 30th, 2025



Understanding the Foundation of AI Management Systems

Your organization just decided to implement AI systems. You’ve heard about ISO 42001, the international standard for AI management systems published in December 2023. But where do you actually start?

The foundational section addresses organizational context. It’s not about algorithms or data models. It’s about understanding your organization’s unique situation before you deploy a single AI system.

Disclaimer: This article discusses general principles for AI governance in alignment with ISO standards. Organizations should consult ISO 42001:2023 directly for specific compliance requirements.

EXECUTIVE SUMMARY

- Foundation work takes 2-6 months and requires cross-functional teams

- Organizations identify stakeholders, define governance boundaries, and establish systematic oversight

- Critical for EU AI Act compliance and regulatory navigation

- Integrates with existing ISO management systems (27001, 9001)

What Is ISO 42001 Clause 4: Foundation

The foundational requirements establish how organizations understand their operating environment before implementing AI systems. Clause 4, titled "Context of the organization," organizes these concepts around understanding your organization, identifying stakeholders (interested parties), defining the boundaries of the AI management system, and establishing the management system itself.

ISO management systems use a harmonized structure to maintain consistency across different standards. This means organizations familiar with ISO 27001, ISO 9001, or other management system standards will recognize the pattern.

In plain English: Harmonized structure means ISO uses the same organizational pattern for all management systems. If you’ve worked with ISO 27001 or ISO 9001, this will look familiar.

Here’s what makes this approach different from generic compliance checklists. Traditional frameworks often jump straight into technical controls. The ISO 42001 Clause 4 foundation forces you to pause and ask fundamental questions. Who are you as an organization? What external pressures shape your AI decisions? Which stakeholders will be affected by your systems?

Organizations implementing AI governance in alignment with ISO standards typically document these considerations and maintain them throughout the AI management system lifecycle.


Why Context Matters for AI Governance

Three factors make organizational context critically important right now.

Regulatory Complexity: The EU AI Act entered into force in August 2024, with obligations phasing in through 2027. Clear scope definitions and stakeholder mapping help organizations determine which AI systems may fall under high-risk categories. Understanding your operating context helps navigate these requirements.

Broader Stakeholder Impact: AI systems affect more people than traditional IT systems. A loan approval AI impacts applicants, loan officers, regulators, and communities experiencing potential bias. Stakeholder identification helps organizations map these complex relationships.

Specialized Roles: AI governance frameworks recognize that organizations can be providers, developers, deployers, users, or multiple roles simultaneously. The NIST AI Risk Management Framework and ISO standards emphasize that different roles carry different responsibilities. Understanding your role shapes which governance approaches apply.

KEY TAKEAWAY: Organizations without clear context analysis risk scope creep, missed stakeholders, and audit failures. A 2-4 month investment in foundation work can prevent a year or more of rework.

Core Components of an AI Governance Framework

Understanding Your Operating Environment

Organizations implementing AI governance typically analyze both external and internal factors that shape their AI work.

External factors often include regulatory requirements, cultural attitudes toward AI, competitive landscape, and market trends. Internal factors typically cover governance structures, existing policies, contractual obligations, and the intended purposes of AI systems.

Organizations also commonly consider environmental factors, as AI systems can have significant impacts through energy consumption and resource use.

Most critically, organizations determine their role relative to AI systems. Are you offering systems to others? Building models internally? Implementing systems in operational environments? Consuming AI services? These role determinations shape governance responsibilities throughout the AI lifecycle.
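To make role determination concrete, here is a minimal Python sketch that records roles per AI system and derives the organization-wide picture from them. The `Role` labels simply mirror the four questions above; the class names, system names, and inventory are hypothetical illustrations, not structures defined by ISO 42001.

```python
from dataclasses import dataclass, field
from enum import Enum


class Role(Enum):
    # Labels mirror the four questions in the text. They are illustrative and
    # are NOT the normative role definitions of ISO/IEC 42001 or ISO/IEC 22989.
    PROVIDER = "offers AI systems to others"
    DEVELOPER = "builds models internally"
    DEPLOYER = "implements systems in operational environments"
    USER = "consumes AI services"


@dataclass
class AISystem:
    name: str
    roles: set[Role] = field(default_factory=set)  # one system can carry several roles


def organization_roles(inventory: list[AISystem]) -> set[Role]:
    """The organization-wide picture is the union of per-system roles."""
    combined: set[Role] = set()
    for system in inventory:
        combined |= system.roles
    return combined


def undetermined_systems(inventory: list[AISystem]) -> list[str]:
    """List systems whose role has not been documented yet."""
    return [system.name for system in inventory if not system.roles]


# Hypothetical inventory, for illustration only.
inventory = [
    AISystem("credit-scoring-model", {Role.DEVELOPER, Role.DEPLOYER}),
    AISystem("vendor-chatbot", {Role.DEPLOYER}),
    AISystem("translation-service", {Role.USER}),
    AISystem("forecasting-pilot"),
]

print(sorted(role.name for role in organization_roles(inventory)))
# ['DEPLOYER', 'DEVELOPER', 'USER']
print(undetermined_systems(inventory))
# ['forecasting-pilot']
```

Recording roles per system, rather than once for the whole organization, lets the same inventory show that you develop one model while only consuming another, and it surfaces systems whose role nobody has determined yet.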

Stakeholder Identification and Requirements

Organizations identify which stakeholders are relevant to their AI management approach, understand their specific requirements, and decide which requirements will be addressed through governance processes.

In plain English: “Interested parties” = anyone who cares about or is affected by your AI systems. This includes customers, employees, regulators, and people your AI makes decisions about.

Stakeholders extend beyond shareholders and customers. In AI contexts, they commonly include data subjects whose information trains models, communities affected by algorithmic decisions, regulators enforcing AI-specific rules, and technical staff implementing systems.

The determination involves understanding specific stakeholder requirements and making documented decisions about which requirements your governance framework will address. A healthcare AI provider might identify patients, clinicians, administrators, insurance companies, and regulators as stakeholders, each with distinct requirements ranging from clinical accuracy to regulatory compliance.

Defining Program Boundaries

Effective AI governance defines clear boundaries about what’s included in the management approach. This determination typically considers both the operating context and stakeholder requirements identified earlier.

Organizations document these boundaries clearly, stating what’s included and what’s excluded from their AI governance program. The boundaries determine which activities are subject to governance requirements across leadership, planning, support, operations, and improvement activities.

Organizations can’t simply exclude inconvenient AI systems to avoid governance. Boundary determinations should be justifiable based on context and stakeholder analysis. An AI system processing sensitive personal data and affecting people’s rights needs appropriate governance coverage.
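A lightweight way to keep boundary decisions justifiable is to store a rationale with every exclusion and check exclusions against the analysis. The sketch below assumes one rule drawn from the paragraph above (a system that processes sensitive personal data and affects people's rights should not be excluded); the `ScopeDecision` fields and system names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ScopeDecision:
    system: str
    included: bool
    justification: str = ""
    processes_sensitive_data: bool = False
    affects_rights: bool = False


def review_exclusions(decisions: list[ScopeDecision]) -> list[str]:
    """Flag exclusions that lack a justification or contradict the analysis."""
    findings = []
    for d in decisions:
        if d.included:
            continue  # only exclusions get extra scrutiny in this sketch
        if not d.justification.strip():
            findings.append(f"{d.system}: excluded without a documented justification")
        if d.processes_sensitive_data and d.affects_rights:
            findings.append(f"{d.system}: excluded despite sensitive data and impact on rights")
    return findings


# Hypothetical scope decisions, for illustration only.
decisions = [
    ScopeDecision("loan-approval-model", included=True,
                  processes_sensitive_data=True, affects_rights=True),
    ScopeDecision("capital-allocation-tool", included=False,
                  justification="Internal portfolio tool; makes no decisions about individuals"),
    ScopeDecision("cv-screening-model", included=False,
                  processes_sensitive_data=True, affects_rights=True),
]

for finding in review_exclusions(decisions):
    print(finding)
```

Here the justified exclusion passes, while the unjustified exclusion of a rights-affecting system is flagged twice, once for each problem.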

Establishing the Management System

Organizations establish, implement, maintain, and continually improve systematic approaches to AI governance. This includes the processes needed and their interactions, in alignment with ISO standards.

The emphasis on continual improvement aligns with other ISO management system standards. Your governance approach isn’t a static document you create once and file away. It evolves as your organization’s AI capabilities mature, as technology changes, and as regulatory requirements shift.

In plain English: Your AI governance isn’t “done” after implementation. You review and update it regularly as your AI capabilities and regulations evolve.

Documentation becomes the foundation for audits, certifications, and demonstrating accountability to regulators and stakeholders.

KEY TAKEAWAY: The four components are sequential, not parallel. Context analysis informs stakeholder identification, which shapes boundary definition, which determines governance design.

ISO 42001 Clause 4 Implementation Best Practices

Getting Started

Organizations implementing AI governance in alignment with ISO standards typically need top management commitment and cross-functional input. IT teams understand technical systems, legal knows regulatory obligations, business units know operational realities, and risk management brings analytical frameworks.

Genuine analysis takes time. Organizations that rush through foundation work often face problems later, when their scope doesn't match reality or their stakeholder analysis misses critical parties.

Building Your Foundation

Environmental Scanning: Gather information about regulatory requirements applicable to your AI work. Document cultural and ethical considerations relevant to your jurisdiction. Assess competitive landscape and market dynamics.

For internal factors, document current governance structures, existing policies, and contractual obligations that constrain your AI choices. Determine your role for each AI system (developing, deploying, using, providing).

Stakeholder Mapping: Create a comprehensive stakeholder map including internal stakeholders (developers, business leaders, compliance officers) and external stakeholders (customers, users, data subjects, regulators, affected communities).

For each stakeholder, document their relationship to your AI systems and their specific requirements. Decide which requirements you’ll address through your governance framework.
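The stakeholder steps above can be kept as a simple register in which every requirement carries an explicit decision. This is a minimal sketch assuming a hypothetical data model; the entries loosely follow the healthcare example earlier in the article, and the insurer requirement is invented for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Requirement:
    description: str
    addressed: Optional[bool] = None  # None means no decision documented yet
    rationale: str = ""


@dataclass
class Stakeholder:
    name: str
    relationship: str
    requirements: list[Requirement] = field(default_factory=list)


def open_items(register: list[Stakeholder]) -> list[str]:
    """Requirements with no decision, or rejected without a rationale."""
    items = []
    for s in register:
        for r in s.requirements:
            if r.addressed is None:
                items.append(f"{s.name}: no decision on '{r.description}'")
            elif not r.addressed and not r.rationale.strip():
                items.append(f"{s.name}: '{r.description}' rejected without rationale")
    return items


# Hypothetical register, for illustration only.
register = [
    Stakeholder("Patients", "People whose diagnoses the AI informs",
                [Requirement("Clinical accuracy", True, "Core patient-safety requirement")]),
    Stakeholder("Clinicians", "Users who act on AI-assisted results",
                [Requirement("Explanation of model outputs")]),
    Stakeholder("Insurance companies", "Payers relying on diagnostic results",
                [Requirement("Access to model performance data", False)]),
    Stakeholder("Regulators", "Authorities overseeing the product",
                [Requirement("Regulatory compliance", True, "Legal obligation")]),
]

for item in open_items(register):
    print(item)
```

Rejecting a requirement is a legitimate outcome; the sketch only insists that the decision and its reason are written down.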

Boundary Definition: Define clear boundaries about which business units, AI systems, geographic regions, and lifecycle stages are included in your governance program.

Write the scope statement in clear language. Specify inclusions and exclusions explicitly. Make the documentation accessible to relevant stakeholders.
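Storing scope as structured data and rendering the plain-language statement from it is one way to keep inclusions and exclusions explicit. The sketch below assumes the four dimensions named above (business units, AI systems, geographic regions, lifecycle stages); the organization, units, and systems are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ScopeDimension:
    name: str
    included: list[str] = field(default_factory=list)
    excluded: list[str] = field(default_factory=list)


def render_scope_statement(org: str, dimensions: list[ScopeDimension]) -> str:
    """Produce a plain-language statement with explicit inclusions and exclusions."""
    lines = [f"Scope of the {org} AI management system:"]
    for dim in dimensions:
        included = ", ".join(dim.included) or "none"
        excluded = ", ".join(dim.excluded) or "none"  # say "none" rather than stay silent
        lines.append(f"- {dim.name}: includes {included}; excludes {excluded}.")
    return "\n".join(lines)


# Hypothetical values, for illustration only.
statement = render_scope_statement("Example Bank", [
    ScopeDimension("Business units", ["Retail Lending"], ["Treasury"]),
    ScopeDimension("AI systems", ["customer-facing credit models"],
                   ["internal capital allocation tools"]),
    ScopeDimension("Geographic regions", ["EU"]),
    ScopeDimension("Lifecycle stages", ["design", "development", "deployment", "monitoring"]),
])
print(statement)
```

Printing "excludes none" is a deliberate choice: it shows a dimension was considered rather than forgotten.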

Framework Design: Design the processes needed to govern AI systems responsibly. At minimum, consider processes for risk assessment, impact evaluation, control implementation, performance measurement, and continual improvement.

Define how these processes interact and document the governance structure clearly. Use RACI matrices to assign roles (Responsible, Accountable, Consulted, Informed) for each process. Keep documentation in version-controlled repositories with change logs; common options include Confluence, SharePoint, or dedicated GRC platforms such as Archer or ServiceNow. Create process flow diagrams showing decision points, escalation paths, and integration touchpoints with existing systems. Schedule quarterly reviews of process effectiveness, tracking metrics such as cycle time, stakeholder satisfaction, and audit findings.
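RACI matrices are easy to get subtly wrong. The sketch below checks two widely used RACI conventions (exactly one Accountable and at least one Responsible per process); these are common practice rather than ISO 42001 requirements, and the processes and role titles are hypothetical.

```python
VALID_CODES = {"R", "A", "C", "I"}


def validate_raci(matrix: dict[str, dict[str, str]]) -> list[str]:
    """Check each process for exactly one Accountable and at least one Responsible."""
    problems = []
    for process, assignments in matrix.items():
        codes = list(assignments.values())
        unknown = sorted(set(codes) - VALID_CODES)
        if unknown:
            problems.append(f"{process}: unknown codes {unknown}")
        accountable = codes.count("A")
        if accountable != 1:
            problems.append(f"{process}: needs exactly one Accountable, found {accountable}")
        if "R" not in codes:
            problems.append(f"{process}: no Responsible role assigned")
    return problems


# Hypothetical role assignments, for illustration only.
raci = {
    "Risk assessment": {"AI Risk Manager": "A", "Data Science Lead": "R",
                        "Legal": "C", "Business Owner": "I"},
    "Impact evaluation": {"Ethics Lead": "A", "AI Risk Manager": "A", "Product Owner": "C"},
    "Continual improvement": {"AI Governance Lead": "A", "Internal Audit": "R"},
}

for problem in validate_raci(raci):
    print(problem)
```

Running a check like this before each quarterly review keeps ownership gaps from surfacing for the first time during an audit.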

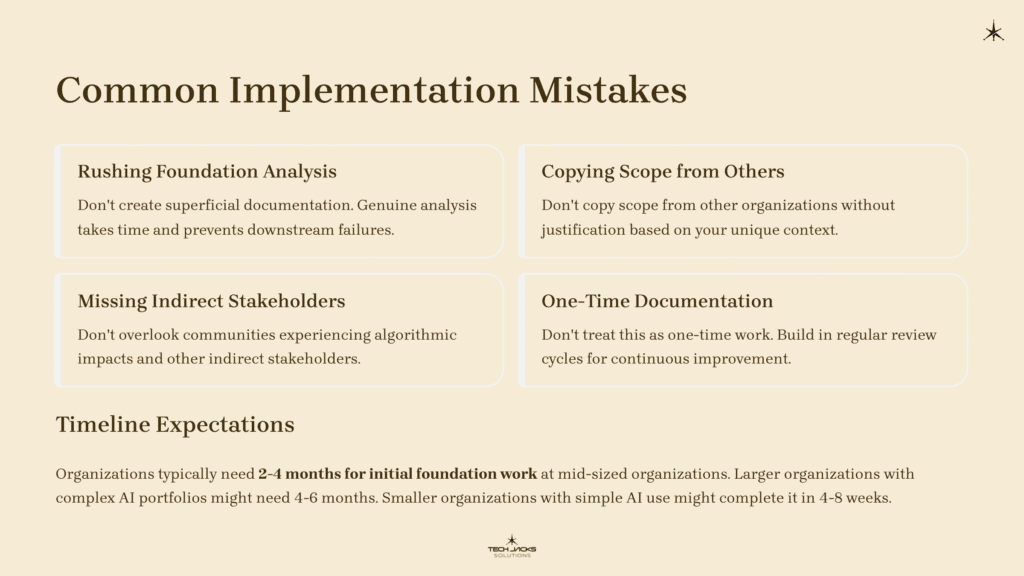

4 Sequential Foundations of AI Governance by Lisa Yu

Common Implementation Mistakes

- Don’t rush through foundation analysis with superficial documentation.

- Don’t copy scope from other organizations without justification.

- Don’t miss indirect stakeholders like communities experiencing algorithmic impacts.

- Don’t treat this as one-time work; build in regular review cycles.

- Don’t create documentation disconnected from operational reality.

Timeline Expectations

Mid-sized organizations with moderate AI deployment typically need 2-4 months for initial foundation work. Larger organizations with complex AI portfolios might need 4-6 months, while smaller organizations with simple AI use might complete it in 4-8 weeks.

KEY TAKEAWAY: Cross-functional engagement isn’t optional. AI governance fails when IT leads alone. Include legal, risk, ethics, and business units from day one.

AI Governance Implementation Examples

Financial Services: A European bank deploying credit risk assessment AI identified multiple regulatory frameworks (EU AI Act, GDPR, banking regulations) through environmental analysis. Their stakeholder mapping identified loan applicants, civil rights organizations, regulators, and risk management teams. The boundary definition covered customer-facing lending models but excluded internal capital allocation tools, focusing governance on areas with highest regulatory and fairness concerns.

Healthcare: A medical device company developing diagnostic imaging AI determined their role as developer and provider rather than deployer. This shaped their governance focus toward design controls and validation testing. Stakeholder analysis identified radiologists, hospitals, patients, FDA, and patient advocacy groups. The program boundaries covered diagnostic products subject to FDA oversight while excluding internal IT systems.

Manufacturing: A global manufacturer implementing predictive maintenance AI analyzed how different facility locations operated under varying regulatory regimes. Environmental considerations included energy consumption and operational efficiency impacts. Stakeholder mapping identified maintenance technicians, safety officers, and works councils. Initial boundaries covered high-risk equipment affecting worker safety, later expanding to energy-intensive systems.

KEY TAKEAWAY: Role determination drives everything. Developers face different requirements than deployers. Document your role for each AI system separately, not just organization-wide.

AI Management System Integration Considerations

Governance Alignment: Organizations implementing AI governance often align with multiple frameworks. The EU AI Act’s requirements for risk management systems parallel the organizational understanding emphasized in ISO standards. The NIST AI Risk Management Framework’s “Map” function similarly focuses on establishing context, and its “Govern” function addresses engagement with relevant AI actors and stakeholders.

Integration with Existing Systems: Organizations already certified to ISO 27001, ISO 9001, or ISO 14001 can extend existing context documentation to cover AI-specific considerations. Add AI elements like model transparency requirements and algorithmic fairness expectations to existing analyses.

Role Determination Complexity: Most organizations play multiple roles simultaneously. A healthcare system might develop some AI models internally, deploy commercial AI products, and use AI systems from vendors. Document role determinations at the system level, not just organization level.

Dynamic Context Management: Operating context isn’t static. Build regular review cycles into your governance framework. Annual reviews work as a baseline, with more frequent reviews triggered by new AI deployments, regulatory changes, organizational restructuring, or significant incidents.
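The review cadence described above (an annual baseline plus event-driven reviews) can be expressed as a small decision function. This is a sketch under stated assumptions: the trigger identifiers and the 365-day interval are chosen for illustration.

```python
from datetime import date, timedelta

# Trigger events named in the text; the identifiers themselves are hypothetical.
REVIEW_TRIGGERS = {
    "new_ai_deployment",
    "regulatory_change",
    "organizational_restructuring",
    "significant_incident",
}


def review_status(last_review: date, today: date, events_since_review: set[str],
                  interval: timedelta = timedelta(days=365)) -> tuple[bool, str]:
    """Return (review_needed, reason) using an annual baseline plus event triggers."""
    triggered = sorted(events_since_review & REVIEW_TRIGGERS)
    if triggered:
        return True, "triggered by " + ", ".join(triggered)
    next_due = last_review + interval
    if today >= next_due:
        return True, "annual baseline review is due"
    return False, f"next baseline review due {next_due.isoformat()}"


print(review_status(date(2025, 1, 15), date(2025, 6, 1), set()))
# (False, 'next baseline review due 2026-01-15')
print(review_status(date(2025, 1, 15), date(2025, 6, 1), {"regulatory_change"}))
# (True, 'triggered by regulatory_change')
print(review_status(date(2024, 5, 1), date(2025, 6, 1), set()))
# (True, 'annual baseline review is due')
```

Events outside the trigger list are ignored, so routine changes don't force a full context review.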

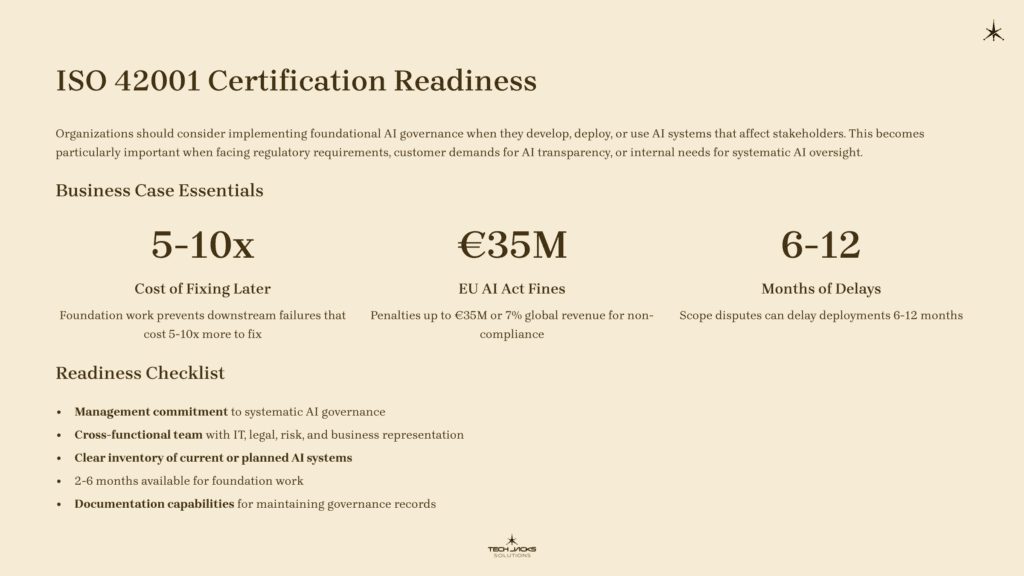

ISO 42001 Certification Readiness

Organizations should consider implementing foundational AI governance when they develop, deploy, or use AI systems that affect stakeholders. This becomes particularly important when facing regulatory requirements, customer demands for AI transparency, or internal needs for systematic AI oversight.

Organizations already certified to other ISO management systems can integrate AI governance more easily due to the harmonized structure. The foundational work provides clarity about which AI systems need governance and what levels of oversight are appropriate.

Business Case Essentials: Foundation work prevents downstream failures that can cost 5-10x more to fix. Organizations skipping context analysis face regulatory penalties (EU AI Act fines for prohibited practices of up to €35M or 7% of worldwide annual turnover, whichever is higher), failed audits requiring complete rework, scope disputes delaying deployments by 6-12 months, and erosion of stakeholder trust. The 2-6 month investment protects against multi-year compliance debt and enables faster, lower-risk AI scaling.
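For context on the penalty figure: under Article 99 of the EU AI Act, fines for prohibited AI practices can reach €35M or 7% of total worldwide annual turnover for the preceding financial year, whichever is higher; for SMEs and start-ups, whichever is lower applies. The sketch below only illustrates that arithmetic for this highest tier; other categories of infringement carry lower caps, and the turnover figures are hypothetical.

```python
def max_fine_eur(worldwide_turnover_eur: float, is_sme: bool = False,
                 fixed_cap_eur: float = 35_000_000.0, turnover_percent: float = 7) -> float:
    """Ceiling for the prohibited-practices tier: the fixed amount or a share of
    prior-year worldwide annual turnover, whichever is higher (lower for SMEs)."""
    turnover_based = worldwide_turnover_eur * turnover_percent / 100
    if is_sme:
        return min(fixed_cap_eur, turnover_based)
    return max(fixed_cap_eur, turnover_based)


print(max_fine_eur(1_000_000_000))            # 70000000.0: 7% exceeds EUR 35M
print(max_fine_eur(200_000_000))              # 35000000.0: the fixed amount is higher
print(max_fine_eur(10_000_000, is_sme=True))  # 700000.0: SMEs get the lower figure
```

The point for the business case is that, for large organizations, the turnover-based figure rather than the fixed €35M often sets the ceiling.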

Readiness Checklist:

- Management commitment to systematic AI governance

- Cross-functional team with IT, legal, risk, and business representation

- Clear inventory of current or planned AI systems

- 2-6 months available for foundation work

- Documentation capabilities for maintaining governance records

ISO 42001 Clause 4 Requirements FAQ

How does this differ from other ISO management system standards?

The structure follows the harmonized format used across ISO management systems like ISO 27001 and ISO 9001. The difference is AI-specific content: determining your role relative to AI systems, considering environmental impacts of AI operations, and identifying stakeholders like data subjects and affected communities who may not have direct relationships with your organization.

Do I need all foundational components?

Organizations seeking alignment with ISO standards typically address all foundational components: understanding operating context, identifying stakeholders, defining program boundaries, and establishing governance frameworks. Each component builds on the previous ones.

How often should I review my foundation work?

Annual reviews work as a baseline, with more frequent reviews triggered by new AI deployments, regulatory changes, organizational restructuring, or significant incidents. Document your review schedule and show evidence of regular updates.

Can my governance scope differ from other management systems?

Yes. Your information security scope might cover all IT systems while your AI governance scope might focus only on systems using AI technologies. Ensure scopes are clearly documented and their relationship explained.

How do I handle AI systems from vendors?

Determine your role for each system. Using vendor AI systems typically makes you a user or deployer, depending on your level of control. Your governance responsibilities differ based on your role. Organizations using external AI services still need governance over how those systems are selected, deployed, and monitored.

AI Governance Standards and Resources – ISO 42001 Toolkit

Official Standards:

- ISO/IEC 42001:2023 – AI management systems standard (available through ISO and national standards bodies)

- ISO/IEC 22989:2022 – AI concepts and terminology

- ISO/IEC 42005:2025 – Guidance for AI system impact assessment

Regulatory Documents:

- EU AI Act (Regulation (EU) 2024/1689) – EU regulatory framework for AI

- NIST AI Risk Management Framework – US framework for AI risk management

Related Standards:

- ISO/IEC 27001:2022 – Information security management (shares harmonized structure)

- ISO 31000:2018 – Risk management guidelines

Your Next Steps: ISO 42001 Guidance

This Week:

☐ Review your current AI system inventory

☐ Identify initial stakeholder groups

☐ Assess management commitment level

This Month:

☐ Form cross-functional team

☐ Schedule context analysis workshops

☐ Allocate 2-6 month implementation timeline


Understanding your context comes first. For organizations serious about AI governance, the foundational work isn’t optional. It’s where everything begins.