An introduction to AWS Bedrock (Towards Data Science)

The how, why, what and where of Amazon’s LLM access layer

The post An introduction to AWS Bedrock appeared first on Towards Data Science.

Veo 3.1 Ingredients to Video: More consistency, creativity and control (Google DeepMind News)

Our latest Veo update generates lively, dynamic clips that feel natural and engaging, and supports vertical video generation.

The Complete Guide to Logging for Python Developers (KDnuggets)

Stop using print statements and start logging like a pro. This guide shows Python developers how to log smarter and debug faster.

From ‘Dataslows’ to Dataflows: The Gen2 Performance Revolution in Microsoft Fabric (Towards Data Science)

Dataflows were (rightly?) considered “the slowest and least performant option” for ingesting data into Power BI/Microsoft Fabric. However, things are changing rapidly, and the latest Dataflow enhancements change how we play the game.

The post From ‘Dataslows’ to Dataflows: The Gen2 Performance Revolution in Microsoft Fabric appeared first on Towards Data Science.

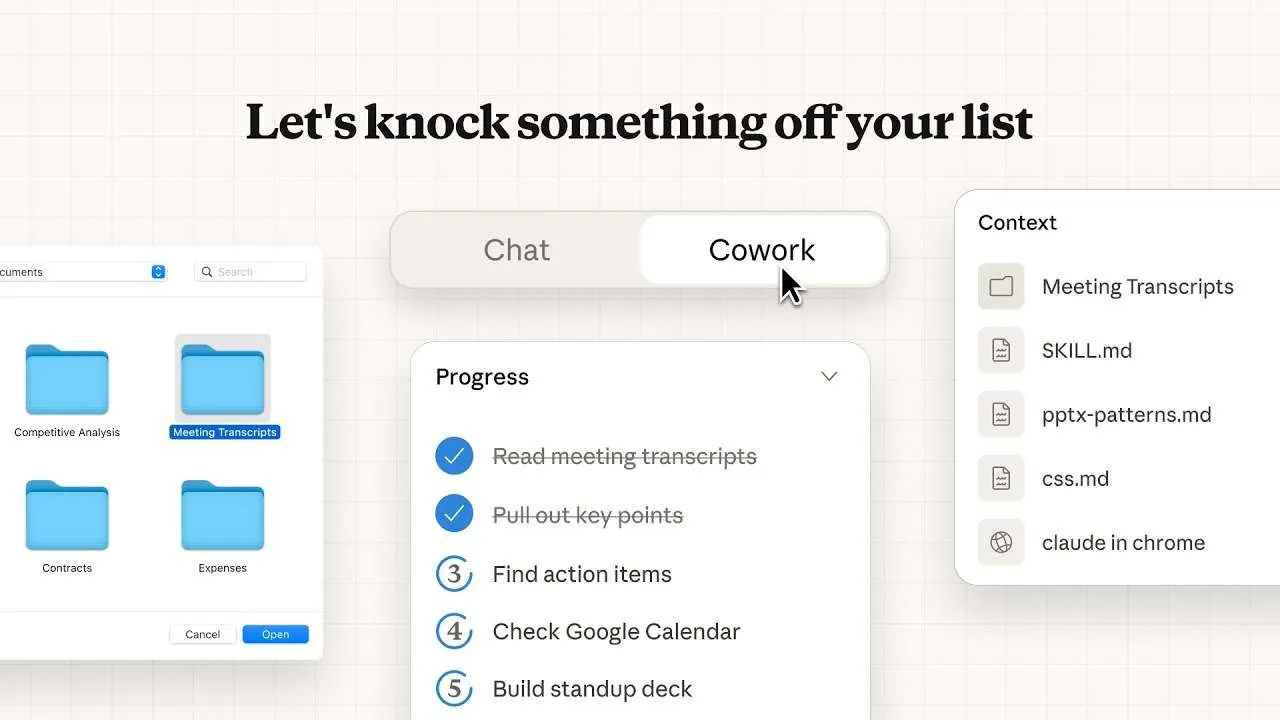

Anthropic Releases Cowork As Claude’s Local File System Agent For Everyday Work (MarkTechPost)

Anthropic has released Cowork, a new feature that runs agentic workflows on local files for non-coding tasks. It is currently available in research preview inside the Claude macOS desktop app.

What Cowork Does At The File System Level

Cowork currently runs as a dedicated mode in the Claude desktop app. When you start a Cowork session, […]

The post Anthropic Releases Cowork As Claude’s Local File System Agent For Everyday Work appeared first on MarkTechPost.

CSV vs. Parquet vs. Arrow: Storage Formats Explained (KDnuggets)

Same data, different formats, very different performance.

Under the Uzès Sun: When Historical Data Reveals the Climate Change (Towards Data Science)

Longer summers, milder winters: analysis of temperature trends in Uzès, France, year after year.

The post Under the Uzès Sun: When Historical Data Reveals the Climate Change appeared first on Towards Data Science.

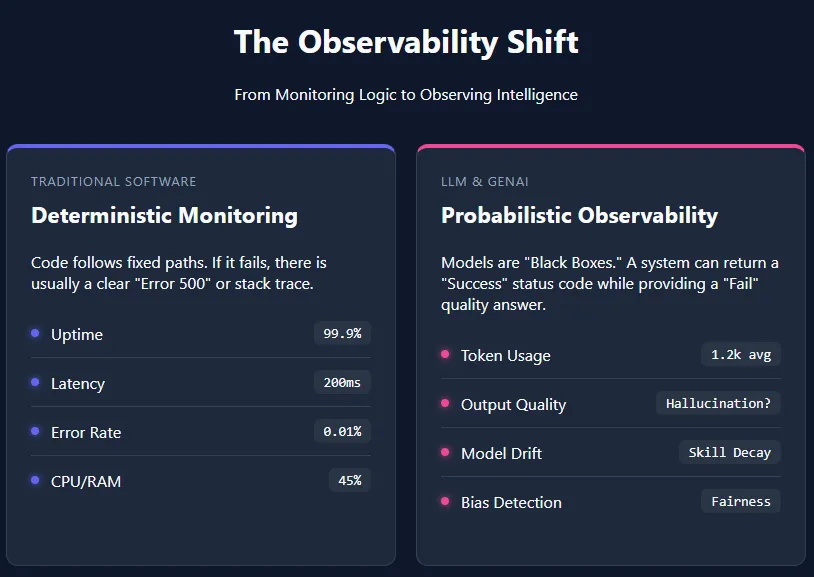

Understanding the Layers of AI Observability in the Age of LLMs (MarkTechPost)

Artificial intelligence (AI) observability refers to the ability to understand, monitor, and evaluate AI systems by tracking their unique metrics, such as token usage, response quality, latency, and model drift. Unlike traditional software, large language models (LLMs) and other generative AI applications are probabilistic in nature. They do not follow fixed, transparent execution paths, which makes […]

The post Understanding the Layers of AI Observability in the Age of LLMs appeared first on MarkTechPost.

January 12th TJS Weekly Security Intelligence Briefing

Table of Contents
1. Executive Summary
2. Critical Action Items
3. Key Security Stories
   Story 1: React2Shell (CVE-2025-55182) – Critical RCE Under Widespread Exploitation
   Story 2: MongoDB MongoBleed Under Active Exploitation
   Story 3: Chinese-Linked Actors Exploited VMware ESXi Zero-Days for Nearly […]

KALE-LM-Chem: Vision and Practice Toward an AI Brain for Chemistry (cs.AI updates on arXiv.org)

arXiv:2409.18695v3 Announce Type: replace

Abstract: Recent advancements in large language models (LLMs) have demonstrated strong potential for enabling domain-specific intelligence. In this work, we present our vision for building an AI-powered chemical brain, which frames chemical intelligence around four core capabilities: information extraction, semantic parsing, knowledge-based QA, and reasoning & planning. We argue that domain knowledge and logic are essential pillars for enabling such a system to assist and accelerate scientific discovery. To initiate this effort, we introduce our first generation of large language models for chemistry: KALE-LM-Chem and KALE-LM-Chem-1.5, which have achieved outstanding performance in tasks related to the field of chemistry. We hope that our work serves as a strong starting point, helping to realize more intelligent AI and promoting the advancement of human science and technology, as well as societal development.
