Enabling MoE on the Edge via Importance-Driven Expert Scheduling
cs.AI updates on arXiv.org
arXiv:2508.18983v2 Announce Type: replace

Abstract: The Mixture of Experts (MoE) architecture has emerged as a key technique for scaling Large Language Models by activating only a subset of experts per query. Deploying MoE on consumer-grade edge hardware, however, is constrained by limited device memory, making dynamic expert offloading essential. Unlike prior work that treats offloading purely as a scheduling problem, we leverage expert importance to guide decisions, substituting low-importance activated experts with functionally similar ones already cached in GPU memory, thereby preserving accuracy. As a result, this design reduces memory usage and data transfer, while largely eliminating PCIe overhead. In addition, we introduce a scheduling policy that maximizes the reuse ratio of GPU-cached experts, further boosting efficiency. Extensive evaluations show that our approach delivers 48% lower decoding latency with over 60% expert cache hit rate, while maintaining nearly lossless accuracy.
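The substitution idea in the abstract can be illustrated with a small sketch. This is not the paper's algorithm: the function name `schedule_experts`, the use of normalized gate weight as the importance score, the fixed `importance_threshold`, and the precomputed `similarity` table are all assumptions made here for illustration.

```python
from typing import Dict, List, Set, Tuple


def schedule_experts(
    gate_weights: Dict[int, float],
    cached: Set[int],
    similarity: Dict[Tuple[int, int], float],
    importance_threshold: float = 0.2,
) -> Tuple[List[int], List[int]]:
    """Hypothetical per-token, per-layer scheduling step.

    gate_weights: router weight for each activated expert id.
    cached: expert ids currently resident in GPU memory.
    similarity: assumed precomputed score for (activated, cached) pairs.
    Returns (experts_to_run, experts_to_fetch_over_pcie).
    """
    total = sum(gate_weights.values())
    to_run: List[int] = []
    to_fetch: List[int] = []
    # Visit activated experts from highest to lowest gate weight.
    for expert, weight in sorted(gate_weights.items(), key=lambda kv: -kv[1]):
        importance = weight / total  # assumption: importance = normalized gate weight
        if expert in cached:
            to_run.append(expert)  # cache hit, no transfer needed
            continue
        # Cached experts that are neither activated nor already used as a substitute.
        candidates = [c for c in cached if c not in to_run and c not in gate_weights]
        if importance < importance_threshold and candidates:
            # Low-importance miss: run the most similar cached expert instead.
            best = max(candidates, key=lambda c: similarity.get((expert, c), 0.0))
            to_run.append(best)
        else:
            # Important miss (or nothing to substitute): load the real expert.
            to_run.append(expert)
            to_fetch.append(expert)
    return to_run, to_fetch


if __name__ == "__main__":
    run, fetch = schedule_experts(
        gate_weights={3: 0.7, 5: 0.2, 9: 0.1},
        cached={3, 4, 7},
        similarity={(5, 4): 0.9, (5, 7): 0.3, (9, 4): 0.8, (9, 7): 0.6},
        importance_threshold=0.15,
    )
    print("run:", run, "fetch:", fetch)
```

In this sketch only experts that are both uncached and above the threshold trigger a transfer, which is one plausible way the described design could cut PCIe traffic while keeping the highly weighted experts exact.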

Data Cleaning at the Command Line for Beginner Data Scientists
KDnuggets

Data cleaning doesn’t always require Python or Excel. Learn how simple command-line tools can help you clean datasets faster and more efficiently.

How to choose the best thermal binoculars for long-range detection in 2026
AI News

Choosing the right thermal binoculars is essential for security professionals and outdoor specialists who need reliable long-range detection. Many users who previously relied on the market’s best night vision binoculars now seek advanced thermal imaging for superior clarity, extended range, and weather-independent performance. In 2026, ATN continues to lead the market with cutting-edge thermal binoculars

The post How to choose the best thermal binoculars for long-range detection in 2026 appeared first on AI News.

How Relevance Models Foreshadowed Transformers for NLP
Towards Data Science

Tracing the history of LLM attention: standing on the shoulders of giants

The post How Relevance Models Foreshadowed Transformers for NLP appeared first on Towards Data Science.

Pure Storage and Azure’s role in AI-ready data for enterprises
AI News

Many organisations are trying to update their infrastructure to improve efficiency and manage rising costs. But the path is rarely simple. Hybrid setups, legacy systems, and new demands from AI in the enterprise often create trade-offs for IT teams. Recent moves by Microsoft and several storage and data-platform vendors highlight how enterprises are trying to

The post Pure Storage and Azure’s role in AI-ready data for enterprises appeared first on AI News.

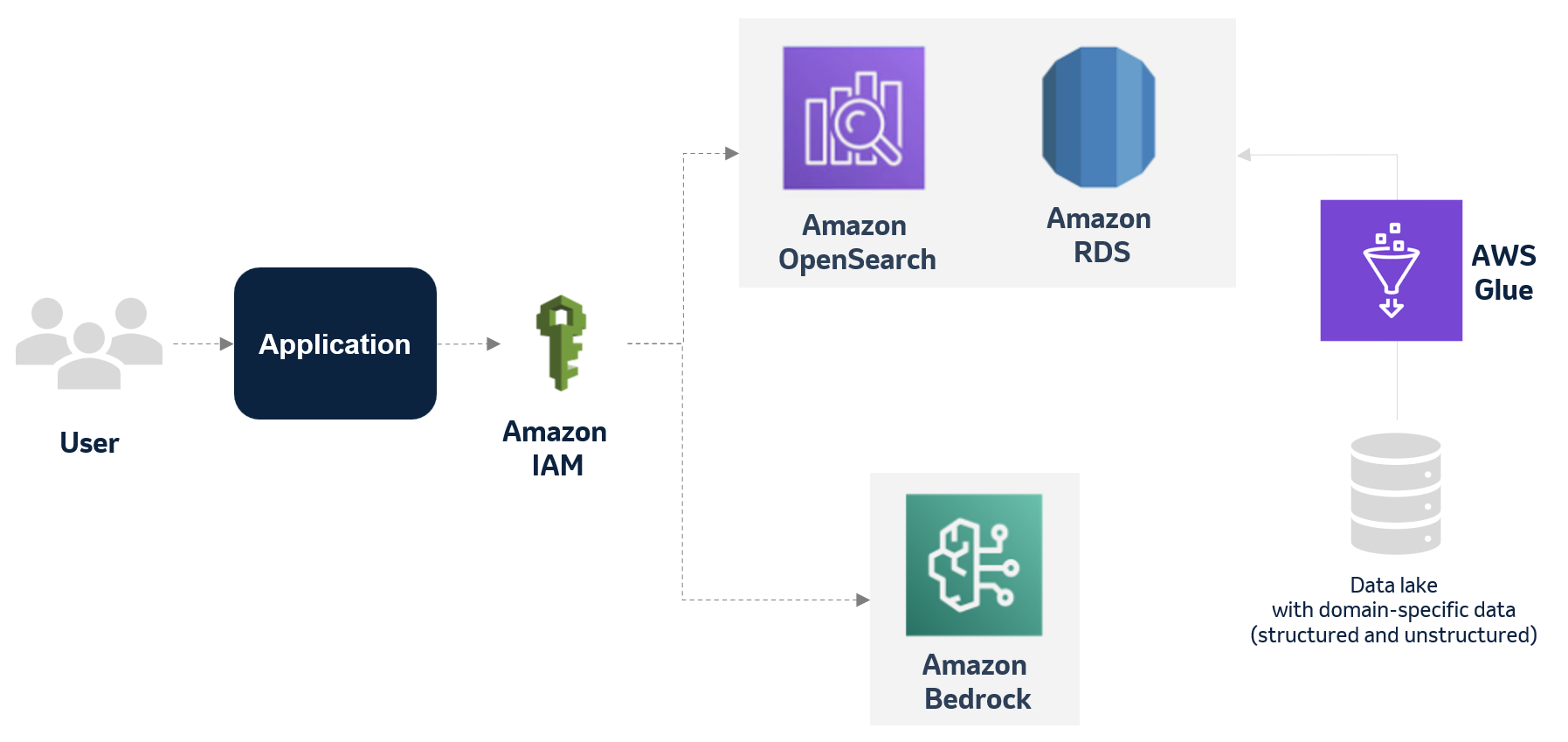

MSD explores applying generative AI to improve the deviation management process using AWS services
Artificial Intelligence

This blog post explores how MSD is harnessing the power of generative AI and databases to optimize and transform its manufacturing deviation management process. By creating an accurate and multifaceted knowledge base of past events, deviations, and findings, the company aims to significantly reduce the time and effort required for each new case while maintaining the highest standards of quality and compliance.

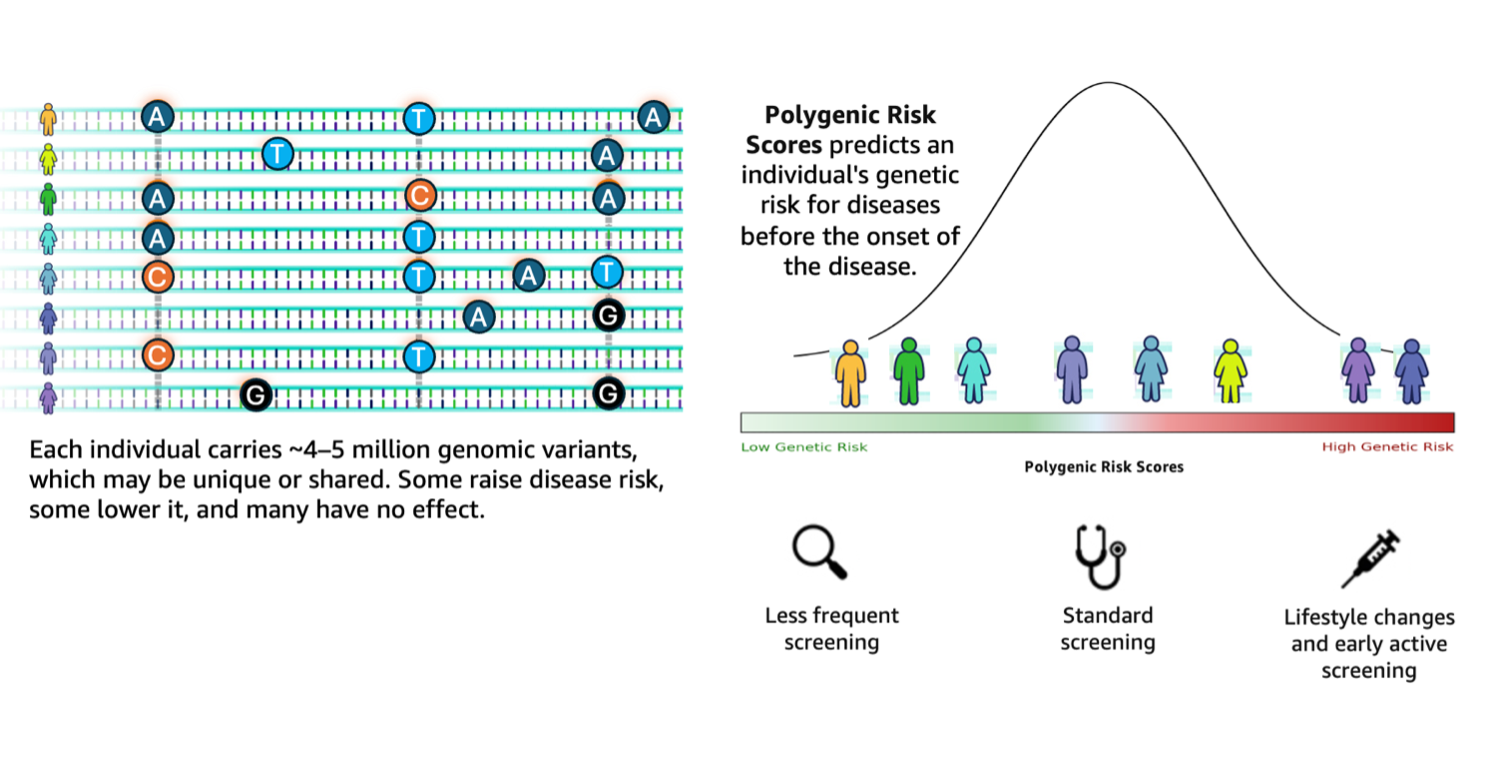

Accelerating genomics variant interpretation with AWS HealthOmics and Amazon Bedrock AgentCore
Artificial Intelligence

In this blog post, we show you how agentic workflows can accelerate the processing and interpretation of genomics pipelines at scale with a natural language interface. We demonstrate a comprehensive genomic variant interpreter agent that combines automated data processing with intelligent analysis to address the entire workflow from raw VCF file ingestion to conversational query interfaces.

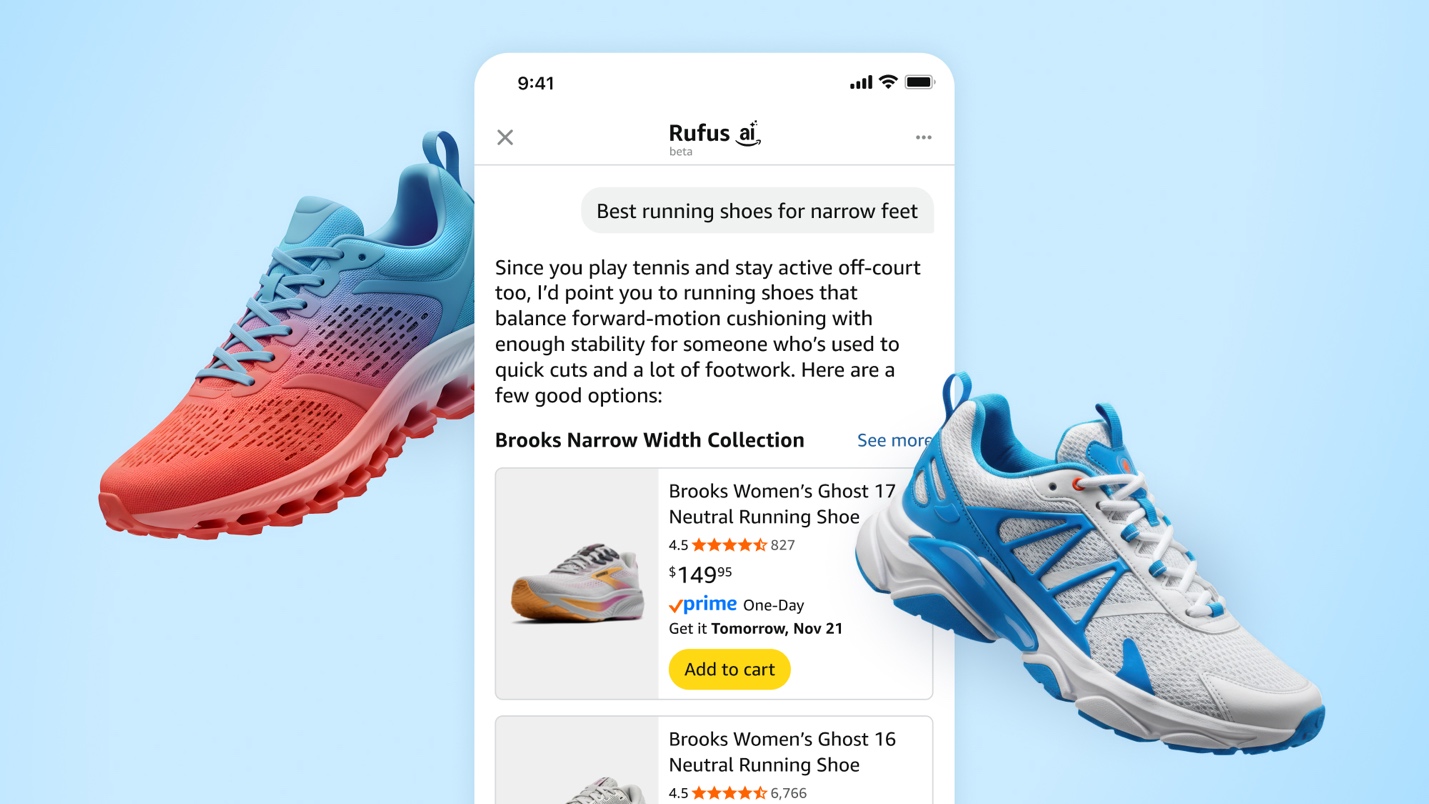

How Rufus scales conversational shopping experiences to millions of Amazon customers with Amazon Bedrock
Artificial Intelligence

Our team at Amazon builds Rufus, an AI-powered shopping assistant which delivers intelligent, conversational experiences to delight our customers. More than 250 million customers have used Rufus this year. Monthly users are up 140% YoY and interactions are up 210% YoY. Additionally, customers that use Rufus during a shopping journey are 60% more likely to

How Data Engineering Can Power Manufacturing Industry Transformation
KDnuggets

Turning scattered information across production-line machines and systems into meaningful insights that help teams drive efficiency and competitiveness without increasing overhead costs.

Top SQL Patterns from FAANG Data Science Interviews (with Code)
KDnuggets

Here are the top 5 SQL patterns tested in FAANG data science interviews.
