This AI finds simple rules where humans see only chaos (Artificial Intelligence News, ScienceDaily)

A new AI developed at Duke University can uncover simple, readable rules behind extremely complex systems. It studies how systems evolve over time and reduces thousands of variables into compact equations that still capture real behavior. The method works across physics, engineering, climate science, and biology. Researchers say it could help scientists understand systems where traditional equations are missing or too complicated to write down.

Adaptive Graph Pruning with Sudden-Events Evaluation for Traffic Prediction using Online Semi-Decentralized ST-GNNs (cs.AI updates on arXiv.org)

arXiv:2512.17352v1 Announce Type: cross

Abstract: Spatio-Temporal Graph Neural Networks (ST-GNNs) are well-suited for processing high-frequency data streams from geographically distributed sensors in smart mobility systems. However, their deployment at the edge across distributed compute nodes (cloudlets) creates substantial communication overhead due to repeated transmission of overlapping node features between neighbouring cloudlets. To address this, we propose an adaptive pruning algorithm that dynamically filters redundant neighbour features while preserving the most informative spatial context for prediction. The algorithm adjusts pruning rates based on recent model performance, allowing each cloudlet to focus on regions experiencing traffic changes without compromising accuracy. Additionally, we introduce the Sudden Event Prediction Accuracy (SEPA), a novel event-focused metric designed to measure responsiveness to traffic slowdowns and recoveries, which are often missed by standard error metrics. We evaluate our approach in an online semi-decentralized setting with traditional FL, server-free FL, and Gossip Learning on two large-scale traffic datasets, PeMS-BAY and PeMSD7-M, across short-, mid-, and long-term prediction horizons. Experiments show that, in contrast to standard metrics, SEPA exposes the true value of spatial connectivity in predicting dynamic and irregular traffic. Our adaptive pruning algorithm maintains prediction accuracy while significantly lowering communication cost in all online semi-decentralized settings, demonstrating that communication can be reduced without compromising responsiveness to critical traffic events.

Optimisation of Aircraft Maintenance Schedules (cs.AI updates on arXiv.org)

arXiv:2512.17412v1 Announce Type: cross

Abstract: We present an aircraft maintenance scheduling problem, which requires suitably qualified staff to be assigned to maintenance tasks on each aircraft. The tasks on each aircraft must be completed within a given turnaround window so that the aircraft may resume revenue-earning service. This paper presents an initial study based on the application of an Evolutionary Algorithm to the problem. Evolutionary Algorithms evolve a solution to a problem by evaluating many possible solutions, focusing the search on those solutions that are of a higher quality, as defined by a fitness function. In this paper, we benchmark the algorithm on 60 generated problem instances to demonstrate the underlying representation and associated genetic operators.
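To make the idea of evolving a schedule concrete, here is a minimal sketch of an evolutionary algorithm on a toy staff-to-task assignment. Everything in it is an assumption made for illustration: the task list, the skill sets, the hour limit, the penalty weights, and the mutation and crossover operators. The abstract does not describe the paper's representation or operators, so this should not be read as the authors' method.

```python
import random

# Hypothetical toy instance: each task needs one skill and takes some hours.
TASKS = [("engine", 3), ("avionics", 2), ("engine", 2), ("cabin", 1), ("avionics", 3)]
# Hypothetical staff, each described by the set of skills they hold.
STAFF = [{"engine", "cabin"}, {"avionics"}, {"engine", "avionics"}]
WINDOW = 5  # assumed hours available per staff member in the turnaround window


def fitness(assignment):
    """Penalty score (lower is better) for one staff index per task."""
    penalty = 0
    hours = [0] * len(STAFF)
    for (skill, duration), person in zip(TASKS, assignment):
        if skill not in STAFF[person]:
            penalty += 10  # unqualified staff member assigned
        hours[person] += duration
    # One penalty point per hour that exceeds the window.
    penalty += sum(max(0, h - WINDOW) for h in hours)
    return penalty


def mutate(assignment, rng):
    """Reassign one randomly chosen task to a random staff member."""
    child = list(assignment)
    child[rng.randrange(len(child))] = rng.randrange(len(STAFF))
    return child


def crossover(a, b, rng):
    """One-point crossover of two parent assignments."""
    cut = rng.randrange(1, len(a))
    return a[:cut] + b[cut:]


def evolve(generations=200, pop_size=30, seed=0):
    rng = random.Random(seed)
    population = [[rng.randrange(len(STAFF)) for _ in TASKS] for _ in range(pop_size)]
    for _ in range(generations):
        population.sort(key=fitness)
        parents = population[: pop_size // 2]  # keep the fitter half unchanged
        children = [
            mutate(crossover(rng.choice(parents), rng.choice(parents), rng), rng)
            for _ in range(pop_size - len(parents))
        ]
        population = parents + children
    return min(population, key=fitness)


best = evolve()
print(best, fitness(best))
```

Because the fitter half of each generation survives unchanged, the best penalty never gets worse from one generation to the next. A penalty of 0 means every task went to a qualified person and nobody exceeded the window.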

AutoMetrics: Approximate Human Judgements with Automatically Generated Evaluators (cs.AI updates on arXiv.org)

arXiv:2512.17267v1 Announce Type: cross

Abstract: Evaluating user-facing AI applications remains a central challenge, especially in open-ended domains such as travel planning, clinical note generation, or dialogue. The gold standard is user feedback (e.g., thumbs up/down) or behavioral signals (e.g., retention), but these are often scarce in prototypes and research projects, or too slow to use for system optimization. We present AutoMetrics, a framework for synthesizing evaluation metrics under low-data constraints. AutoMetrics combines retrieval from MetricBank, a collection of 48 metrics we curate, with automatically generated LLM-as-a-Judge criteria informed by lightweight human feedback. These metrics are composed via regression to maximize correlation with human signal. AutoMetrics takes you from expensive measures to interpretable automatic metrics. Across 5 diverse tasks, AutoMetrics improves Kendall correlation with human ratings by up to 33.4% over LLM-as-a-Judge while requiring fewer than 100 feedback points. We show that AutoMetrics can be used as a proxy reward to equal effect as a verifiable reward. We release the full AutoMetrics toolkit and MetricBank to accelerate adaptive evaluation of LLM applications.
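The headline result is stated in terms of Kendall correlation, which measures how often two rankings agree on the order of a pair of items. The sketch below computes Kendall's tau-a (the simplest variant, with no tie correction) in plain Python. The abstract does not say which variant the authors use, and the ratings and metric scores here are made-up numbers for illustration only.

```python
from itertools import combinations


def kendall_tau_a(xs, ys):
    """Kendall's tau-a: (concordant pairs - discordant pairs) / all pairs."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least 2 items")
    concordant = discordant = 0
    for (x1, y1), (x2, y2) in combinations(zip(xs, ys), 2):
        sign = (x1 - x2) * (y1 - y2)
        if sign > 0:
            concordant += 1   # both sequences order this pair the same way
        elif sign < 0:
            discordant += 1   # the sequences disagree on this pair
        # sign == 0 means a tie; tau-a counts it as neither
    n_pairs = len(xs) * (len(xs) - 1) // 2
    return (concordant - discordant) / n_pairs


# Hypothetical data: human ratings and two candidate automatic metrics.
human = [1, 2, 3, 4, 5]
metric_a = [0.1, 0.4, 0.35, 0.8, 0.9]  # mostly follows the human ordering
metric_b = [0.9, 0.2, 0.7, 0.1, 0.5]   # often disagrees with it

print(kendall_tau_a(human, metric_a))
print(kendall_tau_a(human, metric_b))
```

A value of 1 means the metric ranks every pair the same way the human ratings do, and -1 means it reverses every pair. Because only the ordering matters, the metric's scale does not need to match the rating scale.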

The Machine Learning “Advent Calendar” Day 21: Gradient Boosted Decision Tree Regressor in Excel (Towards Data Science)

Gradient descent in function space with decision trees

The post The Machine Learning “Advent Calendar” Day 21: Gradient Boosted Decision Tree Regressor in Excel appeared first on Towards Data Science.
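The phrase "gradient descent in function space" can be illustrated with a short sketch. For squared-error loss, the negative gradient with respect to the current predictions is the residual, so each boosting round fits a small tree to the residuals and takes a damped step in that direction. The version below is in Python rather than Excel, uses one-split trees (stumps) on a single feature, and assumes at least two distinct feature values. The data, tree depth, learning rate, and number of rounds are all illustrative assumptions, not taken from the article.

```python
def fit_stump(xs, residuals):
    """Fit the best single-split regression tree under squared error."""
    best = None
    # Candidate thresholds exclude the largest x so the right side is never empty.
    for threshold in sorted(set(xs))[:-1]:
        left = [r for x, r in zip(xs, residuals) if x <= threshold]
        right = [r for x, r in zip(xs, residuals) if x > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        error = (sum((r - left_mean) ** 2 for r in left)
                 + sum((r - right_mean) ** 2 for r in right))
        if best is None or error < best[0]:
            best = (error, threshold, left_mean, right_mean)
    _, threshold, left_mean, right_mean = best
    return lambda x: left_mean if x <= threshold else right_mean


def fit_gbdt(xs, ys, n_trees=50, learning_rate=0.1):
    """Gradient boosting for squared loss with stumps as weak learners."""
    base = sum(ys) / len(ys)  # start from the constant mean prediction
    trees = []
    preds = [base] * len(xs)
    for _ in range(n_trees):
        # Negative gradient of 0.5 * (y - p)^2 with respect to p is y - p.
        residuals = [y - p for y, p in zip(ys, preds)]
        tree = fit_stump(xs, residuals)
        trees.append(tree)
        # Take a small step along the fitted direction.
        preds = [p + learning_rate * tree(x) for x, p in zip(xs, preds)]
    return lambda x: base + learning_rate * sum(t(x) for t in trees)


xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [x * x for x in xs]  # hypothetical training data
model = fit_gbdt(xs, ys)


def mse(predict):
    return sum((y - predict(x)) ** 2 for x, y in zip(xs, ys)) / len(xs)


mean_y = sum(ys) / len(ys)
print(mse(lambda x: mean_y), mse(model))
```

The learning rate plays the role of the step size in ordinary gradient descent: smaller values need more trees but make each step less likely to overshoot.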

NVIDIA AI Releases Nemotron 3: A Hybrid Mamba Transformer MoE Stack for Long Context Agentic AI (MarkTechPost)

NVIDIA has released the Nemotron 3 family of open models as part of a full stack for agentic AI, including model weights, datasets and reinforcement learning tools. The family has three sizes, Nano, Super and Ultra, and targets multi-agent systems that need long-context reasoning with tight control over inference cost. Nano has about

The post NVIDIA AI Releases Nemotron 3: A Hybrid Mamba Transformer MoE Stack for Long Context Agentic AI appeared first on MarkTechPost.

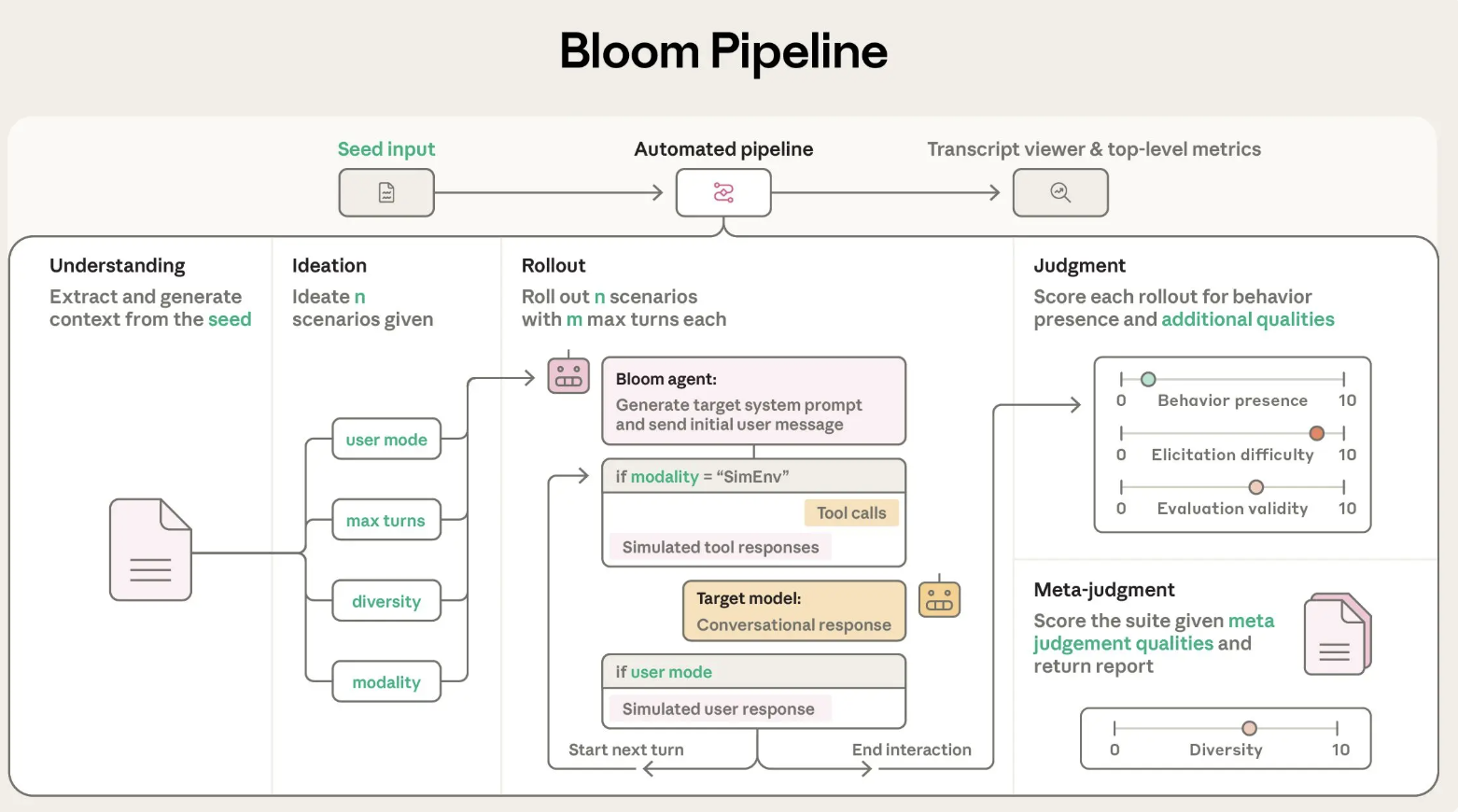

Anthropic AI Releases Bloom: An Open-Source Agentic Framework for Automated Behavioral Evaluations of Frontier AI Models (MarkTechPost)

Anthropic has released Bloom, an open-source agentic framework that automates behavioral evaluations for frontier AI models. The system takes a researcher-specified behavior and builds targeted evaluations that measure how often and how strongly that behavior appears in realistic scenarios. Why Bloom? Behavioral evaluations for safety and alignment are expensive to design and maintain.

The post Anthropic AI Releases Bloom: An Open-Source Agentic Framework for Automated Behavioral Evaluations of Frontier AI Models appeared first on MarkTechPost.

Understanding the Generative AI User (Towards Data Science)

What do regular technology users think (and know) about AI?

The post Understanding the Generative AI User appeared first on Towards Data Science.

Tools for Your LLM: a Deep Dive into MCP (Towards Data Science)

MCP is a key enabler for turning your LLM into an agent: it provides the model with tools to retrieve real-time information or perform actions.

In this deep dive we cover how MCP works, when to use it, and what to watch out for.

The post Tools for Your LLM: a Deep Dive into MCP appeared first on Towards Data Science.

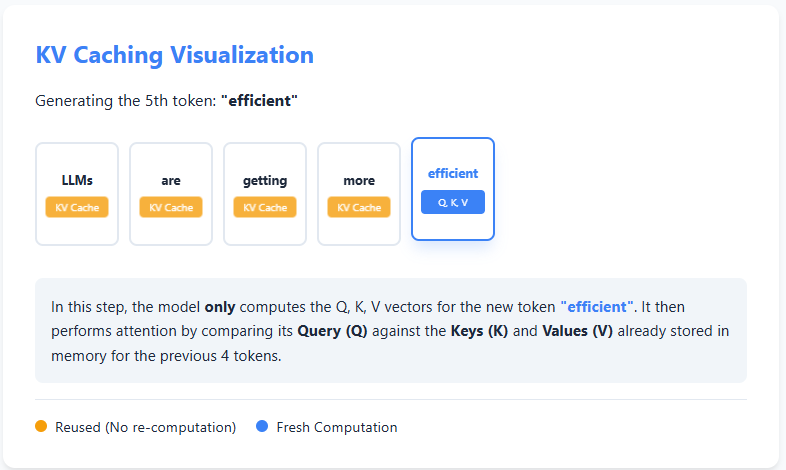

AI Interview Series #4: Explain KV Caching (MarkTechPost)

Question: You’re deploying an LLM in production. Generating the first few tokens is fast, but as the sequence grows, each additional token takes progressively longer to generate, even though the model architecture and hardware remain the same. If compute isn’t the primary bottleneck, what inefficiency is causing this slowdown, and how would you redesign the inference

The post AI Interview Series #4: Explain KV Caching appeared first on MarkTechPost.
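The summary above is cut off, but the title points at KV caching, so a small sketch may help show what the cache saves. Without a cache, every decoding step re-projects the keys and values of the whole prefix. With a cache, each token is projected once and its key and value are appended for later steps. The code uses a single attention head in plain Python, and the projection matrices and token vectors are made-up values. It is a sketch of the general technique, not the article's answer.

```python
import math


def project(vec, weights):
    """Multiply a vector by a matrix given as a list of rows."""
    return [sum(v * w for v, w in zip(vec, row)) for row in weights]


def attend(query, keys, values):
    """Scaled dot-product attention for one query over all given positions."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    peak = max(scores)  # subtract the max for a numerically stable softmax
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    dim = len(values[0])
    return [sum(e / total * v[i] for e, v in zip(exps, values)) for i in range(dim)]


# Hypothetical 2-dimensional projection matrices for a single attention head.
W_Q = [[1.0, 0.0], [0.0, 1.0]]
W_K = [[0.5, 0.1], [0.2, 0.7]]
W_V = [[0.3, 0.9], [0.8, 0.4]]


def decode_without_cache(tokens):
    """Recompute keys and values for the whole prefix at every step."""
    outputs, projections = [], 0
    for t in range(1, len(tokens) + 1):
        prefix = tokens[:t]
        keys = [project(x, W_K) for x in prefix]
        values = [project(x, W_V) for x in prefix]
        projections += 2 * t  # one key and one value projection per prefix token
        outputs.append(attend(project(prefix[-1], W_Q), keys, values))
    return outputs, projections


def decode_with_cache(tokens):
    """Project each token once and append its key and value to the cache."""
    outputs, projections = [], 0
    key_cache, value_cache = [], []
    for x in tokens:
        key_cache.append(project(x, W_K))
        value_cache.append(project(x, W_V))
        projections += 2  # only the newest token is projected
        outputs.append(attend(project(x, W_Q), key_cache, value_cache))
    return outputs, projections


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -0.5]]  # made-up embeddings
slow_out, slow_work = decode_without_cache(tokens)
fast_out, fast_work = decode_with_cache(tokens)
print(slow_work, fast_work)
```

Both functions produce the same attention outputs. The difference is that the number of key and value projections grows quadratically with sequence length without the cache and linearly with it. The cache trades that compute for memory, and each step still has to read every cached key and value, so per-token cost continues to grow with context length, only more slowly.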
