Feed-Forward Optimization With Delayed Feedback for Neural Network Training
cs.AI updates on arXiv.org. arXiv:2304.13372v2, Announce Type: replace-cross

Abstract: Backpropagation has long been criticized for being biologically implausible due to its reliance on concepts that are not viable in natural learning processes. Two core issues are the weight transport and update locking problems caused by the forward-backward dependencies, which limit biological plausibility, computational efficiency, and parallelization. Although several alternatives have been proposed to increase biological plausibility, they often come at the cost of reduced predictive performance. This paper proposes an alternative approach to training feed-forward neural networks that addresses these issues by using approximate gradient information. We introduce Feed-Forward with delayed Feedback (F$^3$), which approximates gradients using fixed random feedback paths and delayed error information from the previous epoch to balance biological plausibility with predictive performance. We evaluate F$^3$ across multiple tasks and architectures, including both fully-connected and Transformer networks. Our results demonstrate that, compared to similarly plausible approaches, F$^3$ significantly improves predictive performance, narrowing the gap to backpropagation by up to 56% for classification and 96% for regression. This work is a step towards more biologically plausible learning algorithms while opening up new avenues for energy-efficient and parallelizable neural network training.
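
The abstract names the two ingredients of F$^3$ but not the exact update rule. The sketch below is our own illustration under stated assumptions, not the authors' implementation: a one-hidden-layer regressor in plain Python that sends the error to the hidden layer through a fixed random vector `B` (in the style of feedback alignment) instead of the transposed output weights, and that uses each sample's error cached from the previous epoch. Because the error is already known when a layer finishes its forward computation, every layer can update immediately, with no backward pass. The function name `train_f3_sketch`, the tanh activation, the toy data and all hyperparameters are hypothetical.

```python
import math
import random


def train_f3_sketch(data, hidden=8, lr=0.005, epochs=200, seed=0):
    """Hypothetical sketch: fixed random feedback + per-sample errors delayed by one epoch."""
    rng = random.Random(seed)
    n_in = len(data[0][0])

    def init():
        return rng.uniform(-0.5, 0.5)

    W1 = [[init() for _ in range(n_in)] for _ in range(hidden)]
    b1 = [0.0] * hidden
    W2 = [init() for _ in range(hidden)]
    b2 = 0.0
    # Fixed random feedback weights, used in place of W2 to reach the hidden layer.
    B = [init() for _ in range(hidden)]
    # Error of each sample from the previous epoch (zero before the first epoch).
    delayed_err = [0.0] * len(data)
    losses = []

    for _ in range(epochs):
        total = 0.0
        for k, (x, y) in enumerate(data):
            e_old = delayed_err[k]
            # Hidden layer: compute activations, then update right away with the stale error.
            h = []
            for j in range(hidden):
                z = sum(w * xi for w, xi in zip(W1[j], x)) + b1[j]
                h_j = math.tanh(z)
                h.append(h_j)
                delta = B[j] * e_old * (1.0 - h_j * h_j)  # tanh'(z) = 1 - tanh(z)^2
                for i in range(n_in):
                    W1[j][i] -= lr * delta * x[i]
                b1[j] -= lr * delta
            # Output layer: predict first, then apply the same delayed error.
            y_hat = sum(w * h_j for w, h_j in zip(W2, h)) + b2
            for j in range(hidden):
                W2[j] -= lr * e_old * h[j]
            b2 -= lr * e_old
            # Cache the fresh error; it is only used in the next epoch.
            e = y_hat - y
            delayed_err[k] = e
            total += e * e
        losses.append(total / len(data))  # mean squared error seen during this epoch
    return losses


# Toy regression task: y = 0.5*x0 - 0.3*x1 + 0.2 on 20 random points.
data_rng = random.Random(1)
data = []
for _ in range(20):
    x = [data_rng.uniform(-1.0, 1.0), data_rng.uniform(-1.0, 1.0)]
    data.append((x, 0.5 * x[0] - 0.3 * x[1] + 0.2))

losses = train_f3_sketch(data)
print(f"epoch 0 MSE: {losses[0]:.4f}, final MSE: {losses[-1]:.4f}")
```

Since no error exists before the first epoch, the cached errors start at zero and the weights do not move during epoch 0; afterwards, every update relies on error information that is one epoch old.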

Multiplex Thinking: Reasoning via Token-wise Branch-and-Merge
cs.AI updates on arXiv.org. arXiv:2601.08808v1, Announce Type: cross

Abstract: Large language models often solve complex reasoning tasks more effectively with Chain-of-Thought (CoT), but at the cost of long, low-bandwidth token sequences. Humans, by contrast, often reason softly by maintaining a distribution over plausible next steps. Motivated by this, we propose Multiplex Thinking, a stochastic soft reasoning mechanism that, at each thinking step, samples K candidate tokens and aggregates their embeddings into a single continuous multiplex token. This preserves the vocabulary embedding prior and the sampling dynamics of standard discrete generation, while inducing a tractable probability distribution over multiplex rollouts. Consequently, multiplex trajectories can be directly optimized with on-policy reinforcement learning (RL). Importantly, Multiplex Thinking is self-adaptive: when the model is confident, the multiplex token is nearly discrete and behaves like standard CoT; when it is uncertain, it compactly represents multiple plausible next steps without increasing sequence length. Across challenging math reasoning benchmarks, Multiplex Thinking consistently outperforms strong discrete CoT and RL baselines from Pass@1 through Pass@1024, while producing shorter sequences. The code and checkpoints are available at https://github.com/GMLR-Penn/Multiplex-Thinking.
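
The abstract does not state how the K sampled embeddings are combined. The sketch below assumes a plain average over the K draws, so repeated draws weigh more and a confident distribution collapses to a single token's embedding. The four-token vocabulary, its embeddings and the `multiplex_token` helper are hypothetical; the paper's aggregation rule and RL training are not reproduced here.

```python
import math
import random


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def multiplex_token(logits, embeddings, k, rng):
    """Hypothetical sketch: sample k token ids, then average their embeddings."""
    probs = softmax(logits)
    ids = rng.choices(range(len(probs)), weights=probs, k=k)
    dim = len(embeddings[0])
    # One continuous vector per thinking step, whatever the value of k.
    vec = [sum(embeddings[i][d] for i in ids) / k for d in range(dim)]
    return ids, vec


# Toy vocabulary of 4 tokens with 3-dimensional embeddings.
embeddings = [
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
    [0.5, 0.5, 0.0],
]
rng = random.Random(0)

# Confident step: essentially all probability mass on token 0.
conf_ids, conf_vec = multiplex_token([50.0, 0.0, 0.0, 0.0], embeddings, k=4, rng=rng)

# Uncertain step: a uniform distribution mixes several candidate embeddings.
unc_ids, unc_vec = multiplex_token([0.0, 0.0, 0.0, 0.0], embeddings, k=4, rng=rng)
print(conf_ids, conf_vec)
print(unc_ids, unc_vec)
```

The confident call returns exactly the embedding of token 0, mirroring the "nearly discrete" case, while the uniform call returns a blend of several candidates; either way a single vector is emitted per step, so sequence length does not grow with K.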

AutoContext: Instance-Level Context Learning for LLM Agents
cs.AI updates on arXiv.org. arXiv:2510.02369v3, Announce Type: replace-cross

Abstract: Current LLM agents typically lack instance-level context, which comprises concrete facts such as environment structure, system configurations, and local mechanics. Consequently, existing methods are forced to intertwine exploration with task execution. This coupling leads to redundant interactions and fragile decision-making, as agents must repeatedly rediscover the same information for every new task. To address this, we introduce AutoContext, a method that decouples exploration from task solving. AutoContext performs a systematic, one-off exploration to construct a reusable knowledge graph for each environment instance. This structured context allows off-the-shelf agents to access necessary facts directly, eliminating redundant exploration. Experiments across TextWorld, ALFWorld, Crafter, and InterCode-Bash demonstrate substantial gains: for example, the success rate of a ReAct agent on TextWorld improves from 37% to 95%, highlighting the critical role of structured instance context in efficient agentic systems.
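
The abstract describes the idea but not the graph schema. As a toy illustration of decoupling exploration from task solving, the sketch below stores facts from a single exploration pass as (subject, relation, object) triples and lets later tasks query them, here to locate an object and plan a route with breadth-first search. The triples, the relation names `leads_to` and `located_in`, and both helper functions are hypothetical and are not taken from AutoContext.

```python
from collections import defaultdict, deque


def build_instance_graph(triples):
    """Store facts from a one-off exploration pass as subject -> relation -> {objects}."""
    graph = defaultdict(lambda: defaultdict(set))
    for subj, rel, obj in triples:
        graph[subj][rel].add(obj)
    return graph


def find_route(graph, start, goal, rel="leads_to"):
    """Breadth-first search over one relation type; returns a node list or None."""
    prev = {start: None}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            path = []
            while node is not None:
                path.append(node)
                node = prev[node]
            return path[::-1]
        for nxt in sorted(graph[node][rel]):
            if nxt not in prev:
                prev[nxt] = node
                queue.append(nxt)
    return None


# Facts gathered once by exploring a (hypothetical) text-game instance.
exploration_log = [
    ("hallway", "leads_to", "kitchen"),
    ("kitchen", "leads_to", "hallway"),
    ("kitchen", "leads_to", "pantry"),
    ("pantry", "leads_to", "kitchen"),
    ("key", "located_in", "pantry"),
]
graph = build_instance_graph(exploration_log)

# Later tasks reuse the stored facts instead of re-exploring.
key_room = next(iter(graph["key"]["located_in"]))
route = find_route(graph, "hallway", key_room)
print(key_room, route)
```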

Glitches in the Attention Matrix
Towards Data Science. A history of Transformer artifacts and the latest research on how to fix them.

The post Glitches in the Attention Matrix appeared first on Towards Data Science.

Research shows UK young adults would use AI for financial guidance
AI News. Research from Cleo AI indicates that young adults are turning to artificial intelligence for financial advice to help them manage their money and develop more sustainable financial habits. The study surveyed 5,000 UK adults aged 28 to 40 and found that the majority are saving significantly less than they would like. In this context, interest

The post Research shows UK young adults would use AI for financial guidance appeared first on AI News.

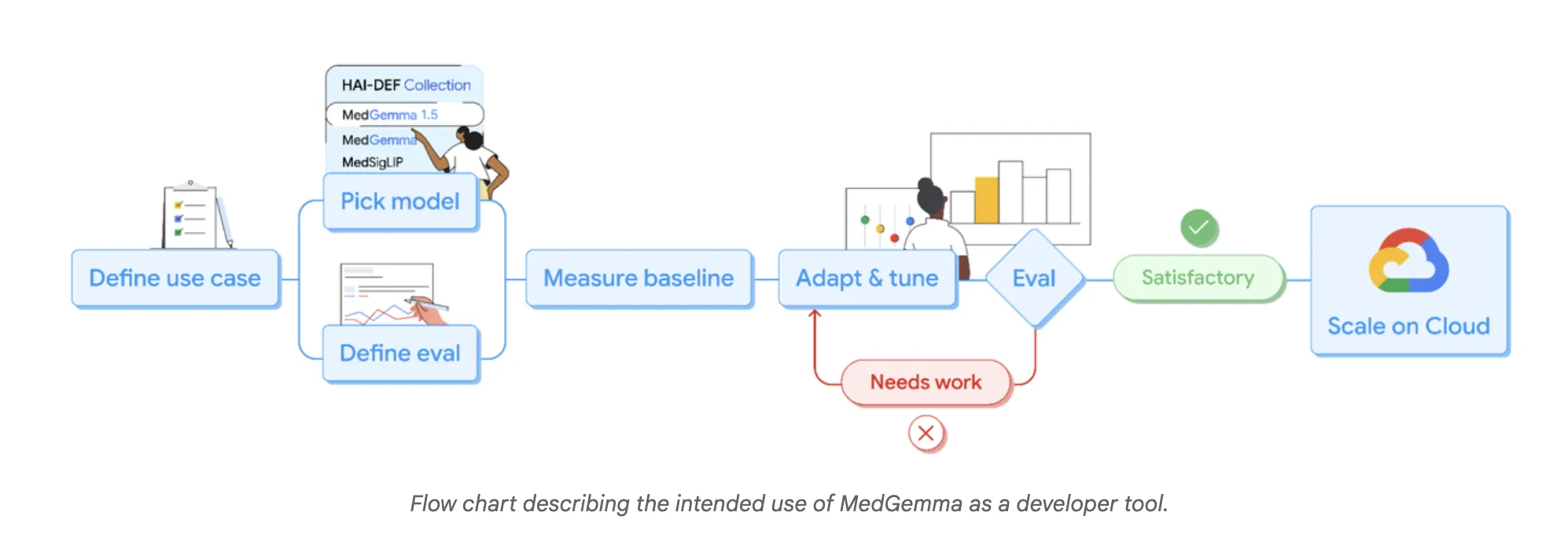

Google AI Releases MedGemma-1.5: The Latest Update to their Open Medical AI Models for Developers
MarkTechPost. Google Research has expanded its Health AI Developer Foundations program (HAI-DEF) with the release of MedGemma-1.5. The model is released as an open starting point for developers who want to build medical imaging, text and speech systems and then adapt them to local workflows and regulations.
MedGemma 1.5, small multimodal model for real clinical data
MedGemma

The post Google AI Releases MedGemma-1.5: The Latest Update to their Open Medical AI Models for Developers appeared first on MarkTechPost.

Securing Amazon Bedrock cross-Region inference: Geographic and global
Artificial Intelligence. In this post, we explore the security considerations and best practices for implementing Amazon Bedrock cross-Region inference profiles. Whether you’re building a generative AI application or need to meet specific regional compliance requirements, this guide will help you understand the secure architecture of Amazon Bedrock cross-Region inference (CRIS) and how to configure your implementation properly.

Veo 3.1 Ingredients to Video: More consistency, creativity and control
Google DeepMind News. Our latest Veo update generates lively, dynamic clips that feel natural and engaging, and supports vertical video generation.

The Complete Guide to Logging for Python Developers
KDnuggets. Stop using print statements and start logging like a pro. This guide shows Python developers how to log smarter and debug faster.
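
The guide's own examples are not part of this teaser. As a minimal sketch using only the standard `logging` module, the snippet below replaces `print` with a named logger, filters records by level, and uses `logger.exception` to record a traceback. The logger name `payments` and the `parse_amount` function are hypothetical, and the in-memory `io.StringIO` stream is there only to make the output easy to inspect; an application would more typically attach a handler for stderr or a file.

```python
import io
import logging

# A named logger instead of print(); "payments" is a hypothetical module name.
logger = logging.getLogger("payments")


def parse_amount(text):
    logger.debug("parsing %r", text)  # %-style args are formatted only if the record is emitted
    try:
        value = float(text)
    except ValueError:
        # Logs at ERROR level and appends the current traceback.
        logger.exception("could not parse amount %r", text)
        return None
    logger.info("parsed amount %.2f", value)
    return value


# Send records to an in-memory stream so the output can be inspected.
buffer = io.StringIO()
handler = logging.StreamHandler(buffer)
handler.setFormatter(logging.Formatter("%(levelname)s:%(name)s:%(message)s"))
logger.addHandler(handler)
logger.setLevel(logging.INFO)  # DEBUG records are dropped at this level
logger.propagate = False  # keep records out of any root-logger handlers

parse_amount("12.5")
parse_amount("twelve")
output = buffer.getvalue()
print(output)
```

Passing arguments to the logging call rather than pre-formatting the string means the `DEBUG` message costs almost nothing when that level is disabled.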

From ‘Dataslows’ to Dataflows: The Gen2 Performance Revolution in Microsoft Fabric
Towards Data Science. Dataflows were (rightly?) considered “the slowest and least performant option” for ingesting data into Power BI/Microsoft Fabric. However, things are changing rapidly, and the latest Dataflow enhancements change how we play the game.

The post From ‘Dataslows’ to Dataflows: The Gen2 Performance Revolution in Microsoft Fabric appeared first on Towards Data Science.
