Intrinsic Self-Correction in LLMs: Towards Explainable Prompting via Mechanistic Interpretabilitycs.AI updates on arXiv.org arXiv:2505.11924v3 Announce Type: replace-cross

Abstract: Intrinsic self-correction refers to the phenomenon where a language model refines its own outputs purely through prompting, without external feedback or parameter updates. While this approach improves performance across diverse tasks, its mechanism remains unclear. We show that intrinsic self-correction functions by steering hidden representations along interpretable latent directions, as evidenced by both alignment analysis and activation interventions. To achieve this, we analyze intrinsic self-correction via the representation shift induced by prompting. In parallel, we construct interpretable latent directions with contrastive pairs and verify the causal effect of these directions via activation addition. Evaluating six open-source LLMs, our results demonstrate that prompt-induced representation shifts in text detoxification and text toxification consistently align with latent directions constructed from contrastive pairs. In detoxification, the shifts align with the non-toxic direction; in toxification, they align with the toxic direction. These findings suggest that representation steering is the mechanistic driver of intrinsic self-correction. Our analysis highlights that understanding model internals offers a direct route to analyzing the mechanisms of prompt-driven LLM behaviors.

arXiv:2505.11924v3 Announce Type: replace-cross

Abstract: Intrinsic self-correction refers to the phenomenon where a language model refines its own outputs purely through prompting, without external feedback or parameter updates. While this approach improves performance across diverse tasks, its mechanism remains unclear. We show that intrinsic self-correction functions by steering hidden representations along interpretable latent directions, as evidenced by both alignment analysis and activation interventions. To achieve this, we analyze intrinsic self-correction via the representation shift induced by prompting. In parallel, we construct interpretable latent directions with contrastive pairs and verify the causal effect of these directions via activation addition. Evaluating six open-source LLMs, our results demonstrate that prompt-induced representation shifts in text detoxification and text toxification consistently align with latent directions constructed from contrastive pairs. In detoxification, the shifts align with the non-toxic direction; in toxification, they align with the toxic direction. These findings suggest that representation steering is the mechanistic driver of intrinsic self-correction. Our analysis highlights that understanding model internals offers a direct route to analyzing the mechanisms of prompt-driven LLM behaviors. Read More

AI Driven Discovery of Bio Ecological Mediation in Cascading Heatwave Riskscs.AI updates on arXiv.org arXiv:2509.25112v2 Announce Type: replace

Abstract: Compound heatwaves increasingly trigger complex cascading failures that propagate through interconnected physical and human systems, yet the fragmentation of disciplinary knowledge hinders the comprehensive mapping of these systemic risk topologies. This study introduces the Heatwave Discovery Agent HeDA as an autonomous scientific synthesis framework designed to bridge cognitive gaps by constructing a high fidelity knowledge graph from 8,111 academic publications. By structuring 70,297 evidence nodes, the system exhibits enhanced inferential fidelity in capturing long tail risk mechanisms and achieves a significant accuracy margin compared to standard foundation models including GPT 5.2 and Claude Sonnet 4.5 in complex reasoning tasks. The resulting topological analysis reveals a critical bio ecological mediation effect where biological systems function as the primary non linear amplifiers of thermal stress that transform physical meteorological hazards into systemic socioeconomic losses. We further identify latent functional couplings between theoretically distinct sectors such as the heat induced synchronization of power grid failures and emergency medical capacity saturation. These findings elucidate the dynamics of compound climate risks and provide an empirical basis for shifting adaptation strategies from static sectoral defense to dynamic cross system resilience.

arXiv:2509.25112v2 Announce Type: replace

Abstract: Compound heatwaves increasingly trigger complex cascading failures that propagate through interconnected physical and human systems, yet the fragmentation of disciplinary knowledge hinders the comprehensive mapping of these systemic risk topologies. This study introduces the Heatwave Discovery Agent HeDA as an autonomous scientific synthesis framework designed to bridge cognitive gaps by constructing a high fidelity knowledge graph from 8,111 academic publications. By structuring 70,297 evidence nodes, the system exhibits enhanced inferential fidelity in capturing long tail risk mechanisms and achieves a significant accuracy margin compared to standard foundation models including GPT 5.2 and Claude Sonnet 4.5 in complex reasoning tasks. The resulting topological analysis reveals a critical bio ecological mediation effect where biological systems function as the primary non linear amplifiers of thermal stress that transform physical meteorological hazards into systemic socioeconomic losses. We further identify latent functional couplings between theoretically distinct sectors such as the heat induced synchronization of power grid failures and emergency medical capacity saturation. These findings elucidate the dynamics of compound climate risks and provide an empirical basis for shifting adaptation strategies from static sectoral defense to dynamic cross system resilience. Read More

Progress Constraints for Reinforcement Learning in Behavior Treescs.AI updates on arXiv.org arXiv:2602.06525v2 Announce Type: replace

Abstract: Behavior Trees (BTs) provide a structured and reactive framework for decision-making, commonly used to switch between sub-controllers based on environmental conditions. Reinforcement Learning (RL), on the other hand, can learn near-optimal controllers but sometimes struggles with sparse rewards, safe exploration, and long-horizon credit assignment. Combining BTs with RL has the potential for mutual benefit: a BT design encodes structured domain knowledge that can simplify RL training, while RL enables automatic learning of the controllers within BTs. However, naive integration of BTs and RL can lead to some controllers counteracting other controllers, possibly undoing previously achieved subgoals, thereby degrading the overall performance. To address this, we propose progress constraints, a novel mechanism where feasibility estimators constrain the allowed action set based on theoretical BT convergence results. Empirical evaluations in a 2D proof-of-concept and a high-fidelity warehouse environment demonstrate improved performance, sample efficiency, and constraint satisfaction, compared to prior methods of BT-RL integration.

arXiv:2602.06525v2 Announce Type: replace

Abstract: Behavior Trees (BTs) provide a structured and reactive framework for decision-making, commonly used to switch between sub-controllers based on environmental conditions. Reinforcement Learning (RL), on the other hand, can learn near-optimal controllers but sometimes struggles with sparse rewards, safe exploration, and long-horizon credit assignment. Combining BTs with RL has the potential for mutual benefit: a BT design encodes structured domain knowledge that can simplify RL training, while RL enables automatic learning of the controllers within BTs. However, naive integration of BTs and RL can lead to some controllers counteracting other controllers, possibly undoing previously achieved subgoals, thereby degrading the overall performance. To address this, we propose progress constraints, a novel mechanism where feasibility estimators constrain the allowed action set based on theoretical BT convergence results. Empirical evaluations in a 2D proof-of-concept and a high-fidelity warehouse environment demonstrate improved performance, sample efficiency, and constraint satisfaction, compared to prior methods of BT-RL integration. Read More

TABES: Trajectory-Aware Backward-on-Entropy Steering for Masked Diffusion Modelscs.AI updates on arXiv.org arXiv:2602.00250v2 Announce Type: replace-cross

Abstract: Masked Diffusion Models (MDMs) have emerged as a promising non-autoregressive paradigm for generative tasks, offering parallel decoding and bidirectional context utilization. However, current sampling methods rely on simple confidence-based heuristics that ignore the long-term impact of local decisions, leading to trajectory lock-in where early hallucinations cascade into global incoherence. While search-based methods mitigate this, they incur prohibitive computational costs ($O(K)$ forward passes per step). In this work, we propose Backward-on-Entropy (BoE) Steering, a gradient-guided inference framework that approximates infinite-horizon lookahead via a single backward pass. We formally derive the Token Influence Score (TIS) from a first-order expansion of the trajectory cost functional, proving that the gradient of future entropy with respect to input embeddings serves as an optimal control signal for minimizing uncertainty. To ensure scalability, we introduce texttt{ActiveQueryAttention}, a sparse adjoint primitive that exploits the structure of the masking objective to reduce backward pass complexity. BoE achieves a superior Pareto frontier for inference-time scaling compared to existing unmasking methods, demonstrating that gradient-guided steering offers a mathematically principled and efficient path to robust non-autoregressive generation. We will release the code.

arXiv:2602.00250v2 Announce Type: replace-cross

Abstract: Masked Diffusion Models (MDMs) have emerged as a promising non-autoregressive paradigm for generative tasks, offering parallel decoding and bidirectional context utilization. However, current sampling methods rely on simple confidence-based heuristics that ignore the long-term impact of local decisions, leading to trajectory lock-in where early hallucinations cascade into global incoherence. While search-based methods mitigate this, they incur prohibitive computational costs ($O(K)$ forward passes per step). In this work, we propose Backward-on-Entropy (BoE) Steering, a gradient-guided inference framework that approximates infinite-horizon lookahead via a single backward pass. We formally derive the Token Influence Score (TIS) from a first-order expansion of the trajectory cost functional, proving that the gradient of future entropy with respect to input embeddings serves as an optimal control signal for minimizing uncertainty. To ensure scalability, we introduce texttt{ActiveQueryAttention}, a sparse adjoint primitive that exploits the structure of the masking objective to reduce backward pass complexity. BoE achieves a superior Pareto frontier for inference-time scaling compared to existing unmasking methods, demonstrating that gradient-guided steering offers a mathematically principled and efficient path to robust non-autoregressive generation. We will release the code. Read More

Learning to Compose for Cross-domain Agentic Workflow Generationcs.AI updates on arXiv.org arXiv:2602.11114v1 Announce Type: cross

Abstract: Automatically generating agentic workflows — executable operator graphs or codes that orchestrate reasoning, verification, and repair — has become a practical way to solve complex tasks beyond what single-pass LLM generation can reliably handle. Yet what constitutes a good workflow depends heavily on the task distribution and the available operators. Under domain shift, current systems typically rely on iterative workflow refinement to discover a feasible workflow from a large workflow space, incurring high iteration costs and yielding unstable, domain-specific behavior. In response, we internalize a decompose-recompose-decide mechanism into an open-source LLM for cross-domain workflow generation. To decompose, we learn a compact set of reusable workflow capabilities across diverse domains. To recompose, we map each input task to a sparse composition over these bases to generate a task-specific workflow in a single pass. To decide, we attribute the success or failure of workflow generation to counterfactual contributions from learned capabilities, thereby capturing which capabilities actually drive success by their marginal effects. Across stringent multi-domain, cross-domain, and unseen-domain evaluations, our 1-pass generator surpasses SOTA refinement baselines that consume 20 iterations, while substantially reducing generation latency and cost.

arXiv:2602.11114v1 Announce Type: cross

Abstract: Automatically generating agentic workflows — executable operator graphs or codes that orchestrate reasoning, verification, and repair — has become a practical way to solve complex tasks beyond what single-pass LLM generation can reliably handle. Yet what constitutes a good workflow depends heavily on the task distribution and the available operators. Under domain shift, current systems typically rely on iterative workflow refinement to discover a feasible workflow from a large workflow space, incurring high iteration costs and yielding unstable, domain-specific behavior. In response, we internalize a decompose-recompose-decide mechanism into an open-source LLM for cross-domain workflow generation. To decompose, we learn a compact set of reusable workflow capabilities across diverse domains. To recompose, we map each input task to a sparse composition over these bases to generate a task-specific workflow in a single pass. To decide, we attribute the success or failure of workflow generation to counterfactual contributions from learned capabilities, thereby capturing which capabilities actually drive success by their marginal effects. Across stringent multi-domain, cross-domain, and unseen-domain evaluations, our 1-pass generator surpasses SOTA refinement baselines that consume 20 iterations, while substantially reducing generation latency and cost. Read More

Found-RL: foundation model-enhanced reinforcement learning for autonomous drivingcs.AI updates on arXiv.org arXiv:2602.10458v1 Announce Type: new

Abstract: Reinforcement Learning (RL) has emerged as a dominant paradigm for end-to-end autonomous driving (AD). However, RL suffers from sample inefficiency and a lack of semantic interpretability in complex scenarios. Foundation Models, particularly Vision-Language Models (VLMs), can mitigate this by offering rich, context-aware knowledge, yet their high inference latency hinders deployment in high-frequency RL training loops. To bridge this gap, we present Found-RL, a platform tailored to efficiently enhance RL for AD using foundation models. A core innovation is the asynchronous batch inference framework, which decouples heavy VLM reasoning from the simulation loop, effectively resolving latency bottlenecks to support real-time learning. We introduce diverse supervision mechanisms: Value-Margin Regularization (VMR) and Advantage-Weighted Action Guidance (AWAG) to effectively distill expert-like VLM action suggestions into the RL policy. Additionally, we adopt high-throughput CLIP for dense reward shaping. We address CLIP’s dynamic blindness via Conditional Contrastive Action Alignment, which conditions prompts on discretized speed/command and yields a normalized, margin-based bonus from context-specific action-anchor scoring. Found-RL provides an end-to-end pipeline for fine-tuned VLM integration and shows that a lightweight RL model can achieve near-VLM performance compared with billion-parameter VLMs while sustaining real-time inference (approx. 500 FPS). Code, data, and models will be publicly available at https://github.com/ys-qu/found-rl.

arXiv:2602.10458v1 Announce Type: new

Abstract: Reinforcement Learning (RL) has emerged as a dominant paradigm for end-to-end autonomous driving (AD). However, RL suffers from sample inefficiency and a lack of semantic interpretability in complex scenarios. Foundation Models, particularly Vision-Language Models (VLMs), can mitigate this by offering rich, context-aware knowledge, yet their high inference latency hinders deployment in high-frequency RL training loops. To bridge this gap, we present Found-RL, a platform tailored to efficiently enhance RL for AD using foundation models. A core innovation is the asynchronous batch inference framework, which decouples heavy VLM reasoning from the simulation loop, effectively resolving latency bottlenecks to support real-time learning. We introduce diverse supervision mechanisms: Value-Margin Regularization (VMR) and Advantage-Weighted Action Guidance (AWAG) to effectively distill expert-like VLM action suggestions into the RL policy. Additionally, we adopt high-throughput CLIP for dense reward shaping. We address CLIP’s dynamic blindness via Conditional Contrastive Action Alignment, which conditions prompts on discretized speed/command and yields a normalized, margin-based bonus from context-specific action-anchor scoring. Found-RL provides an end-to-end pipeline for fine-tuned VLM integration and shows that a lightweight RL model can achieve near-VLM performance compared with billion-parameter VLMs while sustaining real-time inference (approx. 500 FPS). Code, data, and models will be publicly available at https://github.com/ys-qu/found-rl. Read More

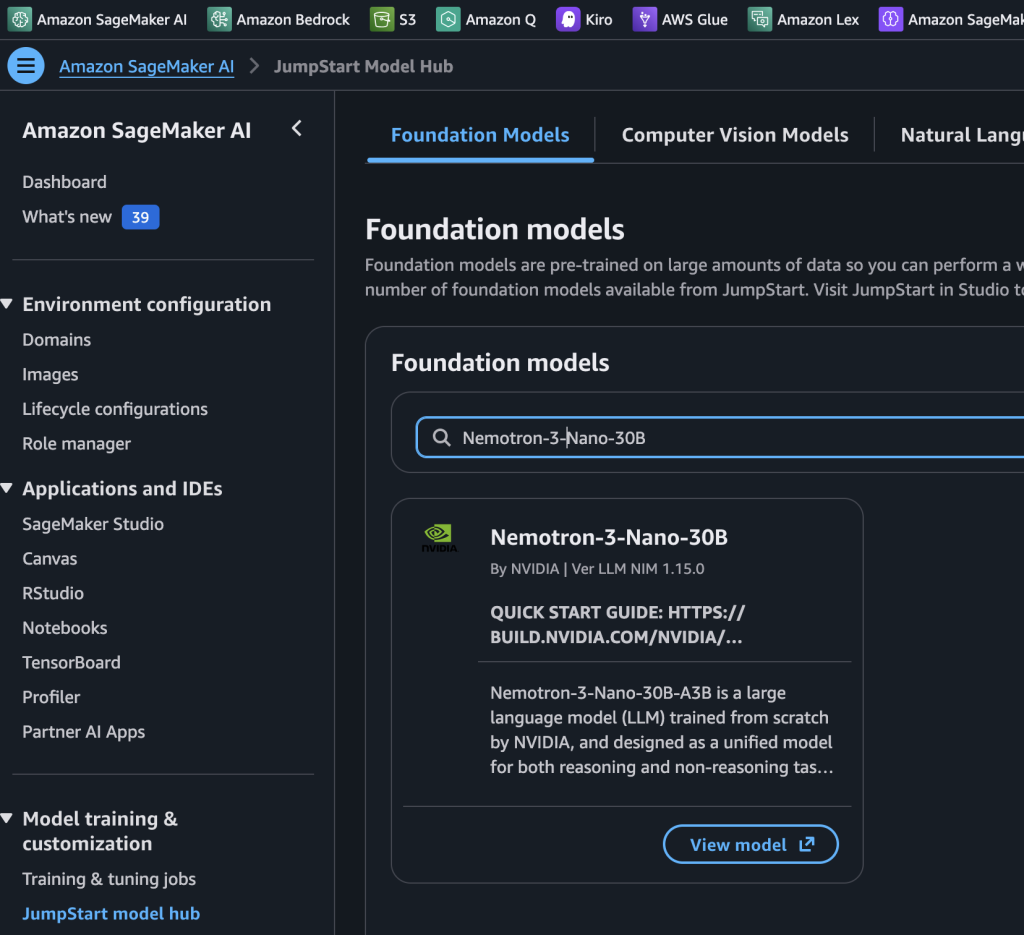

NVIDIA Nemotron 3 Nano 30B MoE model is now available in Amazon SageMaker JumpStartArtificial Intelligence Today we’re excited to announce that the NVIDIA Nemotron 3 Nano 30B model with 3B active parameters is now generally available in the Amazon SageMaker JumpStart model catalog. You can accelerate innovation and deliver tangible business value with Nemotron 3 Nano on Amazon Web Services (AWS) without having to manage model deployment complexities. You can power your generative AI applications with Nemotron capabilities using the managed deployment capabilities offered by SageMaker JumpStart.

Today we’re excited to announce that the NVIDIA Nemotron 3 Nano 30B model with 3B active parameters is now generally available in the Amazon SageMaker JumpStart model catalog. You can accelerate innovation and deliver tangible business value with Nemotron 3 Nano on Amazon Web Services (AWS) without having to manage model deployment complexities. You can power your generative AI applications with Nemotron capabilities using the managed deployment capabilities offered by SageMaker JumpStart. Read More

Introduction AI coding tools aren’t new. Autocomplete suggestions and inline code generation have been part of the developer toolkit for a few years now. But the latest generation of tools is doing something different. They’re not just finishing your sentences. They’re reading your entire codebase, planning changes across dozens of files, and executing tasks autonomously. […]

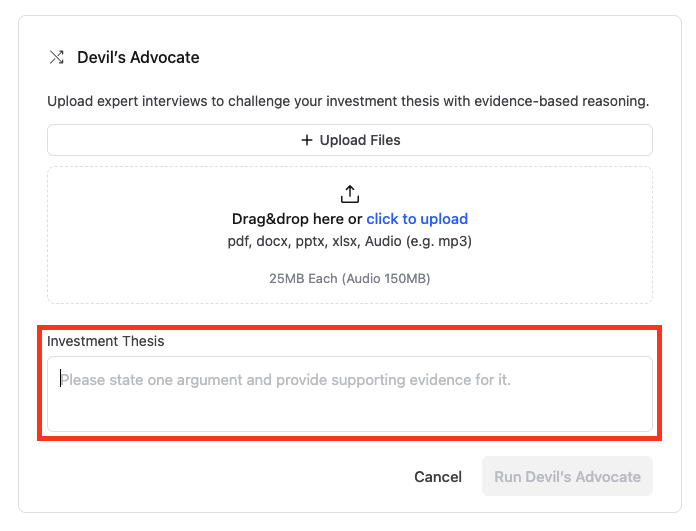

How LinqAlpha assesses investment theses using Devil’s Advocate on Amazon BedrockArtificial Intelligence LinqAlpha is a Boston-based multi-agent AI system built specifically for institutional investors. The system supports and streamlines agentic workflows across company screening, primer generation, stock price catalyst mapping, and now, pressure-testing investment ideas through a new AI agent called Devil’s Advocate. In this post, we share how LinqAlpha uses Amazon Bedrock to build and scale Devil’s Advocate.

LinqAlpha is a Boston-based multi-agent AI system built specifically for institutional investors. The system supports and streamlines agentic workflows across company screening, primer generation, stock price catalyst mapping, and now, pressure-testing investment ideas through a new AI agent called Devil’s Advocate. In this post, we share how LinqAlpha uses Amazon Bedrock to build and scale Devil’s Advocate. Read More

Building an AI Agent to Detect and Handle Anomalies in Time-Series DataTowards Data Science Combining statistical detection with agentic decision-making

The post Building an AI Agent to Detect and Handle Anomalies in Time-Series Data appeared first on Towards Data Science.

Combining statistical detection with agentic decision-making

The post Building an AI Agent to Detect and Handle Anomalies in Time-Series Data appeared first on Towards Data Science. Read More