Empowerment Gain and Causal Model Construction: Children and adults are sensitive to controllability and variability in their causal interventions

cs.AI updates on arXiv.org. arXiv:2512.08230v1 Announce Type: new

Abstract: Learning about the causal structure of the world is a fundamental problem for human cognition. Causal models and especially causal learning have proved to be difficult for large pretrained models using standard techniques of deep learning. In contrast, cognitive scientists have applied advances in our formal understanding of causation in computer science, particularly within the Causal Bayes Net formalism, to understand human causal learning. In the very different tradition of reinforcement learning, researchers have described an intrinsic reward signal called “empowerment” which maximizes mutual information between actions and their outcomes. “Empowerment” may be an important bridge between classical Bayesian causal learning and reinforcement learning and may help to characterize causal learning in humans and enable it in machines. If an agent learns an accurate causal world model, they will necessarily increase their empowerment, and increasing empowerment will lead to a more accurate causal world model. Empowerment may also explain distinctive features of children's causal learning, as well as providing a more tractable computational account of how that learning is possible. In an empirical study, we systematically test how children and adults use cues to empowerment to infer causal relations, and design effective causal interventions.
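To make the "mutual information between actions and their outcomes" concrete, here is a minimal sketch that computes I(A; O) in bits for a small discrete action-outcome table. It is not taken from the paper: the function name `mutual_information`, the three toy "machines", and the uniform action distribution are all assumptions chosen for illustration. Empowerment in the reinforcement learning literature is usually defined as the maximum of this quantity over action distributions; the sketch evaluates it for one fixed distribution only.

```python
import math

def mutual_information(p_outcome_given_action, p_action=None):
    """Return I(A; O) in bits for a discrete channel given as rows p(o | a).

    Assumption: if no action distribution is supplied, actions are uniform.
    """
    n_actions = len(p_outcome_given_action)
    n_outcomes = len(p_outcome_given_action[0])
    if p_action is None:
        p_action = [1.0 / n_actions] * n_actions

    # Marginal outcome distribution p(o) = sum_a p(a) p(o | a).
    p_outcome = [
        sum(p_action[a] * p_outcome_given_action[a][o] for a in range(n_actions))
        for o in range(n_outcomes)
    ]

    mi = 0.0
    for a in range(n_actions):
        for o in range(n_outcomes):
            joint = p_action[a] * p_outcome_given_action[a][o]
            if joint > 0:  # zero-probability cells contribute nothing
                mi += joint * math.log2(p_outcome_given_action[a][o] / p_outcome[o])
    return mi

# Hypothetical toy machines: rows are actions, columns are outcomes.
controllable = [[1.0, 0.0], [0.0, 1.0]]    # each action fully determines the outcome
noisy = [[0.8, 0.2], [0.2, 0.8]]           # actions matter, but outcomes vary
uncontrollable = [[0.5, 0.5], [0.5, 0.5]]  # outcomes ignore the action

for name, table in [("controllable", controllable),
                    ("noisy", noisy),
                    ("uncontrollable", uncontrollable)]:
    print(name, round(mutual_information(table), 3))
```

Under these assumptions the fully controllable machine yields 1 bit, the uncontrollable one yields 0 bits, and the noisy one falls in between, which is the sense in which controllability and variability both act as cues to empowerment.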

Large Language Models for Education and Research: An Empirical and User Survey-based Analysis

cs.AI updates on arXiv.org. arXiv:2512.08057v1 Announce Type: new

Abstract: Pretrained Large Language Models (LLMs) have achieved remarkable success across diverse domains, with education and research emerging as particularly impactful areas. Among current state-of-the-art LLMs, ChatGPT and DeepSeek exhibit strong capabilities in mathematics, science, medicine, literature, and programming. In this study, we present a comprehensive evaluation of these two LLMs through background technology analysis, empirical experiments, and a real-world user survey. The evaluation explores trade-offs among model accuracy, computational efficiency, and user experience in educational and research affairs. We benchmarked these LLMs' performance in text generation, programming, and specialized problem-solving. Experimental results show that ChatGPT excels in general language understanding and text generation, while DeepSeek demonstrates superior performance in programming tasks due to its efficiency-focused design. Moreover, both models deliver medically accurate diagnostic outputs and effectively solve complex mathematical problems. Complementing these quantitative findings, a survey of students, educators, and researchers highlights the practical benefits and limitations of these models, offering deeper insights into their role in advancing education and research.

Inside the playbook of companies winning with AI

AI News

Many companies are still working out how to use AI in a steady and practical way, but a small group is already pulling ahead. New research from NTT DATA outlines a playbook that shows how these “AI leaders” set themselves apart through strong plans, firm decisions, and a disciplined approach to building and using AI

The post Inside the playbook of companies winning with AI appeared first on AI News.

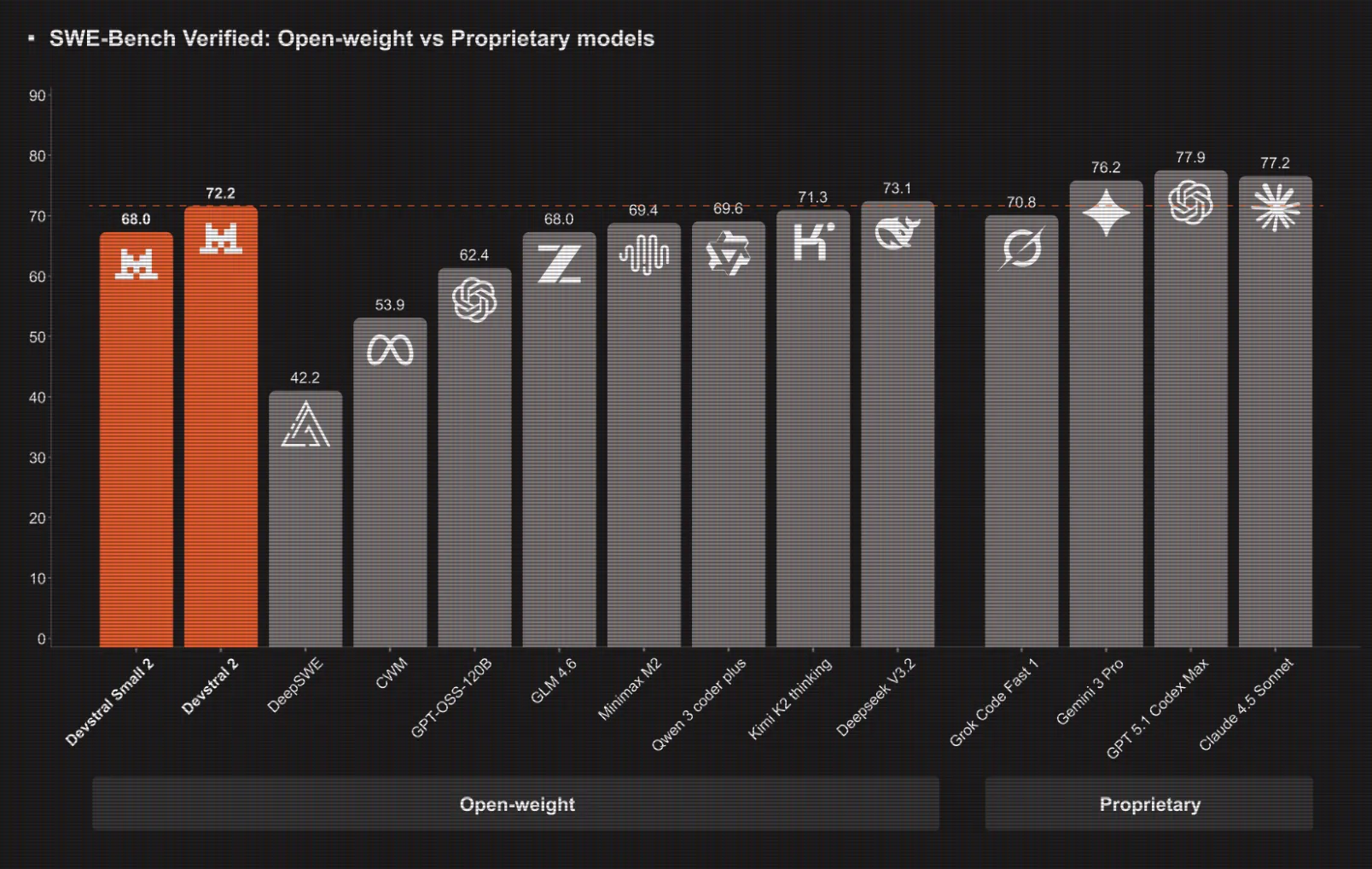

Mistral AI Ships Devstral 2 Coding Models And Mistral Vibe CLI For Agentic, Terminal Native Development

MarkTechPost

Mistral AI has introduced Devstral 2, a next-generation coding model family for software engineering agents, together with Mistral Vibe CLI, an open-source command-line coding assistant that runs inside the terminal or IDEs that support the Agent Communication Protocol. Devstral 2 and Devstral Small 2, model sizes, context and benchmarks Devstral 2 is

The post Mistral AI Ships Devstral 2 Coding Models And Mistral Vibe CLI For Agentic, Terminal Native Development appeared first on MarkTechPost.

Arbitrage: Efficient Reasoning via Advantage-Aware Speculation

cs.AI updates on arXiv.org. arXiv:2512.05033v2 Announce Type: replace-cross

Abstract: Modern Large Language Models achieve impressive reasoning capabilities with long Chain of Thoughts, but they incur substantial computational cost during inference, and this motivates techniques to improve the performance-cost ratio. Among these techniques, Speculative Decoding accelerates inference by employing a fast but inaccurate draft model to autoregressively propose tokens, which are then verified in parallel by a more capable target model. However, due to unnecessary rejections caused by token mismatches in semantically equivalent steps, traditional token-level Speculative Decoding struggles in reasoning tasks. Although recent works have shifted to step-level semantic verification, which improves efficiency by accepting or rejecting entire reasoning steps, existing step-level methods still regenerate many rejected steps with little improvement, wasting valuable target compute. To address this challenge, we propose Arbitrage, a novel step-level speculative generation framework that routes generation dynamically based on the relative advantage between draft and target models. Instead of applying a fixed acceptance threshold, Arbitrage uses a lightweight router trained to predict when the target model is likely to produce a meaningfully better step. This routing approximates an ideal Arbitrage Oracle that always chooses the higher-quality step, achieving near-optimal efficiency-accuracy trade-offs. Across multiple mathematical reasoning benchmarks, Arbitrage consistently surpasses prior step-level Speculative Decoding baselines, reducing inference latency by up to $\sim 2\times$ at matched accuracy.
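The step-level routing idea in the abstract can be sketched roughly as follows. This is not the authors' implementation: `route_steps`, the callables passed to it, the `min_advantage` cutoff, and the `[DONE]` stop marker are all hypothetical names introduced for illustration, and the toy models are plain string functions standing in for real draft and target LLMs. The sketch assumes the router returns a score for how much better the target model's step is expected to be, and that the target is called only when that score exceeds a cutoff.

```python
from typing import Callable, List, Tuple

def route_steps(
    prompt: str,
    draft_step: Callable[[str], str],                   # hypothetical: cheap model, one step
    target_step: Callable[[str], str],                  # hypothetical: strong model, one step
    predicted_advantage: Callable[[str, str], float],   # hypothetical router score
    min_advantage: float = 0.5,                         # assumed decision cutoff
    max_steps: int = 8,
    stop_marker: str = "[DONE]",
) -> List[Tuple[str, str]]:
    """Generate reasoning steps, calling the target model only when the
    router predicts it would produce a meaningfully better step."""
    context = prompt
    trace = []
    for _ in range(max_steps):
        step = draft_step(context)
        source = "draft"
        # Spend target compute only where the router expects a real gain.
        if predicted_advantage(context, step) > min_advantage:
            step = target_step(context)
            source = "target"
        trace.append((source, step))
        context += "\n" + step
        if stop_marker in step:
            break
    return trace

# Toy stand-ins so the sketch runs end to end.
def toy_draft(context: str) -> str:
    n = context.count("\n") + 1
    return f"draft step {n}" if n < 3 else "draft answer [DONE]"

def toy_target(context: str) -> str:
    n = context.count("\n") + 1
    return f"target step {n}" if n < 3 else "target answer [DONE]"

def toy_router(context: str, step: str) -> float:
    # Pretend the router flags only the second step as worth regenerating.
    return 1.0 if step.endswith("2") else 0.0

trace = route_steps("question", toy_draft, toy_target, toy_router)
for source, step in trace:
    print(source, "->", step)
```

In this toy run the draft's first and final steps are kept and only the second step is regenerated by the target, which illustrates how routing on predicted advantage can avoid regenerating steps that would gain little.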

Accenture and Anthropic partner to boost enterprise AI integration

AI News

Accenture and Anthropic are setting out to boost enterprise AI integration with a newly expanded partnership. While 2024 was defined by corporate curiosity regarding Large Language Models (LLMs), the current mandate for business leaders is operationalising these tools to achieve a return on investment. The new Accenture Anthropic Business Group combines Anthropic’s model capabilities with Accenture’s

The post Accenture and Anthropic partner to boost enterprise AI integration appeared first on AI News.

How Scout24 is building the next generation of real-estate search with AI

OpenAI News

Scout24 has created a GPT-5 powered conversational assistant that reimagines real-estate search, guiding users with clarifying questions, summaries, and tailored listing recommendations.

Prompt Engineering for Outlier Detection

KDnuggets

Learn how to detect outliers by doing a real-life data project and improve the process with AI.

How to Develop AI-Powered Solutions, Accelerated by AI

Towards Data Science

From idea to impact: using AI as your accelerating copilot

The post How to Develop AI-Powered Solutions, Accelerated by AI appeared first on Towards Data Science.

OpenAI targets AI skills gap with new certification standards

AI News

Adoption of generative AI has outpaced workforce capability, prompting OpenAI to target the skills gap with new certification standards. While it’s safe to say OpenAI’s tools have reached mass adoption, organisations struggle to convert this usage into reliable output. To address this, OpenAI has announced ‘AI Foundations,’ a structured initiative designed to standardise how employees

The post OpenAI targets AI skills gap with new certification standards appeared first on AI News.
