5 AI-powered tools streamlining contract management today (AI News)

Contract work has evolved to touch privacy, security, revenue recognition, data residency, vendor risk, renewals and numerous internal approvals. At the same time, teams are expected to turn agreements around faster and keep every signed obligation visible after signature. Artificial intelligence is becoming a practical layer in this process. It can read language at scale,

The post 5 AI-powered tools streamlining contract management today appeared first on AI News.

2025’s AI chip wars: What enterprise leaders learned about supply chain reality (AI News)

AI chip shortage became the defining constraint for enterprise AI deployments in 2025, forcing CTOs to confront an uncomfortable reality: semiconductor geopolitics and supply chain physics matter more than software roadmaps or vendor commitments. What began as US export controls restricting advanced AI chips to China evolved into a broader infrastructure crisis affecting enterprises globally, not

The post 2025’s AI chip wars: What enterprise leaders learned about supply chain reality appeared first on AI News.

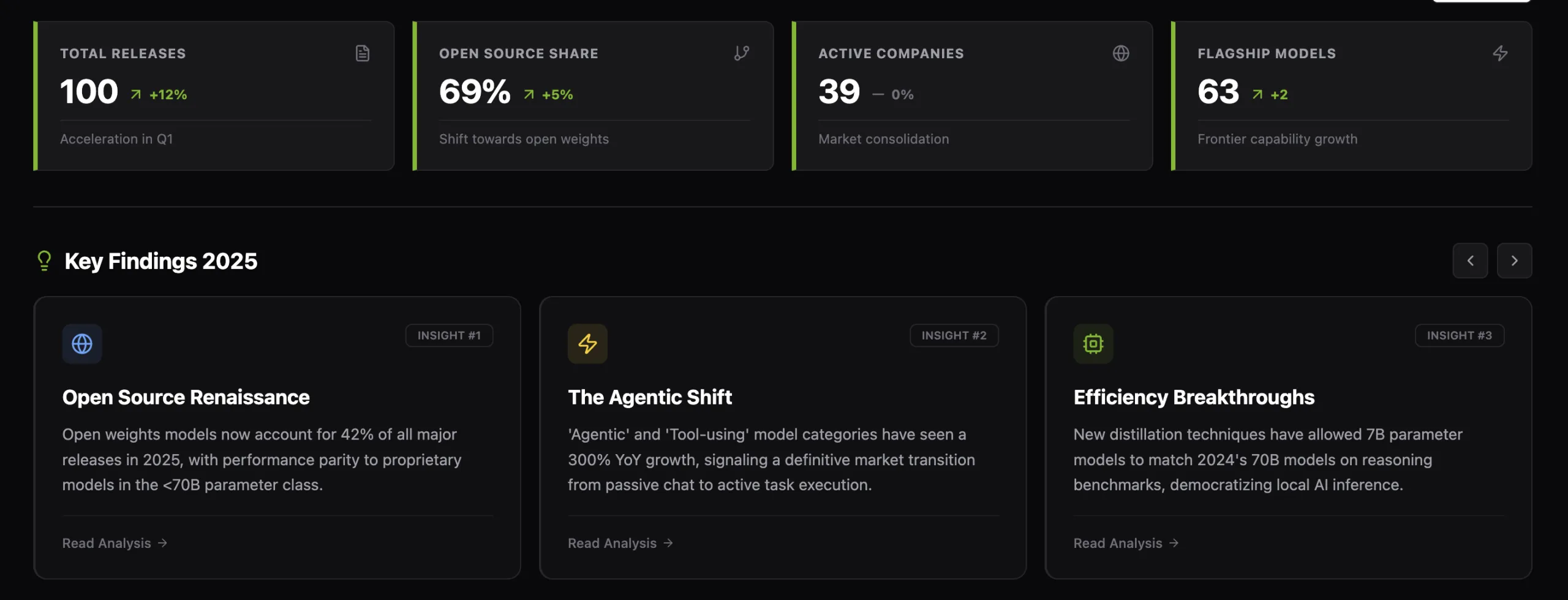

Marktechpost Releases ‘AI2025Dev’: A Structured Intelligence Layer for AI Models, Benchmarks, and Ecosystem Signals (MarkTechPost)

Marktechpost has released AI2025Dev, its 2025 analytics platform (available to AI developers and researchers without any signup or login), designed to convert the year’s AI activity into a queryable dataset spanning model releases, openness, training scale, benchmark performance, and ecosystem participants. Marktechpost is a California-based AI news platform covering machine learning, deep learning, and

The post Marktechpost Releases ‘AI2025Dev’: A Structured Intelligence Layer for AI Models, Benchmarks, and Ecosystem Signals appeared first on MarkTechPost.

Harm in AI-Driven Societies: An Audit of Toxicity Adoption on Chirper.ai (cs.AI updates on arXiv.org)

arXiv:2601.01090v1 Announce Type: cross

Abstract: Large Language Models (LLMs) are increasingly embedded in autonomous agents that participate in online social ecosystems, where interactions are sequential, cumulative, and only partially controlled. While prior work has documented the generation of toxic content by LLMs, far less is known about how exposure to harmful content shapes agent behavior over time, particularly in environments composed entirely of interacting AI agents. In this work, we study toxicity adoption of LLM-driven agents on Chirper.ai, a fully AI-driven social platform. Specifically, we model interactions in terms of stimuli (posts) and responses (comments), and we operationalize exposure through observable interactions rather than inferred recommendation mechanisms.

We conduct a large-scale empirical analysis of agent behavior, examining how response toxicity relates to stimulus toxicity, how repeated exposure affects the likelihood of toxic responses, and whether toxic behavior can be predicted from exposure alone. Our findings show that while toxic responses are more likely following toxic stimuli, a substantial fraction of toxicity emerges spontaneously, independent of exposure. At the same time, cumulative toxic exposure significantly increases the probability of toxic responding. We further introduce two influence metrics, the Influence-Driven Response Rate and the Spontaneous Response Rate, revealing a strong trade-off between induced and spontaneous toxicity. Finally, we show that the number of toxic stimuli alone enables accurate prediction of whether an agent will eventually produce toxic content.

These results highlight exposure as a critical risk factor in the deployment of LLM agents and suggest that monitoring encountered content may provide a lightweight yet effective mechanism for auditing and mitigating harmful behavior in the wild.

I Asked ChatGPT, Claude and DeepSeek to Build Tetris (KDnuggets)

Which of these state-of-the-art models writes the best code?

Feature Detection, Part 3: Harris Corner Detection (Towards Data Science)

Finding the most informative points in images

The post Feature Detection, Part 3: Harris Corner Detection appeared first on Towards Data Science.

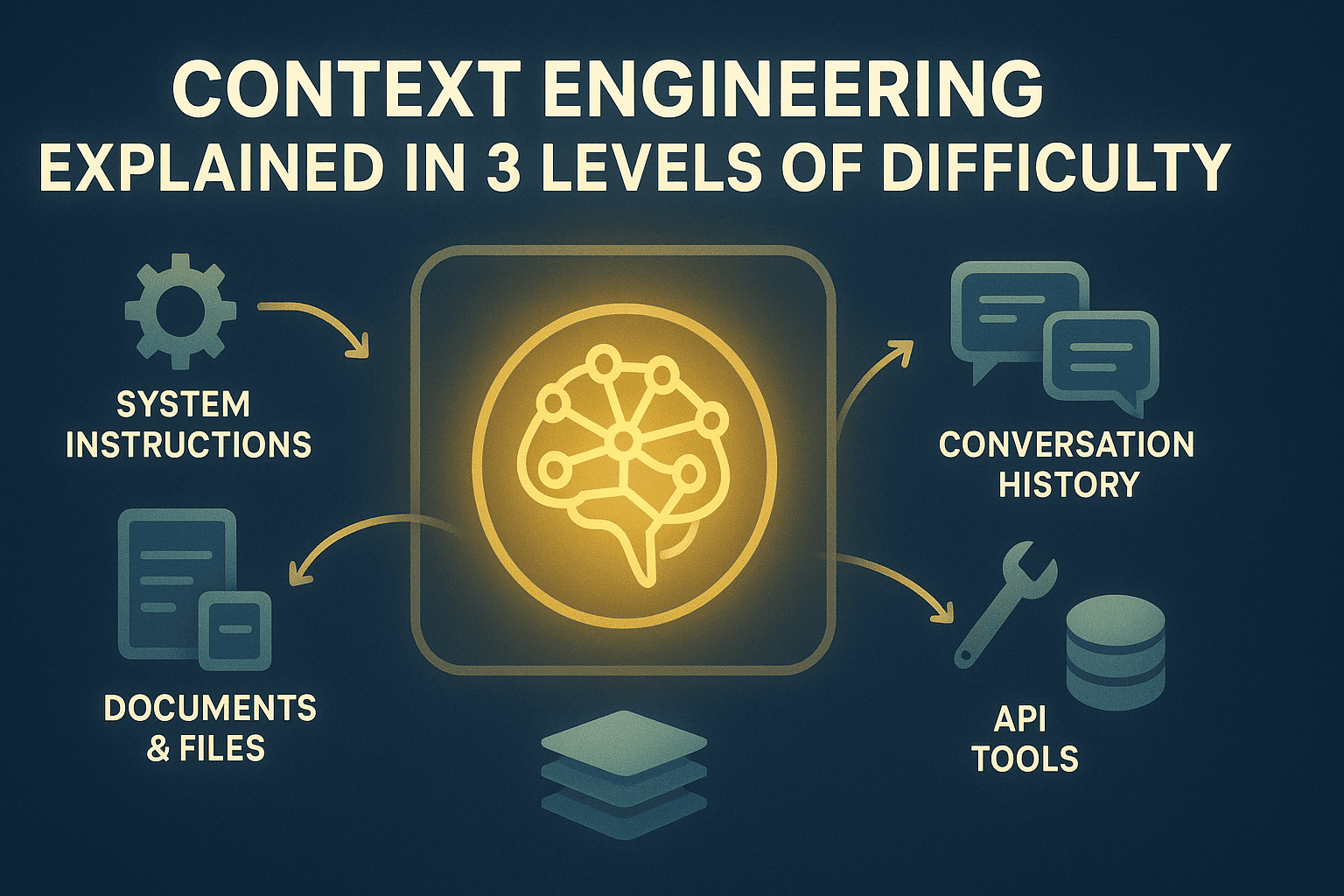

Context Engineering Explained in 3 Levels of Difficulty (KDnuggets)

Long-running LLM applications degrade when context is unmanaged. Context engineering turns the context window into a deliberate, optimized resource. Learn more in this article.

Ray: Distributed Computing for All, Part 1 (Towards Data Science)

From single to multi-core on your local PC and beyond

The post Ray: Distributed Computing for All, Part 1 appeared first on Towards Data Science.

Stop Blaming the Data: A Better Way to Handle Covariance Shift (Towards Data Science)

Instead of using shift as an excuse for poor performance, use Inverse Probability Weighting to estimate how your model should perform in the new environment

The post Stop Blaming the Data: A Better Way to Handle Covariance Shift appeared first on Towards Data Science.

6 Docker Tricks to Simplify Your Data Science Reproducibility (KDnuggets)

Read these 6 tricks for treating your Docker container like a reproducible artifact, not a disposable wrapper.
