Author: Derrick D. Jackson

Title: Founder & Senior Director of Cloud Security Architecture & Risk

Credentials: CISSP, CRISC, CCSP



Foundations of AI

AI Fundamentals: Understanding the Core Concepts and Foundations

Banks catch fraudulent credit card transactions before you even notice the charge.

That’s AI at work. So is the system helping radiologists spot tumors in medical scans, the algorithm predicting which factory equipment will fail next week, and the software routing thousands of delivery trucks through optimal routes. These aren’t magic tricks or science fiction. They’re software systems performing tasks that typically require human intelligence, like recognizing speech, translating languages, or interpreting visual information.

But what actually is artificial intelligence? Strip away the hype, the fears about robot overlords, and the corporate buzzwords. You’re left with something both more mundane and more powerful than most people realize.

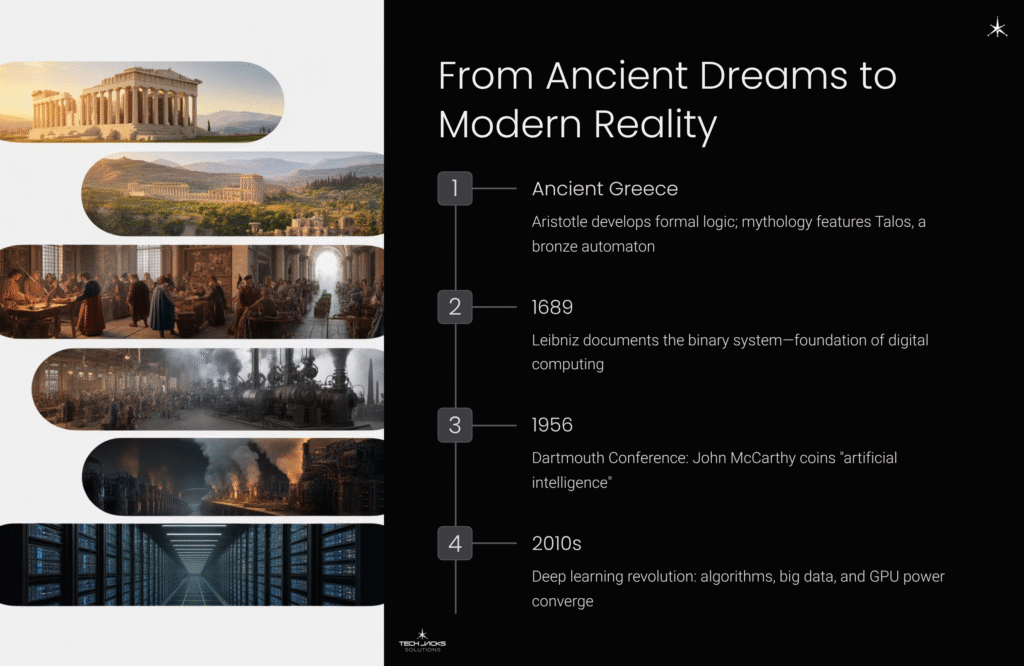

From Ancient Dreams to Modern Reality

The idea of artificial beings isn’t new. Greek mythology featured Talos, a bronze automaton created by Hephaestus to guard Crete. Medieval legends spoke of Golems animated by mystical means. Humans have always been intrigued by the possibility of creating intelligent machines.

The intellectual groundwork came much later. Aristotle developed formal logic in ancient Greece, providing the first systematic method for analyzing the mechanics of reason. Roughly two thousand years later, Gottfried Wilhelm Leibniz published his account of binary arithmetic in 1703 – the mathematical foundation that would eventually power digital computers.

But the field’s true birth came in 1956. The Dartmouth Summer Research Project on Artificial Intelligence formally established AI as an academic discipline. John McCarthy, who coined the term “artificial intelligence” in the proposal for the workshop, later defined it as “the science and engineering of making intelligent machines.” The attendees believed machines as intelligent as humans would exist within a generation.

They were spectacularly wrong. The field experienced two “AI winters” – periods when funding dried up and interest collapsed because the technology couldn’t deliver on its promises. The challenges of natural language understanding and common sense reasoning proved far harder than anyone anticipated.

Then something changed. The convergence of three factors in the 2010s sparked the current AI revolution: powerful algorithms (particularly deep learning), massive datasets from the internet, and affordable computing power from graphics processors originally designed for video games. What couldn’t be done in 1956 became not just possible but transformative.

Core AI Concepts: Essential Definitions

Understanding AI requires grasping several foundational concepts. Here’s a clear breakdown of the terms and ideas you’ll encounter.

Types of AI by Capability

Artificial Narrow Intelligence (ANI) / Weak AI: AI systems designed to perform specific, narrow tasks. All current AI falls into this category. Examples include facial recognition, language translation, chess-playing programs, and medical diagnosis systems. These systems excel at their designated tasks but can’t operate outside their defined domain.

Artificial General Intelligence (AGI) / Strong AI: Theoretical AI with human-like general cognitive abilities across diverse domains. An AGI could understand, learn, and apply intelligence to solve any problem, not just specialized tasks. This currently exists only in theory and science fiction.

Artificial Superintelligence (ASI): Hypothetical AI that vastly surpasses human intelligence in every aspect. This represents a future state well beyond AGI that remains purely speculative.

Learning Paradigms

Supervised Learning: Systems learn from labeled training data to make predictions on new cases. Used for classification (spam detection, image recognition) and regression (price prediction, risk assessment). Requires extensive labeled datasets.

Unsupervised Learning: Systems find hidden patterns in unlabeled data. Used for clustering (customer segmentation) and dimensionality reduction (data compression). No pre-labeled answers are provided.

Reinforcement Learning: Systems learn through trial and error, receiving rewards or penalties for actions. Used for game playing, robotics, and autonomous systems. The AI discovers optimal strategies through experience.

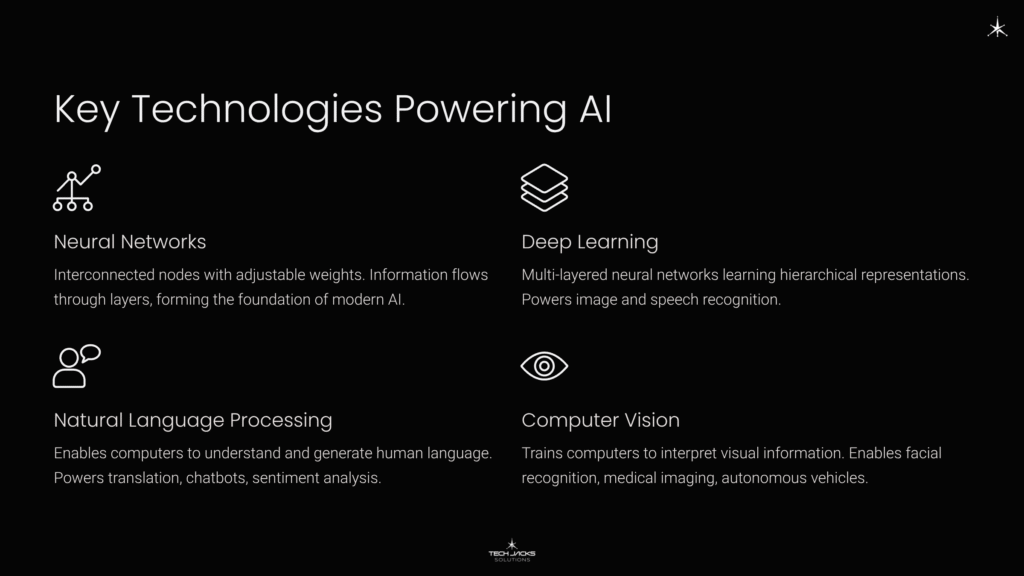

Key Technologies and Approaches

Neural Networks: Computing systems with interconnected nodes loosely inspired by biological neurons. Information flows through layers, with each connection having an adjustable weight. The foundation of modern AI.

Deep Learning: Neural networks with multiple layers that learn hierarchical representations. Deeper networks can learn increasingly complex patterns, making them powerful for tasks like image and speech recognition.

Natural Language Processing (NLP): The AI subfield that enables computers to understand, interpret, and generate human language. Powers translation services, chatbots, sentiment analysis, and text generation.

Computer Vision: The AI subfield that enables computers to interpret and understand visual information. Enables facial recognition, medical image analysis, autonomous vehicles, and quality control systems.

Machine Learning: Systems that learn from data to make predictions or decisions without being explicitly programmed with task-specific rules. The core engine powering most modern AI applications.

Philosophical Foundations

The Turing Test: Proposed by Alan Turing in 1950: if a machine’s conversational behavior is indistinguishable from a human’s, we should consider it intelligent. A behavioral rather than mechanistic definition of intelligence.

The Chinese Room Argument: John Searle’s 1980 thought experiment challenging whether computers truly “understand.” A person following rules to manipulate Chinese symbols can appear to understand Chinese without actually comprehending the language. Argues that syntax (symbol manipulation) doesn’t equal semantics (meaning).

Symbolic AI vs. Connectionism: Two competing philosophies. Symbolic AI uses explicit rules and logic (human-readable, transparent but brittle). Connectionism uses neural networks learning from data (powerful pattern recognition but opaque). Modern AI increasingly uses hybrid approaches.

Important Concepts

Training Data: The dataset used to teach an AI system. Quality and quantity of training data directly impact system performance and potential biases.

Model: The trained AI system itself, that is, the mathematical representation of patterns learned from data.

Inference: Using a trained model to make predictions or decisions on new, unseen data.

Bias: Systematic errors in AI systems, often reflecting biases present in training data. Can lead to unfair or discriminatory outcomes.

Black Box Problem: The difficulty in understanding how complex AI systems (especially deep neural networks) arrive at their decisions. Creates challenges for trust, debugging, and accountability.

How AI Differs From Traditional Software

Think of traditional software as following a recipe. Every step is spelled out. If the recipe says add two cups of flour, the program adds two cups of flour. No interpretation. No adaptation.

AI works differently. These systems don’t just follow instructions. They learn patterns from data and apply those patterns to situations they’ve never seen before. The “intelligence” here is statistical pattern-finding carried out by software, not the kind of understanding a human brings to a task.

Is AI conscious? No. Does it understand what it’s doing? Also no. Can it perform specific tasks that once required human cognition? Absolutely.

The distinction matters. Traditional programs execute predetermined logic. AI systems discover logic by analyzing examples. A traditional spam filter follows rules someone programmed (“block emails with these keywords”). An AI spam filter learns what spam looks like by studying thousands of examples, then recognizes patterns in emails it’s never seen before.
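The contrast between the two spam filters can be sketched in a few lines of Python. This is a minimal illustration, not how any production filter works: the six training emails, the keyword list, and the function names are all invented for the example, and the learned filter is a tiny naive Bayes classifier chosen only because it fits in a short listing.

```python
import math
from collections import Counter

# Hypothetical toy data, invented for illustration: (text, is_spam).
TRAINING_EMAILS = [
    ("win a free prize now", True),
    ("free money claim your prize", True),
    ("cheap pills limited offer", True),
    ("meeting agenda for monday", False),
    ("lunch on friday with the team", False),
    ("quarterly report attached for review", False),
]

# Traditional approach: a person writes the rule by hand.
BLOCKED_KEYWORDS = {"free", "prize"}


def rule_based_is_spam(text):
    """Flag an email only if it contains a hand-picked keyword."""
    return any(word in BLOCKED_KEYWORDS for word in text.lower().split())


def train_naive_bayes(examples):
    """Count how often each word appears in spam and in non-spam."""
    word_counts = {True: Counter(), False: Counter()}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.lower().split())
    vocabulary = set(word_counts[True]) | set(word_counts[False])
    return word_counts, label_counts, vocabulary


def learned_is_spam(text, model):
    """Compare log-probabilities of the two labels given the words."""
    word_counts, label_counts, vocabulary = model
    total = sum(label_counts.values())
    scores = {}
    for label in (True, False):
        score = math.log(label_counts[label] / total)
        n_words = sum(word_counts[label].values())
        for word in text.lower().split():
            if word not in vocabulary:
                continue  # words never seen in training carry no evidence
            # Add-one smoothing avoids taking the log of zero.
            score += math.log(
                (word_counts[label][word] + 1) / (n_words + len(vocabulary))
            )
        scores[label] = score
    return scores[True] > scores[False]


model = train_naive_bayes(TRAINING_EMAILS)
```

With this toy data, `rule_based_is_spam("cheap pills offer")` misses the message because no one listed those keywords, while `learned_is_spam("cheap pills offer", model)` flags it because those words appeared only in the spam examples.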

The Three Ways AI Systems Learn

Not all AI learns the same way. The approach depends on the problem you’re trying to solve and the data you have available.

Supervised learning needs labels. You show the system thousands of photos tagged “cat” or “dog,” and it figures out the visual patterns that distinguish the two. This paradigm is used for classification tasks like identifying spam or regression tasks like predicting prices. The system learns by being told the correct answer repeatedly until it internalizes the pattern.
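The regression case mentioned above can be sketched with an ordinary least-squares line fit. The numbers are hypothetical (four made-up homes with a size and a price), and `fit_line` is an illustrative helper, not part of any library. The point is only that the labeled examples, not a programmer, determine the slope and intercept.

```python
def fit_line(xs, ys):
    """Least-squares fit of y = slope * x + intercept."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical labeled data: each size comes with its known price.
sizes_m2 = [50, 70, 90, 110]
prices_k = [155, 205, 275, 325]

slope, intercept = fit_line(sizes_m2, prices_k)

# Inference: apply the learned line to a size that was not in the data.
predicted = slope * 100 + intercept
```

Changing the labeled prices changes the fitted line, which is the sense in which the system "learns by being told the correct answer."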

The second approach flips that completely. Unsupervised learning works with unlabeled data, letting the system find hidden patterns or intrinsic structures on its own. Nobody tells it what to look for. Retailers use this to group customers with similar shopping behaviors without predefining categories. The AI discovers segments humans might never have thought to create.
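As a rough sketch of the customer-grouping idea, the following uses k-means, one common clustering method (the article does not say which method retailers actually use). The customer data is invented, with each point holding hypothetical monthly visits and average basket value, and the starting centroids are simply two of the data points so the run is repeatable.

```python
import math


def kmeans(points, centroids, iterations=10):
    """Alternate between assigning points and moving centroids."""
    clusters = [[] for _ in centroids]
    for _ in range(iterations):
        clusters = [[] for _ in centroids]
        for point in points:
            # Assign each point to its nearest centroid.
            nearest = min(
                range(len(centroids)),
                key=lambda i: math.dist(point, centroids[i]),
            )
            clusters[nearest].append(point)
        # Move each centroid to the mean of its assigned points.
        centroids = [
            tuple(sum(axis) / len(cluster) for axis in zip(*cluster))
            if cluster
            else centroids[i]
            for i, cluster in enumerate(clusters)
        ]
    return centroids, clusters


# Hypothetical customers: (visits per month, average basket value).
customers = [(2, 15), (3, 18), (2, 20), (12, 80), (14, 95), (13, 85)]

final_centroids, clusters = kmeans(customers, [customers[0], customers[3]])
```

No labels are supplied anywhere; the two groups (occasional small-basket shoppers and frequent large-basket shoppers) emerge from distances alone.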

Reinforcement learning operates through trial and error. An agent performs actions in an environment and receives rewards or penalties, adjusting its strategy to maximize cumulative reward over time. DeepMind used this approach to train AI systems that play complex games at superhuman levels. The system tries something, observes the outcome, and refines its decision-making process.
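The trial-and-error loop can be illustrated with tabular Q-learning on a toy problem. Everything here is an assumption made for the sketch: a five-cell corridor, a reward of 1 for reaching the last cell, and the particular learning rate, discount, and exploration settings. It is far simpler than the game-playing systems mentioned above, but the structure of act, observe, and adjust is the same.

```python
import random

N_STATES = 5  # corridor cells 0..4; cell 4 is the goal
ACTIONS = ("left", "right")


def step(state, action):
    """Environment: move one cell, reward 1.0 on reaching the goal."""
    next_state = max(state - 1, 0) if action == "left" else state + 1
    done = next_state == N_STATES - 1
    reward = 1.0 if done else 0.0
    return next_state, reward, done


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action index]
    for _ in range(episodes):
        state = 0
        for _ in range(200):  # cap on episode length
            if rng.random() < epsilon:
                action = rng.randrange(len(ACTIONS))  # explore
            else:
                best = max(q[state])
                # Exploit, breaking ties between equal values at random.
                action = rng.choice(
                    [i for i, value in enumerate(q[state]) if value == best]
                )
            next_state, reward, done = step(state, ACTIONS[action])
            # Q-learning update: nudge the estimate toward
            # reward + discounted best value of the next state.
            future = 0.0 if done else gamma * max(q[next_state])
            q[state][action] += alpha * (reward + future - q[state][action])
            state = next_state
            if done:
                break
    return q


q_table = train()

# Greedy policy for the non-terminal cells after training.
policy = [ACTIONS[row.index(max(row))] for row in q_table[:-1]]
```

Nobody tells the agent that "right" is correct. It starts with all estimates at zero, stumbles onto the goal, and the reward gradually propagates back through the table until moving right is preferred in every cell.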

Each approach requires different data and solves different problems. You can’t use supervised learning without labeled data. Reinforcement learning is a poor fit for tasks that don’t involve sequential decisions and some form of reward signal. Understanding the distinction helps you recognize what AI can and can’t do for specific applications.

Neural Networks: The Engine Behind Modern AI

Here’s where things get interesting.

Modern AI’s power comes from artificial neural networks loosely inspired by biological neurons. The comparison to human brains shouldn’t be taken too literally, but the basic architecture matters. These networks process information through layers of interconnected nodes, with each connection having a numerical weight that gets adjusted during training.
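To make "weights that get adjusted during training" concrete, here is a single artificial neuron trained by gradient descent. It is a deliberately tiny sketch: the task (learning the logical AND of two inputs), the learning rate, and the number of passes are all choices made for illustration, and real networks contain many such units trained with more elaborate tooling.

```python
import math

# Hypothetical toy dataset: the logical AND of two inputs.
EXAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, inputs):
    """Weighted sum of the inputs, squashed into the range 0..1."""
    return sigmoid(sum(w * x for w, x in zip(weights, inputs)) + bias)


def train(examples, learning_rate=0.5, epochs=5000):
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for inputs, target in examples:
            error = predict(weights, bias, inputs) - target
            # For log loss with a sigmoid, the gradient for each weight
            # is error * input, so step each weight against it.
            weights = [
                w - learning_rate * error * x for w, x in zip(weights, inputs)
            ]
            bias -= learning_rate * error
    return weights, bias


weights, bias = train(EXAMPLES)
outputs = [round(predict(weights, bias, inputs)) for inputs, _ in EXAMPLES]
```

The weights start at zero and end up positive, with a negative bias, purely because each wrong prediction pushed them a little in the direction that reduces the error.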

Deep learning refers to neural networks with multiple layers. The “deep” part means you’re stacking many layers together, allowing the network to learn increasingly complex patterns. Look at how image recognition works. The first layer might detect edges. The next layer combines edges into shapes. Deeper layers recognize objects. This hierarchical learning mirrors how humans process visual information (though the underlying mechanisms are entirely different).
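A small sketch can show why stacking layers matters. The network below has hand-picked weights (nothing is learned here, and the values are assumptions chosen for the example). Its first layer computes two simple features of the inputs, and the second layer combines them into XOR, a function that no single neuron of this kind can compute on its own.

```python
def step(z):
    """Threshold activation: fire (1) only if the input is positive."""
    return 1 if z > 0 else 0


def layer(inputs, weights, biases):
    """One layer: every unit takes a weighted sum of all inputs."""
    return [
        step(sum(w * x for w, x in zip(unit_weights, inputs)) + bias)
        for unit_weights, bias in zip(weights, biases)
    ]


# Hand-picked weights, for illustration only.
HIDDEN_WEIGHTS = [[1, 1], [1, 1]]
HIDDEN_BIASES = [-0.5, -1.5]  # unit 0 acts as OR, unit 1 acts as AND
OUTPUT_WEIGHTS = [[1, -1]]
OUTPUT_BIASES = [-0.5]  # fires when OR is on and AND is off


def xor_network(x1, x2):
    hidden = layer([x1, x2], HIDDEN_WEIGHTS, HIDDEN_BIASES)
    return layer(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES)[0]


results = [xor_network(a, b) for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]]
```

The second layer never sees the raw inputs, only the features produced by the first. That is the same compositional idea as the edges-to-shapes-to-objects description, although in trained networks the intermediate features are learned and are often much harder to name.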

What makes neural networks exceptional at pattern recognition also makes them terrible at other things. These systems lack direct, embodied experience of the world. They learn from second-hand data but have no physical understanding of causality or social nuance. A neural network trained on millions of cat photos doesn’t understand what a cat actually is. It recognizes visual patterns. That’s all.

Where AI Works Right Now

Walk through your day and count the AI touchpoints. You’ll lose track quickly.

Radiologists use AI systems that analyze medical images to help detect diseases earlier and with greater consistency. Financial institutions deploy algorithmic trading systems that process market data and execute trades in microseconds, while parallel systems flag suspicious transactions for fraud investigation. Manufacturing plants run predictive maintenance algorithms that flag likely equipment failures, sometimes weeks in advance, preventing costly downtime.

Computer vision models identify and classify objects in real-time, powering everything from quality control systems in factories to autonomous vehicle navigation. Natural language processing systems extract meaning from human language, enabling sentiment analysis tools that scan thousands of customer reviews to identify emerging product issues before they become widespread complaints.

The applications run deeper than consumer convenience. AI assists in drug discovery, identifying promising molecular compounds from millions of possibilities. Credit scoring systems evaluate loan applications by analyzing patterns in repayment data. Logistics companies optimize delivery routes for thousands of vehicles simultaneously, reducing fuel costs and delivery times.

Creative industries are experiencing AI’s impact in particularly visible ways. Generative AI models create content ranging from text to images to computer code, often producing output that’s difficult to distinguish from human-made work. This isn’t experimental technology confined to research labs. It’s operational, deployed at scale, generating billions of dollars in economic value.

What AI Can’t Do (And Why That Matters)

Current AI systems have fundamental limitations that don’t disappear with more data or bigger models.

These systems lack embodied experience of the physical world. They learn from text, images, and numbers but have no direct sensory understanding of causality, physics, or social dynamics. An AI can calculate the trajectory of a thrown ball but has never felt the weight of that ball in its hand or experienced the disappointment of dropping it.

AI struggles with fluid, ambiguous, context-dependent reasoning that characterizes everyday human life. These systems excel at structured problems with clear parameters. Present them with a novel situation requiring common sense, and performance degrades rapidly. They’re also computationally expensive and data-hungry, requiring massive datasets and significant energy for training.

Understanding limitations isn’t pessimism. It’s practical knowledge that prevents costly mistakes. You wouldn’t use a hammer to fix a computer, and you shouldn’t deploy AI for tasks it can’t handle. Knowing the boundaries helps you apply the technology appropriately and maintain realistic expectations about what it can accomplish.

How to Think About AI’s Future

AI will continue improving at specific tasks. Better translation. More accurate diagnosis. More capable robotics. The technology advances steadily within narrow domains where training data is abundant and success metrics are clear.

Achieving artificial general intelligence remains theoretical. That’s AI with human-like general cognitive abilities across diverse domains. Despite breathless predictions, current systems are powerful but unreliable in open-ended scenarios. They excel when the problem space is well-defined and the training data is comprehensive. Outside those boundaries, performance becomes unpredictable.

Human oversight remains critical. This matters especially in high-stakes applications like healthcare, criminal justice, and financial services. AI can augment human judgment but shouldn’t replace it entirely. The combination of AI’s pattern recognition capabilities with human reasoning, creativity, and ethical judgment creates better outcomes than either alone.

Understanding AI fundamentals helps you navigate an increasingly AI-enabled world. You don’t need to be a machine learning engineer to grasp what you’re working with. Just recognize that AI is a sophisticated tool that learns from data, not a sentient being with understanding or consciousness. That clarity makes all the difference in knowing when to trust it, when to question it, and when to walk away.

BC

October 14, 2025

The neural network layer explanation (edges → shapes → objects) is useful for teaching but misleading about what actually happens. When testing vision models across my systems, intermediate layers don’t clearly correspond to human-interpretable concepts. The “shape detection” story is a post-hoc interpretation – the model learns statistical correlations that happen to align with what humans call shapes, not an understanding of geometric primitives.

The supervised learning spam filter example oversimplifies real-world deployment. Testing email classification locally shows that models trained on labeled spam quickly become outdated as adversaries adapt. The pattern-matching approach works initially but needs constant retraining as spam evolves, which the article doesn’t address. Actual systems incorporate multiple signals beyond just learned patterns.

BC

October 14, 2025

The explanation of temperature scaling is technically accurate, but it does not address the real-world issue that the optimal temperature varies greatly depending on the model and the task. In my lab, testing across different models (from 8B to 70B parameters, with various quantizations), the same temperature=0.7 produces conservative outputs on one model and nearly random text on another.

The parameter isn’t model-agnostic, despite vendor documentation suggesting universal ranges. The interaction between top_p and temperature results in complex effects that the article mentions but doesn’t quantify. In my experiments, temperature=0.8 with top_p=0.95 behaves very differently than temperature=0.9 with top_p=0.90, even though both seem like “slightly random” settings. The combined probability distributions change unpredictably, making systematic tuning nearly impossible without extensive testing on your specific model.