AI Acceptable Use Policy

Your compliance blueprint for responsible AI deployment, with practical framework alignment and a 90-day rollout plan.

An AI Acceptable Use Policy is not just another compliance document. It is the primary instrument for translating high-level ethical principles and complex regulatory requirements into concrete, auditable corporate practice. This guide shows you exactly how to build one using proven frameworks.

The regulatory landscape shifted dramatically in 2024. The EU AI Act entered into force on August 1. NIST released voluntary guidance that is becoming the de facto US standard. AWS became the first major cloud provider to achieve ISO/IEC 42001 certification. If you are competing with companies like these, you are already behind.

What Is an AI Acceptable Use Policy?

An AI Acceptable Use Policy is a formal organizational framework that defines the boundaries, requirements, and expectations for how AI technologies may be used across your organization. It tells every employee, contractor, and partner what they can do with AI, what they cannot do, and what safeguards must be in place before any AI system touches production data or customer-facing processes.

Traditional IT acceptable use policies do not cover AI. Machine learning systems create risks that standard IT governance was never designed to handle: algorithmic bias that discriminates against protected groups, privacy implications from training data exposure, automated decisions that affect real people’s employment, credit, and healthcare, and the potential for harm at a scale no individual human could cause. Your policy needs to address these specifically, not as footnotes to an existing IT policy, but as a standalone governance instrument with teeth.

Think of the relationship this way: your AI Governance Charter is the constitution. Your AI Acceptable Use Policy is the first law passed under that constitution. The charter establishes principles and authority. The AUP translates those principles into specific, enforceable rules that every person in the organization can follow starting on day one.

Technologies Covered

Machine learning, NLP, computer vision, generative AI, predictive analytics, and any third-party AI service. Internal builds and SaaS subscriptions alike.

ISO 42001 Cl. 5.2

People in Scope

All employees, contractors, temporary staff, and anyone with access to organizational systems. No exceptions, no carve-outs.

NIST GOVERN 2.1

Data Rules

Clear guidelines per data classification level. Prohibit sensitive data in unapproved tools. Define what can and cannot be entered into public AI services.

EU AI Act Art. 10

Risk Categories

A structured framework that separates low-risk tools from high-risk systems, with proportionate controls for each tier.

NIST MAP 1.1

Key Terms to Include in Your AUP

Your policy should define these terms explicitly. Ambiguous language creates enforcement gaps. Include a definitions section early in your AUP so every reader starts from the same baseline.

Why You Need This, and Why Now

Regulatory Imperative

The EU AI Act mandates documented governance for high-risk AI. ISO 42001 certification requires a formal AI policy. Regulators are not waiting for you to catch up.

Legal Liability

Penalties for prohibited AI practices reach up to 35 million EUR or 7% of annual global turnover, whichever is higher. Art. 5 violations carry the steepest fines.
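The "whichever is higher" rule is easy to misread, so here is a minimal sketch of the arithmetic. The function name is hypothetical, and the result is only the statutory ceiling quoted above, not a prediction of any actual fine.

```python
def max_prohibited_practice_fine(annual_global_turnover_eur: float) -> float:
    """Ceiling for a prohibited-practice fine: the higher of
    EUR 35 million or 7% of annual global turnover."""
    fixed_cap_eur = 35_000_000
    # Multiply before dividing so round figures stay exact.
    turnover_based_cap = annual_global_turnover_eur * 7 / 100
    return max(fixed_cap_eur, turnover_based_cap)


# A company with EUR 1 billion turnover faces a ceiling of EUR 70 million;
# a company with EUR 100 million turnover still faces the EUR 35 million floor.
print(max_prohibited_practice_fine(1_000_000_000))
print(max_prohibited_practice_fine(100_000_000))
```

The turnover-based figure overtakes the fixed amount once turnover exceeds EUR 500 million.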

Data Exposure

Employees are entering PII, trade secrets, and proprietary data into public AI tools right now. Without an AUP, there is no policy violation to enforce.

Algorithmic Bias

Discriminatory outputs from AI systems create legal risk and reputational damage. Without mandatory fairness testing, you will not find bias until it finds you.

Operational Failures

Unvetted AI systems can create cascading business disruption. Shadow AI, where employees adopt tools without IT oversight, is the fastest-growing governance gap.

Mapping Your AUP to Frameworks

Every section of your AUP should trace back to established frameworks. Here is how the three major standards align to your policy structure.

| AUP Section | EU AI Act Requirement |
|---|---|
| Risk Management | Art. 9: Risk identification, analysis, and mitigation throughout the AI system lifecycle |
| Quality Management | Art. 17: Documented roles, responsibilities, training requirements, and quality management procedures |
| Post-Market Monitoring | Art. 72: Monitoring plans, review schedules, and ongoing performance metrics |
| Incident Reporting | Art. 73: Serious incident reporting (2 days for critical infrastructure disruption, 10 days for death, 15 days for other serious incidents) |
| Prohibited Practices | Art. 5: Banned AI applications, including social scoring and manipulative techniques, and the penalty exposure they carry |
| Human Oversight | Art. 14: Human review requirements for high-risk AI systems, including kill-switch capabilities |

| AUP Section | NIST Function |
|---|---|
| Policy Document | GOVERN: Your AUP is the primary GOVERN artifact, establishing organizational AI risk management |
| Intake & Assessment | MAP: Context establishment and risk characterization for each AI system |
| Testing & Validation | MEASURE: Fairness, bias, reliability, and performance evaluation |
| Risk Treatment | MANAGE: Risk treatments, incident response procedures, and decommissioning plans |
| Stakeholder Engagement | GOVERN 1.4 / MAP 5.2: Internal and external stakeholder engagement and feedback requirements |

| AUP Section | ISO 42001 Clause |
|---|---|
| Policy Creation | Cl. 5.2: AI policy establishment and communication across the organization |
| Implementation | Cl. 8.1: Operational planning and lifecycle execution |
| Monitoring | Cl. 9.1: Performance monitoring and measurement against objectives |
| Improvement | Cl. 10.1 / 10.2: Nonconformity handling and continual improvement |
| Risk Assessment | Cl. 6.1 / 8.2: Risk actions and AI risk assessment processes |

Building Your Policy: Foundation First

A policy without organizational buy-in is just a PDF on a shared drive. Follow this sequence to build something that actually gets enforced.

Executive Sponsorship

Secure C-suite backing with resource allocation authority. Without executive sponsorship, your AUP has no enforcement mechanism. The sponsor signs the policy, owns the budget, and breaks organizational logjams.

Read: AI Governance Charter Guide

Milestone: Executive Sponsor Named

Cross-Functional Team

Assemble representatives from Legal, Compliance, IT, InfoSec, HR, Data Science, and key business units. Each function brings a perspective that keeps the policy practical rather than theoretical.

Read: AI Governance Committee Hub

Milestone: Working Group Chartered

AI System Inventory

You cannot govern what you cannot see. Build a central registry of every AI system in use: vendor tools, internal models, embedded AI features, and experimental projects. This is the foundation your risk classification will sit on.

Read: AI Use Case Inventories, AI Use Case Tracker

Milestone: System Registry Complete

Risk Classification

Apply a tiered framework (Low, Medium, High, Unacceptable) to every system in your inventory. This drives proportionate controls, so you do not over-govern a grammar checker or under-govern a hiring algorithm.

Read: AI Risk Management Hub

Milestone: Risk Tiers Assigned

Policy Drafting

Write the policy covering purpose, scope, governance structure, permitted and prohibited uses, data handling rules, risk management processes, and enforcement mechanisms. Use the essential sections checklist below.

Milestone: Draft Policy Complete

Review & Approval

Circulate for stakeholder feedback, incorporate revisions, obtain legal sign-off, and secure formal executive approval. Then launch through the 90-day rollout plan.

Milestone: Policy Approved

Essential Policy Sections

Every effective AUP needs these eight sections. Skip one and you will have gaps auditors and regulators will find before you do.

1. Purpose & Scope

Define the policy objectives, which AI technologies are covered, which personnel are in scope, and the organizational boundaries. Be explicit about what counts as an “AI system.”

ISO 42001 Cl. 5.2

2. Definitions

AI systems, high-risk AI, sensitive data classifications, prohibited practices, shadow AI, and any domain-specific terminology. No ambiguity in enforcement.

EU AI Act Art. 3

3. Guiding Principles

Lawfulness, fairness, transparency, accountability, and human oversight. These principles anchor every rule in the policy and provide the reasoning behind restrictions.

NIST GOVERN 1.1

4. Governance Structure

Oversight council composition, operational governance office, system owner responsibilities, and decision authority levels. Who approves what, and who can say no.

ISO 42001 Cl. 5.3

5. Usage Rules

Permitted uses by risk tier, explicitly prohibited activities, data governance requirements, and restrictions on public AI tools. This is where shadow AI prevention lives.

EU AI Act Art. 5

6. Processes

Intake and registration workflow, risk assessment methodology, approval gates, ongoing monitoring requirements, and incident management procedures.

NIST MAP / MEASURE

7. Documentation

System inventories, risk assessment records, decision logs, model documentation standards, and audit trail requirements. If it is not documented, it did not happen.

EU AI Act Art. 11

8. Enforcement

Violation categories, escalation procedures, consequences by severity, reporting mechanisms, and whistleblower protections. A policy without enforcement is a suggestion.

ISO 42001 Cl. 10.1

Sample Policy Language

These are starting points for your own policy language, not legal advice. Customize for your organizational context and have legal counsel review before adoption.

Data Protection EU AI Act Art. 10

“Personnel are strictly prohibited from inputting any data classified as Confidential, Restricted, or containing personally identifiable information (PII) into publicly available AI tools, including but not limited to ChatGPT, Google Gemini, Claude, and Copilot consumer editions. All AI-processed data must remain within approved organizational systems that meet our data classification and handling standards.”
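A clause like this can be enforced in tooling, for example in a browser extension or a data loss prevention hook. The sketch below is one hedged way to express the rule. The function name, the lowercase classification labels, and the assumption that an approved tool may receive any classification are illustrative choices, not part of the sample clause.

```python
# Classification labels the sample clause bars from public AI tools.
BARRED_CLASSIFICATIONS = {"confidential", "restricted"}


def may_enter_into_tool(classification: str, contains_pii: bool,
                        tool_is_approved: bool) -> bool:
    """Return True if data may be entered into the given AI tool."""
    if tool_is_approved:
        # Assumption: approved tools were already vetted against the
        # organization's data classification and handling standards.
        return True
    if contains_pii:
        # PII is barred from public tools regardless of its label.
        return False
    return classification.strip().casefold() not in BARRED_CLASSIFICATIONS


print(may_enter_into_tool("Confidential", False, tool_is_approved=False))
print(may_enter_into_tool("Public", False, tool_is_approved=False))
```

In practice the PII flag would come from a detection step, which is out of scope for this sketch.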

Decision-Making Restrictions EU AI Act Art. 14

“AI systems may not be used to make final decisions regarding employment, credit, housing, healthcare, or legal matters without meaningful human review by a qualified individual. All Medium and High Risk AI systems involved in consequential decisions must include documented human-in-the-loop controls with override capabilities.”

Bias Prevention ISO 42001 Cl. 8.4

“All AI systems classified as Medium or High Risk must undergo fairness evaluation testing across relevant demographic categories before deployment and on a recurring quarterly basis. Testing results, including any identified disparate impact, must be documented and reported to the AI Governance Office within 10 business days of completion.”

Shadow AI Prevention NIST GOVERN 1.6

“All AI tools, services, and applications must be registered in the organization’s AI System Inventory prior to use. Use of unregistered AI tools, including personal accounts on public AI services for work-related tasks, constitutes a policy violation. The IT Security team will conduct quarterly scans to identify unauthorized AI tool usage across organizational networks.”
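The quarterly scan described in this clause comes down to comparing what is observed on the network against the AI System Inventory. A minimal sketch, assuming both lists are available as plain tool names (the function name and the name normalization are hypothetical):

```python
from typing import Iterable, List


def find_unregistered_tools(observed: Iterable[str],
                            registry: Iterable[str]) -> List[str]:
    """Return observed AI tools that are missing from the inventory.

    Names are compared case-insensitively with surrounding whitespace
    removed, and the result is a sorted list of normalized names.
    """
    registered = {name.strip().casefold() for name in registry}
    seen = {name.strip().casefold() for name in observed}
    return sorted(seen - registered)


observed_tools = ["ChatGPT", "chatgpt ", "Internal Summarizer", "Gemini"]
inventory = ["internal summarizer"]
print(find_unregistered_tools(observed_tools, inventory))
```

Real discovery data (proxy logs, SSO records, expense reports) needs more careful matching than a name comparison, so treat this as the shape of the check rather than a detection method.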

Incident Reporting EU AI Act Art. 73

“Any employee who discovers or suspects an AI system malfunction, bias incident, data breach, or safety concern must report it to the AI Governance Office within 24 hours using the designated incident reporting channel. For high-risk AI systems, the organization must notify the relevant market surveillance authority within the timelines specified by the EU AI Act: 2 days for critical infrastructure disruption, 10 days for incidents involving death, and 15 days for all other serious incidents.”
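The reporting timelines in this clause can be encoded so that the incident channel shows a due date the moment a report is filed. The sketch below assumes the windows are counted in calendar days from the date the organization becomes aware of the incident. Confirm that reading, and the incident categories, with legal counsel. All names are hypothetical.

```python
from datetime import date, datetime, timedelta
from enum import Enum


class SeriousIncident(Enum):
    """Authority-notification windows in days, as quoted in the clause."""
    CRITICAL_INFRASTRUCTURE = 2
    DEATH = 10
    OTHER = 15


def authority_deadline(aware_on: date, kind: SeriousIncident) -> date:
    """Latest date to notify the market surveillance authority.

    Assumption: calendar days counted from the awareness date.
    """
    return aware_on + timedelta(days=kind.value)


def internal_deadline(discovered_at: datetime) -> datetime:
    """Internal report to the AI Governance Office is due within 24 hours."""
    return discovered_at + timedelta(hours=24)


print(authority_deadline(date(2025, 3, 1), SeriousIncident.DEATH))
print(internal_deadline(datetime(2025, 3, 1, 9, 30)))
```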

These five examples cover the most common policy provisions, but a production AUP typically includes 15 to 20 specific policy statements across all 8 essential sections. The key principle: be specific enough to be enforceable, but flexible enough to accommodate different risk levels and use cases. Vague policies create loopholes. Overly rigid policies get ignored.

Risk Classification Framework

Not every AI system needs the same level of governance. This four-tier framework ensures proportionate controls: minimal friction for low-risk tools, maximum scrutiny for high-risk systems, and an outright ban on unacceptable applications.
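One way to make "proportionate controls" concrete is a lookup from tier to required controls. The sketch below draws its control names from requirements stated elsewhere in this guide (inventory registration, impact assessments, fairness testing, human review). Treating Medium and High identically is a simplifying assumption, and all identifiers are hypothetical.

```python
from enum import Enum
from typing import List


class RiskTier(Enum):
    LOW = "Low"
    MEDIUM = "Medium"
    HIGH = "High"
    UNACCEPTABLE = "Unacceptable"


# Every AI tool must be registered before use, whatever its tier.
BASELINE_CONTROLS = ["register in AI System Inventory"]

# Assumption: Medium and High share one control set in this sketch.
ELEVATED_CONTROLS = BASELINE_CONTROLS + [
    "impact assessment",
    "fairness testing before deployment and quarterly",
    "human review with override for consequential decisions",
]


def required_controls(tier: RiskTier) -> List[str]:
    """Return the controls a system must satisfy for its tier."""
    if tier is RiskTier.UNACCEPTABLE:
        raise PermissionError("Unacceptable-tier applications are banned")
    if tier is RiskTier.LOW:
        return list(BASELINE_CONTROLS)
    return list(ELEVATED_CONTROLS)


print(required_controls(RiskTier.LOW))
print(required_controls(RiskTier.HIGH))
```

Raising an error for the Unacceptable tier, rather than returning an empty list, keeps a banned application from being mistaken for one with no requirements.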

AI Tool Classification: Common Examples

Use this table as a starting reference when classifying AI tools in your organization. Your specific context, data sensitivity, and intended use cases may shift a tool into a different tier.

Decision Framework: Questions Every AI System Must Answer

Before any AI system enters your environment, it should answer these questions. Use them as intake criteria for new AI projects and procurement requests.

Data Sensitivity

Does this AI process confidential, personal, or proprietary data? What classification levels does the data fall under? Can data be anonymized before processing?

Decision Impact

Can this AI’s outputs directly affect individuals’ rights, opportunities, or access to services? Are the decisions reversible? What is the blast radius of an incorrect output?

Human Oversight

Is there meaningful human review in the decision loop? Can a qualified person override, correct, or reverse AI-generated outcomes? How quickly can a kill switch be activated?

Regulatory Exposure

Does this AI system fall under EU AI Act high-risk categories? Does it process data subject to GDPR, HIPAA, or sector-specific regulations? What compliance evidence must be maintained?

Bias & Fairness

Could this system produce different outcomes for different demographic groups? What testing has been done? What ongoing monitoring is in place to detect drift or emerging bias?

Vendor & Supply Chain

If third-party AI, what are the vendor’s data handling practices? Where is data processed geographically? What contractual protections exist for data security and model changes?

These questions feed directly into two formal processes: intake forms (structured registration capturing business problem, data sources, sensitivity levels, users, and risk score) and impact assessments (deep analysis for medium and high-risk systems covering stakeholder impacts, fairness evaluation, privacy implications, and specific mitigation strategies). Both processes should be mandatory before any AI system moves to production.
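The intake form and the production gate can be sketched as a small record type plus one check. The field names mirror the items listed above. Expressing the risk score as a tier label, and the gate logic itself, are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class IntakeRecord:
    business_problem: str
    data_sources: List[str]
    sensitivity_levels: List[str]
    users: List[str]
    risk_tier: str  # assumption: risk score expressed as a tier label
    impact_assessment_complete: bool = False


def ready_for_production(record: IntakeRecord) -> bool:
    """Gate: complete intake, plus an impact assessment for Medium/High."""
    fields_complete = all([
        record.business_problem.strip(),
        record.data_sources,
        record.sensitivity_levels,
        record.users,
    ])
    if not fields_complete:
        return False
    tier = record.risk_tier.strip().casefold()
    if tier in {"medium", "high"}:
        return record.impact_assessment_complete
    # Only Low passes without an assessment; Unacceptable or unknown
    # tiers never pass the gate.
    return tier == "low"


screener = IntakeRecord(
    business_problem="Rank inbound job applications",
    data_sources=["applicant tracking system"],
    sensitivity_levels=["Confidential"],
    users=["HR recruiters"],
    risk_tier="High",
)
print(ready_for_production(screener))
```

With the impact assessment still outstanding, the example record does not pass the gate.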

Governance Roles: Who Owns What

Clear accountability prevents governance gaps. Every organization needs these four layers of AI oversight, scaled to fit your size and complexity.

AI Oversight Council

Senior leadership body responsible for strategic direction, policy approval, and high-risk system decisions. Typically includes CTO, CISO, General Counsel, Chief Ethics Officer, and business unit leaders.

ISO 42001 Cl. 5.1

AI Governance Office

Operational team handling daily governance: intake reviews, risk assessments, inventory management, compliance monitoring, training coordination, and incident triage. The engine that makes the policy work.

NIST GOVERN 2.1

AI System Owners

Named individuals (not teams) accountable for specific AI systems throughout their lifecycle. Responsible for documentation, risk management, performance monitoring, and compliance with policy requirements for their assigned systems.

EU AI Act Art. 26

All Personnel

Every employee and contractor bears responsibility for following the AUP, completing required training, reporting policy violations or incidents, and using only approved AI tools. The policy applies equally at every level.

NIST GOVERN 2.2

For detailed guidance on building and staffing your AI governance committee, including RACI matrices, meeting cadences, and authority cascades, see our AI Governance Committee Hub and the 8-Stage Implementation Guide.

Implementation: 90-Day Rollout

Your policy is approved. Now you need people to actually follow it. A structured 90-day rollout turns a document into an operational reality.

- Executive announcement from the sponsor to all staff, with mandatory acknowledgment

- Mandatory training sessions covering policy scope, risk tiers, and what changes for each role

- Q&A sessions per department to address concerns and edge cases

- AI intake forms become mandatory for all new AI project initiation

- Policy portal launched with templates, decision trees, and FAQs

- Impact assessments required for all Medium and High Risk projects in the pipeline

- Weekly office hours from the governance team for implementation support

- Department-specific guidance documents published for Engineering, Marketing, HR, and Legal

- Shadow AI discovery sweep to identify unapproved tools already in use

- Remediation plans created for legacy AI systems that predate the policy

- First submission analysis reviewing all intake requests for patterns and bottlenecks

- Early adopter survey collecting feedback on process friction and policy clarity

- Template and training updates based on real-world implementation experience

- Governance dashboard published with compliance metrics, submission counts, and risk distribution

- First governance report delivered to executive sponsor and steering committee

Measuring Success: Governance KPIs

A policy without metrics is just a suggestion. Track these indicators quarterly to prove your AUP is working and to identify areas that need attention.
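Two of the indicators in this section, Policy Coverage Rate and Training Completion, are plain percentages. The sketch below shows one way to compute them and compare against the stated targets. Defining coverage as registered systems divided by registered plus discovered shadow AI instances is an interpretation, and the function names are hypothetical.

```python
def percentage(part: int, whole: int) -> float:
    """Return part/whole as a percentage; reject an empty denominator."""
    if whole <= 0:
        raise ValueError("whole must be positive")
    return 100 * part / whole


def policy_coverage_rate(registered: int, shadow_discovered: int) -> float:
    """Assumed definition: registered / (registered + shadow AI found)."""
    return percentage(registered, registered + shadow_discovered)


def training_completion_rate(completed: int, in_scope: int) -> float:
    return percentage(completed, in_scope)


coverage = policy_coverage_rate(registered=45, shadow_discovered=5)
training = training_completion_rate(completed=190, in_scope=200)
print(f"Coverage {coverage:.1f}% (target 100%): met={coverage >= 100}")
print(f"Training {training:.1f}% (target 95%): met={training >= 95}")
```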

Policy Coverage Rate

Percentage of AI systems in your inventory covered by the AUP. Target: 100% within 90 days of rollout. Track registered vs. discovered shadow AI instances.

NIST GOVERN 1.6

Training Completion

Percentage of in-scope employees who have completed mandatory AI AUP training. Target: 95%+ by end of Phase 2. Break down by department and role level.

NIST GOVERN 2.2

Incident Response Time

Average time from AI incident detection to documented response. Track against EU AI Act Art. 73 reporting timelines: 2 days for critical infrastructure, 10 days for death, 15 days for other serious incidents.

EU AI Act Art. 73

Risk Assessment Completion

Percentage of medium and high-risk AI systems with completed impact assessments. Target: 100% for high-risk within 30 days of registration, 60 days for medium-risk.

ISO 42001 Cl. 6.1

Policy Violation Rate

Number of documented AUP violations per quarter. Track by severity (critical, major, minor), department, and violation type. Downward trend indicates policy adoption.

ISO 42001 Cl. 10.1

Stakeholder Satisfaction

Quarterly survey score from AI system owners and business unit leaders on governance process friction, clarity, and support. Governance should enable innovation, not block it.

NIST GOVERN 1.4

Leaders Setting the Standard

These organizations are not waiting for regulation to force their hand. They are publishing policies, achieving certifications, and setting the bar your competitors will be measured against.

Amazon Web Services

First major cloud provider to achieve ISO/IEC 42001 certification for Amazon Bedrock. Published responsible AI policy covering model evaluation, data governance, and customer-facing transparency obligations.

Microsoft

Responsible AI Standard with mandatory impact assessments for all AI products. Embedded governance gates into the product development lifecycle. Published guidelines covering fairness, reliability, safety, and inclusiveness.

Google

AI Principles with explicit prohibited applications, including weapons, surveillance violating norms, and technologies that cause overall harm. Internal review boards evaluate projects against these principles before launch.

Salesforce

Specific restrictions on automated decisions with legal or similarly significant effects. Published “Trusted AI Principles” covering accountability, transparency, empowerment, and inclusivity for Einstein AI products.

US Department of Homeland Security

Published a federal framework for safe and responsible AI adoption across DHS components. Includes mandatory AI use case inventories, risk assessments, and human oversight requirements for rights-impacting AI.

Everything you need to build, operationalize, and maintain your AI Acceptable Use Policy.

All six free tools in one download. Checklist, decision tree, regulatory mapping, tracker, board summary, and more.

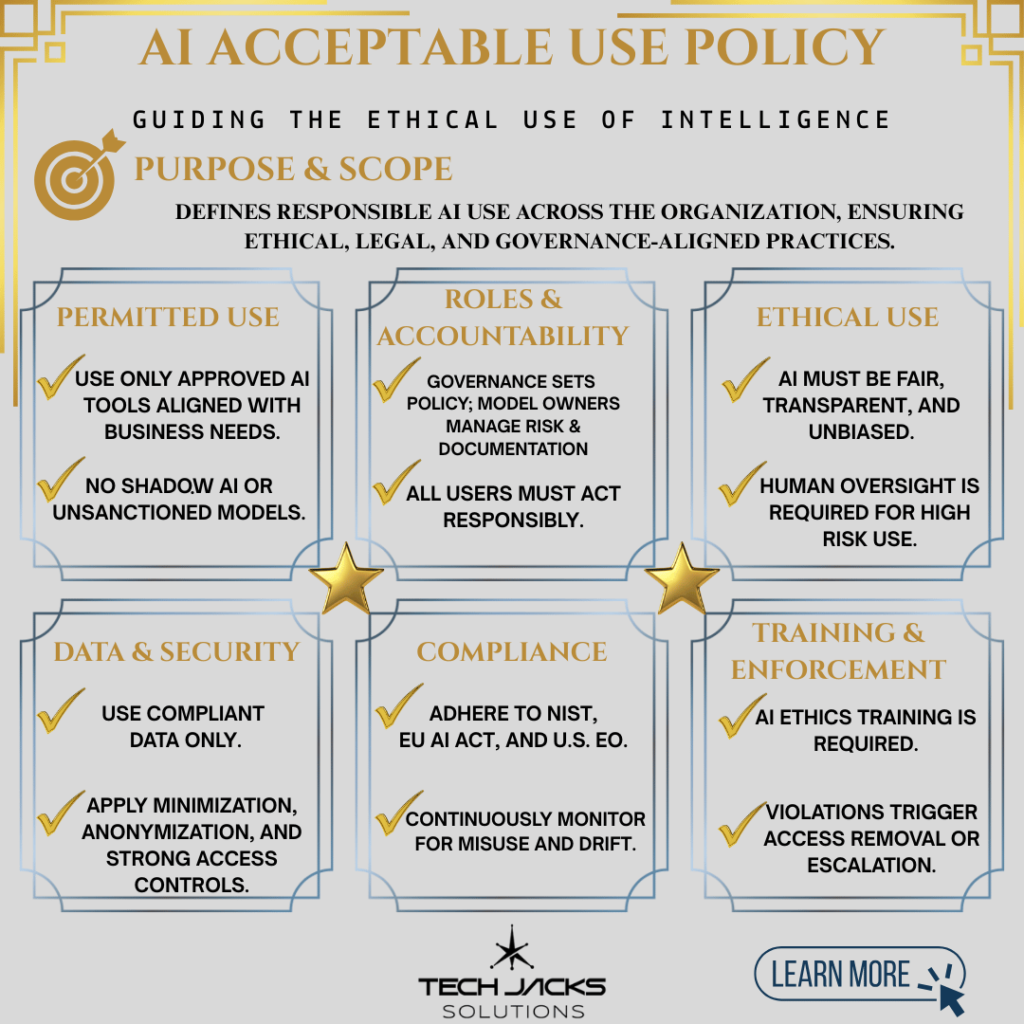

Download the Free Bundle →AI Acceptable Use Policy at a Glance

Save or share this infographic as a quick reference for your team.
