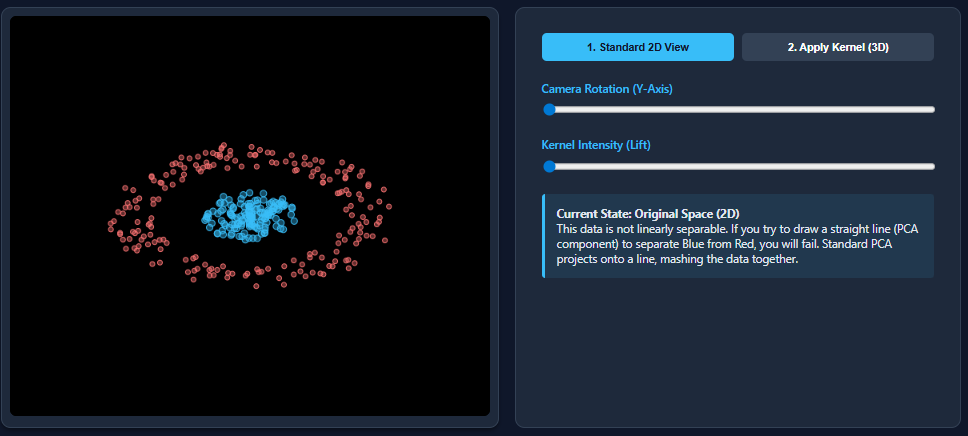

Dimensionality reduction techniques like PCA work well when the structure in a dataset is linear, but they break down once nonlinear patterns appear. That’s exactly what happens with datasets such as two moons: PCA can only rotate and project the data linearly, so it flattens the structure and leaves the two classes mixed together. Kernel PCA addresses this limitation by implicitly mapping the data into a higher-dimensional feature space where nonlinear
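To make the contrast concrete, here is a minimal sketch comparing plain PCA with kernel PCA on the two moons dataset using scikit-learn. It is not the original post's code: the sample count, noise level, RBF kernel, `gamma=15`, and the `linear_accuracy` helper are all assumptions chosen for illustration, and the kernel width in particular usually needs tuning per dataset.

```python
from sklearn.datasets import make_moons
from sklearn.decomposition import PCA, KernelPCA
from sklearn.linear_model import LogisticRegression

# Two interleaved half-circles: a classic nonlinear toy dataset.
# n_samples, noise, and random_state are illustrative assumptions.
X, y = make_moons(n_samples=200, noise=0.02, random_state=0)

# Linear PCA: with 2 components on 2-D data this is only a centering + rotation.
X_pca = PCA(n_components=2).fit_transform(X)

# Kernel PCA with an RBF kernel; gamma=15 is an assumed value, not a tuned one.
X_kpca = KernelPCA(n_components=2, kernel="rbf", gamma=15).fit_transform(X)


def linear_accuracy(Z, labels):
    """Hypothetical helper: training accuracy of a linear classifier on Z.

    Used here only as a rough proxy for how linearly separable
    the projected classes are.
    """
    clf = LogisticRegression(C=100.0, max_iter=1000).fit(Z, labels)
    return clf.score(Z, labels)


pca_acc = linear_accuracy(X_pca, y)
kpca_acc = linear_accuracy(X_kpca, y)

print(f"Linear classifier accuracy after PCA:        {pca_acc:.3f}")
print(f"Linear classifier accuracy after kernel PCA: {kpca_acc:.3f}")
```

If the kernel PCA projection is doing its job, a linear classifier should score noticeably higher on `X_kpca` than on `X_pca`. Scatter-plotting both projections colored by `y` is a quick visual check of the same thing.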

The post Kernel Principal Component Analysis (PCA): Explained with an Example appeared first on MarkTechPost.