Why professionals trust our templates

- 270+ downloads by governance & compliance teams

- Built by AI governance practitioners, not generic template mills

- 14-day money-back guarantee • Instant download • Secure checkout via Stripe

Comprehensive Agentic AI Compliance Assessment and Governance Template

A structured framework designed to support systematic evaluation of autonomous AI systems across regulatory, safety, and governance requirements.

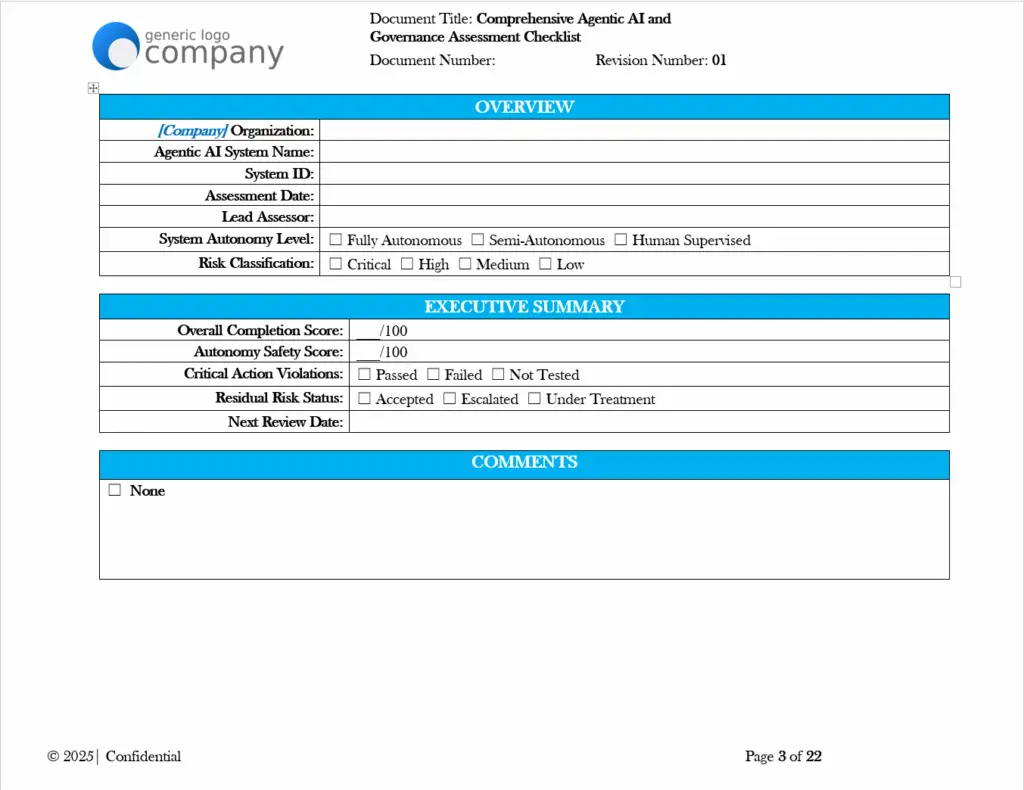

This assessment checklist template provides organizations with a structured approach to evaluating agentic AI systems for compliance and governance readiness. The template includes 15+ comprehensive sections covering regulatory mapping, risk assessment, human oversight mechanisms, testing protocols, and accountability frameworks. Organizations should expect to customize this template to their specific AI systems, operational contexts, and applicable regulatory requirements before use.

The checklist format supports systematic documentation of compliance status, evidence collection requirements, and gap identification across multiple regulatory frameworks. Professional review by qualified compliance and legal personnel is recommended before implementation.

Key Benefits

✓ Provides structured assessment framework covering regulatory mapping for GDPR, EU AI Act, NIST AI RMF, and ISO/IEC 42001 requirements

✓ Includes guidance on autonomy-specific controls with sections for action scope definition, guardrails, and kill-switch mechanisms

✓ Supports risk documentation with taxonomy covering reward hacking, scope creep, resource exhaustion, and multi-agent coordination risks

✓ Features human oversight tracking with human-in-the-loop (HITL), human-on-the-loop (HOTL), and human-out-of-the-loop (HOOTL) classification options and intervention metrics

✓ Contains testing and red-teaming sections for adversarial testing, prompt injection, and jailbreak attempt documentation

✓ Offers KPI tracking frameworks with suggested metrics for behavioral drift, intervention ratios, and compliance scores

✓ Includes sign-off and accountability structures for technical, compliance, executive, and board-level review

Who Uses This?

This template is designed for:

- AI Governance Teams establishing compliance documentation for autonomous systems

- Chief AI Officers and Risk Officers requiring structured assessment frameworks

- Compliance Officers mapping agentic AI systems to regulatory requirements

- Security Architects evaluating autonomous interface controls and vulnerabilities

- Legal and Ethics Committees documenting oversight and accountability structures

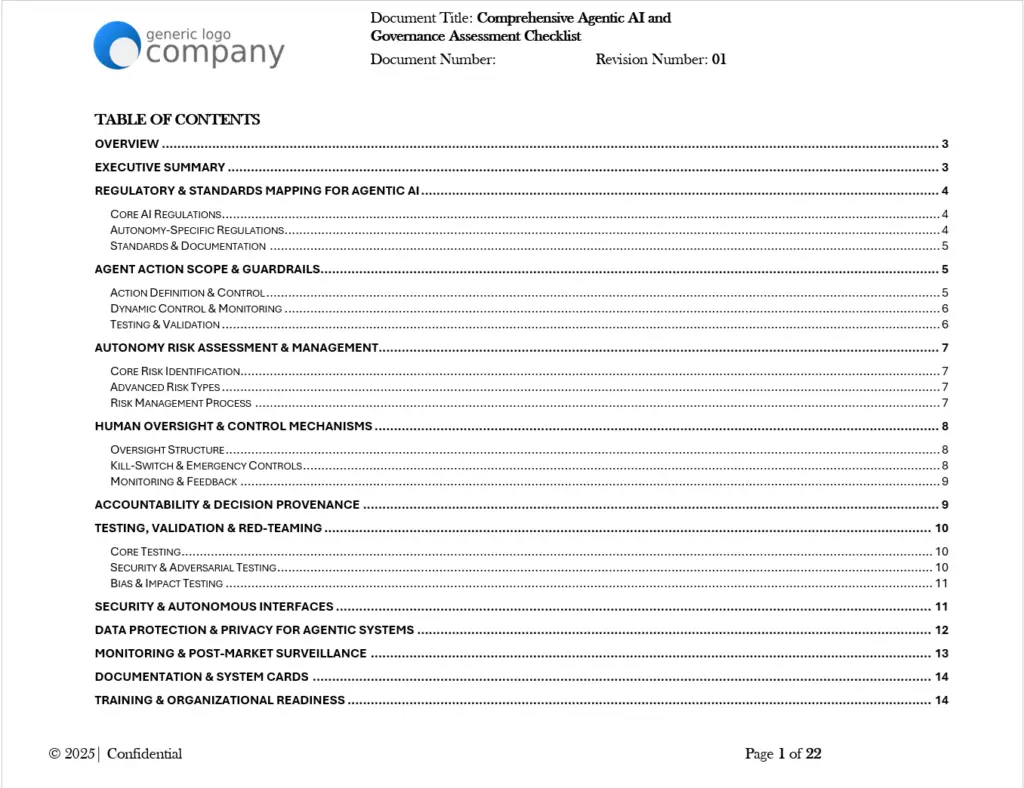

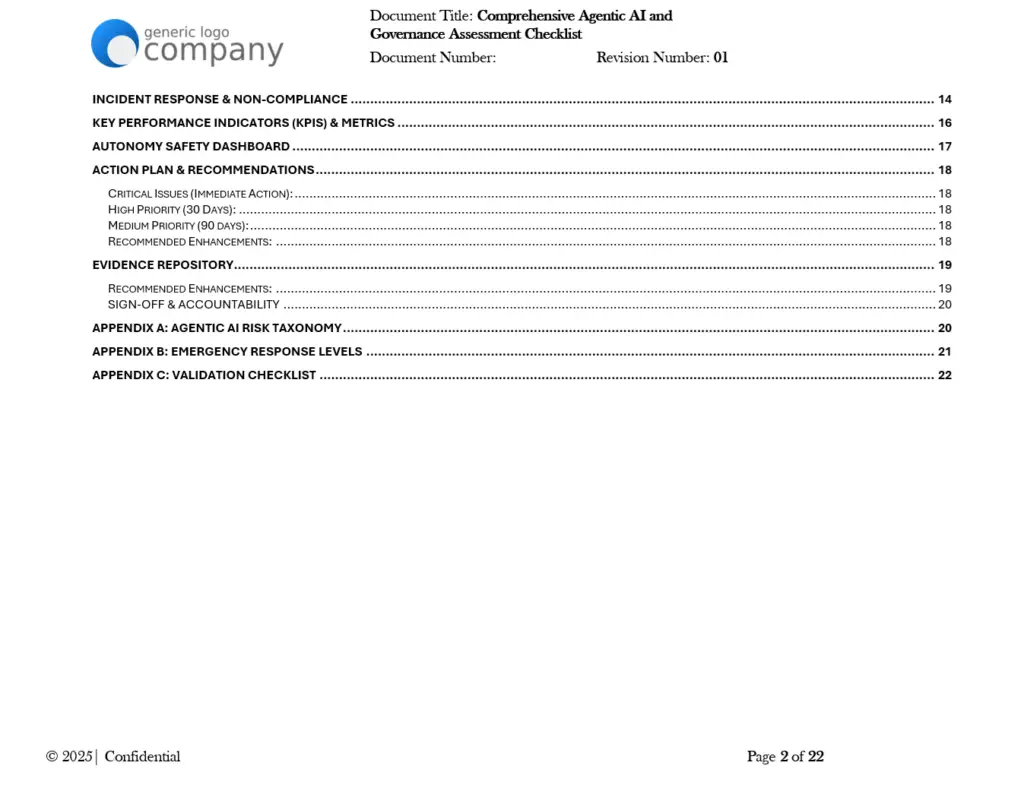

Preview: What’s Included

The template contains the following sections with assessment items, compliance status checkboxes, evidence requirements, and status tracking fields:

- Overview and Executive Summary scoring

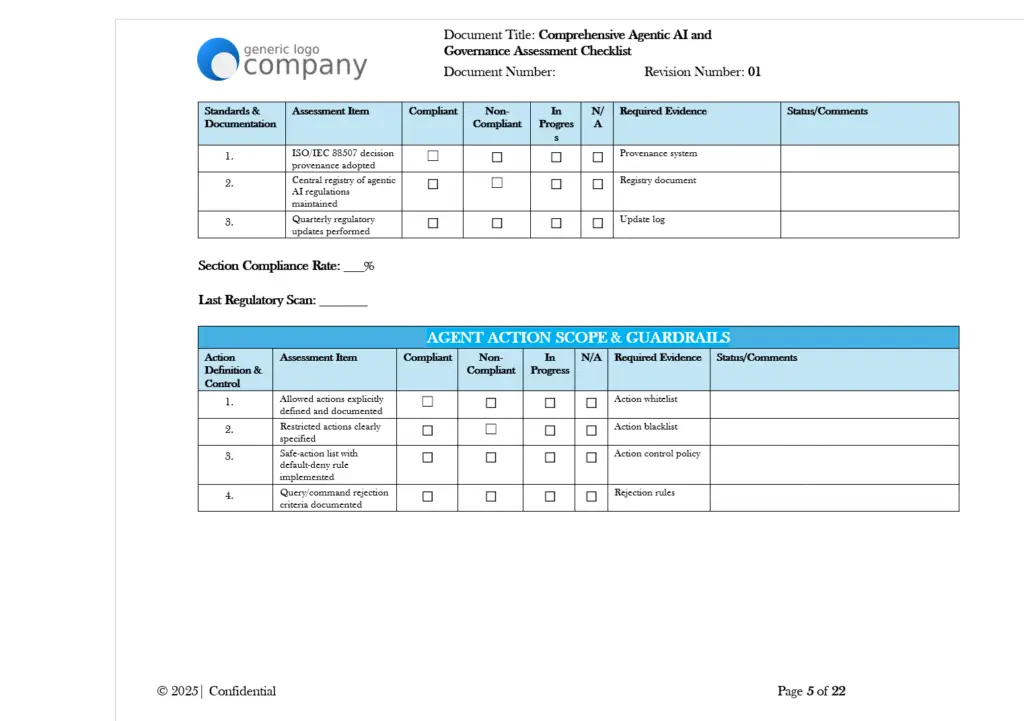

- Regulatory and Standards Mapping (Core AI Regulations, Autonomy-Specific Regulations, Standards and Documentation)

- Agent Action Scope and Guardrails

- Autonomy Risk Assessment and Management

- Human Oversight and Control Mechanisms

- Accountability and Decision Provenance

- Testing, Validation, and Red-Teaming

- Security and Autonomous Interfaces

- Data Protection and Privacy for Agentic Systems

- Monitoring and Post-Market Surveillance

- Documentation and System Cards

- Training and Organizational Readiness

- Incident Response and Non-Compliance

- KPIs and Metrics Dashboard

- Autonomy Safety Dashboard with Risk Heat Map

- Action Plan and Recommendations

- Evidence Repository Checklist

- Sign-off and Accountability Tables

- Appendix A: Agentic AI Risk Taxonomy

- Appendix B: Emergency Response Levels

- Appendix C: Validation Checklist

Why This Matters

Organizations deploying agentic AI systems face increasing regulatory scrutiny and operational risk. The EU AI Act establishes specific requirements for high-risk AI systems, including those with autonomous decision-making capabilities. NIST’s AI Risk Management Framework provides voluntary guidance for AI governance, while ISO/IEC 42001 establishes requirements for AI management systems.

Agentic AI systems present unique governance challenges beyond traditional AI applications. These systems can take autonomous actions, interact with external tools and APIs, and potentially modify their own behavior over time. Without structured assessment frameworks, organizations may struggle to document their governance approach, identify compliance gaps, or demonstrate due diligence to regulators and stakeholders.

This template provides a starting point for organizations developing their agentic AI governance programs. It does not guarantee compliance with any specific regulation or standard, and organizations should work with qualified legal and compliance professionals to develop programs appropriate to their specific circumstances.

Framework Alignment

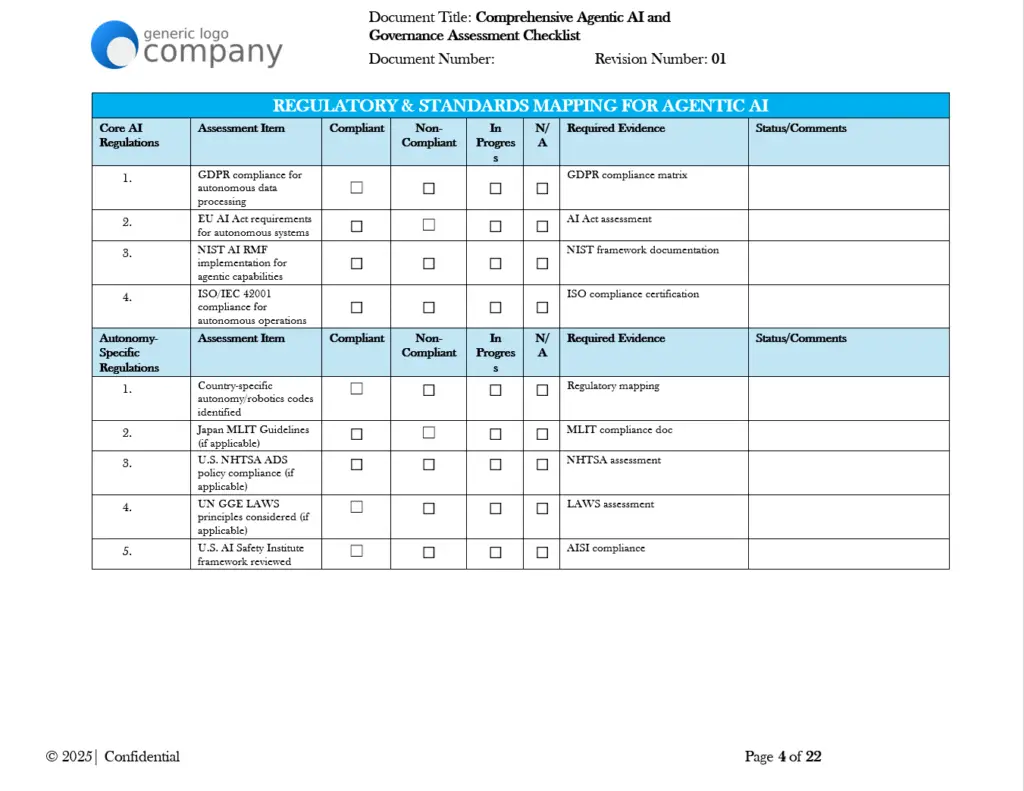

The template includes assessment items mapped to the following frameworks and standards, each of which is explicitly referenced in the template:

Core AI Regulations:

- GDPR compliance for autonomous data processing

- EU AI Act requirements for autonomous systems

- NIST AI RMF implementation for agentic capabilities

- ISO/IEC 42001 compliance for autonomous operations

Autonomy-Specific Regulations:

- Country-specific autonomy/robotics codes identification

- Japan MLIT Guidelines (where applicable)

- U.S. NHTSA ADS policy compliance (where applicable)

- UN GGE LAWS principles consideration (where applicable)

- U.S. AI Safety Institute framework review

Standards and Documentation:

- ISO/IEC 38507 decision provenance

- ISO/IEC 23053 (framework for AI systems using machine learning)

- ISO/IEC 23894 (AI risk management)

- IEEE Standards for Autonomous Systems

Key Features

Regulatory Mapping Section

- Assessment items for each applicable regulation

- Compliance status tracking (Compliant, Non-Compliant, In Progress, N/A)

- Required evidence documentation fields

- Status and comments columns for notes
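As an illustration of how the four status values could be rolled up into a single figure, here is a minimal Python sketch. The `Status` enum and `percent_compliant` function are hypothetical names, and the choice to exclude N/A items from the denominator is an assumption, not something the template prescribes.

```python
from enum import Enum


class Status(Enum):
    """The four status values offered in the regulatory mapping section."""
    COMPLIANT = "Compliant"
    NON_COMPLIANT = "Non-Compliant"
    IN_PROGRESS = "In Progress"
    NOT_APPLICABLE = "N/A"


def percent_compliant(statuses):
    """Share of applicable items marked Compliant, as a percentage.

    Assumption: N/A items are left out of the denominator.
    Returns None when no item is applicable.
    """
    applicable = [s for s in statuses if s is not Status.NOT_APPLICABLE]
    if not applicable:
        return None
    compliant = sum(1 for s in applicable if s is Status.COMPLIANT)
    return 100.0 * compliant / len(applicable)


# Hypothetical assessment of five items, one of them not applicable.
items = [
    Status.COMPLIANT,
    Status.COMPLIANT,
    Status.NON_COMPLIANT,
    Status.IN_PROGRESS,
    Status.NOT_APPLICABLE,
]
score = percent_compliant(items)
```

Organizations may prefer a different roll-up, for example weighting items by risk level.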

Agent Action Scope and Guardrails

- Action whitelist and blacklist documentation

- Safe-action list with default-deny rule implementation

- Query/command rejection criteria

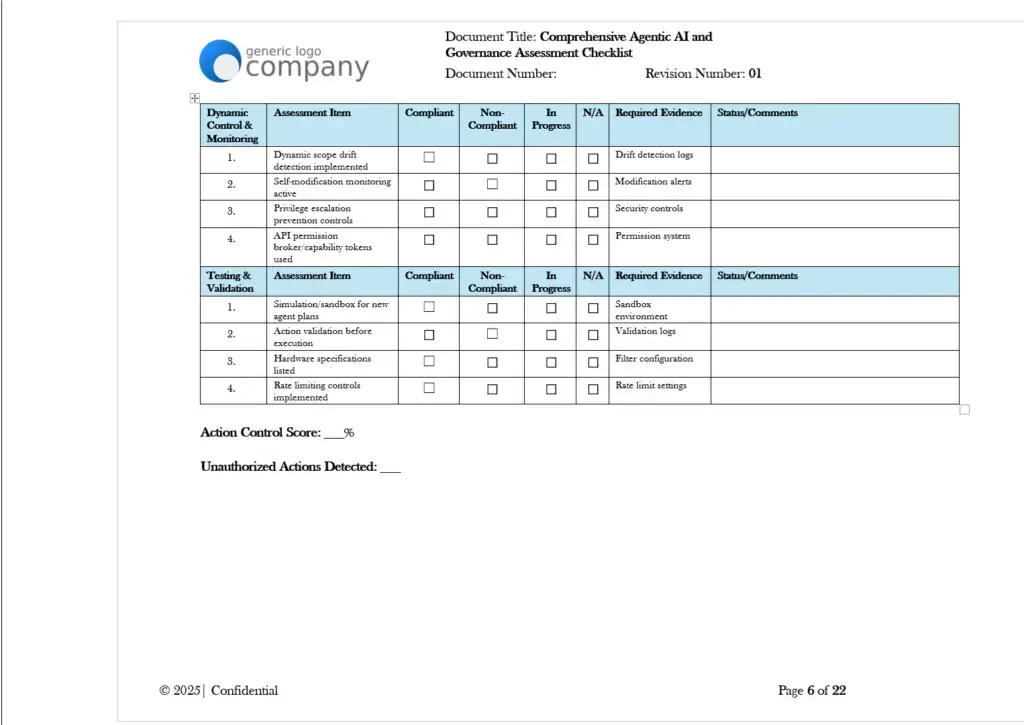

- Dynamic scope drift detection tracking

- Self-modification monitoring

- Privilege escalation prevention controls

- API permission broker/capability token tracking
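For teams documenting a safe-action list with a default-deny rule, the following Python sketch shows one way the rule might behave in practice. The `ActionPolicy` class and the action names are hypothetical and are not taken from the template.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ActionPolicy:
    """Hypothetical policy object; names and fields are illustrative only."""
    safe_actions: frozenset = field(default_factory=frozenset)
    blocked_actions: frozenset = field(default_factory=frozenset)

    def is_allowed(self, action: str) -> bool:
        # An explicit block always wins, even if the action is also listed as safe.
        if action in self.blocked_actions:
            return False
        # Default-deny: anything not explicitly listed as safe is rejected.
        return action in self.safe_actions


policy = ActionPolicy(
    safe_actions=frozenset({"read_ticket", "draft_reply"}),
    blocked_actions=frozenset({"delete_records"}),
)
```

Under this sketch, an action that appears on neither list (for example `send_payment`) is rejected, which is the behavior a default-deny rule is meant to document.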

Autonomy Risk Assessment

- Core risk identification framework

- Advanced risk types including emergent behaviors, goal misalignment, and cascading failures

- Risk management process documentation with treatment plans and residual risk acceptance

Human Oversight Mechanisms

- HITL/HOTL/HOOTL classification options

- Override mechanism testing and validation

- Kill-switch implementation verification (including out-of-band and independent controls)

- Graded response levels definition

- Fallback procedure documentation
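To make the oversight classification concrete, here is a small Python sketch of how an oversight mode and a kill switch might gate an agent action. The `OversightMode` enum and `may_execute` function are hypothetical, and the gating logic is an assumed simplification of what an organization would actually implement.

```python
from enum import Enum


class OversightMode(Enum):
    HITL = "human-in-the-loop"       # a human approves each action beforehand
    HOTL = "human-on-the-loop"       # a human monitors and can intervene
    HOOTL = "human-out-of-the-loop"  # no human involvement during operation


def may_execute(mode, human_approved, kill_switch_engaged):
    """Return True if the agent may proceed with an action."""
    # An engaged kill switch halts every action regardless of oversight mode.
    if kill_switch_engaged:
        return False
    # Only HITL requires explicit approval before each action.
    if mode is OversightMode.HITL:
        return human_approved
    return True
```

A kill-switch verification test could then assert that `may_execute` returns `False` in every mode once the switch is engaged.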

Accountability and Decision Provenance

- Automatic logging of agent decisions/actions

- Reasoning/data capture behind actions

- Chain-of-thought/intermediate reasoning storage

- Decision provenance graph implementation

- RACI matrix for accountability roles

- Forensic review capability maintenance
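One way to support forensic review of logged decisions is to make the log tamper-evident. The Python sketch below chains each entry to the previous one with a SHA-256 hash. Hash chaining is an assumption chosen for illustration, not a technique the template specifies, and `append_entry` and `verify_chain` are hypothetical helpers.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry


def _digest(body):
    # Serialize with sorted keys so the same content always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log, action, reasoning):
    """Append a decision record linked to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"action": action, "reasoning": reasoning, "prev_hash": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log):
    """Return True if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: entry[k] for k in ("action", "reasoning", "prev_hash")}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_entry(log, "draft_reply", "customer asked for order status")
append_entry(log, "read_ticket", "needed ticket history for context")
```

Editing any stored field after the fact causes `verify_chain` to return `False`, which gives reviewers a simple integrity check.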

Testing and Red-Teaming

- Core performance testing in intended environments

- Edge case and stress testing

- Continuous automated red-teaming

- Prompt injection testing

- Jailbreak attempt testing

- Tool-calling abuse scenario testing

- Digital twin adversarial simulations

Security and Interfaces

- Access controls based on agent scope

- API permission broker implementation

- Capability tokens for downstream APIs

- Memory sandboxing for credential leakage prevention

- Secure development practices documentation

- Vulnerability scanning

- Supply chain security (SBOM-AI)

Data Protection and Privacy

- DPA compliance for agent actions

- Privacy Impact Assessments for agent applications

- Consent management for agent data collection

- Data minimization in agent operations

- Data subject rights compliance

Monitoring and Surveillance

- Continuous performance monitoring

- Behavior anomaly detection

- Model behavior registry updates

- Alignment goals vs. behavior evaluation

- Novel behavior cataloging

- KPI/KRI tracking dashboards

- External audit documentation

Documentation and System Cards

- System Card maintenance requirements

- Tool calling permissions documentation

- Prompt template cataloging

- Alignment strategy documentation

- Red-team findings inclusion

- Deployment constraints specification

- Technical file for high-risk systems

- User guides for agent interaction

- SBOM-AI maintenance

Incident Response

- Agent-specific incident response plan

- Graded response levels implementation

- Violation reporting procedures

- Time to resolve tracking

- Kill-switch activation criteria

- Post-incident review process

KPIs and Metrics

Suggested metrics include:

- Percent agentic systems compliant

- Mean time between unsafe actions (MTBUA)

- Intervention ratio (human override percentage)

- Kill-switch test success rate

- Unauthorized action attempts

- Behavioral drift rate

- Red-team penetration rate

- Compliance audit score
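Several of these metrics reduce to simple ratios. The Python sketch below shows one plausible way to compute three of them. The formulas and function names are assumptions for illustration, and organizations should define each metric according to their own policies.

```python
def intervention_ratio(human_overrides, total_actions):
    """Percentage of agent actions that a human overrode."""
    if total_actions == 0:
        return 0.0
    return 100.0 * human_overrides / total_actions


def mean_time_between_unsafe_actions(operating_hours, unsafe_actions):
    """Average operating hours per unsafe action; None if none occurred."""
    if unsafe_actions == 0:
        return None
    return operating_hours / unsafe_actions


def kill_switch_success_rate(successful_tests, total_tests):
    """Percentage of kill-switch tests that halted the agent as intended."""
    if total_tests == 0:
        return None
    return 100.0 * successful_tests / total_tests


# Hypothetical monthly figures, not real data.
ratio = intervention_ratio(human_overrides=5, total_actions=200)
hours = mean_time_between_unsafe_actions(operating_hours=720, unsafe_actions=3)
rate = kill_switch_success_rate(successful_tests=9, total_tests=10)
```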

Autonomy Safety Dashboard

- System behavior metrics with alert thresholds

- Risk heat map for reward hacking, resource exhaustion, scope creep, multi-agent collusion, and manipulation risk
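A risk heat map is often built from likelihood and impact ratings. The Python sketch below assumes a 1-to-5 scale for each and hypothetical band thresholds; the template itself may use a different scoring scheme.

```python
RISK_CATEGORIES = [
    "reward hacking",
    "resource exhaustion",
    "scope creep",
    "multi-agent collusion",
    "manipulation risk",
]


def heat_level(likelihood, impact):
    """Map 1-5 likelihood and impact ratings to a heat-map band.

    Assumption: score = likelihood * impact, with illustrative cut-offs.
    """
    if not (1 <= likelihood <= 5 and 1 <= impact <= 5):
        raise ValueError("likelihood and impact must be between 1 and 5")
    score = likelihood * impact
    if score >= 15:
        return "high"
    if score >= 6:
        return "medium"
    return "low"


# Hypothetical ratings as (likelihood, impact), for illustration only.
ratings = {
    "reward hacking": (2, 3),
    "resource exhaustion": (1, 2),
    "scope creep": (5, 4),
}
heat_map = {name: heat_level(*pair) for name, pair in ratings.items()}
```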

Comparison Table: Basic Internal Assessment vs. Structured Professional Template

| Aspect | Basic Internal Assessment | This Professional Template |
|---|---|---|
| Regulatory Coverage | May miss applicable frameworks | Includes mapping for GDPR, EU AI Act, NIST AI RMF, ISO/IEC 42001, and autonomy-specific regulations |
| Risk Taxonomy | Ad-hoc risk identification | Structured taxonomy with reward hacking, scope creep, resource exhaustion, multi-agent issues, and manipulation risk categories |
| Human Oversight | Informal override procedures | HITL/HOTL/HOOTL classification with kill-switch verification and graded response levels |
| Testing Documentation | Basic functionality testing | Red-teaming, prompt injection, jailbreak testing, and adversarial simulation tracking |
| Accountability | Unclear responsibility assignment | RACI matrix, decision provenance, and multi-level sign-off structures |
| Evidence Management | Scattered documentation | Centralized evidence repository checklist with required documentation types |
| Metrics and KPIs | Limited performance tracking | Dashboard with 8+ suggested KPIs and risk heat map visualization framework |

FAQ Section

Q: What file format is this template provided in?

A: The template is provided as a Microsoft Word (.docx) file to support proper formatting, table structures, and collaborative editing. This format allows organizations to customize the template to their specific requirements.

Q: Does this template guarantee compliance with the EU AI Act or other regulations?

A: No. This template provides a structured framework to support compliance documentation efforts. It does not guarantee compliance with any regulation. Organizations should work with qualified legal and compliance professionals to determine their specific obligations and develop appropriate compliance programs.

Q: How much customization is required before using this template?

A: Significant customization is expected. Organizations need to adapt assessment items to their specific AI systems, operational contexts, risk profiles, and applicable regulatory requirements. The template provides a structured starting point, not a ready-to-use solution.

Q: What expertise is needed to complete this assessment?

A: Completing this assessment typically requires input from multiple disciplines, including AI/ML engineering, security, legal, compliance, risk management, and executive leadership. The sign-off section includes roles for AI Safety Engineer, Security Architect, Compliance Officer, Risk Manager, Legal Counsel, Chief AI Officer, Chief Risk Officer, and AI Ethics Committee representation.

Q: Is this template appropriate for all types of AI systems?

A: This template is specifically designed for agentic AI systems (those with autonomous action capabilities). Traditional ML models or AI systems without autonomous action capabilities may not require all sections of this assessment. Organizations should evaluate which sections are applicable to their specific systems.

Q: How often should this assessment be conducted?

A: The template includes fields for next review date and continuous monitoring frequency options (Real Time, Daily, Weekly, Monthly). Assessment frequency should be determined based on the risk level of the AI system, regulatory requirements, and organizational policies.

Q: What supporting documentation should accompany this completed assessment?

A: The Evidence Repository section lists recommended supporting documents, including System Cards, action whitelists/blacklists, kill-switch test results, red-team reports, behavioral analysis logs, human override records, incident response logs, compliance certificates, risk acceptance forms, SBOM-AI documentation, training completion records, and audit reports.

Ideal For

This template is designed for:

- Organizations deploying agentic AI systems requiring governance documentation

- Enterprises preparing for EU AI Act compliance requirements

- Companies aligning with NIST AI RMF voluntary guidance

- Organizations pursuing ISO/IEC 42001 certification for AI management systems

- AI governance teams establishing assessment frameworks for autonomous systems

- Risk management professionals documenting AI safety controls

- Legal and compliance teams requiring structured evidence collection

- Executive leadership requiring board-level AI risk reporting frameworks

Complexity Level: Advanced (requires multi-disciplinary input and significant customization)

Pricing Options

Single Template: Contact for pricing based on organizational requirements and customization needs.

Bundle Option: May be combined with additional AI governance templates depending on organizational compliance scope.

Enterprise Option: Available as part of comprehensive governance documentation suites with volume considerations.

Differentiator

This template provides a structured framework specifically designed for agentic AI systems, addressing the unique governance challenges of autonomous AI including action scope controls, kill-switch mechanisms, behavioral drift monitoring, and multi-agent coordination risks. Unlike general AI governance templates, this assessment includes sections for autonomy-specific regulations (NHTSA ADS, MLIT Guidelines, UN GGE LAWS principles), agentic risk taxonomy (reward hacking, scope creep, resource exhaustion), and human oversight classification (HITL/HOTL/HOOTL). The template supports documentation across technical, compliance, executive, and board-level review structures, providing a comprehensive framework for organizations to customize to their specific agentic AI governance requirements.

Document optimized for Microsoft Word to ensure proper formatting and collaborative editing capabilities. Professional legal and compliance review recommended before implementation. This template supports compliance documentation efforts but does not guarantee regulatory compliance.