GradPruner: Gradient-Guided Layer Pruning Enabling Efficient Fine-Tuning and Inference for LLMs (cs.AI updates on arXiv.org)

arXiv:2601.19503v1 Announce Type: cross

Abstract: Fine-tuning Large Language Models (LLMs) with downstream data is often considered time-consuming and expensive. Structured pruning methods are primarily employed to improve the inference efficiency of pre-trained models. However, they often require additional time and memory for training, knowledge distillation, structure search, and other strategies, making efficient model fine-tuning challenging to achieve. To simultaneously enhance the training and inference efficiency of downstream task fine-tuning, we introduce GradPruner, which can prune layers of LLMs guided by gradients in the early stages of fine-tuning. GradPruner uses the cumulative gradients of each parameter during the initial phase of fine-tuning to compute the Initial Gradient Information Accumulation Matrix (IGIA-Matrix), which it uses to assess the importance of layers and perform pruning. We sparsify the pruned layers based on the IGIA-Matrix and merge them with the remaining layers. Only elements with the same sign are merged to reduce interference from sign variations. We conducted extensive experiments on two LLMs across eight downstream datasets, including medical, financial, and general benchmark tasks. The results demonstrate that GradPruner achieves a parameter reduction of 40% with only a 0.99% decrease in accuracy. Our code is publicly available.
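The pipeline the abstract describes (accumulate early gradients, score layers, sparsify the pruned layers, merge same-sign elements into retained layers) can be illustrated with a toy sketch. This is not the authors' implementation. The abstract does not give the exact formulas, so several choices here are assumptions: the IGIA scores are taken as summed absolute gradients, layer importance is the sum of a layer's scores, sparsification keeps a fixed top fraction of elements, and each pruned layer is merged into the nearest retained layer by depth. All function names are hypothetical, and layers are flattened to plain lists of floats.

```python
from typing import Dict, List

Vector = List[float]


def accumulate_igia(grad_steps: List[Dict[str, Vector]]) -> Dict[str, Vector]:
    """Sum absolute gradients per parameter over the early steps (assumed form)."""
    igia: Dict[str, Vector] = {}
    for step in grad_steps:
        for layer, grads in step.items():
            acc = igia.setdefault(layer, [0.0] * len(grads))
            for i, g in enumerate(grads):
                acc[i] += abs(g)
    return igia


def sparsify(weights: Vector, scores: Vector, keep_ratio: float) -> Vector:
    """Keep the highest-scoring fraction of elements and zero the rest."""
    k = max(1, int(len(weights) * keep_ratio))
    top = sorted(range(len(weights)), key=lambda i: scores[i], reverse=True)[:k]
    keep = set(top)
    return [w if i in keep else 0.0 for i, w in enumerate(weights)]


def merge_same_sign(kept: Vector, pruned: Vector) -> Vector:
    """Add a pruned element only where it shares the sign of the kept element."""
    return [k + p if k * p > 0 else k for k, p in zip(kept, pruned)]


def grad_prune(
    weights: Dict[str, Vector],
    grad_steps: List[Dict[str, Vector]],
    n_prune: int,
    keep_ratio: float = 0.5,
) -> Dict[str, Vector]:
    igia = accumulate_igia(grad_steps)
    # Assumed importance score: total accumulated gradient magnitude per layer.
    importance = {layer: sum(scores) for layer, scores in igia.items()}
    order = list(weights)  # layer names in depth order
    pruned = sorted(order, key=lambda name: importance[name])[:n_prune]
    retained = [name for name in order if name not in pruned]
    merged = {name: list(weights[name]) for name in retained}
    for name in pruned:
        sparse = sparsify(weights[name], igia[name], keep_ratio)
        # Assumed merge target: the retained layer closest in depth.
        idx = order.index(name)
        target = min(retained, key=lambda r: abs(order.index(r) - idx))
        merged[target] = merge_same_sign(merged[target], sparse)
    return merged


# Toy data: three "layers" of four weights each, two early gradient steps.
weights = {
    "layer0": [0.5, -0.2, 0.1, 0.4],
    "layer1": [0.3, 0.6, -0.1, -0.2],
    "layer2": [-0.4, 0.2, 0.3, 0.1],
}
grad_steps = [
    {"layer0": [0.9, -0.8, 0.7, 0.6], "layer1": [0.1, 0.0, -0.3, 0.05], "layer2": [0.5, 0.4, -0.6, 0.2]},
    {"layer0": [-0.7, 0.9, 0.5, 0.8], "layer1": [0.2, 0.0, 0.1, -0.05], "layer2": [0.6, -0.3, 0.4, 0.3]},
]
merged = grad_prune(weights, grad_steps, n_prune=1)
print(merged)
```

In this toy run, layer1 has the smallest accumulated gradient and is pruned. After sparsification, only its first element agrees in sign with the corresponding element of layer0, so only that element is merged.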

From Observations to Events: Event-Aware World Model for Reinforcement Learning (cs.AI updates on arXiv.org)

arXiv:2601.19336v1 Announce Type: cross

Abstract: While model-based reinforcement learning (MBRL) improves sample efficiency by learning world models from raw observations, existing methods struggle to generalize across structurally similar scenes and remain vulnerable to spurious variations such as textures or color shifts. From a cognitive science perspective, humans segment continuous sensory streams into discrete events and rely on these key events for decision-making. Motivated by this principle, we propose the Event-Aware World Model (EAWM), a general framework that learns event-aware representations to streamline policy learning without requiring handcrafted labels. EAWM employs an automated event generator to derive events from raw observations and introduces a Generic Event Segmentor (GES) to identify event boundaries, which mark the start and end times of event segments. Through event prediction, the representation space is shaped to capture meaningful spatio-temporal transitions. Beyond this, we present a unified formulation of seemingly distinct world model architectures and show the broad applicability of our methods. Experiments on Atari 100K, Craftax 1M, DeepMind Control 500K, and DMC-GB2 500K demonstrate that EAWM consistently boosts the performance of strong MBRL baselines by 10%-45%, setting new state-of-the-art results across benchmarks. Our code is released at https://github.com/MarquisDarwin/EAWM.

Tencent Hunyuan Releases HPC-Ops: A High Performance LLM Inference Operator Library (MarkTechPost)

Tencent Hunyuan has open sourced HPC-Ops, a production grade operator library for large language model inference architecture devices. HPC-Ops focuses on low level CUDA kernels for core operators such as Attention, Grouped GEMM, and Fused MoE, and exposes them through a compact C and Python API for integration into existing inference stacks. HPC-Ops runs in large

The post Tencent Hunyuan Releases HPC-Ops: A High Performance LLM Inference Operator Library appeared first on MarkTechPost.

Moonshot AI Releases Kimi K2.5: An Open Source Visual Agentic Intelligence Model with Native Swarm Execution (MarkTechPost)

Moonshot AI has released Kimi K2.5 as an open source visual agentic intelligence model. It combines a large Mixture of Experts language backbone, a native vision encoder, and a parallel multi agent system called Agent Swarm. The model targets coding, multimodal reasoning, and deep web research with strong benchmark results on agentic, vision, and coding

The post Moonshot AI Releases Kimi K2.5: An Open Source Visual Agentic Intelligence Model with Native Swarm Execution appeared first on MarkTechPost.

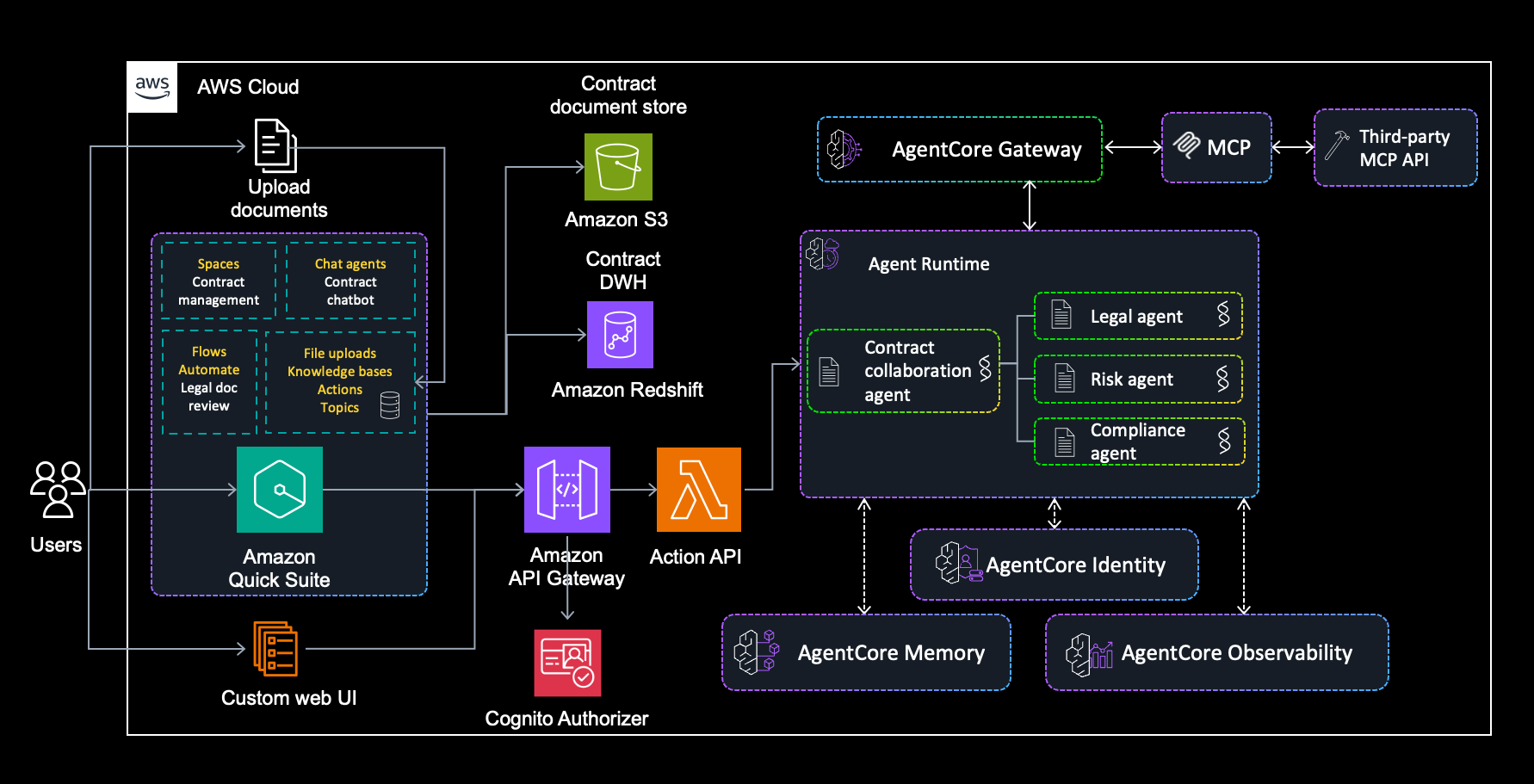

Build an intelligent contract management solution with Amazon Quick Suite and Bedrock AgentCore (Artificial Intelligence)

This blog post demonstrates how to build an intelligent contract management solution using Amazon Quick Suite as your primary contract management solution, augmented with Amazon Bedrock AgentCore for advanced multi-agent capabilities.

3 Ways to Anonymize and Protect User Data in Your ML Pipeline (KDnuggets)

In this article, you will learn three practical ways to protect user data in real-world ML pipelines, with techniques that data scientists can implement directly in their workflows.

Data Science as Engineering: Foundations, Education, and Professional Identity (Towards Data Science)

Recognize data science as an engineering practice and structure education accordingly.

The post Data Science as Engineering: Foundations, Education, and Professional Identity appeared first on Towards Data Science.

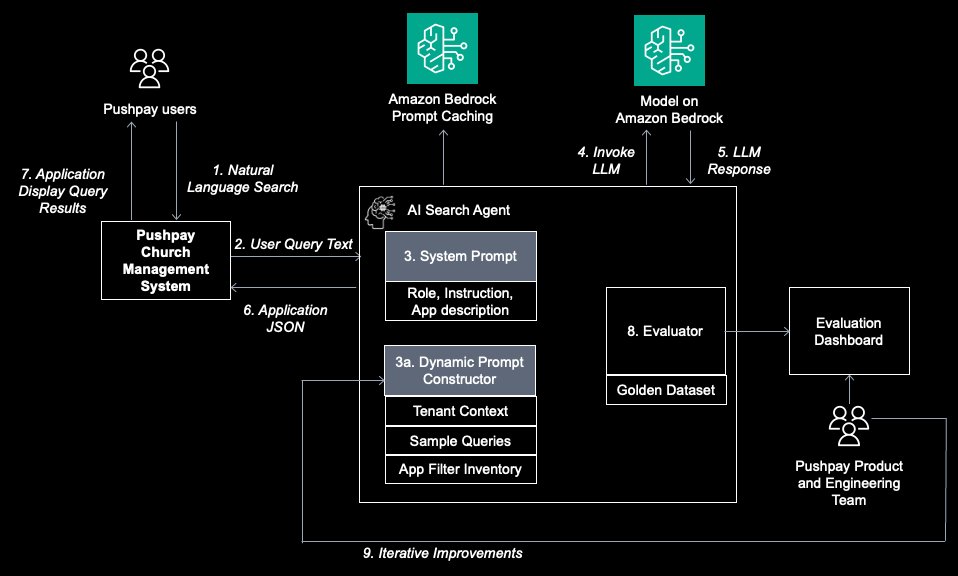

Build reliable Agentic AI solution with Amazon Bedrock: Learn from Pushpay’s journey on GenAI evaluation (Artificial Intelligence)

In this post, we walk you through Pushpay’s journey in building this solution and explore how Pushpay used Amazon Bedrock to create a custom generative AI evaluation framework for continuous quality assurance and establishing rapid iteration feedback loops on AWS.

Databricks: Enterprise AI adoption shifts to agentic systems (AI News)

According to Databricks, enterprise AI adoption is shifting to agentic systems as organisations embrace intelligent workflows. Generative AI’s first wave promised business transformation but often delivered little more than isolated chatbots and stalled pilot programmes. Technology leaders found themselves managing high expectations with limited operational utility. However, new telemetry from Databricks suggests the market has

The post Databricks: Enterprise AI adoption shifts to agentic systems appeared first on AI News.

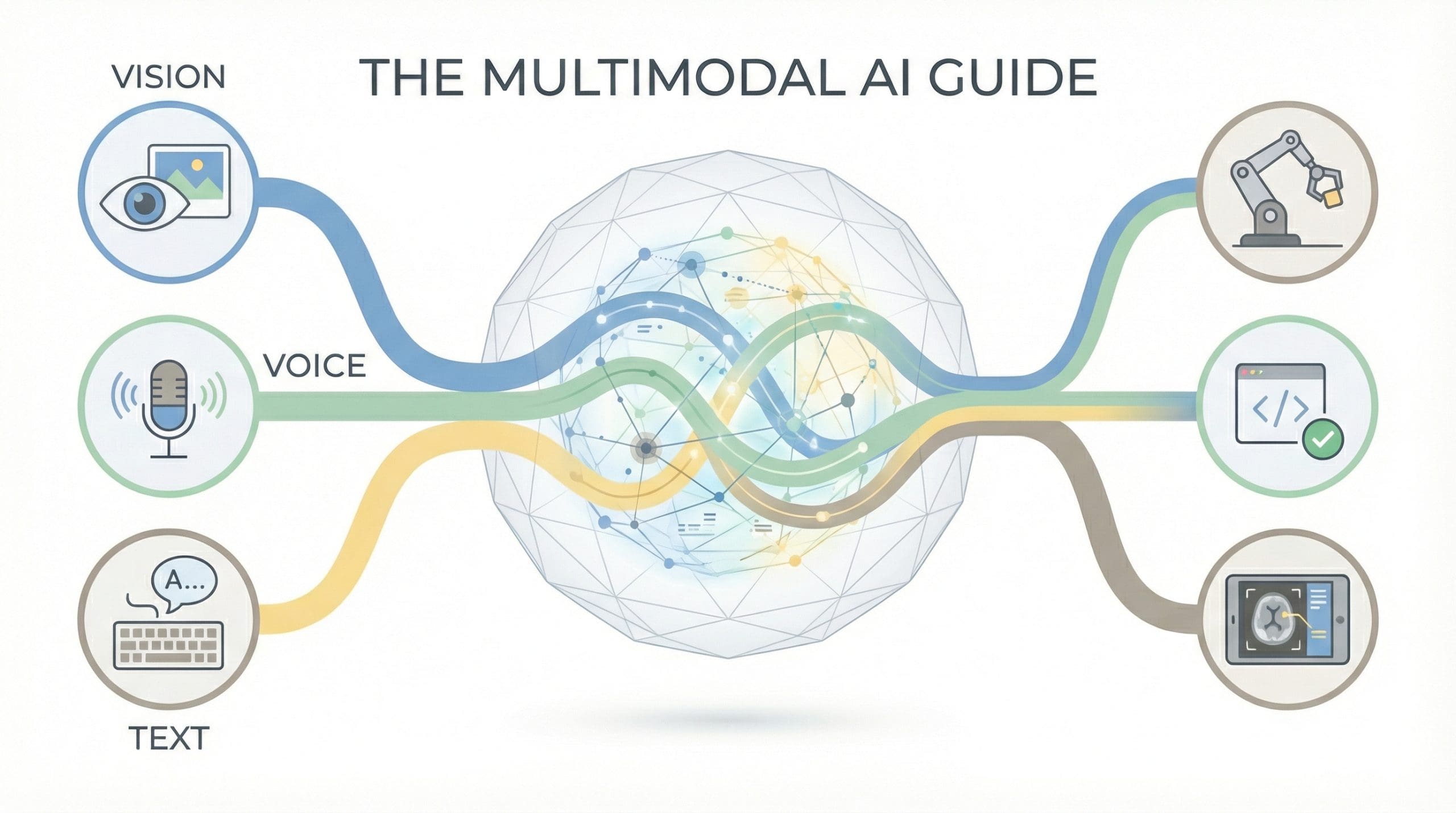

The Multimodal AI Guide: Vision, Voice, Text, and Beyond (KDnuggets)

AI systems now see images, hear speech, and process video, understanding information in its native form.
