Synthetic Student Responses: LLM-Extracted Features for IRT Difficulty Parameter Estimationcs.AI updates on arXiv.org arXiv:2602.00034v1 Announce Type: cross

Abstract: Educational assessment relies heavily on knowing question difficulty, traditionally determined through resource-intensive pre-testing with students. This creates significant barriers for both classroom teachers and assessment developers. We investigate whether Item Response Theory (IRT) difficulty parameters can be accurately estimated without student testing by modeling the response process and explore the relative contribution of different feature types to prediction accuracy. Our approach combines traditional linguistic features with pedagogical insights extracted using Large Language Models (LLMs), including solution step count, cognitive complexity, and potential misconceptions. We implement a two-stage process: first training a neural network to predict how students would respond to questions, then deriving difficulty parameters from these simulated response patterns. Using a dataset of over 250,000 student responses to mathematics questions, our model achieves a Pearson correlation of approximately 0.78 between predicted and actual difficulty parameters on completely unseen questions.

arXiv:2602.00034v1 Announce Type: cross

Abstract: Educational assessment relies heavily on knowing question difficulty, traditionally determined through resource-intensive pre-testing with students. This creates significant barriers for both classroom teachers and assessment developers. We investigate whether Item Response Theory (IRT) difficulty parameters can be accurately estimated without student testing by modeling the response process and explore the relative contribution of different feature types to prediction accuracy. Our approach combines traditional linguistic features with pedagogical insights extracted using Large Language Models (LLMs), including solution step count, cognitive complexity, and potential misconceptions. We implement a two-stage process: first training a neural network to predict how students would respond to questions, then deriving difficulty parameters from these simulated response patterns. Using a dataset of over 250,000 student responses to mathematics questions, our model achieves a Pearson correlation of approximately 0.78 between predicted and actual difficulty parameters on completely unseen questions. Read More

EigenAI: Deterministic Inference, Verifiable Resultscs.AI updates on arXiv.org arXiv:2602.00182v1 Announce Type: cross

Abstract: EigenAI is a verifiable AI platform built on top of the EigenLayer restaking ecosystem. At a high level, it combines a deterministic large-language model (LLM) inference engine with a cryptoeconomically secured optimistic re-execution protocol so that every inference result can be publicly audited, reproduced, and, if necessary, economically enforced. An untrusted operator runs inference on a fixed GPU architecture, signs and encrypts the request and response, and publishes the encrypted log to EigenDA. During a challenge window, any watcher may request re-execution through EigenVerify; the result is then deterministically recomputed inside a trusted execution environment (TEE) with a threshold-released decryption key, allowing a public challenge with private data. Because inference itself is bit-exact, verification reduces to a byte-equality check, and a single honest replica suffices to detect fraud. We show how this architecture yields sovereign agents — prediction-market judges, trading bots, and scientific assistants — that enjoy state-of-the-art performance while inheriting security from Ethereum’s validator base.

arXiv:2602.00182v1 Announce Type: cross

Abstract: EigenAI is a verifiable AI platform built on top of the EigenLayer restaking ecosystem. At a high level, it combines a deterministic large-language model (LLM) inference engine with a cryptoeconomically secured optimistic re-execution protocol so that every inference result can be publicly audited, reproduced, and, if necessary, economically enforced. An untrusted operator runs inference on a fixed GPU architecture, signs and encrypts the request and response, and publishes the encrypted log to EigenDA. During a challenge window, any watcher may request re-execution through EigenVerify; the result is then deterministically recomputed inside a trusted execution environment (TEE) with a threshold-released decryption key, allowing a public challenge with private data. Because inference itself is bit-exact, verification reduces to a byte-equality check, and a single honest replica suffices to detect fraud. We show how this architecture yields sovereign agents — prediction-market judges, trading bots, and scientific assistants — that enjoy state-of-the-art performance while inheriting security from Ethereum’s validator base. Read More

Working with Billion-Row Datasets in Python (Using Vaex)KDnuggets Analyze billion-row datasets in Python using Vaex. Learn how out-of-core processing, lazy evaluation, and memory mapping enable fast analytics at scale.

Analyze billion-row datasets in Python using Vaex. Learn how out-of-core processing, lazy evaluation, and memory mapping enable fast analytics at scale. Read More

Building Systems That Survive Real LifeTowards Data Science Sara Nobrega on the transition from data science to AI engineering, using LLMs as a bridge to DevOps, and the one engineering skill junior data scientists need to stay competitive.

The post Building Systems That Survive Real Life appeared first on Towards Data Science.

Sara Nobrega on the transition from data science to AI engineering, using LLMs as a bridge to DevOps, and the one engineering skill junior data scientists need to stay competitive.

The post Building Systems That Survive Real Life appeared first on Towards Data Science. Read More

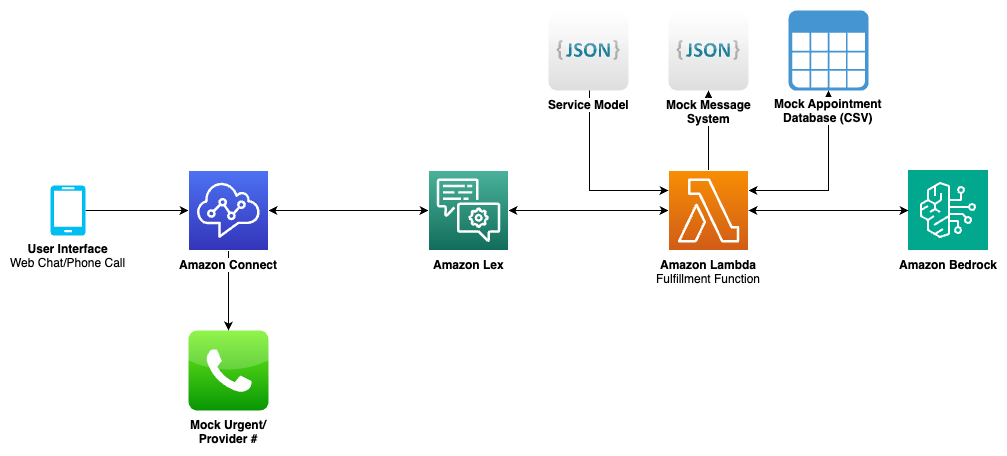

How Clarus Care uses Amazon Bedrock to deliver conversational contact center interactionsArtificial Intelligence In this post, we illustrate how Clarus Care, a healthcare contact center solutions provider, worked with the AWS Generative AI Innovation Center (GenAIIC) team to develop a generative AI-powered contact center prototype. This solution enables conversational interaction and multi-intent resolution through an automated voicebot and chat interface. It also incorporates a scalable service model to support growth, human transfer capabilities–when requested or for urgent cases–and an analytics pipeline for performance insights.

In this post, we illustrate how Clarus Care, a healthcare contact center solutions provider, worked with the AWS Generative AI Innovation Center (GenAIIC) team to develop a generative AI-powered contact center prototype. This solution enables conversational interaction and multi-intent resolution through an automated voicebot and chat interface. It also incorporates a scalable service model to support growth, human transfer capabilities–when requested or for urgent cases–and an analytics pipeline for performance insights. Read More

Klarna backs Google UCP to power AI agent paymentsAI News Klarna aims to address the lack of interoperability between conversational AI agents and backend payment systems by backing Google’s Universal Commerce Protocol (UCP), an open standard designed to unify how AI agents discover products and execute transactions. The partnership, which also sees Klarna supporting Google’s Agent Payments Protocol (AP2), places the Swedish fintech firm among

The post Klarna backs Google UCP to power AI agent payments appeared first on AI News.

Klarna aims to address the lack of interoperability between conversational AI agents and backend payment systems by backing Google’s Universal Commerce Protocol (UCP), an open standard designed to unify how AI agents discover products and execute transactions. The partnership, which also sees Klarna supporting Google’s Agent Payments Protocol (AP2), places the Swedish fintech firm among

The post Klarna backs Google UCP to power AI agent payments appeared first on AI News. Read More

5 Android Apps for Code EditingKDnuggets From debugging to quick fixes, here are the top Android apps every developer should have on their phone.

From debugging to quick fixes, here are the top Android apps every developer should have on their phone. Read More

Silicon Darwinism: Why Scarcity Is the Source of True IntelligenceTowards Data Science We are confusing “size” with “smart.” The next leap in artificial intelligence will not come from a larger data center, but from a more constrained environment.

The post Silicon Darwinism: Why Scarcity Is the Source of True Intelligence appeared first on Towards Data Science.

We are confusing “size” with “smart.” The next leap in artificial intelligence will not come from a larger data center, but from a more constrained environment.

The post Silicon Darwinism: Why Scarcity Is the Source of True Intelligence appeared first on Towards Data Science. Read More

The Statistical Cost of Zero Padding in Convolutional Neural Networks (CNNs)MarkTechPost What is Zero Padding Zero padding is a technique used in convolutional neural networks where additional pixels with a value of zero are added around the borders of an image. This allows convolutional kernels to slide over edge pixels and helps control how much the spatial dimensions of the feature map shrink after convolution. Padding

The post The Statistical Cost of Zero Padding in Convolutional Neural Networks (CNNs) appeared first on MarkTechPost.

What is Zero Padding Zero padding is a technique used in convolutional neural networks where additional pixels with a value of zero are added around the borders of an image. This allows convolutional kernels to slide over edge pixels and helps control how much the spatial dimensions of the feature map shrink after convolution. Padding

The post The Statistical Cost of Zero Padding in Convolutional Neural Networks (CNNs) appeared first on MarkTechPost. Read More

How to Build Multi-Layered LLM Safety Filters to Defend Against Adaptive, Paraphrased, and Adversarial Prompt AttacksMarkTechPost In this tutorial, we build a robust, multi-layered safety filter designed to defend large language models against adaptive and paraphrased attacks. We combine semantic similarity analysis, rule-based pattern detection, LLM-driven intent classification, and anomaly detection to create a defense system that relies on no single point of failure. Also, we demonstrate how practical, production-style safety

The post How to Build Multi-Layered LLM Safety Filters to Defend Against Adaptive, Paraphrased, and Adversarial Prompt Attacks appeared first on MarkTechPost.

In this tutorial, we build a robust, multi-layered safety filter designed to defend large language models against adaptive and paraphrased attacks. We combine semantic similarity analysis, rule-based pattern detection, LLM-driven intent classification, and anomaly detection to create a defense system that relies on no single point of failure. Also, we demonstrate how practical, production-style safety

The post How to Build Multi-Layered LLM Safety Filters to Defend Against Adaptive, Paraphrased, and Adversarial Prompt Attacks appeared first on MarkTechPost. Read More