NVIDIA Releases Dynamo v0.9.0: A Massive Infrastructure Overhaul Featuring FlashIndexer, Multi-Modal Support, and Removed NATS and ETCD
Source: MarkTechPost

NVIDIA has just released Dynamo v0.9.0. This is the most significant infrastructure upgrade for the distributed inference framework to date. This update simplifies how large-scale models are deployed and managed. The release focuses on removing heavy dependencies and improving how GPUs handle multi-modal data.

The Great Simplification: Removing NATS and etcd

The biggest change in

The post NVIDIA Releases Dynamo v0.9.0: A Massive Infrastructure Overhaul Featuring FlashIndexer, Multi-Modal Support, and Removed NATS and ETCD appeared first on MarkTechPost.

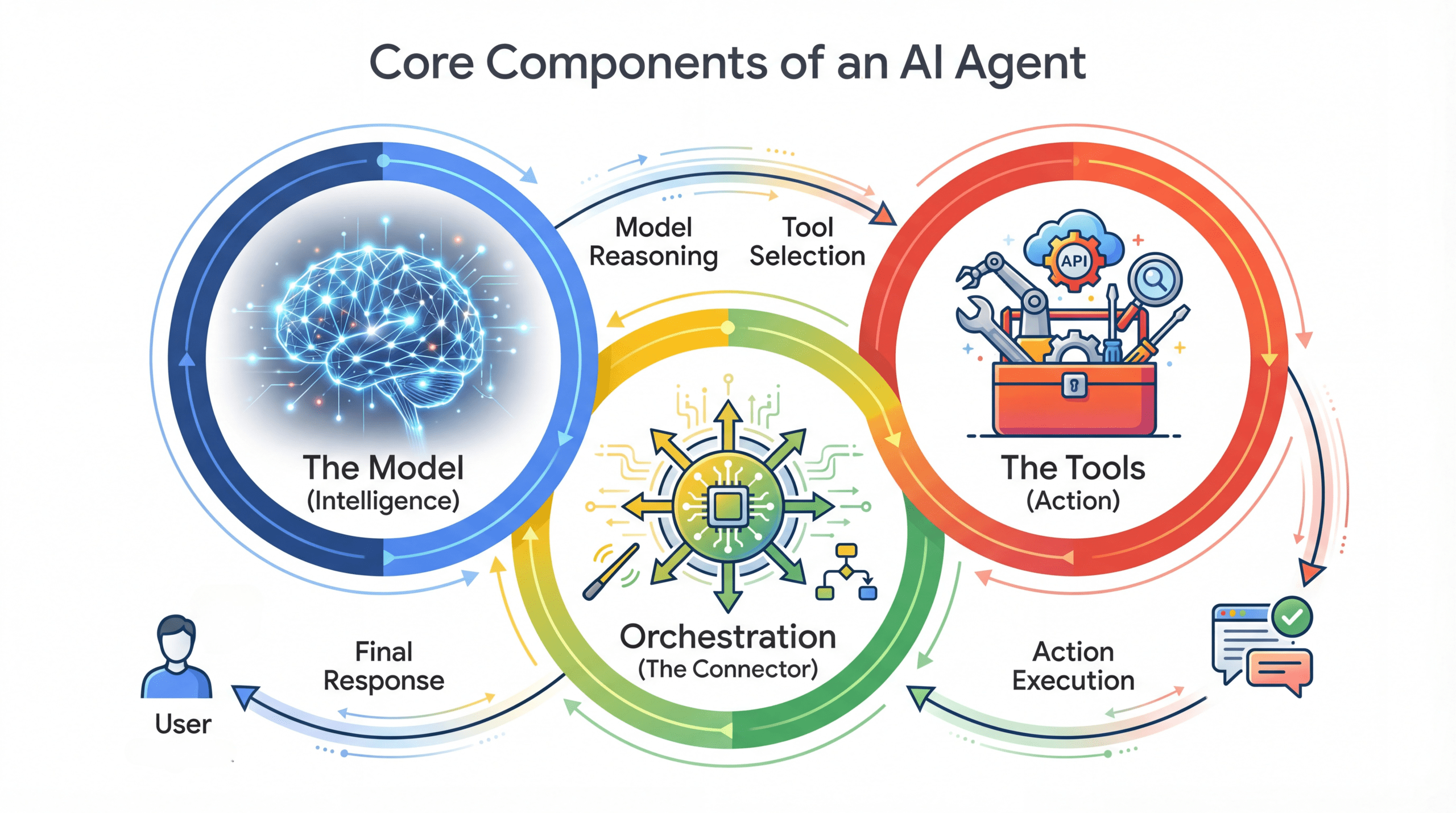

How to Build Transparent AI Agents: Traceable Decision-Making with Audit Trails and Human Gates
Source: MarkTechPost

In this tutorial, we build a glass-box agentic workflow that makes every decision traceable, auditable, and explicitly governed by human approval. We design the system to log each thought, action, and observation into a tamper-evident audit ledger while enforcing dynamic permissioning for high-risk operations. By combining LangGraph’s interrupt-driven human-in-the-loop control with a hash-chained database, we

The post How to Build Transparent AI Agents: Traceable Decision-Making with Audit Trails and Human Gates appeared first on MarkTechPost.
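
The excerpt mentions a tamper-evident, hash-chained audit ledger without showing it. As a rough illustration of the general idea only, here is a minimal in-memory sketch. It is not the tutorial's code and uses neither LangGraph nor a database; the names `append_entry`, `verify_ledger`, and `GENESIS_HASH` are hypothetical, and a Python list stands in for the storage layer.

```python
import hashlib
import json

# Assumption: a fixed placeholder acts as the "previous hash" of the first entry.
GENESIS_HASH = "0" * 64


def _entry_hash(prev_hash, kind, payload):
    """Hash the entry contents together with the previous entry's hash."""
    body = json.dumps(
        {"prev": prev_hash, "kind": kind, "payload": payload}, sort_keys=True
    )
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(ledger, kind, payload):
    """Append a thought/action/observation record chained to the previous one."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS_HASH
    entry = {
        "prev": prev_hash,
        "kind": kind,
        "payload": payload,
        "hash": _entry_hash(prev_hash, kind, payload),
    }
    ledger.append(entry)
    return entry


def verify_ledger(ledger):
    """Return True only if every entry still matches its stored hash and link."""
    prev_hash = GENESIS_HASH
    for entry in ledger:
        if entry["prev"] != prev_hash:
            return False
        expected = _entry_hash(entry["prev"], entry["kind"], entry["payload"])
        if entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True
```

Because each hash covers the previous entry's hash, editing any earlier record invalidates that record's own hash, and rewriting it would in turn break the link stored in the next record. That is what makes the log tamper-evident rather than tamper-proof.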

Beyond In-Domain Detection: SpikeScore for Cross-Domain Hallucination Detection
Source: cs.AI updates on arXiv.org
arXiv:2601.19245v5 Announce Type: replace

Abstract: Hallucination detection is critical for deploying large language models (LLMs) in real-world applications. Existing hallucination detection methods achieve strong performance when the training and test data come from the same domain, but they suffer from poor cross-domain generalization. In this paper, we study an important yet overlooked problem, termed generalizable hallucination detection (GHD), which aims to train hallucination detectors on data from a single domain while ensuring robust performance across diverse related domains. In studying GHD, we simulate multi-turn dialogues following LLMs’ initial response and observe an interesting phenomenon: hallucination-initiated multi-turn dialogues universally exhibit larger uncertainty fluctuations than factual ones across different domains. Based on this phenomenon, we propose a new score, SpikeScore, which quantifies abrupt fluctuations in multi-turn dialogues. Through both theoretical analysis and empirical validation, we demonstrate that SpikeScore achieves strong cross-domain separability between hallucinated and non-hallucinated responses. Experiments across multiple LLMs and benchmarks demonstrate that the SpikeScore-based detection method outperforms representative baselines in cross-domain generalization and surpasses advanced generalization-oriented methods, verifying the effectiveness of our method in cross-domain hallucination detection.

Toward a Fully Autonomous, AI-Native Particle Accelerator
Source: cs.AI updates on arXiv.org
arXiv:2602.17536v1 Announce Type: cross

Abstract: This position paper presents a vision for self-driving particle accelerators that operate autonomously with minimal human intervention. We propose that future facilities be designed through artificial intelligence (AI) co-design, where AI jointly optimizes the accelerator lattice, diagnostics, and science application from inception to maximize performance while enabling autonomous operation. Rather than retrofitting AI onto human-centric systems, we envision facilities designed from the ground up as AI-native platforms. We outline nine critical research thrusts spanning agentic control architectures, knowledge integration, adaptive learning, digital twins, health monitoring, safety frameworks, modular hardware design, multimodal data fusion, and cross-domain collaboration. This roadmap aims to guide the accelerator community toward a future where AI-driven design and operation deliver unprecedented science output and reliability.

Coca-Cola turns to AI marketing as price-led growth slows
Source: AI News

Shifting from price hikes to persuasion, Coca-Cola’s latest strategy signals how AI is moving deeper into the core of corporate marketing. Recent coverage of the company’s leadership discussions shows that Coca-Cola is entering what executives describe as a new phase focused on influence, not pricing power. According to Mi-3, the company is changing its focus

The post Coca-Cola turns to AI marketing as price-led growth slows appeared first on AI News.

Puzzle it Out: Local-to-Global World Model for Offline Multi-Agent Reinforcement Learning
Source: cs.AI updates on arXiv.org
arXiv:2601.07463v2 Announce Type: replace

Abstract: Offline multi-agent reinforcement learning (MARL) aims to solve cooperative decision-making problems in multi-agent systems using pre-collected datasets. Existing offline MARL methods primarily constrain training within the dataset distribution, resulting in overly conservative policies that struggle to generalize beyond the support of the data. While model-based approaches offer a promising solution by expanding the original dataset with synthetic data generated from a learned world model, the high dimensionality, non-stationarity, and complexity of multi-agent systems make it challenging to accurately estimate the transitions and reward functions in offline MARL. Given the difficulty of directly modeling joint dynamics, we propose a local-to-global (LOGO) world model, a novel framework that leverages local predictions, which are easier to estimate, to infer global state dynamics, thus improving prediction accuracy while implicitly capturing agent-wise dependencies. Using the trained world model, we generate synthetic data to augment the original dataset, expanding the effective state-action space. To ensure reliable policy learning, we further introduce an uncertainty-aware sampling mechanism that adaptively weights synthetic data by prediction uncertainty, reducing approximation error propagation to policies. In contrast to conventional ensemble-based methods, our approach requires only an additional encoder for uncertainty estimation, significantly reducing computational overhead while maintaining accuracy. Extensive experiments across 8 scenarios against 8 baselines demonstrate that our method surpasses state-of-the-art baselines on standard offline MARL benchmarks, establishing a new model-based baseline for generalizable offline multi-agent learning.
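
The abstract does not say how synthetic data is weighted by prediction uncertainty. Purely as an illustration of the general idea, the sketch below down-weights high-uncertainty samples with a softmax over negative uncertainty. This is an assumed weighting, not the LOGO method; `uncertainty_weights`, `sample_synthetic`, and `temperature` are hypothetical names.

```python
import math
import random


def uncertainty_weights(uncertainties, temperature=1.0):
    """Map per-sample uncertainties to sampling probabilities.

    Assumption: a softmax over negative uncertainty, so that lower
    uncertainty yields a higher probability. The paper may use a
    different function.
    """
    scaled = [-u / temperature for u in uncertainties]
    shift = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - shift) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_synthetic(transitions, uncertainties, k, seed=None):
    """Draw k synthetic transitions (with replacement), favouring confident ones."""
    rng = random.Random(seed)
    weights = uncertainty_weights(uncertainties)
    return rng.choices(transitions, weights=weights, k=k)
```

A larger `temperature` flattens the weights toward uniform sampling, while a smaller one concentrates sampling on the transitions the model is most confident about.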

Are LLMs Ready to Replace Bangla Annotators?
Source: cs.AI updates on arXiv.org
arXiv:2602.16241v2 Announce Type: replace-cross

Abstract: Large Language Models (LLMs) are increasingly used as automated annotators to scale dataset creation, yet their reliability as unbiased annotators, especially for low-resource and identity-sensitive settings, remains poorly understood. In this work, we study the behavior of LLMs as zero-shot annotators for Bangla hate speech, a task where even human agreement is challenging, and annotator bias can have serious downstream consequences. We conduct a systematic benchmark of 17 LLMs using a unified evaluation framework. Our analysis uncovers annotator bias and substantial instability in model judgments. Surprisingly, increased model scale does not guarantee improved annotation quality: smaller, more task-aligned models frequently exhibit more consistent behavior than their larger counterparts. These results highlight important limitations of current LLMs for sensitive annotation tasks in low-resource languages and underscore the need for careful evaluation before deployment.

AI: Executives’ optimism about the future
Source: AI News

The most rigorous international study of firm-level AI impact to date has landed, and its headline finding is more constructive than many expected. Across nearly 6,000 verified executives in four countries, AI has delivered modest aggregate shifts in productivity or employment over the past three years. The measured impact reflects the early phases of deployment

The post AI: Executives’ optimism about the future appeared first on AI News.

Building Production-Ready AI Agents with Agent Development Kit
Source: KDnuggets

ADK from Google addresses a critical gap in the agentic AI ecosystem by providing a framework that simplifies the construction and deployment of multi-agent systems.

How AI upgrades enterprise treasury management
Source: AI News

The adoption of AI for enterprise treasury management enables businesses to abandon manual spreadsheets for automated data pipelines. Corporate finance departments face pressure from market volatility, regulatory demands, and digital finance requirements. Ashish Kumar, head of Infosys Oracle Sales for North America, and CM Grover, CEO of IBS FinTech, recently discussed the realities of corporate

The post How AI upgrades enterprise treasury management appeared first on AI News.
