5 Python Data Validation Libraries You Should Be Using (KDnuggets)

These five libraries approach validation from very different angles, which is exactly why they matter. Each one solves a specific class of problems that appear again and again in modern data and machine learning workflows.

Optimizing Deep Learning Models with SAM (Towards Data Science)

A deep dive into the Sharpness-Aware Minimization (SAM) algorithm and how it improves the generalizability of modern deep learning models.

The post Optimizing Deep Learning Models with SAM appeared first on Towards Data Science.
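For readers unfamiliar with SAM, the following is a minimal sketch of a single SAM update on a toy problem, not code from the linked article. The quadratic `loss`, its `grad`, and the values of `lr` and `rho` are hypothetical choices made for illustration. The idea shown is the standard two-step procedure: first move the weights a distance `rho` along the normalized gradient (towards higher loss), then apply the gradient measured at that perturbed point to the original weights.

```python
import math

def loss(w):
    # Hypothetical toy loss: a quadratic bowl with its minimum at (1, -2).
    return (w[0] - 1.0) ** 2 + 2.0 * (w[1] + 2.0) ** 2

def grad(w):
    # Analytic gradient of the toy loss above.
    return [2.0 * (w[0] - 1.0), 4.0 * (w[1] + 2.0)]

def sam_step(w, lr=0.1, rho=0.05):
    """One SAM update: ascend to a nearby high-loss point, then descend from w."""
    g = grad(w)
    norm = math.sqrt(sum(gi * gi for gi in g))
    if norm == 0.0:
        return list(w)  # already at a stationary point, nothing to do
    # Step 1: perturb the weights by rho along the normalized gradient.
    w_adv = [wi + rho * gi / norm for wi, gi in zip(w, g)]
    # Step 2: take the gradient at the perturbed point ...
    g_adv = grad(w_adv)
    # ... and apply it to the ORIGINAL weights.
    return [wi - lr * gi for wi, gi in zip(w, g_adv)]

w = [3.0, 1.0]
initial_loss = loss(w)
for _ in range(200):
    w = sam_step(w)
final_loss = loss(w)
```

With a fixed `rho` the iterate settles in a small neighbourhood of the minimum rather than exactly on it, because each update uses the gradient at a perturbed point. Real implementations wrap a base optimizer and need two forward/backward passes per step.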

Deploying agentic finance AI for immediate business ROI (AI News)

Agentic finance AI improves business efficiency and ROI only when deployed with strict governance and clear return on investment targets. A recent FT Longitude survey of 200 finance leaders across the US, UK, France, and Germany showed 61 percent have deployed AI agents merely as experiments. Meanwhile, one in four executives admit they do not

The post Deploying agentic finance AI for immediate business ROI appeared first on AI News.

Optimizing Token Generation in PyTorch Decoder Models (Towards Data Science)

Hiding host-device synchronization via CUDA stream interleaving

The post Optimizing Token Generation in PyTorch Decoder Models appeared first on Towards Data Science.

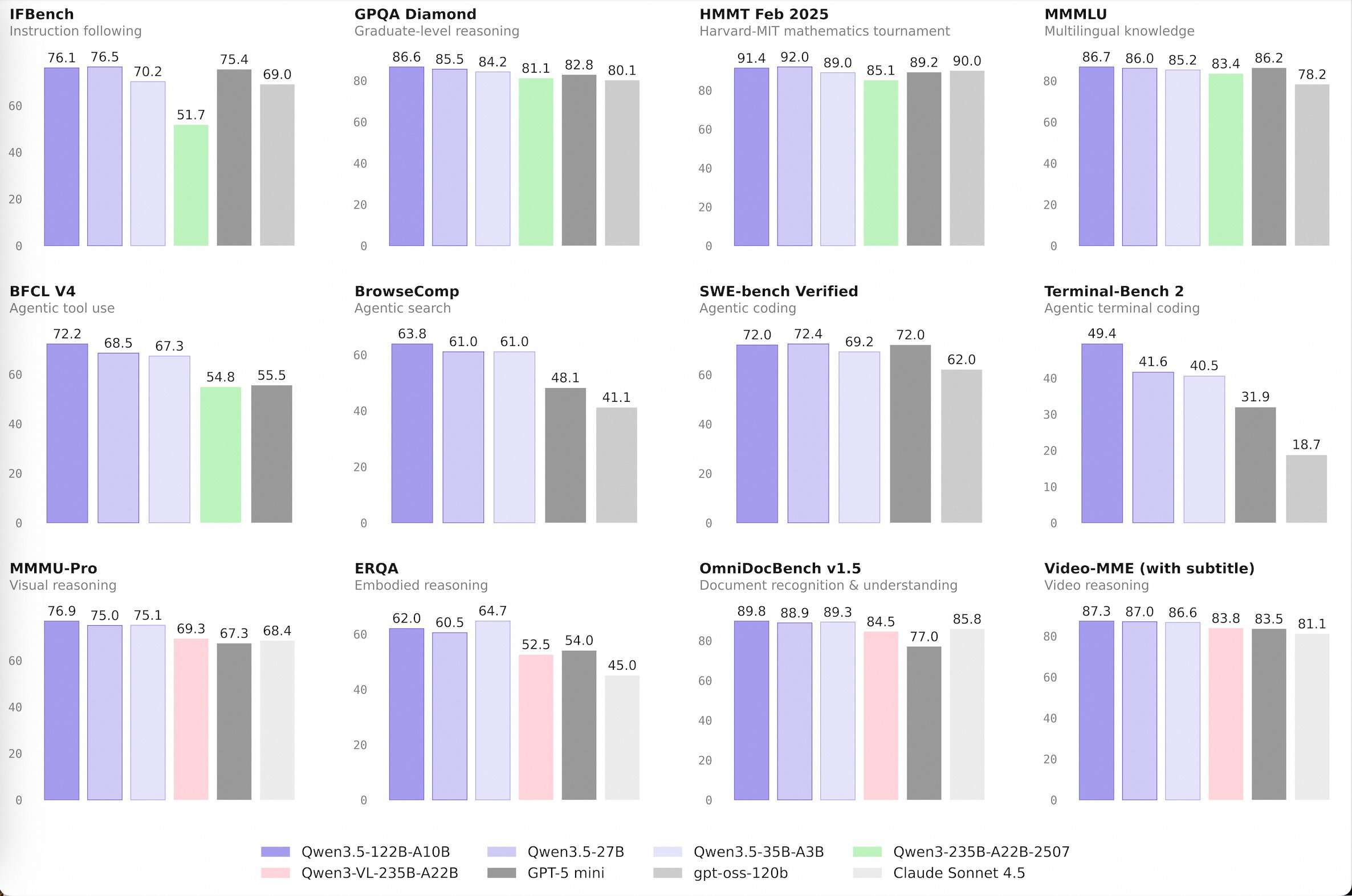

Alibaba Qwen Team Releases Qwen 3.5 Medium Model Series: A Production Powerhouse Proving that Smaller AI Models are Smarter (MarkTechPost)

The development of large language models (LLMs) has been defined by the pursuit of raw scale. While increasing parameter counts into the trillions initially drove performance gains, it also introduced significant infrastructure overhead and diminishing marginal utility. The release of the Qwen 3.5 Medium Model Series signals a shift in Alibaba’s Qwen approach, prioritizing architectural

The post Alibaba Qwen Team Releases Qwen 3.5 Medium Model Series: A Production Powerhouse Proving that Smaller AI Models are Smarter appeared first on MarkTechPost.

Decisioning at the Edge: Policy Matching at Scale (Towards Data Science)

Policy-to-Agency Optimization with PuLP

The post Decisioning at the Edge: Policy Matching at Scale appeared first on Towards Data Science.

Operational Robustness of LLMs on Code Generation (cs.AI updates on arXiv.org)

arXiv:2602.18800v1 Announce Type: cross

Abstract: It is now common practice in software development for large language models (LLMs) to be used to generate program code. It is desirable to evaluate the robustness of LLMs for this usage. This paper is concerned in particular with how sensitive LLMs are to variations in descriptions of the coding tasks. However, existing techniques for evaluating this robustness are unsuitable for code generation because the input data space of natural language descriptions is discrete. To address this problem, we propose a robustness evaluation method called scenario domain analysis, which aims to find the expected minimal change in the natural language descriptions of coding tasks that would cause the LLMs to produce incorrect outputs. We have formally proved the theoretical properties of the method and also conducted extensive experiments to evaluate the robustness of four state-of-the-art LLMs: Gemini-pro, Codex, Llama2 and Falcon 7B, and have found that we are able to rank these with confidence from best to worst. Moreover, we have also studied how robustness varies in different scenarios, including the variations with the topic of the coding task and with the complexity of its sample solution, and found that robustness is lower for more complex tasks and also lower for more advanced topics, such as multi-threading and data structures.

Detecting Cybersecurity Threats by Integrating Explainable AI with SHAP Interpretability and Strategic Data Sampling (cs.AI updates on arXiv.org)

arXiv:2602.19087v1 Announce Type: cross

Abstract: The critical need for transparent and trustworthy machine learning in cybersecurity operations drives the development of this integrated Explainable AI (XAI) framework. Our methodology addresses three fundamental challenges in deploying AI for threat detection: handling massive datasets through Strategic Sampling Methodology that preserves class distributions while enabling efficient model development; ensuring experimental rigor via Automated Data Leakage Prevention that systematically identifies and removes contaminated features; and providing operational transparency through Integrated XAI Implementation using SHAP analysis for model-agnostic interpretability across algorithms. Applied to the CIC-IDS2017 dataset, our approach maintains detection efficacy while reducing computational overhead and delivering actionable explanations for security analysts. The framework demonstrates that explainability, computational efficiency, and experimental integrity can be simultaneously achieved, providing a robust foundation for deploying trustworthy AI systems in security operations centers where decision transparency is paramount.
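The abstract does not say how its sampling step is implemented. As a rough illustration of what "sampling that preserves class distributions" can mean, here is a minimal sketch of plain stratified sampling in standard-library Python. The function name `stratified_sample`, the toy records, and the 10 percent fraction are all assumptions made for this example and are not taken from the paper.

```python
import random
from collections import Counter, defaultdict

def stratified_sample(rows, label_of, fraction, seed=0):
    """Draw roughly `fraction` of the rows from every class separately."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for row in rows:
        by_class[label_of(row)].append(row)
    sample = []
    for members in by_class.values():
        # Keep at least one row per class so rare classes never vanish.
        k = max(1, round(len(members) * fraction))
        sample.extend(rng.sample(members, k))
    return sample

# Hypothetical toy data: 900 benign and 100 attack flow records.
rows = [("benign", i) for i in range(900)] + [("attack", i) for i in range(100)]
subset = stratified_sample(rows, label_of=lambda r: r[0], fraction=0.1)
counts = Counter(label for label, _ in subset)
```

Sampling each class on its own keeps the 9:1 benign-to-attack ratio of the full set in the subset, which a single uniform draw over all rows would only match approximately.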

AI Bots Formed a Cartel. No One Told Them To. (Towards Data Science)

Inside the research that shows algorithmic price-fixing isn’t a bug in the code. It’s a feature of the math.

The post AI Bots Formed a Cartel. No One Told Them To. appeared first on Towards Data Science.

COBOL modernisation just got an AI shortcut–and the market noticed (AI News)

It’s an open secret (that is, not many people seem to know) that the institutions keeping the global financial system turning over run code that is ancient, barely understood, and frighteningly hard to replace. Now, AI is finally making that problem solvable – and the market has responded with a reality check for one of

The post COBOL modernisation just got an AI shortcut–and the market noticed appeared first on AI News.
