Energy Consumption of Dataframe Libraries for End-to-End Deep Learning Pipelines:A Comparative Analysiscs.AI updates on arXiv.org arXiv:2511.08644v2 Announce Type: replace-cross

Abstract: This paper presents a detailed comparative analysis of the performance of three major Python data manipulation libraries – Pandas, Polars, and Dask – specifically when embedded within complete deep learning (DL) training and inference pipelines. The research bridges a gap in existing literature by studying how these libraries interact with substantial GPU workloads during critical phases like data loading, preprocessing, and batch feeding. The authors measured key performance indicators including runtime, memory usage, disk usage, and energy consumption (both CPU and GPU) across various machine learning models and datasets.

arXiv:2511.08644v2 Announce Type: replace-cross

Abstract: This paper presents a detailed comparative analysis of the performance of three major Python data manipulation libraries – Pandas, Polars, and Dask – specifically when embedded within complete deep learning (DL) training and inference pipelines. The research bridges a gap in existing literature by studying how these libraries interact with substantial GPU workloads during critical phases like data loading, preprocessing, and batch feeding. The authors measured key performance indicators including runtime, memory usage, disk usage, and energy consumption (both CPU and GPU) across various machine learning models and datasets. Read More

Preference Learning with Lie Detectors can Induce Honesty or Evasioncs.AI updates on arXiv.org arXiv:2505.13787v2 Announce Type: replace-cross

Abstract: As AI systems become more capable, deceptive behaviors can undermine evaluation and mislead users at deployment. Recent work has shown that lie detectors can accurately classify deceptive behavior, but they are not typically used in the training pipeline due to concerns around contamination and objective hacking. We examine these concerns by incorporating a lie detector into the labelling step of LLM post-training and evaluating whether the learned policy is genuinely more honest, or instead learns to fool the lie detector while remaining deceptive. Using DolusChat, a novel 65k-example dataset with paired truthful/deceptive responses, we identify three key factors that determine the honesty of learned policies: amount of exploration during preference learning, lie detector accuracy, and KL regularization strength. We find that preference learning with lie detectors and GRPO can lead to policies which evade lie detectors, with deception rates of over 85%. However, if the lie detector true positive rate (TPR) or KL regularization is sufficiently high, GRPO learns honest policies. In contrast, off-policy algorithms (DPO) consistently lead to deception rates under 25% for realistic TPRs. Our results illustrate a more complex picture than previously assumed: depending on the context, lie-detector-enhanced training can be a powerful tool for scalable oversight, or a counterproductive method encouraging undetectable misalignment.

arXiv:2505.13787v2 Announce Type: replace-cross

Abstract: As AI systems become more capable, deceptive behaviors can undermine evaluation and mislead users at deployment. Recent work has shown that lie detectors can accurately classify deceptive behavior, but they are not typically used in the training pipeline due to concerns around contamination and objective hacking. We examine these concerns by incorporating a lie detector into the labelling step of LLM post-training and evaluating whether the learned policy is genuinely more honest, or instead learns to fool the lie detector while remaining deceptive. Using DolusChat, a novel 65k-example dataset with paired truthful/deceptive responses, we identify three key factors that determine the honesty of learned policies: amount of exploration during preference learning, lie detector accuracy, and KL regularization strength. We find that preference learning with lie detectors and GRPO can lead to policies which evade lie detectors, with deception rates of over 85%. However, if the lie detector true positive rate (TPR) or KL regularization is sufficiently high, GRPO learns honest policies. In contrast, off-policy algorithms (DPO) consistently lead to deception rates under 25% for realistic TPRs. Our results illustrate a more complex picture than previously assumed: depending on the context, lie-detector-enhanced training can be a powerful tool for scalable oversight, or a counterproductive method encouraging undetectable misalignment. Read More

SlotMatch: Distilling Object-Centric Representations for Unsupervised Video Segmentationcs.AI updates on arXiv.org arXiv:2508.03411v3 Announce Type: replace-cross

Abstract: Unsupervised video segmentation is a challenging computer vision task, especially due to the lack of supervisory signals coupled with the complexity of visual scenes. To overcome this challenge, state-of-the-art models based on slot attention often have to rely on large and computationally expensive neural architectures. To this end, we propose a simple knowledge distillation framework that effectively transfers object-centric representations to a lightweight student. The proposed framework, called SlotMatch, aligns corresponding teacher and student slots via the cosine similarity, requiring no additional distillation objectives or auxiliary supervision. The simplicity of SlotMatch is confirmed via theoretical and empirical evidence, both indicating that integrating additional losses is redundant. We conduct experiments on three datasets to compare the state-of-the-art teacher model, SlotContrast, with our distilled student. The results show that our student based on SlotMatch matches and even outperforms its teacher, while using 3.6x less parameters and running up to 2.7x faster. Moreover, our student surpasses all other state-of-the-art unsupervised video segmentation models.

arXiv:2508.03411v3 Announce Type: replace-cross

Abstract: Unsupervised video segmentation is a challenging computer vision task, especially due to the lack of supervisory signals coupled with the complexity of visual scenes. To overcome this challenge, state-of-the-art models based on slot attention often have to rely on large and computationally expensive neural architectures. To this end, we propose a simple knowledge distillation framework that effectively transfers object-centric representations to a lightweight student. The proposed framework, called SlotMatch, aligns corresponding teacher and student slots via the cosine similarity, requiring no additional distillation objectives or auxiliary supervision. The simplicity of SlotMatch is confirmed via theoretical and empirical evidence, both indicating that integrating additional losses is redundant. We conduct experiments on three datasets to compare the state-of-the-art teacher model, SlotContrast, with our distilled student. The results show that our student based on SlotMatch matches and even outperforms its teacher, while using 3.6x less parameters and running up to 2.7x faster. Moreover, our student surpasses all other state-of-the-art unsupervised video segmentation models. Read More

Towards Automatic Evaluation and Selection of PHI De-identification Models via Multi-Agent Collaborationcs.AI updates on arXiv.org arXiv:2510.16194v2 Announce Type: replace

Abstract: Protected health information (PHI) de-identification is critical for enabling the safe reuse of clinical notes, yet evaluating and comparing PHI de-identification models typically depends on costly, small-scale expert annotations. We present TEAM-PHI, a multi-agent evaluation and selection framework that uses large language models (LLMs) to automatically measure de-identification quality and select the best-performing model without heavy reliance on gold labels. TEAM-PHI deploys multiple Evaluation Agents, each independently judging the correctness of PHI extractions and outputting structured metrics. Their results are then consolidated through an LLM-based majority voting mechanism that integrates diverse evaluator perspectives into a single, stable, and reproducible ranking. Experiments on a real-world clinical note corpus demonstrate that TEAM-PHI produces consistent and accurate rankings: despite variation across individual evaluators, LLM-based voting reliably converges on the same top-performing systems. Further comparison with ground-truth annotations and human evaluation confirms that the framework’s automated rankings closely match supervised evaluation. By combining independent evaluation agents with LLM majority voting, TEAM-PHI offers a practical, secure, and cost-effective solution for automatic evaluation and best-model selection in PHI de-identification, even when ground-truth labels are limited.

arXiv:2510.16194v2 Announce Type: replace

Abstract: Protected health information (PHI) de-identification is critical for enabling the safe reuse of clinical notes, yet evaluating and comparing PHI de-identification models typically depends on costly, small-scale expert annotations. We present TEAM-PHI, a multi-agent evaluation and selection framework that uses large language models (LLMs) to automatically measure de-identification quality and select the best-performing model without heavy reliance on gold labels. TEAM-PHI deploys multiple Evaluation Agents, each independently judging the correctness of PHI extractions and outputting structured metrics. Their results are then consolidated through an LLM-based majority voting mechanism that integrates diverse evaluator perspectives into a single, stable, and reproducible ranking. Experiments on a real-world clinical note corpus demonstrate that TEAM-PHI produces consistent and accurate rankings: despite variation across individual evaluators, LLM-based voting reliably converges on the same top-performing systems. Further comparison with ground-truth annotations and human evaluation confirms that the framework’s automated rankings closely match supervised evaluation. By combining independent evaluation agents with LLM majority voting, TEAM-PHI offers a practical, secure, and cost-effective solution for automatic evaluation and best-model selection in PHI de-identification, even when ground-truth labels are limited. Read More

PyTorch Tutorial for Beginners: Build a Multiple Regression Model from ScratchTowards Data Science Hands-on PyTorch: Building a 3-layer neural network for multiple regression

The post PyTorch Tutorial for Beginners: Build a Multiple Regression Model from Scratch appeared first on Towards Data Science.

Hands-on PyTorch: Building a 3-layer neural network for multiple regression

The post PyTorch Tutorial for Beginners: Build a Multiple Regression Model from Scratch appeared first on Towards Data Science. Read More

How to Write Readable Python Functions Even If You’re a BeginnerKDnuggets Writing readable Python functions doesn’t have to be difficult. This guide shows simple beginner-friendly techniques to make your code clear, consistent, and easy for others to understand.

Writing readable Python functions doesn’t have to be difficult. This guide shows simple beginner-friendly techniques to make your code clear, consistent, and easy for others to understand. Read More

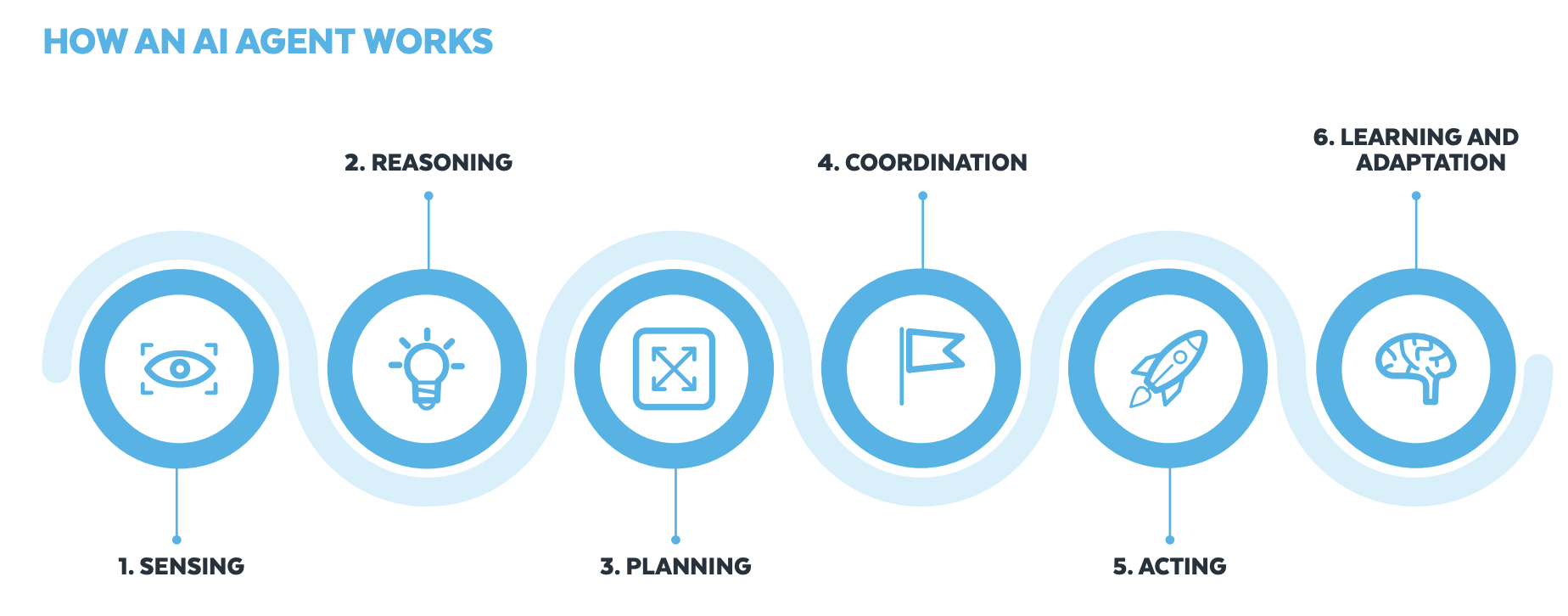

How to Perform Agentic Information RetrievalTowards Data Science Learn how to utilize AI agents to find information in your document corpus

The post How to Perform Agentic Information Retrieval appeared first on Towards Data Science.

Learn how to utilize AI agents to find information in your document corpus

The post How to Perform Agentic Information Retrieval appeared first on Towards Data Science. Read More

Build an agentic solution with Amazon Nova, Snowflake, and LangGraphArtificial Intelligence In this post, we cover how you can use tools from Snowflake AI Data Cloud and Amazon Web Services (AWS) to build generative AI solutions that organizations can use to make data-driven decisions, increase operational efficiency, and ultimately gain a competitive edge.

In this post, we cover how you can use tools from Snowflake AI Data Cloud and Amazon Web Services (AWS) to build generative AI solutions that organizations can use to make data-driven decisions, increase operational efficiency, and ultimately gain a competitive edge. Read More

Developing Human Sexuality in the Age of AITowards Data Science How we learn is changing with generative AI — what does that mean for sex education, consent, and responsibility?

The post Developing Human Sexuality in the Age of AI appeared first on Towards Data Science.

How we learn is changing with generative AI — what does that mean for sex education, consent, and responsibility?

The post Developing Human Sexuality in the Age of AI appeared first on Towards Data Science. Read More

Using Spectrum fine-tuning to improve FM training efficiency on Amazon SageMaker AIArtificial Intelligence In this post you will learn how to use Spectrum to optimize resource use and shorten training times without sacrificing quality, as well as how to implement Spectrum fine-tuning with Amazon SageMaker AI training jobs. We will also discuss the tradeoff between QLoRA and Spectrum fine-tuning, showing that while QLoRA is more resource efficient, Spectrum results in higher performance overall.

In this post you will learn how to use Spectrum to optimize resource use and shorten training times without sacrificing quality, as well as how to implement Spectrum fine-tuning with Amazon SageMaker AI training jobs. We will also discuss the tradeoff between QLoRA and Spectrum fine-tuning, showing that while QLoRA is more resource efficient, Spectrum results in higher performance overall. Read More