The Machine Learning “Advent Calendar” Day 13: LASSO and Ridge Regression in Excel (Towards Data Science)

Ridge and Lasso regression are often perceived as more complex versions of linear regression. In reality, the prediction model remains exactly the same. What changes is the training objective. By adding a penalty on the coefficients, regularization forces the model to choose more stable solutions, especially when features are correlated. Implementing Ridge and Lasso step by step in Excel makes this idea explicit: regularization does not add complexity, it adds preference.

The post The Machine Learning “Advent Calendar” Day 13: LASSO and Ridge Regression in Excel appeared first on Towards Data Science.
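The point that only the training objective changes can be sketched in a few lines of Python. This is a minimal illustration using NumPy, not the article's Excel workbook: the function names (`predict`, `objective`, `fit_ridge`), the penalty weight `alpha`, and the toy data are all assumptions made for the example. Lasso has no closed-form solution, so only its objective is shown here.

```python
import numpy as np


def predict(X, w, b):
    """Linear prediction; identical for plain, Ridge, and Lasso regression."""
    return X @ w + b


def objective(X, y, w, b, alpha=0.0, penalty=None):
    """Mean squared error plus an optional penalty on the coefficients."""
    mse = np.mean((y - predict(X, w, b)) ** 2)
    if penalty == "ridge":
        return mse + alpha * np.sum(w ** 2)      # L2 penalty
    if penalty == "lasso":
        return mse + alpha * np.sum(np.abs(w))   # L1 penalty
    return mse


def fit_ridge(X, y, alpha):
    """Closed-form minimizer of the ridge objective above.

    The intercept is left unpenalized by centering X and y first.
    """
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean
    n, p = X.shape
    w = np.linalg.solve(Xc.T @ Xc / n + alpha * np.eye(p), Xc.T @ yc / n)
    b = y_mean - x_mean @ w
    return w, b


# Hypothetical toy data: two almost identical (highly correlated) features.
rng = np.random.default_rng(0)
x1 = rng.normal(size=50)
X = np.column_stack([x1, x1 + 0.01 * rng.normal(size=50)])
y = 3 * x1 + 0.1 * rng.normal(size=50)

w_ols, b_ols = fit_ridge(X, y, alpha=0.0)      # no penalty: ordinary least squares
w_ridge, b_ridge = fit_ridge(X, y, alpha=1.0)  # penalized: smaller coefficient norm
```

Comparing `w_ols` with `w_ridge` on correlated features like these shows the "preference" at work: both models predict with the same formula, but the penalized fit settles on coefficients with a smaller norm.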

NeurIPS 2025 Best Paper Review: Qwen’s Systematic Exploration of Attention Gating (Towards Data Science)

This one small change can improve training stability, allow larger learning rates, and improve scaling properties.

The post NeurIPS 2025 Best Paper Review: Qwen’s Systematic Exploration of Attention Gating appeared first on Towards Data Science.

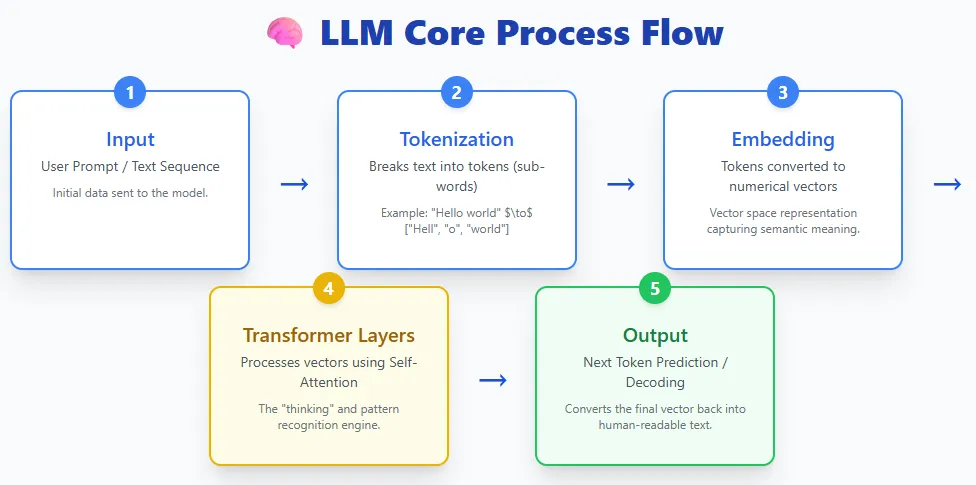

5 AI Model Architectures Every AI Engineer Should Know (MarkTechPost)

Everyone talks about LLMs, but today’s AI ecosystem is far bigger than just language models. Behind the scenes, a whole family of specialized architectures is quietly transforming how machines see, plan, act, segment, represent concepts, and even run efficiently on small devices. Each of these models solves a different part of the intelligence puzzle, and together

The post 5 AI Model Architectures Every AI Engineer Should Know appeared first on MarkTechPost.

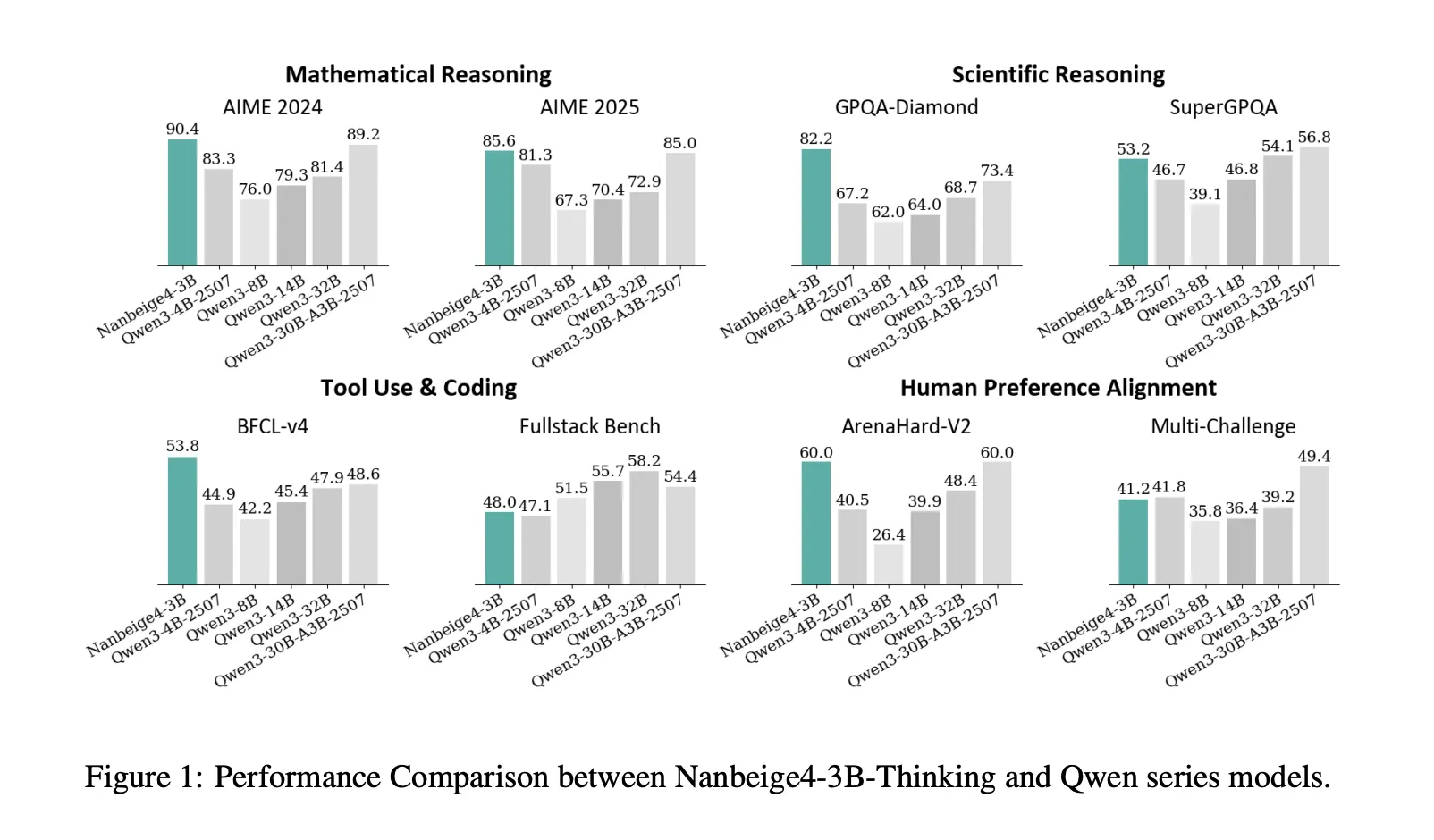

Nanbeige4-3B-Thinking: How a 23T Token Pipeline Pushes 3B Models Past 30B Class Reasoning (MarkTechPost)

Can a 3B model deliver 30B class reasoning by fixing the training recipe instead of scaling parameters? Nanbeige LLM Lab at Boss Zhipin has released Nanbeige4-3B, a 3B parameter small language model family trained with an unusually heavy emphasis on data quality, curriculum scheduling, distillation, and reinforcement learning. The research team ships 2 primary checkpoints,

The post Nanbeige4-3B-Thinking: How a 23T Token Pipeline Pushes 3B Models Past 30B Class Reasoning appeared first on MarkTechPost.

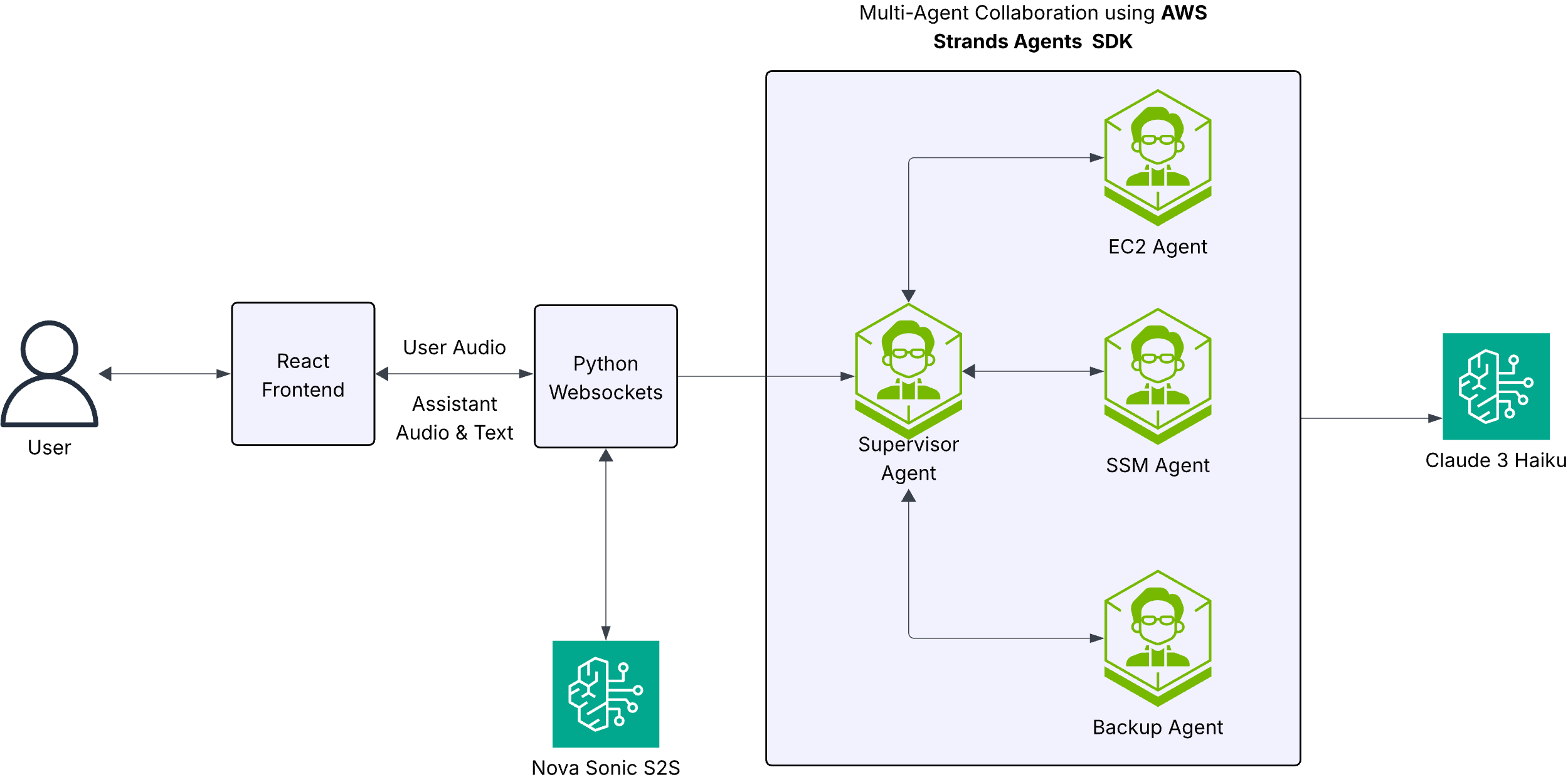

Building a voice-driven AWS assistant with Amazon Nova Sonic (Artificial Intelligence)

In this post, we explore how to build a sophisticated voice-powered AWS operations assistant using Amazon Nova Sonic for speech processing and Strands Agents for multi-agent orchestration. This solution demonstrates how natural language voice interactions can transform cloud operations, making AWS services more accessible and operations more efficient.

Improved Gemini audio models for powerful voice experiences (Google DeepMind News)

The Machine Learning “Advent Calendar” Day 12: Logistic Regression in Excel (Towards Data Science)

In this article, we rebuild Logistic Regression step by step directly in Excel.

Starting from a binary dataset, we explore why linear regression struggles as a classifier, how the logistic function fixes these issues, and how log-loss naturally appears from the likelihood.

With a transparent gradient-descent table, you can watch the model learn at each iteration—making the whole process intuitive, visual, and surprisingly satisfying.

The post The Machine Learning “Advent Calendar” Day 12: Logistic Regression in Excel appeared first on Towards Data Science.
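The gradient-descent table described in this summary can be approximated outside Excel with a short Python sketch. It assumes a single feature, a fixed learning rate, and made-up data; the names `sigmoid`, `log_loss`, and `fit_logistic` are chosen for this example and are not taken from the article. Each entry of `history` plays the role of one row of the table.

```python
import numpy as np


def sigmoid(z):
    """Logistic function mapping any real score to a probability in (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))


def log_loss(y, p, eps=1e-12):
    """Average negative log-likelihood of binary labels y under probabilities p."""
    p = np.clip(p, eps, 1 - eps)  # avoid log(0)
    return -np.mean(y * np.log(p) + (1 - y) * np.log(1 - p))


def fit_logistic(x, y, lr=0.1, n_iter=200):
    """Gradient descent on the log-loss for the model p = sigmoid(a * x + b)."""
    a, b = 0.0, 0.0
    history = []  # log-loss at the start of each iteration
    for _ in range(n_iter):
        p = sigmoid(a * x + b)
        history.append(log_loss(y, p))
        grad_a = np.mean((p - y) * x)  # d(log-loss)/da
        grad_b = np.mean(p - y)        # d(log-loss)/db
        a -= lr * grad_a
        b -= lr * grad_b
    return a, b, history


# Hypothetical binary dataset with some overlap between the two classes.
x = np.array([-2.0, -1.5, -1.0, -0.5, 0.5, 1.0, 1.5, 2.0])
y = np.array([0.0, 0.0, 0.0, 1.0, 0.0, 1.0, 1.0, 1.0])

a, b, history = fit_logistic(x, y)
```

Printing `history` row by row gives the same view as the spreadsheet: the log-loss starts at ln 2 (all predictions equal to 0.5) and shrinks as the slope `a` grows.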

How to Write Efficient Python Data Classes (KDnuggets)

Writing efficient Python data classes cuts boilerplate while keeping your code clean. This article shows you how.

AI in 2026: Experimental AI concludes as autonomous systems rise (AI News)

Generative AI’s experimental phase is concluding, making way for truly autonomous systems in 2026 that act rather than merely summarise. In 2026, the focus will shift from model parameters to agency, energy efficiency, and the ability to navigate complex industrial environments. The next twelve months represent a departure from chatbots toward autonomous systems executing

The post AI in 2026: Experimental AI concludes as autonomous systems rise appeared first on AI News.

Decentralized Computation: The Hidden Principle Behind Deep Learning (Towards Data Science)

Most breakthroughs in deep learning, from simple neural networks to large language models, are built upon a principle that is much older than AI itself: decentralization. Instead of relying on a powerful “central planner” coordinating and commanding the behaviors of other components, modern deep-learning-based AI models succeed because many simple units interact locally

The post Decentralized Computation: The Hidden Principle Behind Deep Learning appeared first on Towards Data Science.
