Zero-shot Interactive Perception

cs.AI updates on arXiv.org

arXiv:2602.18374v1 Announce Type: cross

Abstract: Interactive perception (IP) enables robots to extract hidden information in their workspace and execute manipulation plans by physically interacting with objects and altering the state of the environment — crucial for resolving occlusions and ambiguity in complex, partially observable scenarios. We present Zero-Shot IP (ZS-IP), a novel framework that couples multi-strategy manipulation (pushing and grasping) with a memory-driven Vision Language Model (VLM) to guide robotic interactions and resolve semantic queries. ZS-IP integrates three key components: (1) an Enhanced Observation (EO) module that augments the VLM’s visual perception with both conventional keypoints and our proposed pushlines — a novel 2D visual augmentation tailored to pushing actions, (2) a memory-guided action module that reinforces semantic reasoning through context lookup, and (3) a robotic controller that executes pushing, pulling, or grasping based on VLM output. Unlike grid-based augmentations optimized for pick-and-place, pushlines capture affordances for contact-rich actions, substantially improving pushing performance. We evaluate ZS-IP on a 7-DOF Franka Panda arm across diverse scenes with varying occlusions and task complexities. Our experiments demonstrate that ZS-IP outperforms passive and viewpoint-based perception techniques such as Mark-Based Visual Prompting (MOKA), particularly in pushing tasks, while preserving the integrity of non-target elements.

UniReason 1.0: A Unified Reasoning Framework for World Knowledge Aligned Image Generation and Editing

cs.AI updates on arXiv.org

arXiv:2602.02437v4 Announce Type: replace-cross

Abstract: Unified multimodal models often struggle with complex synthesis tasks that demand deep reasoning, and typically treat text-to-image generation and image editing as isolated capabilities rather than interconnected reasoning steps. To address this, we propose UniReason, a unified framework that harmonizes these two tasks through two complementary reasoning paradigms. We incorporate world knowledge-enhanced textual reasoning into generation to infer implicit knowledge, and leverage editing capabilities for fine-grained editing-like visual refinement to further correct visual errors via self-reflection. This approach unifies generation and editing within a shared architecture, mirroring the human cognitive process of planning followed by refinement. We support this framework by systematically constructing a large-scale reasoning-centric dataset (~300k samples) covering five major knowledge domains (e.g., cultural commonsense, physics, etc.) for textual reasoning, alongside an agent-generated corpus for visual refinement. Extensive experiments demonstrate that UniReason achieves advanced performance on reasoning-intensive benchmarks such as WISE, KrisBench and UniREditBench, while maintaining superior general synthesis capabilities.

Hitachi bets on industrial expertise to win the physical AI race

AI News

Physical AI–the branch of artificial intelligence that controls robots and industrial machinery in the real world–has a hierarchy problem. At the top, OpenAI and Google are scaling multimodal foundation models. In the middle, Nvidia is building the platforms and tools for physical AI development. And then there is a third camp: industrial manufacturers like Hitachi

The post Hitachi bets on industrial expertise to win the physical AI race appeared first on AI News.

Taalas is replacing programmable GPUs with hardwired AI chips to achieve 17,000 tokens per second for ubiquitous inference

MarkTechPost

In the high-stakes world of AI infrastructure, the industry has operated under a singular assumption: flexibility is king. We build general-purpose GPUs because AI models change every week, and we need programmable silicon that can adapt to the next research breakthrough. But Taalas, the Toronto-based startup, thinks that flexibility is exactly what’s holding AI back.

The post Taalas is replacing programmable GPUs with hardwired AI chips to achieve 17,000 tokens per second for ubiquitous inference appeared first on MarkTechPost.

The Reality of Vibe Coding: AI Agents and the Security Debt Crisis

Towards Data Science

Why optimizing for speed over safety is leaving applications vulnerable, and how to fix it.

The post The Reality of Vibe Coding: AI Agents and the Security Debt Crisis appeared first on Towards Data Science.

Role Intelligence

Chief AI Officer: At a Glance

IBM IBV 2025 CAIO Study IAPP AIGP Tech Jacks 20-Role Table 60-Posting Doc C Analysis

Chief AI Officer ▲ Very High Demand

The CAIO sets enterprise AI strategy, governs adoption, and answers to the board. 26% of organizations globally now have one, up from 11% two […]

Role Intelligence

AI Risk Manager: At a Glance

IAPP 2025-26 ZipRecruiter NIST AI RMF

AI Risk Manager ⬆ Very High

Identifies, measures, and manages technical and operational AI vulnerabilities using model risk management frameworks. Financial services is the dominant employer. Very high demand driven by SR 11-7 expansion to AI/ML and EU AI Act […]

Is There a Community Edition of Palantir? Meet OpenPlanter: An Open Source Recursive AI Agent for Your Micro Surveillance Use Cases

MarkTechPost

The balance of power in the digital age is shifting. While governments and large corporations have long used data to track individuals, a new open-source project called OpenPlanter is giving that power back to the public. Created by a developer ‘Shin Megami Boson’, OpenPlanter is a recursive-language-model investigation agent. Its goal is simple: help you

The post Is There a Community Edition of Palantir? Meet OpenPlanter: An Open Source Recursive AI Agent for Your Micro Surveillance Use Cases appeared first on MarkTechPost.

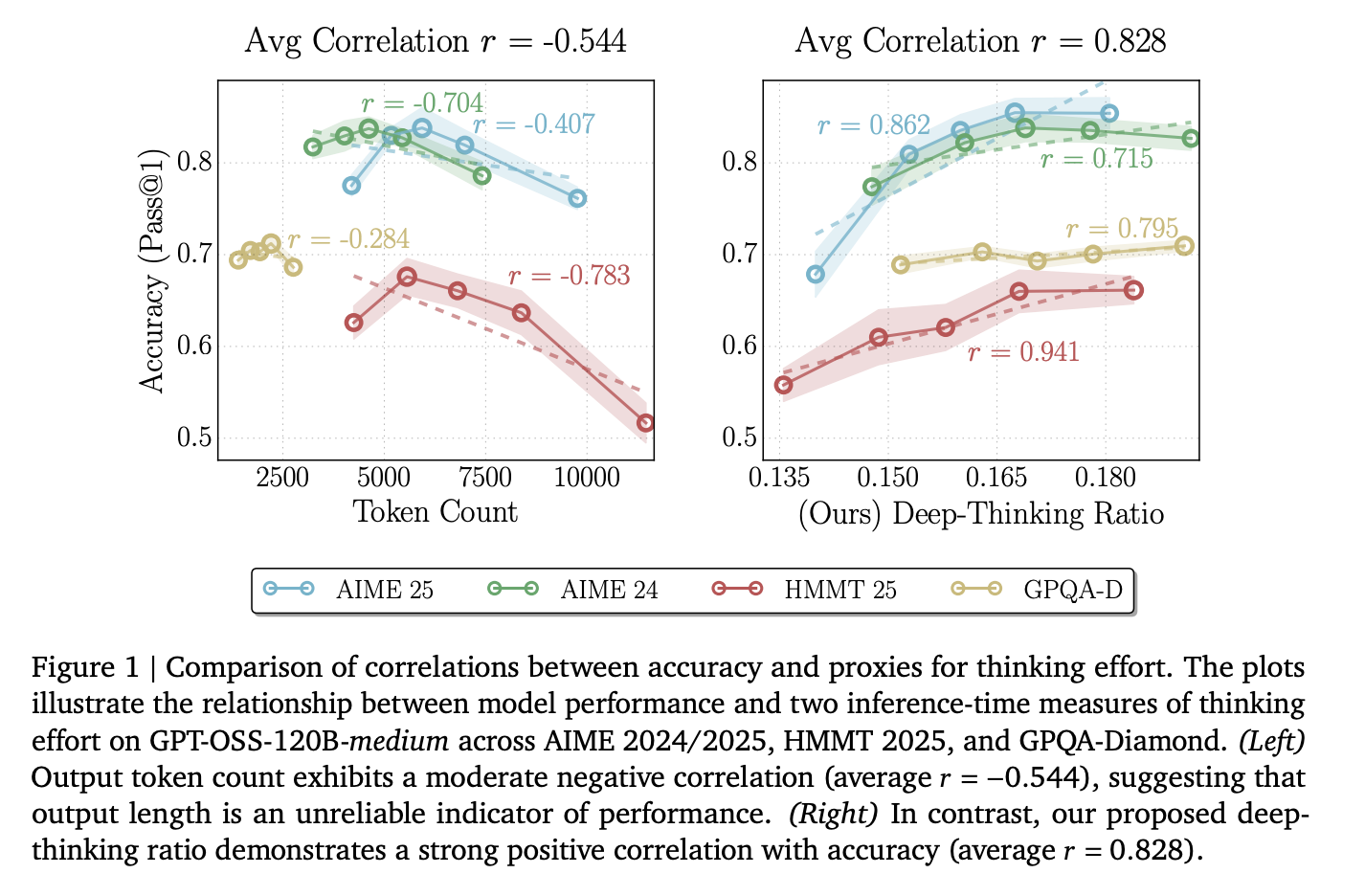

A New Google AI Research Proposes Deep-Thinking Ratio to Improve LLM Accuracy While Cutting Total Inference Costs by Half

MarkTechPost

For the last few years, the AI world has followed a simple rule: if you want a Large Language Model (LLM) to solve a harder problem, make its Chain-of-Thought (CoT) longer. But new research from the University of Virginia and Google proves that ‘thinking long’ is not the same as ‘thinking hard’. The research team

The post A New Google AI Research Proposes Deep-Thinking Ratio to Improve LLM Accuracy While Cutting Total Inference Costs by Half appeared first on MarkTechPost.

How to Design an Agentic Workflow for Tool-Driven Route Optimization with Deterministic Computation and Structured Outputs

MarkTechPost

In this tutorial, we build a production-style Route Optimizer Agent for a logistics dispatch center using the latest LangChain agent APIs. We design a tool-driven workflow in which the agent reliably computes distances, ETAs, and optimal routes rather than guessing, and we enforce structured outputs to make the results directly usable in downstream systems. We

The post How to Design an Agentic Workflow for Tool-Driven Route Optimization with Deterministic Computation and Structured Outputs appeared first on MarkTechPost.
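
As a rough illustration of the "compute rather than guess" idea in the summary above, the sketch below shows what a deterministic routing tool with a structured result might look like in plain Python. It is not the tutorial's code and does not use LangChain. The names `haversine_km`, `best_route`, and `RoutePlan`, the fixed 40 km/h average speed, and the brute-force search over visiting orders are all assumptions made for this example, and the exhaustive search is only practical for a handful of stops.

```python
import itertools
import json
import math
from dataclasses import asdict, dataclass

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(a, b):
    """Great-circle distance in km between two (lat, lon) points given in degrees."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


@dataclass
class RoutePlan:
    """Structured result that a downstream system could consume as JSON."""
    stops: list
    distance_km: float
    eta_minutes: float


def best_route(depot, sites, speed_kmh=40.0):
    """Try every visiting order; the route starts and ends at the depot.

    `sites` maps a stop name to its (lat, lon). The constant speed is an
    assumption of this sketch, not a value taken from the tutorial.
    """
    if speed_kmh <= 0:
        raise ValueError("speed_kmh must be positive")
    best_order, best_km = None, math.inf
    for order in itertools.permutations(sites):
        path = [depot, *(sites[name] for name in order), depot]
        km = sum(haversine_km(p, q) for p, q in zip(path, path[1:]))
        if km < best_km:
            best_order, best_km = order, km
    eta = best_km / speed_kmh * 60
    return RoutePlan(list(best_order), round(best_km, 2), round(eta, 1))


if __name__ == "__main__":
    # Hypothetical coordinates, chosen only to exercise the function.
    plan = best_route((0.0, 0.0), {"A": (0.0, 1.0), "B": (0.0, 2.0)})
    print(json.dumps(asdict(plan)))
```

An agent framework would typically expose a function like `best_route` as a tool and return the serialized `RoutePlan`, so the numbers in the final answer come from computation and not from the language model's own estimate.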
