OpenAI restructures, enters ‘next chapter’ of Microsoft partnership
AI News

OpenAI has completed a major reorganisation and, in the same breath, signed a new definitive partnership agreement with Microsoft. Starting with OpenAI’s reorganisation, the aim is to solidify the nonprofit’s control over the for-profit business and establish the newly named OpenAI Foundation as a global philanthropic powerhouse, holding equity in the commercial arm valued at

The post OpenAI restructures, enters ‘next chapter’ of Microsoft partnership appeared first on AI News.

Breakthrough optical processor lets AI compute at the speed of light
Artificial Intelligence News - ScienceDaily

Researchers at Tsinghua University developed the Optical Feature Extraction Engine (OFE2), an optical engine that processes data at 12.5 GHz using light rather than electricity. Its integrated diffraction and data preparation modules enable unprecedented speed and efficiency for AI tasks. Demonstrations in imaging and trading showed improved accuracy, lower latency, and reduced power demand. This innovation pushes optical computing toward real-world, high-performance AI.

OpenAI’s bold India play: Free ChatGPT Go access
AI News

OpenAI just made its biggest bet on India yet. Starting November 4, the company will hand out free year-long access to ChatGPT Go, a move that puts every marketing executive on notice about how aggressively AI companies are fighting for the world’s fastest-growing digital market. OpenAI will offer its ChatGPT Go plan to users

The post OpenAI’s bold India play: Free ChatGPT Go access appeared first on AI News.

Exploration through Generation: Applying GFlowNets to Structured Search
cs.AI updates on arXiv.org
arXiv:2510.21886v1 Announce Type: new

Abstract: This work applies Generative Flow Networks (GFlowNets) to three graph optimization problems: the Traveling Salesperson Problem, Minimum Spanning Tree, and Shortest Path. GFlowNets are generative models that learn to sample solutions proportionally to a reward function. The models are trained using the Trajectory Balance loss to build solutions sequentially, selecting edges for spanning trees, nodes for paths, and cities for tours. Experiments on benchmark instances of varying sizes show that GFlowNets learn to find optimal solutions. For each problem type, multiple graph configurations with different numbers of nodes were tested. The generated solutions match those from classical algorithms (Dijkstra for shortest path, Kruskal for spanning trees, and exact solvers for TSP). Training convergence depends on problem complexity, with the number of episodes required for loss stabilization increasing as graph size grows. Once training converges, the generated solutions match known optima from classical algorithms across the tested instances. This work demonstrates that generative models can solve combinatorial optimization problems through learned policies. The main advantage of this learning-based approach is computational scalability: while classical algorithms have fixed complexity per instance, GFlowNets amortize computation through training. With sufficient computational resources, the framework could potentially scale to larger problem instances where classical exact methods become infeasible.
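
For readers unfamiliar with the Trajectory Balance loss named in the abstract, the following is a minimal sketch of its standard form for one complete trajectory: the squared difference between log Z plus the summed forward log-probabilities and the log-reward plus the summed backward log-probabilities. The three candidate tours, their lengths, and the reward `exp(-length)` are hypothetical illustrations, not the paper's setup, and each trajectory is collapsed into a single forward step for brevity.

```python
import math

def trajectory_balance_loss(log_z, log_pf_steps, log_reward, log_pb_steps=None):
    """Squared Trajectory Balance residual for one complete trajectory.

    log_pb_steps defaults to zeros, which is valid when every partial
    solution has exactly one parent (assumed here for sequential builds).
    """
    if log_pb_steps is None:
        log_pb_steps = [0.0] * len(log_pf_steps)
    residual = log_z + sum(log_pf_steps) - log_reward - sum(log_pb_steps)
    return residual ** 2

# Hypothetical candidate tours and lengths; shorter tours earn higher reward.
tour_lengths = {"A-B-C-D": 4.0, "A-C-B-D": 5.0, "A-B-D-C": 6.0}
rewards = {tour: math.exp(-length) for tour, length in tour_lengths.items()}
z = sum(rewards.values())  # true partition function of this toy problem

# A sampler that draws each tour with probability R/Z has (near) zero loss.
ideal_losses = [
    trajectory_balance_loss(math.log(z), [math.log(r / z)], math.log(r))
    for r in rewards.values()
]

# A uniform sampler ignores the reward, so its loss is positive.
uniform_loss = trajectory_balance_loss(
    math.log(z), [math.log(1 / 3)], math.log(rewards["A-B-C-D"])
)

print(ideal_losses, uniform_loss)
```

In training, `log_z` and the forward log-probabilities would come from learnable parameters and the loss would be minimized by gradient descent; this sketch only evaluates the objective.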

Distribution Shift Alignment Helps LLMs Simulate Survey Response Distributions
cs.AI updates on arXiv.org
arXiv:2510.21977v1 Announce Type: new

Abstract: Large language models (LLMs) offer a promising way to simulate human survey responses, potentially reducing the cost of large-scale data collection. However, existing zero-shot methods suffer from prompt sensitivity and low accuracy, while conventional fine-tuning approaches mostly fit the training set distributions and struggle to produce results more accurate than the training set itself, which deviates from the original goal of using LLMs to simulate survey responses. Building on this observation, we introduce Distribution Shift Alignment (DSA), a two-stage fine-tuning method that aligns both the output distributions and the distribution shifts across different backgrounds. By learning how these distributions change rather than fitting training data, DSA can provide results substantially closer to the true distribution than the training data. Empirically, DSA consistently outperforms other methods on five public survey datasets. We further conduct a comprehensive comparison covering accuracy, robustness, and data savings. DSA reduces the required real data by 53.48-69.12%, demonstrating its effectiveness and efficiency in survey simulation.

Performance Trade-offs of Optimizing Small Language Models for E-Commerce
cs.AI updates on arXiv.org
arXiv:2510.21970v1 Announce Type: new

Abstract: Large Language Models (LLMs) offer state-of-the-art performance in natural language understanding and generation tasks. However, the deployment of leading commercial models for specialized tasks, such as e-commerce, is often hindered by high computational costs, latency, and operational expenses. This paper investigates the viability of smaller, open-weight models as a resource-efficient alternative. We present a methodology for optimizing a one-billion-parameter Llama 3.2 model for multilingual e-commerce intent recognition. The model was fine-tuned using Quantized Low-Rank Adaptation (QLoRA) on a synthetically generated dataset designed to mimic real-world user queries. Subsequently, we applied post-training quantization techniques, creating GPU-optimized (GPTQ) and CPU-optimized (GGUF) versions. Our results demonstrate that the specialized 1B model achieves 99% accuracy, matching the performance of the significantly larger GPT-4.1 model. A detailed performance analysis revealed critical, hardware-dependent trade-offs: while 4-bit GPTQ reduced VRAM usage by 41%, it paradoxically slowed inference by 82% on an older GPU architecture (NVIDIA T4) due to dequantization overhead. Conversely, GGUF formats on a CPU achieved a speedup of up to 18x in inference throughput and a reduction of over 90% in RAM consumption compared to the FP16 baseline. We conclude that small, properly optimized open-weight models are not just a viable but a more suitable alternative for domain-specific applications, offering state-of-the-art accuracy at a fraction of the computational cost.
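
To make the "dequantization overhead" in the abstract concrete, here is a rough sketch of 4-bit weight storage. It uses naive round-to-nearest quantization of a single weight group, which is an assumption chosen for illustration and is not GPTQ (GPTQ additionally compensates for quantization error). The weight values and all function names are hypothetical.

```python
def quantize_4bit(weights):
    """Round-to-nearest 4-bit quantization of one weight group (16 levels)."""
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / 15 or 1.0  # avoid a zero scale for constant groups
    codes = [min(15, max(0, round((w - lo) / scale))) for w in weights]
    return codes, scale, lo

def pack_nibbles(codes):
    """Store two 4-bit codes in each byte."""
    if len(codes) % 2:
        codes = codes + [0]  # pad to an even count
    return bytes((codes[i] << 4) | codes[i + 1] for i in range(0, len(codes), 2))

def dequantize_4bit(packed, scale, lo, count):
    """Unpack and rescale; this is the extra work paid at inference time."""
    codes = []
    for byte in packed:
        codes.extend((byte >> 4, byte & 0x0F))
    return [lo + code * scale for code in codes[:count]]

weights = [-0.8, -0.1, 0.0, 0.25, 0.4, 0.7]  # hypothetical weight group
codes, scale, lo = quantize_4bit(weights)
packed = pack_nibbles(codes)
restored = dequantize_4bit(packed, scale, lo, len(weights))
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(len(packed), "bytes packed vs", 2 * len(weights), "bytes in FP16")
print("max reconstruction error:", max_error)
```

The packed codes take a quarter of the FP16 footprint before counting the per-group scale and offset, but every use of a weight first requires the unpack-and-rescale step. Hardware without fast support for that step can end up slower despite the smaller memory footprint, which is consistent with the trade-off the abstract reports for the T4.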

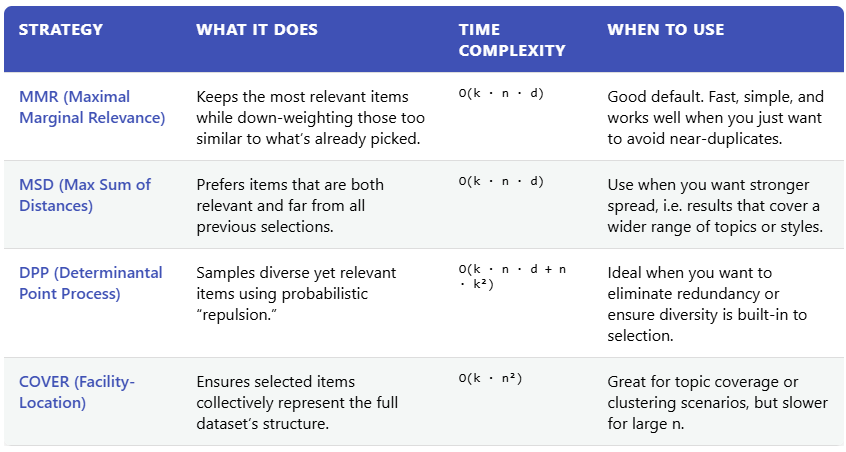

Meet Pyversity Library: How to Improve Retrieval Systems by Diversifying the Results Using Pyversity?
MarkTechPost

Pyversity is a fast, lightweight Python library designed to improve the diversity of results from retrieval systems. Retrieval often returns items that are very similar, leading to redundancy. Pyversity efficiently re-ranks these results to surface relevant but less redundant items. It offers a clear, unified API for several popular diversification strategies, including Maximal Marginal Relevance

The post Meet Pyversity Library: How to Improve Retrieval Systems by Diversifying the Results Using Pyversity? appeared first on MarkTechPost.
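
As background on Maximal Marginal Relevance, the strategy named in the teaser, the sketch below re-ranks results greedily by trading relevance against similarity to items already selected. It is a from-scratch illustration in plain Python and does not use or reproduce Pyversity's API; the function names, the `lambda_` weight, and the toy embeddings and scores are all assumptions.

```python
import math

def cosine(a, b):
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def mmr_rerank(embeddings, scores, k, lambda_=0.5):
    """Greedy MMR: pick the item maximizing
    lambda_ * relevance - (1 - lambda_) * max similarity to selected items."""
    selected = []
    remaining = list(range(len(scores)))
    while remaining and len(selected) < k:
        def mmr_value(i):
            redundancy = max(
                (cosine(embeddings[i], embeddings[j]) for j in selected),
                default=0.0,
            )
            return lambda_ * scores[i] - (1 - lambda_) * redundancy
        best = max(remaining, key=mmr_value)
        selected.append(best)
        remaining.remove(best)
    return selected

# Hypothetical results: items 0 and 1 are near-duplicates, item 2 is distinct.
embeddings = [[1.0, 0.0], [0.99, 0.1], [0.0, 1.0]]
scores = [0.9, 0.85, 0.6]

top_by_score = sorted(range(len(scores)), key=lambda i: -scores[i])[:2]
top_by_mmr = mmr_rerank(embeddings, scores, k=2)
print(top_by_score, top_by_mmr)
```

Ranking by score alone returns the two near-duplicates, while the MMR pass keeps the top item and swaps the redundant second result for the distinct one.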

How to Build a Fully Interactive, Real-Time Visualization Dashboard Using Bokeh and Custom JavaScript?
MarkTechPost

In this tutorial, we create a fully interactive, visually compelling data visualization dashboard using Bokeh. We start by turning raw data into insightful plots, then enhance them with features such as linked brushing, color gradients, and real-time filters powered by dropdowns and sliders. As we progress, we bring our dashboard to life with Custom JavaScript

The post How to Build a Fully Interactive, Real-Time Visualization Dashboard Using Bokeh and Custom JavaScript? appeared first on MarkTechPost.

Correct Reasoning Paths Visit Shared Decision Pivots
cs.AI updates on arXiv.org
arXiv:2509.21549v2 Announce Type: replace

Abstract: Chain-of-thought (CoT) reasoning exposes the intermediate thinking process of large language models (LLMs), yet verifying those traces at scale remains unsolved. In response, we introduce the idea of decision pivots: minimal, verifiable checkpoints that any correct reasoning path must visit. We hypothesize that correct reasoning paths, though stylistically diverse, converge on the same pivot set, while incorrect ones violate at least one pivot. Leveraging this property, we propose a self-training pipeline that (i) samples diverse reasoning paths and mines shared decision pivots, (ii) compresses each trace into pivot-focused short-path reasoning using an auxiliary verifier, and (iii) post-trains the model using its self-generated outputs. The proposed method aligns reasoning without ground truth reasoning data or external metrics. Experiments on standard benchmarks such as LogiQA, MedQA, and MATH500 show the effectiveness of our method.

Faster Reinforcement Learning by Freezing Slow States
cs.AI updates on arXiv.org
arXiv:2301.00922v4 Announce Type: replace

Abstract: We study infinite horizon Markov decision processes (MDPs) with “fast-slow” structure, where some state variables evolve rapidly (“fast states”) while others change more gradually (“slow states”). This structure commonly arises in practice when decisions must be made at high frequencies over long horizons, and where slowly changing information still plays a critical role in determining optimal actions. Examples include inventory control under slowly changing demand indicators or dynamic pricing with gradually shifting consumer behavior. Modeling the problem at the natural decision frequency leads to MDPs with discount factors close to one, making them computationally challenging. We propose a novel approximation strategy that “freezes” slow states during phases of lower-level planning and subsequently applies value iteration to an auxiliary upper-level MDP that evolves on a slower timescale. Freezing states for short periods of time leads to easier-to-solve lower-level problems, while a slower upper-level timescale allows for a more favorable discount factor. On the theoretical side, we analyze the regret incurred by our frozen-state approach, which leads to simple insights on how to trade off regret versus computational cost. Empirically, we benchmark our new frozen-state methods on three domains, (i) inventory control with fixed order costs, (ii) a gridworld problem with spatial tasks, and (iii) dynamic pricing with reference-price effects. We demonstrate that the new methods produce high-quality policies with significantly less computation, and we show that simply omitting slow states is often a poor heuristic.
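
A small arithmetic sketch can illustrate why discount factors close to one are costly and why a slower upper-level timescale helps. It relies on the standard contraction bound for value iteration (the error shrinks by a factor of gamma per sweep) and on the assumption that an upper-level step spanning T base steps is discounted by gamma**T. The values `gamma = 0.999` and `freeze_period = 50` are hypothetical and do not come from the paper.

```python
import math

def sweeps_to_tolerance(gamma, tolerance=1e-3):
    """Smallest n with gamma**n <= tolerance, i.e. the number of value-iteration
    sweeps needed to shrink the initial error by that factor."""
    return math.ceil(math.log(tolerance) / math.log(gamma))

gamma = 0.999        # hypothetical per-step discount at the natural decision frequency
freeze_period = 50   # hypothetical number of base steps per upper-level step

upper_gamma = gamma ** freeze_period  # assumed discount of the upper-level MDP

base_sweeps = sweeps_to_tolerance(gamma)
upper_sweeps = sweeps_to_tolerance(upper_gamma)

print(f"upper-level discount: {upper_gamma:.4f}")
print(f"sweeps at base timescale:  {base_sweeps}")
print(f"sweeps at upper timescale: {upper_sweeps}")
```

The two sweep counts are not directly comparable in cost, since each upper-level step also involves solving a lower-level problem with the slow states frozen. The sketch only shows how compounding the discount over a freeze period moves it away from one.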
