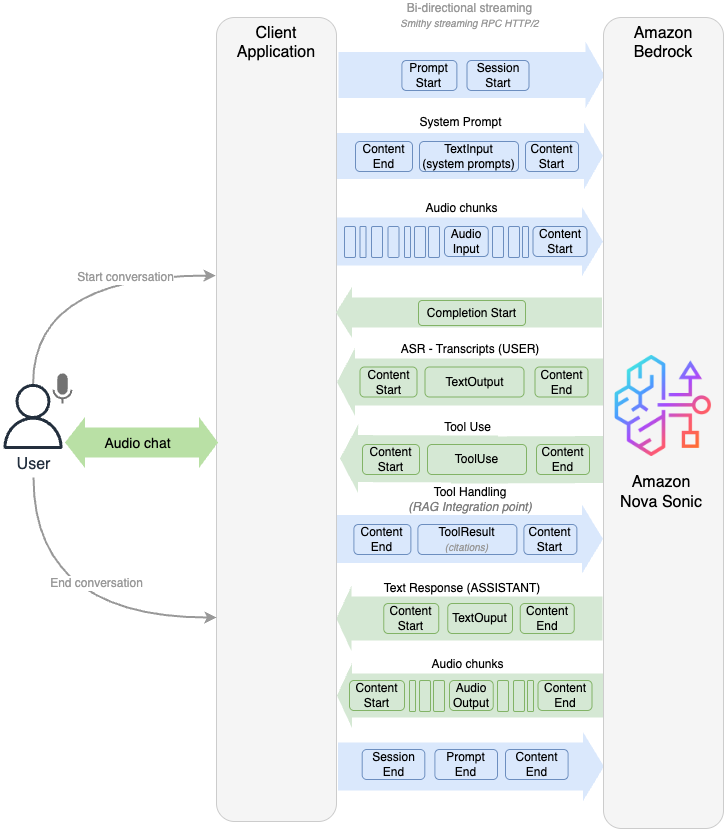

Make your web apps hands-free with Amazon Nova Sonic (Artificial Intelligence)

Graphical user interfaces have carried the torch for decades, but today’s users increasingly expect to talk to their applications. In this post we show how we added a true voice-first experience to a reference application, the Smart Todo App, turning routine task management into a fluid, hands-free conversation.

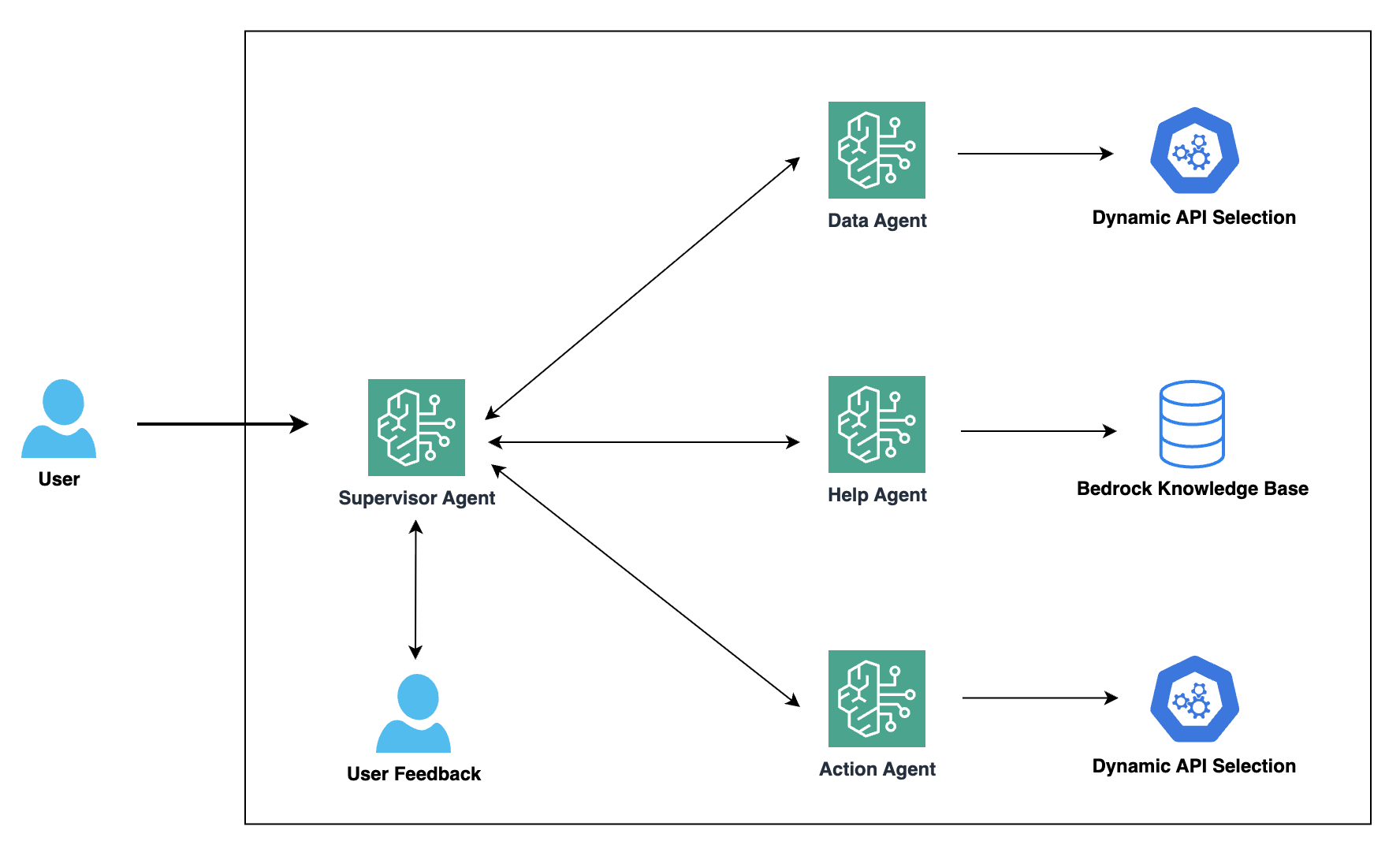

Harnessing the power of generative AI: Druva’s multi-agent copilot for streamlined data protection (Artificial Intelligence)

Generative AI is transforming the way businesses interact with their customers and revolutionizing conversational interfaces for complex IT operations. Druva, a leading provider of data security solutions, is at the forefront of this transformation. In collaboration with Amazon Web Services (AWS), Druva is developing a cutting-edge generative AI-powered multi-agent copilot that aims to redefine the customer experience in data security and cyber resilience.

“The success of an AI product depends on how intuitively users can interact with its capabilities” (Towards Data Science)

Janna Lipenkova on AI strategy, AI products, and how domain knowledge can change the entire shape of an AI solution.

The post “The success of an AI product depends on how intuitively users can interact with its capabilities” appeared first on Towards Data Science.

How to Crack Machine Learning System-Design Interviews (Towards Data Science)

A comprehensive guide to Meta, Apple, Reddit, Amazon, Google, and Snap ML system-design interviews.

The post How to Crack Machine Learning System-Design Interviews appeared first on Towards Data Science.

Building AI Automations with Google Opal (KDnuggets)

Google Opal is an experimental no-code tool from Google Labs that lets users build and share AI-powered micro-applications using natural language.

How to Design an Advanced Multi-Agent Reasoning System with spaCy Featuring Planning, Reflection, Memory, and Knowledge Graphs (MarkTechPost)

In this tutorial, we build an advanced Agentic AI system using spaCy, designed to allow multiple intelligent agents to reason, collaborate, reflect, and learn from experience. We work through the entire pipeline step by step, observing how each agent processes tasks using planning, memory, communication, and semantic reasoning. By the end, we see how the …

The post How to Design an Advanced Multi-Agent Reasoning System with spaCy Featuring Planning, Reflection, Memory, and Knowledge Graphs appeared first on MarkTechPost.

Comparing the Top 6 Agent-Native Rails for the Agentic Internet: MCP, A2A, AP2, ACP, x402, and Kite (MarkTechPost)

As AI agents move from single-app copilots to autonomous systems that browse, transact, and coordinate with each other, a new infrastructure layer is emerging underneath them. This article compares six key “agent-native rails” (MCP, A2A, AP2, ACP, x402, and Kite), focusing on how they standardize tool access, inter-agent communication, payment authorization, and settlement, …

The post Comparing the Top 6 Agent-Native Rails for the Agentic Internet: MCP, A2A, AP2, ACP, x402, and Kite appeared first on MarkTechPost.

Anthropic details cyber espionage campaign orchestrated by AI (AI News)

Security leaders face a new class of autonomous threat as Anthropic details the first cyber espionage campaign orchestrated by AI. In a report released this week, the company’s Threat Intelligence team outlined its disruption of a sophisticated operation by a Chinese state-sponsored group (an assessment made with high confidence) dubbed GTG-1002 and detected …

The post Anthropic details cyber espionage campaign orchestrated by AI appeared first on AI News.

Meet SDialog: An Open-Source Python Toolkit for Building, Simulating, and Evaluating LLM-based Conversational Agents End-to-End (MarkTechPost)

How can developers reliably generate, control, and inspect large volumes of realistic dialogue data without building a custom simulation stack every time? Meet SDialog, an open-source Python toolkit for synthetic dialogue generation, evaluation, and interpretability that targets the full conversational pipeline, from agent definition to analysis. It standardizes how a Dialog is represented and …

The post Meet SDialog: An Open-Source Python Toolkit for Building, Simulating, and Evaluating LLM-based Conversational Agents End-to-End appeared first on MarkTechPost.

AgentEvolver: Towards Efficient Self-Evolving Agent System (cs.AI updates on arXiv.org)

arXiv:2511.10395v1 Announce Type: cross

Abstract: Autonomous agents powered by large language models (LLMs) have the potential to significantly enhance human productivity by reasoning, using tools, and executing complex tasks in diverse environments. However, current approaches to developing such agents remain costly and inefficient, as they typically require manually constructed task datasets and reinforcement learning (RL) pipelines with extensive random exploration. These limitations lead to prohibitively high data-construction costs, low exploration efficiency, and poor sample utilization. To address these challenges, we present AgentEvolver, a self-evolving agent system that leverages the semantic understanding and reasoning capabilities of LLMs to drive autonomous agent learning. AgentEvolver introduces three synergistic mechanisms: (i) self-questioning, which enables curiosity-driven task generation in novel environments, reducing dependence on handcrafted datasets; (ii) self-navigating, which improves exploration efficiency through experience reuse and hybrid policy guidance; and (iii) self-attributing, which enhances sample efficiency by assigning differentiated rewards to trajectory states and actions based on their contribution. By integrating these mechanisms into a unified framework, AgentEvolver enables scalable, cost-effective, and continual improvement of agent capabilities. Preliminary experiments indicate that AgentEvolver achieves more efficient exploration, better sample utilization, and faster adaptation compared to traditional RL-based baselines.
