WorldGen: Meta reveals generative AI for interactive 3D worlds

Source: AI News

With its WorldGen system, Meta is shifting the use of generative AI for 3D worlds from creating static imagery to fully interactive assets. The main bottleneck in creating immersive spatial computing experiences – whether for consumer gaming, industrial digital twins, or employee training simulations – has long been the labour-intensive nature of 3D modelling. The

The post WorldGen: Meta reveals generative AI for interactive 3D worlds appeared first on AI News.

Natural Language Visualization and the Future of Data Analysis and Presentation

Source: Towards Data Science

Will conversational interaction replace SQL queries, KPI reports, and dashboards?

The post Natural Language Visualization and the Future of Data Analysis and Presentation appeared first on Towards Data Science.

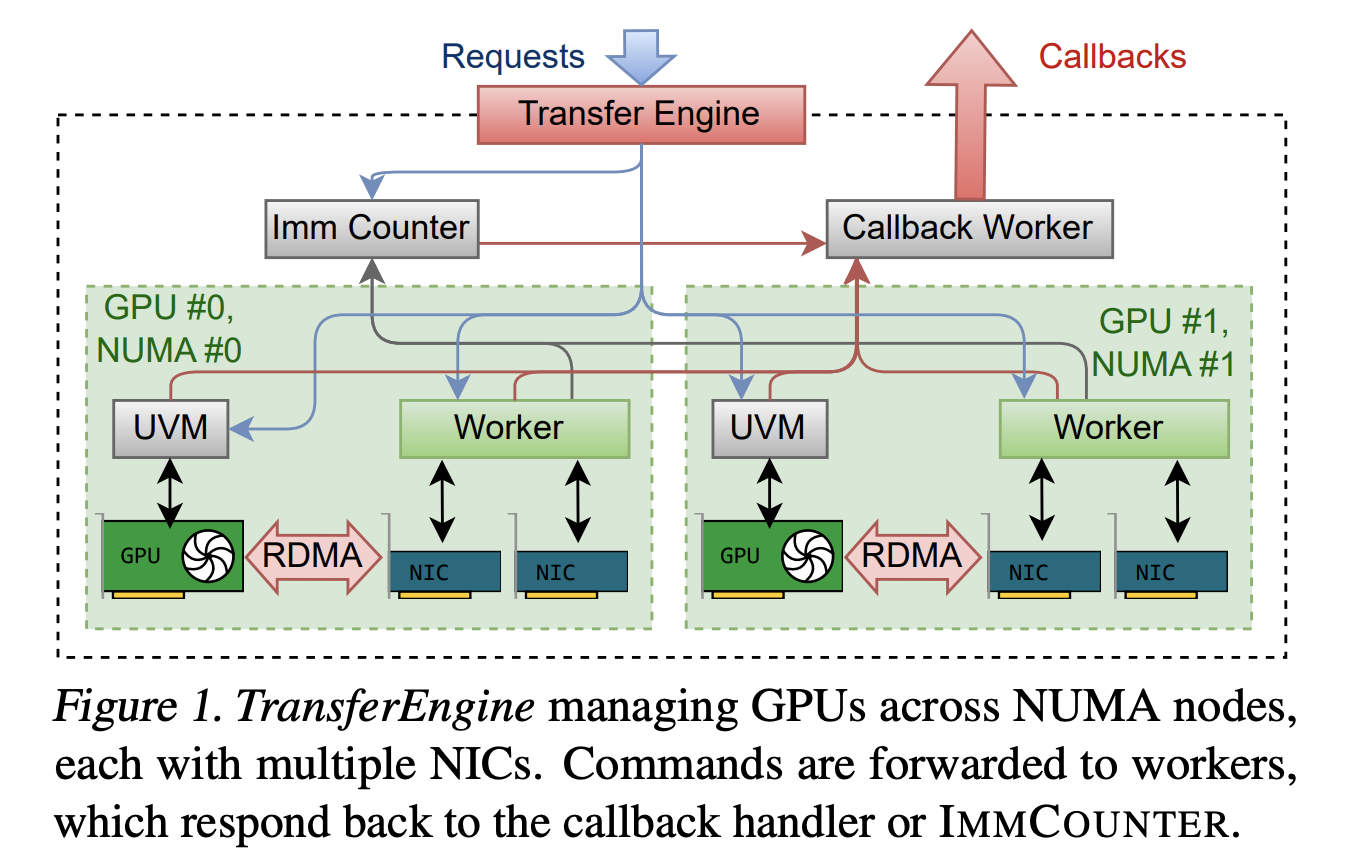

Perplexity AI Releases TransferEngine and pplx garden to Run Trillion Parameter LLMs on Existing GPU Clusters

Source: MarkTechPost

How can teams run trillion-parameter language models on existing mixed GPU clusters without costly new hardware or deep vendor lock-in? Perplexity’s research team has released TransferEngine and the surrounding pplx garden toolkit as open-source infrastructure for large language model systems. This provides a way to run models with up to 1 trillion

The post Perplexity AI Releases TransferEngine and pplx garden to Run Trillion Parameter LLMs on Existing GPU Clusters appeared first on MarkTechPost.

Top 7 Open Source AI Coding Models You Are Missing Out On

Source: KDnuggets

Stop sending your code to OpenAI or Anthropic. Run these 7 top-tier open-source coding models locally for privacy, control, and zero API costs.

Generative AI Will Redesign Cars, But Not the Way Automakers Think

Source: Towards Data Science

Traditional manufacturers are using revolutionary technology for incremental optimization instead of fundamental re-imagination.

The post Generative AI Will Redesign Cars, But Not the Way Automakers Think appeared first on Towards Data Science.

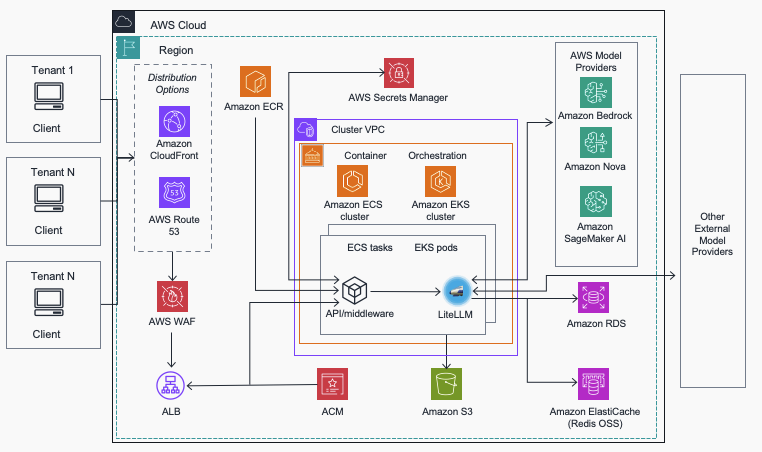

Streamline AI operations with the Multi-Provider Generative AI Gateway reference architecture

Source: Artificial Intelligence

In this post, we introduce the Multi-Provider Generative AI Gateway reference architecture, which provides guidance for deploying LiteLLM into an AWS environment to streamline the management and governance of production generative AI workloads across multiple model providers. This centralized gateway solution addresses common enterprise challenges including provider fragmentation, decentralized governance, operational complexity, and cost management by offering a unified interface that supports Amazon Bedrock, Amazon SageMaker AI, and external providers while maintaining comprehensive security, monitoring, and control capabilities.

Deploy geospatial agents with Foursquare Spatial H3 Hub and Amazon SageMaker AI

Source: Artificial Intelligence

In this post, you’ll learn how to deploy geospatial AI agents that can answer complex spatial questions in minutes instead of months. By combining Foursquare Spatial H3 Hub’s analysis-ready geospatial data with reasoning models deployed on Amazon SageMaker AI, you can build agents that enable nontechnical domain experts to perform sophisticated spatial analysis through natural language queries, without requiring geographic information system (GIS) expertise or custom data engineering pipelines.

Modern DataFrames in Python: A Hands-On Tutorial with Polars and DuckDB

Source: Towards Data Science

How I learned to handle growing datasets without slowing down my entire workflow.

The post Modern DataFrames in Python: A Hands-On Tutorial with Polars and DuckDB appeared first on Towards Data Science.

How To Build a Graph-Based Recommendation Engine Using EDG and Neo4j

Source: Towards Data Science

Use a shared taxonomy to connect RDF and property graphs, and power smarter recommendations with inferencing.

The post How To Build a Graph-Based Recommendation Engine Using EDG and Neo4j appeared first on Towards Data Science.

Task Specific Sharpness Aware O-RAN Resource Management using Multi Agent Reinforcement Learning

Source: cs.AI updates on arXiv.org

arXiv:2511.15002v1 Announce Type: new

Abstract: Next-generation networks utilize the Open Radio Access Network (O-RAN) architecture to enable dynamic resource management, facilitated by the RAN Intelligent Controller (RIC). While deep reinforcement learning (DRL) models show promise in optimizing network resources, they often struggle with robustness and generalizability in dynamic environments. This paper introduces a novel resource management approach that enhances the Soft Actor Critic (SAC) algorithm with Sharpness-Aware Minimization (SAM) in a distributed Multi-Agent RL (MARL) framework. Our method introduces an adaptive and selective SAM mechanism, where regularization is explicitly driven by temporal-difference (TD)-error variance, ensuring that only agents facing high environmental complexity are regularized. This targeted strategy reduces unnecessary overhead, improves training stability, and enhances generalization without sacrificing learning efficiency. We further incorporate a dynamic $\rho$ scheduling scheme to refine the exploration-exploitation trade-off across agents. Experimental results show our method significantly outperforms conventional DRL approaches, yielding up to a $22\%$ improvement in resource allocation efficiency and ensuring superior QoS satisfaction across diverse O-RAN slices.

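The selective SAM idea in the abstract above, where an agent is regularized only when its TD-error variance is high, can be illustrated with a toy sketch. This is not the paper's implementation: the quadratic loss stands in for the SAC losses, and the function names, the fixed `rho`, and the `var_threshold` value are all assumptions made for illustration. The dynamic $\rho$ schedule mentioned in the abstract is not modeled.

```python
from statistics import pvariance


def loss_grad(w, target):
    """Gradient of the toy loss 0.5 * sum((w_i - target_i)^2)."""
    return [wi - ti for wi, ti in zip(w, target)]


def sam_grad(w, target, rho):
    """SAM gradient: the loss gradient evaluated at w + rho * g / ||g||."""
    g = loss_grad(w, target)
    norm = sum(gi * gi for gi in g) ** 0.5
    if norm == 0.0:
        return g  # already at a stationary point, no perturbation direction
    w_perturbed = [wi + rho * gi / norm for wi, gi in zip(w, g)]
    return loss_grad(w_perturbed, target)


def selective_sam_step(w, target, td_errors, lr=0.1, rho=0.05, var_threshold=1.0):
    """One update for a single agent (hypothetical gating rule).

    SAM is applied only when the variance of the agent's recent TD errors
    exceeds var_threshold; otherwise a plain gradient step is taken.
    """
    use_sam = pvariance(td_errors) > var_threshold
    g = sam_grad(w, target, rho) if use_sam else loss_grad(w, target)
    new_w = [wi - lr * gi for wi, gi in zip(w, g)]
    return new_w, use_sam


if __name__ == "__main__":
    weights, target = [1.0, 0.0], [0.0, 0.0]
    calm_agent = [0.1, -0.1, 0.05, 0.0]    # low TD-error variance
    noisy_agent = [3.0, -3.0, 2.0, -2.0]   # high TD-error variance
    print(selective_sam_step(weights, target, calm_agent))
    print(selective_sam_step(weights, target, noisy_agent))
```

In this sketch the calm agent pays for one gradient evaluation per step while the noisy agent pays for two, which is the kind of overhead saving the abstract attributes to selective regularization.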