Expanding data residency access to business customers worldwide (OpenAI News)

OpenAI expands data residency for ChatGPT Enterprise, ChatGPT Edu, and the API Platform, enabling eligible customers to store data at rest in-region.

How to Create Professional Articles with LaTeX in Cursor (Towards Data Science)

Learn how to rapidly create professional articles and presentations with LaTeX in Cursor.

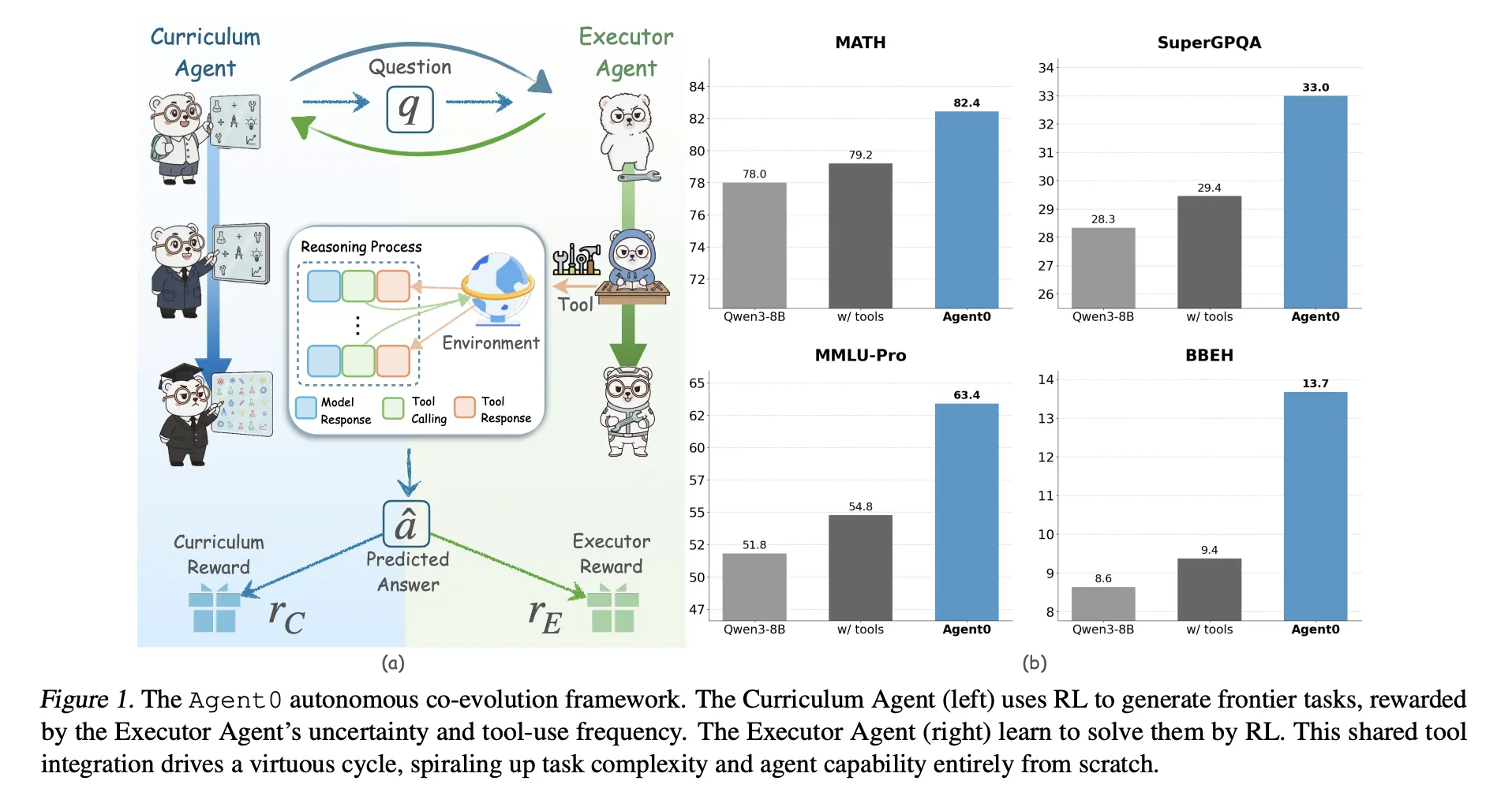

Agent0: A Fully Autonomous AI Framework that Evolves High-Performing Agents without External Data through Multi-Step Co-Evolution (MarkTechPost)

Large language models need huge human datasets, so what happens if the model must create all its own curriculum and teach itself to use tools? A team of researchers from UNC-Chapel Hill, Salesforce Research and Stanford University introduces ‘Agent0’, a fully autonomous framework that evolves high-performing agents without external data through multi-step co-evolution and seamless

How to Build a Neuro-Symbolic Hybrid Agent that Combines Logical Planning with Neural Perception for Robust Autonomous Decision-Making (MarkTechPost)

In this tutorial, we demonstrate how to combine the strengths of symbolic reasoning with neural learning to build a powerful hybrid agent. We focus on creating a neuro-symbolic architecture that uses classical planning for structure, rules, and goal-directed behavior, while neural networks handle perception and action refinement. As we walk through the code, we see

AI Debaters are More Persuasive when Arguing in Alignment with Their Own Beliefs (cs.AI updates on arXiv.org)

arXiv:2510.13912v3 Announce Type: replace-cross

Abstract: The core premise of AI debate as a scalable oversight technique is that it is harder to lie convincingly than to refute a lie, enabling the judge to identify the correct position. Yet, existing debate experiments have relied on datasets with ground truth, where lying is reduced to defending an incorrect proposition. This overlooks a subjective dimension: lying also requires the belief that the claim defended is false. In this work, we apply debate to subjective questions and explicitly measure large language models’ prior beliefs before experiments. Debaters were asked to select their preferred position, then presented with a judge persona deliberately designed to conflict with their identified priors. This setup tested whether models would adopt sycophantic strategies, aligning with the judge’s presumed perspective to maximize persuasiveness, or remain faithful to their prior beliefs. We implemented and compared two debate protocols, sequential and simultaneous, to evaluate potential systematic biases. Finally, we assessed whether models were more persuasive and produced higher-quality arguments when defending positions consistent with their prior beliefs versus when arguing against them. Our main findings show that models tend to prefer defending stances aligned with the judge persona rather than their prior beliefs, sequential debate introduces significant bias favoring the second debater, models are more persuasive when defending positions aligned with their prior beliefs, and paradoxically, arguments misaligned with prior beliefs are rated as higher quality in pairwise comparison. These results can inform human judges to provide higher-quality training signals and contribute to more aligned AI systems, while revealing important aspects of human-AI interaction regarding persuasion dynamics in language models.
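
The abstract names two debate protocols, sequential and simultaneous, without describing their mechanics. A minimal sketch of the difference, assuming that in a sequential round the second debater sees the first debater's argument before replying, while in a simultaneous round neither sees the other's argument for that round. The function names and the stub debaters are hypothetical and are not taken from the paper.

```python
from typing import Callable, List, Tuple

# A debater maps (question, arguments visible so far) to a new argument.
# This signature is an assumption made for illustration only.
Debater = Callable[[str, List[str]], str]


def sequential_round(
    question: str, first: Debater, second: Debater, transcript: List[str]
) -> Tuple[str, str]:
    """The second debater sees the first debater's argument from this round."""
    arg_first = first(question, transcript)
    arg_second = second(question, transcript + [arg_first])
    return arg_first, arg_second


def simultaneous_round(
    question: str, first: Debater, second: Debater, transcript: List[str]
) -> Tuple[str, str]:
    """Both debaters see only the transcript from earlier rounds."""
    arg_first = first(question, transcript)
    arg_second = second(question, transcript)
    return arg_first, arg_second


def make_stub_debater(name: str) -> Debater:
    """Hypothetical stand-in for a model call; reports how much it could see."""
    def debater(question: str, visible: List[str]) -> str:
        return f"{name} saw {len(visible)} prior argument(s)"
    return debater
```

Under this assumption, the second debater in a sequential round always has strictly more information than the first, which is one plausible source of the order bias the abstract reports.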

How does Alignment Enhance LLMs’ Multilingual Capabilities? A Language Neurons Perspective (cs.AI updates on arXiv.org)

arXiv:2505.21505v2 Announce Type: replace-cross

Abstract: Multilingual Alignment is an effective and representative paradigm to enhance LLMs’ multilingual capabilities, which transfers the capabilities from the high-resource languages to the low-resource languages. Meanwhile, some research on language-specific neurons provides a new perspective to analyze and understand LLMs’ mechanisms. However, we find that there are many neurons that are shared by multiple but not all languages and cannot be correctly classified. In this work, we propose a ternary classification methodology that categorizes neurons into three types: language-specific neurons, language-related neurons, and general neurons. We also propose a corresponding identification algorithm to distinguish these different types of neurons. Furthermore, based on the distributional characteristics of different types of neurons, we divide the LLMs’ internal process for multilingual inference into four parts: (1) multilingual understanding, (2) shared semantic space reasoning, (3) multilingual output space transformation, and (4) vocabulary space outputting. Additionally, we systematically analyze the models before and after alignment with a focus on different types of neurons. We also analyze the phenomenon of “Spontaneous Multilingual Alignment”. Overall, our work conducts a comprehensive investigation based on different types of neurons, providing empirical results and valuable insights to better understand multilingual alignment and multilingual capabilities of LLMs.
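
The ternary classification can be illustrated with a small sketch. It assumes, based only on the abstract's wording, that a neuron active for exactly one language counts as language-specific, one active for several but not all languages as language-related, and one active for every language as general. How a neuron is judged "active" for a language is not stated in the abstract, so the set of active languages is taken as given here; `classify_neuron` and `NeuronType` are hypothetical names, and this is not the paper's identification algorithm.

```python
from enum import Enum
from typing import Iterable, Optional


class NeuronType(Enum):
    LANGUAGE_SPECIFIC = "language-specific"
    LANGUAGE_RELATED = "language-related"
    GENERAL = "general"


def classify_neuron(
    active_languages: Iterable[str], all_languages: Iterable[str]
) -> Optional[NeuronType]:
    """Classify one neuron from the set of languages it is active for.

    Assumed mapping (not confirmed by the source):
      exactly one language  -> language-specific
      several, but not all  -> language-related
      all languages         -> general
    Returns None when the neuron is active for no language.
    """
    universe = set(all_languages)
    active = set(active_languages) & universe
    if not active:
        return None
    if active == universe:
        return NeuronType.GENERAL
    if len(active) == 1:
        return NeuronType.LANGUAGE_SPECIFIC
    return NeuronType.LANGUAGE_RELATED
```

For example, with languages `{"en", "zh", "sw"}`, a neuron active for `{"en", "zh"}` falls into the language-related class, the case the abstract says a binary scheme cannot place.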

ZAYA1: AI model using AMD GPUs for training hits milestone (AI News)

Zyphra, AMD, and IBM spent a year testing whether AMD’s GPUs and platform can support large-scale AI model training, and the result is ZAYA1. In partnership, the three companies trained ZAYA1 – described as the first major Mixture-of-Experts foundation model built entirely on AMD GPUs and networking – which they see as proof that the

My Honest Review on Abacus AI: ChatLLM, DeepAgent & Enterprise (KDnuggets)

Abacus AI offers the world’s first professional and enterprise AI Super Assistant. It’s an all-in-one AI platform for the top language, image, voice, and video models, along with all the tooling and infrastructure to support them. Abacus can connect to all YOUR data and apply AI to automate work.

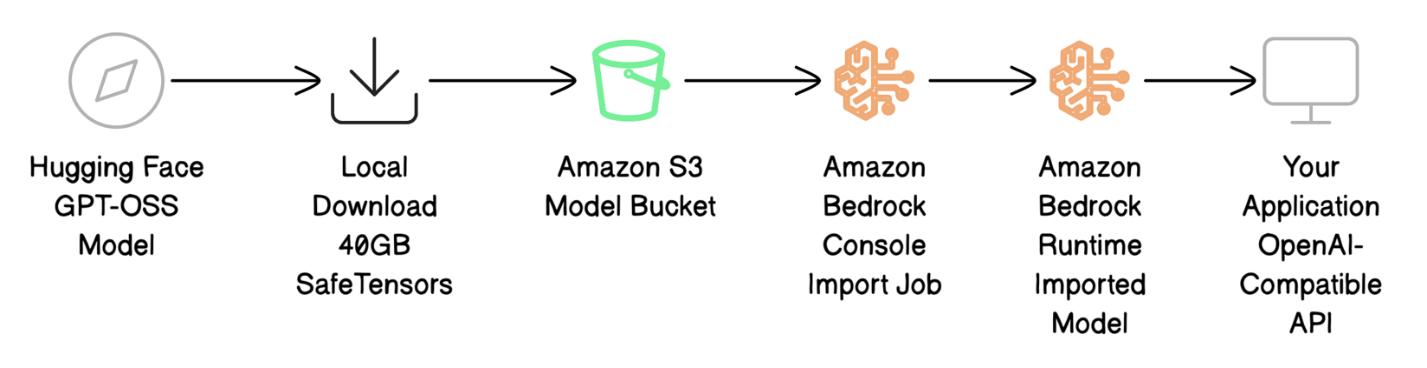

Deploy GPT-OSS models with Amazon Bedrock Custom Model Import (Artificial Intelligence)

In this post, we show how to deploy the GPT-OSS-20B model on Amazon Bedrock using Custom Model Import while maintaining complete API compatibility with your current applications.

Lux + Pandas: Auto-Visualizations for Lazy Analysts (KDnuggets)

Why write 10 lines of matplotlib code when Lux can show you what you need in one click?
