How background AI builds operational resilience & visible ROI (AI News)

If you asked most enterprise leaders which AI tools are delivering ROI, many would point to front-end chatbots or customer support automation. That’s the wrong door. The most value-generating AI systems today aren’t loud, customer-facing marvels. They’re tucked away in backend operations. They work silently, flagging irregularities in real time, automating risk reviews, mapping data lineage,

The post How background AI builds operational resilience & visible ROI appeared first on AI News.

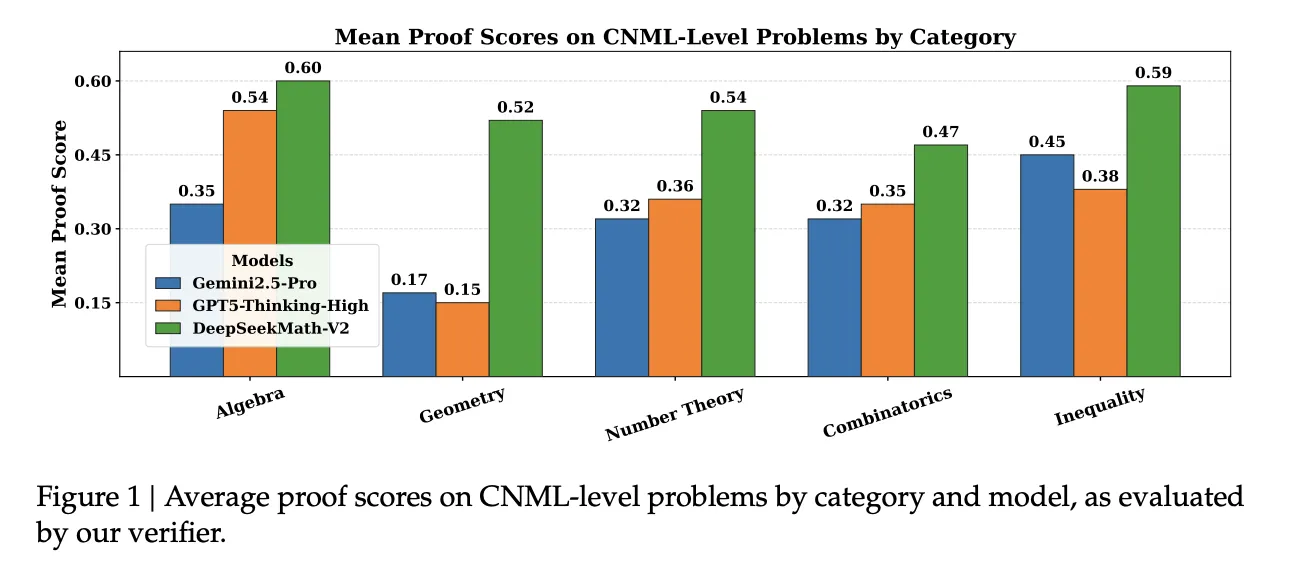

DeepSeek AI Releases DeepSeekMath-V2: The Open Weights Maths Model That Scored 118/120 on Putnam 2024 (MarkTechPost)

How can an AI system write proofs for complex olympiad-level math problems in clear natural language while also checking that its own reasoning is actually correct? DeepSeek AI has released DeepSeekMath-V2, an open-weights large language model optimized for natural-language theorem proving with self-verification. The model is built on DeepSeek-V3.2-Exp-Base, runs as

The post DeepSeek AI Releases DeepSeekMath-V2: The Open Weights Maths Model That Scored 118/120 on Putnam 2024 appeared first on MarkTechPost.

Water Cooler Small Talk, Ep. 10: So, What About the AI Bubble? (Towards Data Science)

Have we all been tricked into believing in an impossible, extremely expensive future?

The post Water Cooler Small Talk, Ep. 10: So, What About the AI Bubble? appeared first on Towards Data Science.

TDS Newsletter: November Must-Reads on GraphRAG, ML Projects, LLM-Powered Time-Series Analysis, and More (Towards Data Science)

Don’t miss our most-read stories of the past month

The post TDS Newsletter: November Must-Reads on GraphRAG, ML Projects, LLM-Powered Time-Series Analysis, and More appeared first on Towards Data Science.

A Coding Implementation for an Agentic AI Framework that Performs Literature Analysis, Hypothesis Generation, Experimental Planning, Simulation, and Scientific Reporting (MarkTechPost)

In this tutorial, we build a complete scientific discovery agent step by step and see how the components work together to form a coherent research workflow. We begin by loading our literature corpus, constructing retrieval and LLM modules, and then assembling agents that search papers, generate hypotheses, design experiments, and produce structured reports. Through snippets

The post A Coding Implementation for an Agentic AI Framework that Performs Literature Analysis, Hypothesis Generation, Experimental Planning, Simulation, and Scientific Reporting appeared first on MarkTechPost.

How the MCP spec update boosts security as infrastructure scales (AI News)

The latest MCP spec update fortifies enterprise infrastructure with tighter security, moving AI agents from pilot to production. Marking its first year, the Anthropic-created open-source project released a revised spec this week aimed at the operational headaches keeping generative AI agents stuck in pilot mode. Backed by Amazon Web Services (AWS), Microsoft, and Google Cloud,

The post How the MCP spec update boosts security as infrastructure scales appeared first on AI News.

LightMem: Lightweight and Efficient Memory-Augmented Generation (cs.AI updates on arXiv.org)

arXiv:2510.18866v3 Announce Type: replace-cross

Abstract: Despite their remarkable capabilities, Large Language Models (LLMs) struggle to effectively leverage historical interaction information in dynamic and complex environments. Memory systems enable LLMs to move beyond stateless interactions by introducing persistent information storage, retrieval, and utilization mechanisms. However, existing memory systems often introduce substantial time and computational overhead. To this end, we introduce a new memory system called LightMem, which strikes a balance between the performance and efficiency of memory systems. Inspired by the Atkinson-Shiffrin model of human memory, LightMem organizes memory into three complementary stages. First, cognition-inspired sensory memory rapidly filters irrelevant information through lightweight compression and groups information according to their topics. Next, topic-aware short-term memory consolidates these topic-based groups, organizing and summarizing content for more structured access. Finally, long-term memory with sleep-time update employs an offline procedure that decouples consolidation from online inference. On LongMemEval and LoCoMo, using GPT and Qwen backbones, LightMem consistently surpasses strong baselines, improving QA accuracy by up to 7.7% / 29.3%, reducing total token usage by up to 38x / 20.9x and API calls by up to 30x / 55.5x, while purely online test-time costs are even lower, achieving up to 106x / 117x token reduction and 159x / 310x fewer API calls. The code is available at https://github.com/zjunlp/LightMem.
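As a rough illustration of the three-stage layout the abstract describes, here is a toy Python sketch. It is not LightMem's code (the repository linked above is the reference for that). The keyword-based topic grouping, the truncation used as a stand-in for summarization, and every name here (`sensory_filter`, `short_term_summarize`, `LongTermMemory`, `FILLER`, `TOPIC_KEYWORDS`) are assumptions made purely for illustration.

```python
import re
from collections import defaultdict
from dataclasses import dataclass, field

# Hypothetical vocabularies, invented for this sketch only.
FILLER = {"hi", "hello", "ok", "thanks", "sure"}
TOPIC_KEYWORDS = {
    "travel": {"flight", "hotel"},
    "food": {"pizza", "dinner"},
}


def sensory_filter(turns):
    """Stage 1 (sensory memory): drop low-information turns, group the rest by topic."""
    groups = defaultdict(list)
    for turn in turns:
        words = set(re.findall(r"[a-z']+", turn.lower()))
        if not words - FILLER:
            continue  # nothing but filler words: treat as irrelevant
        topic = next(
            (name for name, keys in TOPIC_KEYWORDS.items() if words & keys),
            "misc",
        )
        groups[topic].append(turn)
    return dict(groups)


def short_term_summarize(groups, max_chars=80):
    """Stage 2 (short-term memory): one compact entry per topic.

    Truncation stands in for a real summarizer such as an LLM call.
    """
    return {topic: " ".join(turns)[:max_chars] for topic, turns in groups.items()}


@dataclass
class LongTermMemory:
    """Stage 3 (long-term memory): consolidation is deferred to an offline step."""

    store: dict = field(default_factory=dict)
    pending: list = field(default_factory=list)

    def enqueue(self, summaries):
        # Online path: only buffer the summaries, so inference stays cheap.
        self.pending.append(summaries)

    def sleep_time_update(self):
        # Offline path: merge everything buffered so far into the store.
        for summaries in self.pending:
            for topic, text in summaries.items():
                self.store.setdefault(topic, []).append(text)
        self.pending.clear()


turns = ["Hi", "I booked a flight to Oslo.", "Thanks", "Let's get pizza for dinner."]
memory = LongTermMemory()
memory.enqueue(short_term_summarize(sensory_filter(turns)))
memory.sleep_time_update()
print(memory.store)
```

The point of the split between `enqueue` and `sleep_time_update` is the decoupling the abstract mentions: the online path only appends to a buffer, while the merge into the long-term store runs separately.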

Staying Ahead of AI in Your Career (KDnuggets)

The point is this: those who learn to collaborate with AI rather than fear it will hold the keys to tomorrow’s job market.

SAP outlines new approach to European AI and cloud sovereignty (AI News)

SAP is moving its sovereignty plans forward with EU AI Cloud, a setup meant to bring its past efforts under one approach. The goal is simple: give organisations in Europe more choice and more control over how they run AI and cloud services. Some may prefer SAP’s own data centres, some may use trusted European

The post SAP outlines new approach to European AI and cloud sovereignty appeared first on AI News.

Everyday Decisions are Noisier Than You Think — Here’s How AI Can Help Fix That (Towards Data Science)

From insurance premiums to courtrooms: the impact of noise

The post Everyday Decisions are Noisier Than You Think — Here’s How AI Can Help Fix That appeared first on Towards Data Science.
