How to Climb the Hidden Career Ladder of Data Science (Towards Data Science)

The behaviors that get you promoted

The post How to Climb the Hidden Career Ladder of Data Science appeared first on Towards Data Science.

How to Build an Adaptive Meta-Reasoning Agent That Dynamically Chooses Between Fast, Deep, and Tool-Based Thinking Strategies (MarkTechPost)

We begin this tutorial by building a meta-reasoning agent that decides how to think before it thinks. Instead of applying the same reasoning process for every query, we design a system that evaluates complexity, chooses between fast heuristics, deep chain-of-thought reasoning, or tool-based computation, and then adapts its behaviour in real time. By examining each

The post How to Build an Adaptive Meta-Reasoning Agent That Dynamically Chooses Between Fast, Deep, and Tool-Based Thinking Strategies appeared first on MarkTechPost.
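
The routing idea in this summary can be illustrated with a minimal sketch. It is not the tutorial's code: the names `choose_strategy`, `answer`, and `safe_eval`, the keyword list, and the 12-word threshold are all assumptions made up for illustration, and the "fast" and "deep" branches return placeholders where a real agent would call a language model.

```python
import ast
import operator
import re

# Hypothetical "tool": a tiny arithmetic evaluator that only accepts + - * /.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def safe_eval(expr):
    """Evaluate a plain arithmetic expression without using eval()."""
    def walk(node):
        if isinstance(node, ast.Expression):
            return walk(node.body)
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.left), walk(node.right))
        raise ValueError("unsupported expression")
    return walk(ast.parse(expr, mode="eval"))


# Assumed heuristic: "number operator number ..." means the query needs a tool.
ARITHMETIC = re.compile(r"\d+(?:\.\d+)?(?:\s*[-+*/]\s*\d+(?:\.\d+)?)+")
# Assumed heuristic: these words, or a long query, suggest deep reasoning.
DEEP_WORDS = {"why", "explain", "compare", "prove"}


def choose_strategy(query):
    """Decide how to think before thinking: 'tool', 'deep', or 'fast'."""
    if ARITHMETIC.search(query):
        return "tool"
    words = [w.lower().strip("?,.") for w in query.split()]
    if len(words) > 12 or any(w in DEEP_WORDS for w in words):
        return "deep"
    return "fast"


def answer(query):
    """Route the query to the chosen strategy and return (strategy, result)."""
    strategy = choose_strategy(query)
    if strategy == "tool":
        expr = ARITHMETIC.search(query).group(0)
        return strategy, str(safe_eval(expr))
    if strategy == "deep":
        return strategy, "[placeholder: step-by-step reasoning from a language model]"
    return strategy, "[placeholder: short direct answer]"


print(answer("What is 12 * 7 + 3?"))
print(answer("Explain why quicksort is fast"))
print(answer("Capital of France?"))
```

Keeping the strategy decision in its own function means the heuristics can later be swapped for a learned classifier without touching the three execution paths.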

The Machine Learning “Advent Calendar” Day 7: Decision Tree Classifier (Towards Data Science)

In Day 6, we saw how a Decision Tree Regressor finds its optimal split by minimizing the Mean Squared Error.

Today, for Day 7 of the Machine Learning “Advent Calendar”, we switch to classification. With just one numerical feature and two classes, we explore how a Decision Tree Classifier decides where to cut the data, using impurity measures like Gini and Entropy.

Even without doing the math, we can visually guess possible split points. But which one is best? And do impurity measures really make a difference? Let us build the first split step by step in Excel and see what happens.

The post The Machine Learning “Advent Calendar” Day 7: Decision Tree Classifier appeared first on Towards Data Science.
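
The article builds the first split in Excel; the same search can be sketched in a few lines of Python. This is an illustration under assumptions, not the article's workbook: the ten-point dataset and the function names `gini`, `entropy`, and `best_split` are invented here. Each candidate threshold is the midpoint between two consecutive sorted feature values, and the score is the size-weighted impurity of the two resulting groups.

```python
from math import log2


def gini(labels):
    """Gini impurity of a list of 0/1 labels."""
    n = len(labels)
    if n == 0:
        return 0.0
    p = sum(labels) / n
    return 1.0 - p * p - (1.0 - p) * (1.0 - p)


def entropy(labels):
    """Shannon entropy (in bits) of a list of 0/1 labels."""
    n = len(labels)
    if n == 0:
        return 0.0
    p = sum(labels) / n
    if p == 0.0 or p == 1.0:
        return 0.0
    return -(p * log2(p) + (1.0 - p) * log2(1.0 - p))


def best_split(xs, ys, impurity):
    """Return (threshold, weighted impurity) of the best single cut."""
    pairs = sorted(zip(xs, ys))
    n = len(pairs)
    best = None
    for i in range(1, n):
        lo, hi = pairs[i - 1][0], pairs[i][0]
        if lo == hi:
            continue  # no threshold fits between identical values
        threshold = (lo + hi) / 2
        left = [y for _, y in pairs[:i]]
        right = [y for _, y in pairs[i:]]
        score = (len(left) * impurity(left) + len(right) * impurity(right)) / n
        if best is None or score < best[1]:
            best = (threshold, score)
    return best


# Made-up data: one numerical feature, two classes, two overlapping points.
xs = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
ys = [0, 0, 0, 1, 0, 1, 1, 1, 1, 1]

for name, fn in (("gini", gini), ("entropy", entropy)):
    threshold, score = best_split(xs, ys, fn)
    print(f"{name}: threshold={threshold}, weighted impurity={score:.3f}")
```

On this toy data both measures choose the cut at 5.5, which isolates the five pure class-1 points on the right. Other datasets can produce different choices for the two criteria.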

Artificial Intelligence, Machine Learning, Deep Learning, and Generative AI — Clearly Explained (Towards Data Science)

Understanding AI in 2026, from machine learning to generative models

The post Artificial Intelligence, Machine Learning, Deep Learning, and Generative AI — Clearly Explained appeared first on Towards Data Science.

The Best Web Scraping APIs for AI Models in 2026 (KDnuggets)

For powering next-generation AI models in 2026, Bright Data’s Web Scraper API delivers on all fronts: dynamic site support, anti-bot automation, structured output, and global reach.

Microsoft AI Releases VibeVoice-Realtime: A Lightweight Real‑Time Text-to-Speech Model Supporting Streaming Text Input and Robust Long-Form Speech Generation (MarkTechPost)

Microsoft has released VibeVoice-Realtime-0.5B, a real-time text-to-speech model that works with streaming text input and long-form speech output, aimed at agent-style applications and live data narration. The model can start producing audible speech in about 300 ms, which is critical when a language model is still generating the rest of

The post Microsoft AI Releases VibeVoice-Realtime: A Lightweight Real‑Time Text-to-Speech Model Supporting Streaming Text Input and Robust Long-Form Speech Generation appeared first on MarkTechPost.

Reading Research Papers in the Age of LLMs (Towards Data Science)

How I keep up with papers with a mix of manual and AI-assisted reading

The post Reading Research Papers in the Age of LLMs appeared first on Towards Data Science.

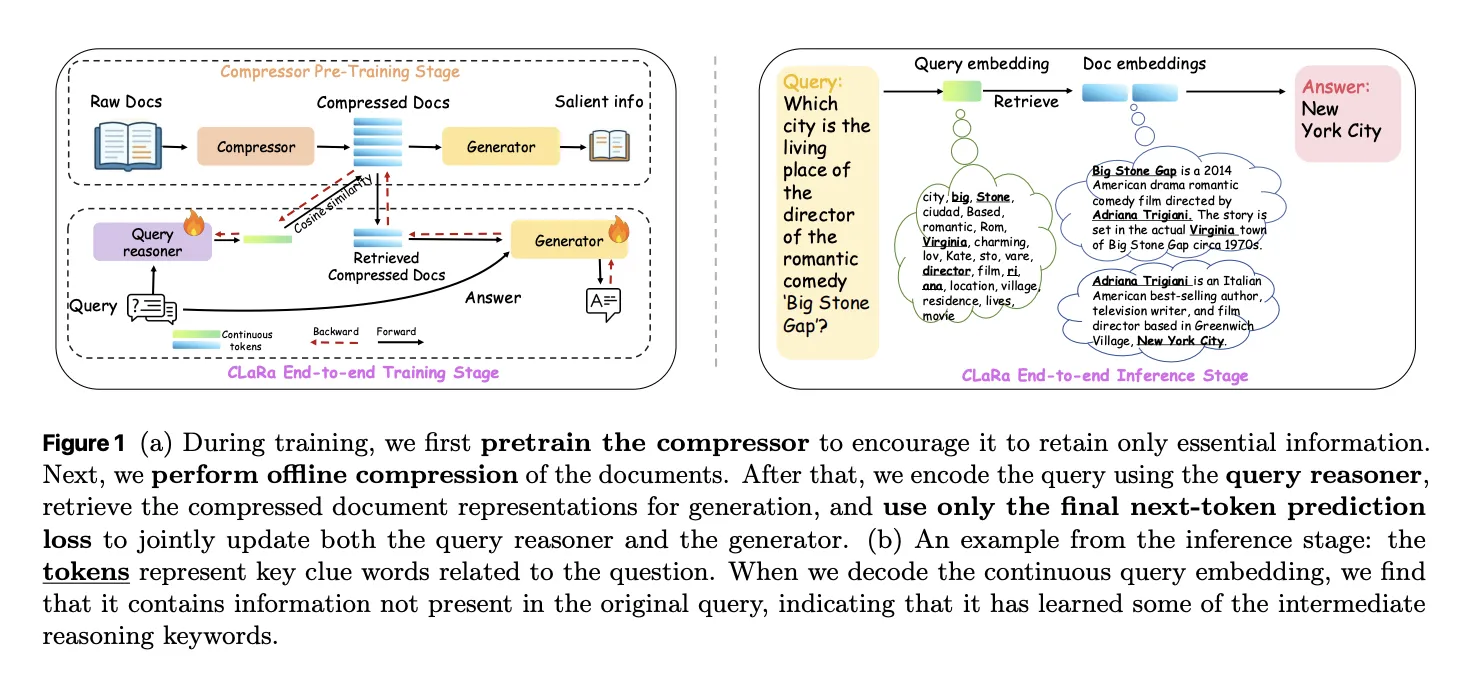

Apple Researchers Release CLaRa: A Continuous Latent Reasoning Framework for Compression‑Native RAG with 16x–128x Semantic Document Compression (MarkTechPost)

How do you keep RAG systems accurate and efficient when every query tries to stuff thousands of tokens into the context window and the retriever and generator are still optimized as two separate, disconnected systems? A team of researchers from Apple and the University of Edinburgh released CLaRa, Continuous Latent Reasoning (CLaRa-7B-Base, CLaRa-7B-Instruct and CLaRa-7B-E2E), a

The post Apple Researchers Release CLaRa: A Continuous Latent Reasoning Framework for Compression‑Native RAG with 16x–128x Semantic Document Compression appeared first on MarkTechPost.

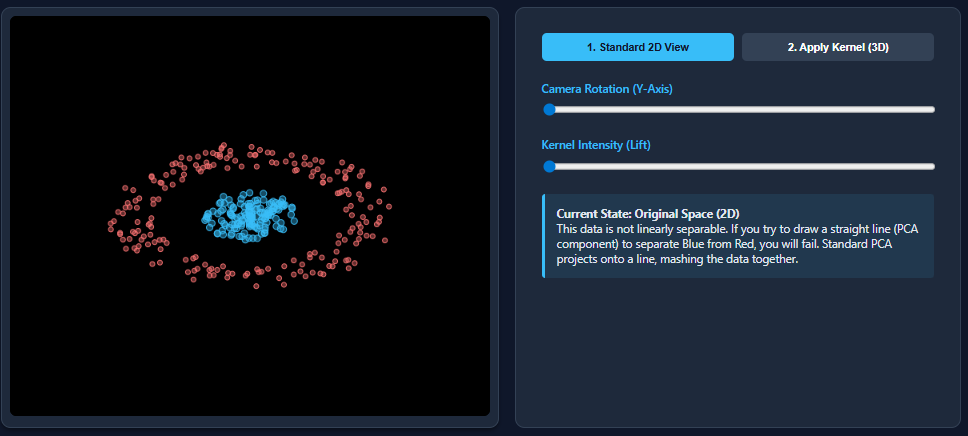

Kernel Principal Component Analysis (PCA): Explained with an Example (MarkTechPost)

Dimensionality reduction techniques like PCA work wonderfully when datasets are linearly separable, but they break down the moment nonlinear patterns appear. That’s exactly what happens with datasets such as two moons: PCA flattens the structure and mixes the classes together. Kernel PCA fixes this limitation by mapping the data into a higher-dimensional feature space where nonlinear

The post Kernel Principal Component Analysis (PCA): Explained with an Example appeared first on MarkTechPost.
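
For readers who want the mechanics behind the summary, the standard Kernel PCA formulation can be written down compactly. This is the textbook derivation rather than anything quoted from the article; the RBF kernel and its width $\gamma$ are assumptions chosen for illustration, and $n$ is the number of samples.

```latex
% Kernel matrix on samples x_1, ..., x_n (RBF kernel assumed here)
K_{ij} = k(x_i, x_j) = \exp\!\left(-\gamma \,\lVert x_i - x_j \rVert^2\right)

% Centering in feature space, where \mathbf{1}_n is the n x n matrix
% whose entries are all 1/n
\tilde{K} = K - \mathbf{1}_n K - K \mathbf{1}_n + \mathbf{1}_n K \mathbf{1}_n

% Eigenproblem on the centered kernel matrix, with eigenvectors scaled so
% that the corresponding feature-space directions have unit norm
\tilde{K}\,\alpha^{(m)} = \lambda_m\,\alpha^{(m)},
\qquad \lambda_m \,\alpha^{(m)\top}\alpha^{(m)} = 1

% m-th nonlinear component of training point x_j
y_m(x_j) = \sum_{i=1}^{n} \alpha^{(m)}_i \,\tilde{K}_{ij}
```

The feature map itself never has to be computed: only pairwise kernel values enter, which is what makes the higher-dimensional mapping tractable.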

How to Design a Fully Local Multi-Agent Orchestration System Using TinyLlama for Intelligent Task Decomposition and Autonomous Collaboration (MarkTechPost)

In this tutorial, we explore how we can orchestrate a team of specialized AI agents locally using an efficient manager-agent architecture powered by TinyLlama. We walk through how we build structured task decomposition, inter-agent collaboration, and autonomous reasoning loops without relying on any external APIs. By running everything directly through the transformers library, we create

The post How to Design a Fully Local Multi-Agent Orchestration System Using TinyLlama for Intelligent Task Decomposition and Autonomous Collaboration appeared first on MarkTechPost.
