How to Build Interactive Geospatial Dashboards Using Folium with Heatmaps, Choropleths, Time Animation, Marker Clustering, and Advanced Interactive Plugins (MarkTechPost)

In this Folium tutorial, we build a complete set of interactive maps that run in Colab or any local Python setup. We explore multiple basemap styles, design rich markers with HTML popups, and visualize spatial density using heatmaps. We also create region-level choropleth maps from GeoJSON, scale to thousands of points using marker clustering, and

The post How to Build Interactive Geospatial Dashboards Using Folium with Heatmaps, Choropleths, Time Animation, Marker Clustering, and Advanced Interactive Plugins appeared first on MarkTechPost.

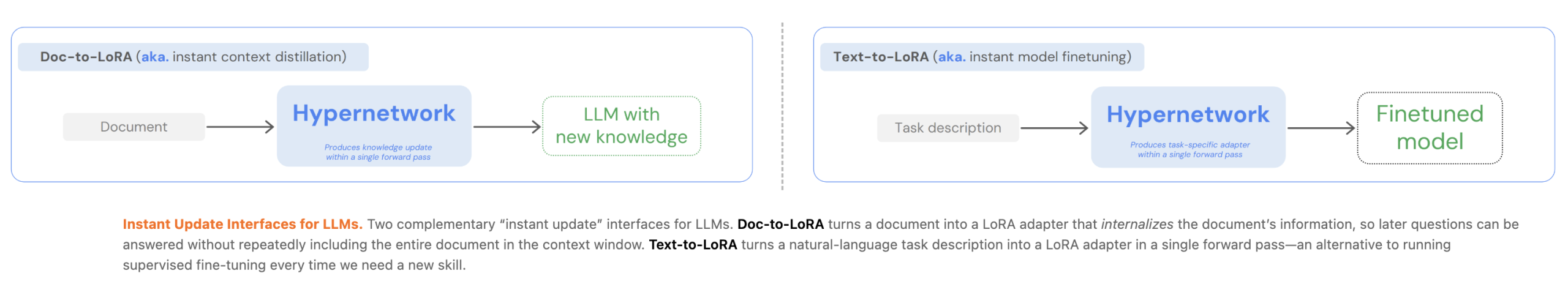

Sakana AI Introduces Doc-to-LoRA and Text-to-LoRA: Hypernetworks that Instantly Internalize Long Contexts and Adapt LLMs via Zero-Shot Natural Language (MarkTechPost)

Customizing Large Language Models (LLMs) currently presents a significant engineering trade-off between the flexibility of In-Context Learning (ICL) and the efficiency of Context Distillation (CD) or Supervised Fine-Tuning (SFT). Tokyo-based Sakana AI has proposed a new approach to bypass these constraints through cost amortization. In two of their recent papers, they introduced Text-to-LoRA (T2L) and

The post Sakana AI Introduces Doc-to-LoRA and Text-to-LoRA: Hypernetworks that Instantly Internalize Long Contexts and Adapt LLMs via Zero-Shot Natural Language appeared first on MarkTechPost.

Docker AI for Agent Builders: Models, Tools, and Cloud Offload (KDnuggets)

This article explores five infrastructure patterns that make Docker a powerful foundation for building robust, autonomous AI applications.

The Future of Data Storytelling Formats: Beyond Dashboards (KDnuggets)

Redefining data storytelling through interactive narratives, immersive environments, and alternative sensory techniques.

Generative AI, Discriminative Human (Towards Data Science)

How to think critically about AI in an ocean of hype.

The post Generative AI, Discriminative Human appeared first on Towards Data Science.

Joint Statement from OpenAI and Microsoft (OpenAI News)

Microsoft and OpenAI continue to work closely across research, engineering, and product development, building on years of deep collaboration and shared success.

LLM4AD: A Platform for Algorithm Design with Large Language Model (cs.AI updates on arXiv.org)

arXiv:2412.17287v2 Announce Type: replace

Abstract: We introduce LLM4AD, a unified Python platform for algorithm design (AD) with large language models (LLMs). LLM4AD is a generic framework with modularized blocks for search methods, algorithm design tasks, and LLM interfaces. The platform integrates numerous key methods and supports a wide range of algorithm design tasks across various domains, including optimization, machine learning, and scientific discovery. We have also designed a unified evaluation sandbox to ensure a secure and robust assessment of algorithms. Additionally, we have compiled a comprehensive suite of support resources, including tutorials, examples, a user manual, online resources, and a dedicated graphical user interface (GUI) to enhance the usage of LLM4AD. We believe this platform will serve as a valuable tool for fostering future development in the emerging research direction of LLM-assisted algorithm design.

K-Search: LLM Kernel Generation via Co-Evolving Intrinsic World Model (cs.AI updates on arXiv.org)

arXiv:2602.19128v2 Announce Type: replace

Abstract: Optimizing GPU kernels is critical for efficient modern machine learning systems yet remains challenging due to the complex interplay of design factors and rapid hardware evolution. Existing automated approaches typically treat Large Language Models (LLMs) merely as stochastic code generators within heuristic-guided evolutionary loops. These methods often struggle with complex kernels requiring coordinated, multi-step structural transformations, as they lack explicit planning capabilities and frequently discard promising strategies due to inefficient or incorrect intermediate implementations. To address this, we propose Search via Co-Evolving World Model and build K-Search based on this method. By replacing static search heuristics with a co-evolving world model, our framework leverages LLMs’ prior domain knowledge to guide the search, actively exploring the optimization space. This approach explicitly decouples high-level algorithmic planning from low-level program instantiation, enabling the system to navigate non-monotonic optimization paths while remaining resilient to temporary implementation defects. We evaluate K-Search on diverse, complex kernels from FlashInfer, including GQA, MLA, and MoE kernels. Our results show that K-Search significantly outperforms state-of-the-art evolutionary search methods, achieving an average 2.10x improvement and up to a 14.3x gain on complex MoE kernels. On the GPUMode TriMul task, K-Search achieves state-of-the-art performance on H100, reaching 1030 µs and surpassing both prior evolution-based and human-designed solutions.

Upgrading agentic AI for finance workflows (AI News)

Improving trust in agentic AI for finance workflows remains a major priority for technology leaders today. Over the past two years, enterprises have rushed to put automated agents into real workflows, spanning customer support and back-office operations. These tools excel at retrieving information, yet they often struggle to provide consistent and explainable reasoning during multi-step

The post Upgrading agentic AI for finance workflows appeared first on AI News.

5 Things You Need to Know Before Using OpenClaw (KDnuggets)

OpenClaw is incredibly powerful, but if you install it without understanding these five things, you could expose far more than you expect.
