Microsoft Copilot vs Claude: The Comparison Microsoft Hoped You Wouldn't Make

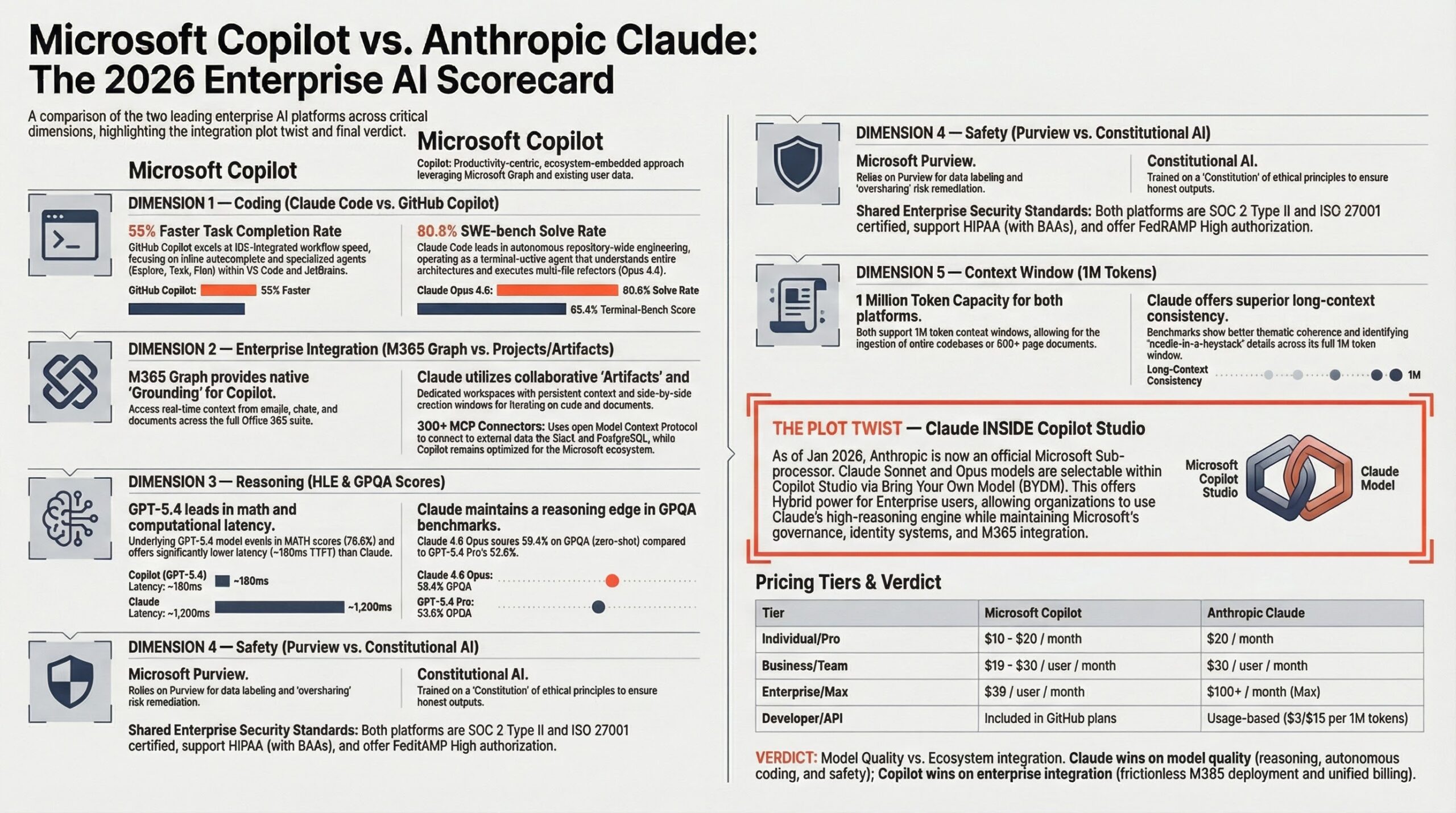

Microsoft now sells Claude inside its own Copilot platform. Read that again. The company that built its entire AI strategy around OpenAI's GPT models quietly onboarded Anthropic as a subprocessor in January 2026, making Claude Opus 4.6 and Claude Sonnet 4.5 selectable inside Copilot Studio for custom agents, while Opus 4.5 powers the main M365 surfaces including Excel Agent Mode and the Researcher agent (Microsoft Learn, March 2026). So the question isn't just "which is better." It's whether you want Claude standalone or Claude-inside-Copilot. That's a fundamentally different comparison than the internet is running.

Microsoft 365 Copilot uses GPT-5.x as its default model, with Claude available as an alternative in specific surfaces. Claude standalone (claude.ai, Claude Code) runs exclusively on Anthropic's models. Benchmarks below compare the underlying models (GPT-5.4 vs Claude Opus 4.6) and the platform experiences. Pricing checked March 27, 2026.

Claude is an AI assistant built by Anthropic, a San Francisco-based AI safety company. Think of it as a competitor to ChatGPT, but focused on careful reasoning and coding. If you haven't used either tool before, we recommend reading What Is Microsoft Copilot first to understand Copilot's product lineup, then coming back here for the head-to-head comparison.

A context window is how much text an AI model can read and respond to in a single conversation -- think of it as the model's working memory. It's measured in tokens, which are chunks of text roughly 3/4 of a word (so 200K tokens is about 150,000 words, or roughly 500 pages). The bigger the context window, the more documents the AI can analyze at once.

Quick Verdict: Copilot vs Claude

Verdict: Claude is the stronger AI model for reasoning and deep work. Copilot is the stronger platform for enterprise productivity. Neither is categorically better. Pick Claude if your work demands long-context analysis, complex coding, or tasks where reasoning depth matters more than ecosystem integration. Pick Copilot if your organization lives in Microsoft 365 and needs AI woven into Word, Excel, Outlook, and Teams with zero context-switching.

The irony: Microsoft knows this. That's why they put Claude inside Copilot.

- Claude leads on coding benchmarks (80.8% vs 80.0% SWE-bench Verified) and deep reasoning (Humanity's Last Exam: 34.4% vs GPT-5 Pro's 31.6% -- GPT-5.4 not yet ranked)

- Copilot's value isn't the model, it's the Microsoft 365 integration (emails, meetings, SharePoint, Teams grounded in your org's data)

- Microsoft now offers Claude models inside Copilot Studio, so "both" is a real option at no extra license cost

- Enterprise pricing: Copilot at $30/user/mo requires an existing M365 E3/E5 license. Claude Enterprise is custom pricing (reportedly starting around $60/user/mo, but actual quotes vary -- contact Anthropic directly)

- Data residency warning: Claude inside Copilot routes data to Anthropic's US-based servers, breaking EU Data Boundary commitments

Copilot vs Claude at a Glance

Contender Profiles

Not a single AI model. An orchestration layer routing through Microsoft Graph -- Microsoft's master index of everything your organization stores in M365 (emails, documents, calendar, Teams chats, SharePoint files) -- before hitting a foundation model. 85% of Fortune 500 companies use Microsoft's AI platforms. Embedded in the tools 450M commercial M365 subscribers already have.

Built by Anthropic, a San Francisco-based AI safety company founded by former OpenAI researchers. Trained using Constitutional AI (a method that teaches the model to follow explicit ethical rules -- like a constitution -- instead of relying solely on human feedback). Model lineup: Opus 4.6 (latest flagship), Opus 4.5 (previous flagship, used in some Copilot surfaces), Sonnet 4.5 (mid-tier, faster and cheaper). Largest effective context window in the industry (up to 1M tokens in beta) and the strongest coding benchmarks among frontier models. Enterprise pricing is custom with a 70-user minimum -- reported starting points vary and actual quotes require direct engagement with Anthropic's sales team.

Coding and Development

This is where the comparison gets interesting, because these tools solve fundamentally different problems. Important distinction: GitHub Copilot (the coding assistant, $10-39/mo) is a separate product from Microsoft 365 Copilot (the productivity assistant, $30/user/mo). They share the name but do completely different things. GitHub Copilot is a prediction-first, IDE-embedded autocomplete assistant that suggests code as you type. Claude Code is a reasoning-first, terminal-native agent that reads your entire repository and executes multi-file changes autonomously.

GPT-5.4 dominates Terminal-Bench 2.0 (measures how well AI handles DevOps tasks and command-line operations) at 75.1% vs Claude Opus 4.6's 65.4% -- a 9.7-point gap. On SWE-bench Pro (harder, multi-language version with standardized testing), GPT-5.4 leads at 57.7% vs Opus 4.5's (previous flagship) 45.9%. Note: the SWE-bench Pro score uses Opus 4.5, not the current Opus 4.6; Opus 4.6 scores are not yet published for this benchmark. Killer feature Claude Code lacks: inline autocomplete in VS Code, JetBrains, Neovim, Xcode. Developers complete tasks 55% faster. Copilot Pro costs just $10/mo.

Claude Opus 4.6 (current flagship) scores 80.8% on SWE-bench Verified -- a benchmark testing whether AI can fix real bugs from GitHub repos (higher = more bugs fixed correctly) -- vs GPT-5.4's ~80.0%. Caveat: all six frontier models cluster within 1.3% of each other on this benchmark; harness configuration and potential training data contamination drive much of the remaining variance. OpenAI has stopped reporting SWE-bench Verified scores due to contamination concerns. Treat these numbers as directional, not definitive. Excels at repository-scale refactoring, handling coordinated edits across 10-30+ files with a 1M-token context window.

Enterprise Integration and Ecosystem

This measures how well the AI fits into your existing workflow. A model's benchmark scores are irrelevant if your team can't actually use it.

This is Copilot's entire reason for existing. Embedded in Word, Excel, PowerPoint, Outlook, and Teams. Queries grounded in your org's Microsoft Graph data. For M365 orgs, AI appears inside tools people already use with zero new workflows. Copilot Studio lets you build custom agents with BYOM (Bring Your Own Model -- the ability to swap in a different AI model instead of the default) supporting GPT, Claude, Phi, Llama, and Mistral.

Claude operates as a standalone workspace. Projects and Artifacts provide collaborative spaces. No native M365 or Google Workspace integration. Claude's ecosystem play is the API and MCP (Model Context Protocol -- a universal adapter standard that lets developers plug Claude into databases, internal tools, and external services). Powerful for builders but invisible to end users who just want AI in their inbox.

Reasoning and Knowledge Work

This measures raw cognitive ability on complex tasks. If you're asking the AI to analyze a regulatory filing, debug a distributed system, or synthesize a 200-page contract, this is the dimension that matters.

GPT-5.4 leads on FrontierMath (research-level math problems) at 47.6% vs Claude Opus 4.6's 40.7%. On SimpleBench (everyday common-sense reasoning): GPT-5.4 Pro 74.1% vs Claude Opus 4.6's 67.6%. On GDPval (simulates real tasks across 44 knowledge work occupations): GPT-5.4 scores 83.0% vs Claude Opus 4.5's (previous flagship; Opus 4.6 scores not yet published) 59.6% -- a 23-point gap on everyday professional tasks.

Claude Opus 4.6 (current flagship) scores 34.4% on HLE (Humanity's Last Exam) -- 2,500 questions so hard they require genuine PhD-level expertise, the "final boss" of AI benchmarks -- vs GPT-5 Pro's 31.6% (GPT-5.4 not yet ranked). On GPQA Diamond (PhD-level science questions where even expert humans average ~65%): 90.5% vs GPT-5.2's 91.4% -- a practical tie. The 1M-token context window enables ingesting entire codebases and legal archives in a single session.

GDPval measures day-to-day knowledge work across 44 occupations. GPT-5.4 leads 83.0% to Claude Opus 4.5's 59.6%. However, GDPval is an OpenAI-led benchmark -- a significant conflict of interest. The 23-point gap is dramatic enough to note, but weight it accordingly: benchmarks designed by a vendor tend to favor that vendor's strengths. Independent benchmarks (SWE-bench, HLE) show a more balanced picture. If your team's work is routine knowledge tasks, Copilot's default model may perform well. If the work requires deep analysis on complex documents, Claude's advantage on independent benchmarks holds.

Safety and Compliance

This measures whether you can actually deploy the tool in a regulated environment. Benchmarks are useless if legal won't sign off.

Zero-trust architecture inherited from M365. Integrates with Entra ID, Purview, Conditional Access. Holds FedRAMP High, SOC 2, HIPAA, GDPR. Data stays within your M365 tenant boundary with geographic data residency controls. Enforces existing ACLs -- if a user can't see a SharePoint file, Copilot can't surface it.

Constitutional AI (described in the Contender Profiles above) embeds safety principles directly into training, creating predictable, auditable behavior. SOC 2 Type II, ISO 27001:2022, HIPAA (with BAA). Zero data retention available for API. Privacy-by-design: data explicitly excluded from training. Limitation: primarily US datacenter processing.

The risk both vendors bury: Copilot inherits your permission debt -- misconfigured SharePoint permissions become a magnifying glass. Claude's risk: users upload massive data volumes into active context. For teams building AI governance frameworks, address these before deployment, not after.

Context Window and Long-Document Handling

GPT-5.4 supports 272K tokens standard (1M in Codex mode). Within M365, effective context is constrained by Graph retrieval limits. Fundamentally different architecture: fetches relevant context on demand rather than holding everything in memory. Better for finding the right needle in a large organizational haystack.

200K standard, 500K Enterprise, 1M in beta with Opus 4.6. On MRCR v2 (Multi-Round Context Retention -- tests whether the model actually remembers information from the start of a very long conversation): 76% accuracy. Enables use cases shorter-context models literally cannot do: whole-codebase analysis, multi-year legal archives, comprehensive research libraries.

Dimension Scorecard: Copilot vs Claude

Claude Inside Copilot

Here's the part that makes this comparison genuinely interesting. Microsoft announced Anthropic as a subprocessor in January 2026. Claude models are now selectable inside Copilot Studio, available in the Researcher agent, and rolling out to Excel, PowerPoint, and Word through Microsoft's Frontier program.

Microsoft Graph grounding

Word, Excel, Outlook, Teams

Researcher agent

Excel Agent Mode

What's available: Claude Opus 4.6 (latest flagship) and Sonnet 4.5 (mid-tier) are selectable in Copilot Studio's prompt builder for custom agents. Opus 4.5 (previous flagship) powers the Researcher agent and is available in Agent Mode in Excel. All included in the existing M365 Copilot license.

A cloud-based AI agent that can plan, execute, and deliver long-running, multi-step workflows across Outlook, Teams, Excel, PowerPoint, and SharePoint. Claude handles complex reasoning and planning; Microsoft's models manage M365 integration. Takes high-level outcomes ("Prepare the quarterly board deck from these five SharePoint files and the last three Teams meetings") and executes a structured plan in the background.

What this means for enterprise customers: You don't have to choose. An M365 Copilot organization can use GPT-5.x for everyday productivity and route high-stakes analytical work to Claude-powered agents. This hybrid model is exactly what Microsoft's research describes as the emerging enterprise pattern: GPT for speed, Claude for depth.

Data processed by Claude inside Copilot transfers from Azure to Anthropic's servers (AWS/GCP, primarily US). This breaks Microsoft's in-country data residency commitments. Claude is disabled by default for EU/EFTA/UK commercial tenancies. Government and sovereign tenancies have no access.

Cost implications: Claude Opus is 7-10x more expensive than GPT per million tokens on Microsoft Foundry rates. Currently bundled in the flat M365 Copilot license. If usage grows, expect a shift to tiered or base-plus pricing. That isn't speculation; it's basic unit economics.

Copilot vs Claude Pricing Comparison

The headline prices look similar ($20/mo individual, $30/user enterprise). The total cost of ownership tells a different story. Copilot requires an existing M365 subscription ($12.50-57/user/mo) that most enterprises already pay. Claude is standalone but requires separate procurement and governance. For organizations already on M365 E3/E5, Copilot is the cheaper add-on. For teams that don't need M365 integration, Claude Pro at $20/mo delivers stronger raw model capability.

For developers specifically: GitHub Copilot Pro at $10/mo offers unlimited inline completions and is the best value in AI-assisted coding. Claude Code Max at $100/mo is the realistic tier for daily agentic AI coding with Opus 4.6. Many developers run both ($110/mo total): Copilot for speed, Claude Code for depth.

Best For

Which Should You Pick?

Question 1 of 4

What's your primary use case?

Pick the one that best describes your daily work.

What's your organization's primary ecosystem?

This affects integration value significantly.

What matters most?

Your top priority when evaluating AI tools.

How many users need access?

This affects pricing and deployment complexity.

Our Recommendation

Edge Cases: When the Wrong Choice Wins

Suggested reading order: If you landed here first, start with What Is Microsoft Copilot for the full product breakdown, then Microsoft Copilot Pricing for detailed cost analysis. Explore the full AI tools landscape to see how Copilot and Claude fit alongside Gemini, ChatGPT, and other platforms.