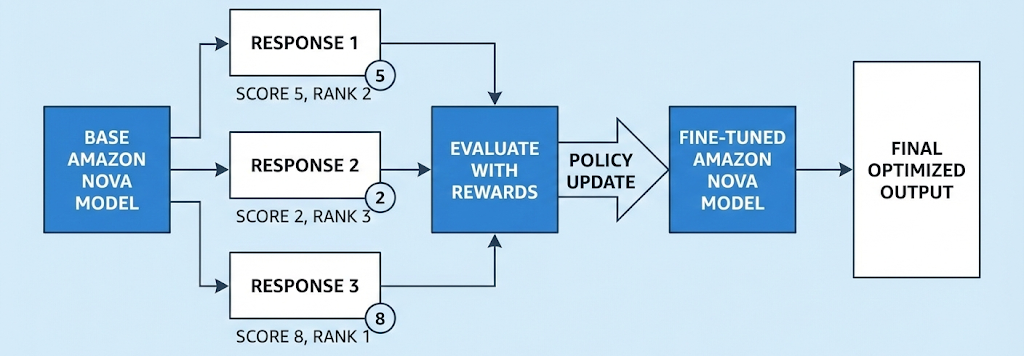

In this post, we explore reinforcement fine-tuning (RFT) for Amazon Nova models. RFT can be a powerful customization technique because the model learns from evaluation rather than imitation: its outputs are scored, and training pushes it toward higher-scoring responses instead of toward copies of example answers. We’ll cover how RFT works, when to use it instead of supervised fine-tuning, real-world applications ranging from code generation to customer service, and implementation options that range from fully managed RFT in Amazon Bedrock to multi-turn agentic workflows with Nova Forge. You’ll also find practical guidance on data preparation, reward function design, and best practices for getting good results.
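To make "evaluation rather than imitation" concrete, here is a minimal sketch of a verifiable reward function of the kind RFT relies on. It assumes a task where every prompt has a known numeric answer. The names `extract_final_answer` and `reward` are hypothetical and are not part of any Amazon Bedrock or Nova Forge API. The sketch only shows the core idea: a response is scored on whether its result is correct, not on how closely its wording matches a reference completion.

```python
import re
from typing import Optional


# Hypothetical helper: pull the last number out of a model response.
# Assumes the task's answers are plain integers or decimals.
def extract_final_answer(completion: str) -> Optional[str]:
    matches = re.findall(r"-?\d+(?:\.\d+)?", completion)
    return matches[-1] if matches else None


# Hypothetical reward function: 1.0 if the final answer is numerically
# equal to the reference answer, 0.0 otherwise (including when no number
# is found). The wording of the response is never compared.
def reward(completion: str, reference_answer: str) -> float:
    answer = extract_final_answer(completion)
    if answer is None:
        return 0.0
    return 1.0 if float(answer) == float(reference_answer) else 0.0


if __name__ == "__main__":
    reference = "42"
    candidates = [
        "Total: 42.0",
        "First 12 * 3 = 36, then 36 + 6 = 42. Answer: 42",
        "The answer is 41",
        "I am not sure",
    ]
    for text in candidates:
        print(f"{reward(text, reference):.1f}  {text}")
```

A terse response and a long worked one earn the same reward as long as the final answer is right. That is the key contrast with supervised fine-tuning, where the model is trained to reproduce a specific target text. Real reward functions are usually richer (partial credit, format checks, or a model-based grader), so treat this as an illustration rather than a recommended design.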