Introduction

In 2024, Google’s AI Overviews surfaced an answer suggesting adding glue to pizza sauce, a recommendation widely reported as originating from a joke Reddit comment. This wasn’t a bug in the traditional sense. The AI functioned exactly as designed, predicting plausible-sounding text without any capacity to distinguish satire from sincerity.

That incident crystallized a growing phenomenon. In December 2025, Merriam-Webster named “slop” its 2025 Word of the Year. The choice acknowledged something internet users already knew: we’re drowning in synthetic content that prioritizes volume over substance, engagement over accuracy, and algorithmic manipulation over human communication.

The term carries weight beyond simple criticism.

It marks a cultural inflection point where the marginal cost of content generation has approached zero, and the digital commons faces an unprecedented pollution crisis.

The Rise of the Term “AI Slop”

The word “slop” appeared in English by the 1700s, originally describing soft mud or wastewater. Its agricultural usage proved more durable: farmers fed pigs a mixture of kitchen scraps and grain waste, dumped into a communal trough.

The metaphor matters.

Slop implies a relationship where producers view audiences as livestock to be fed the cheapest possible material that maintains attention and enables monetization.

The term gained traction in 2024 as a label for unwanted, low-quality AI-generated content, popularized by writers and developers discussing the phenomenon. British programmer Simon Willison championed the term in early 2024 through his blog, applying it to the “garbage” and “dross” of unwanted AI-generated output.

Over the course of 2024, the term also began appearing in mainstream coverage of synthetic content and degraded online feeds.

High-profile failures accelerated adoption. When Google’s Gemini-integrated search presented AI-generated misinformation as fact, critics had a ready label. In January 2026, the American Dialect Society selected “slop” as its 2025 Word of the Year, cementing the term’s status in the modern lexicon.

The resonance isn’t accidental. People needed language to describe a specific feeling: being force-fed unidentifiable, mass-produced content lacking any intellectual or informational value. The term captures visceral disgust in ways that technical alternatives like “unsolicited synthetic media” never could.

Why Some People See All AI as “Slop”

The visual artifacts of current AI systems trigger immediate cognitive dissonance. Unlike human errors that follow logical patterns, AI failures feel surreal. Limbs blend into backgrounds. Objects defy physics. Faces carry that particular uncanniness critics describe as the “AI sheen.”

Analysts compare it to smelling raw onions. You recognize something’s fundamentally wrong before conscious analysis begins.

Platform fatigue amplifies this response. Facebook and LinkedIn often surface bizarre AI-generated content: the viral “Shrimp Jesus” images, military veteran birthday bait, and posts that exist solely because recommendation algorithms reward high interaction rates. Users perceive that genuine human content is being crowded out by a “soup of agreeable nothingness,” as critics sometimes describe it.

The cynicism runs deeper than aesthetics.

When content too obviously expects people to consume it without thought (like pigs at a trough), it provokes a defensive reaction. The term identifies material where producer intent is visible: this exists to make money, accumulate views, or maintain presence rather than communicate ideas.

Critics describe it as part of a broader degradation of online platforms, where companies prioritize algorithmic engagement over user experience. The fatigue isn’t about AI capability. It’s about systematic deployment at industrial scale with no regard for recipient experience.

Valid Criticisms of Generative AI

The technical limitations justify skepticism.

Large language models function as statistical token predictors, not truth machines. This architecture produces “hallucinations” (plausible-sounding but factually incorrect information that appears with the same confidence as accurate content).

Educational videos demonstrate the problem clearly: content generated without expert review can include confident-sounding scientific errors that mislead learners who lack domain knowledge.

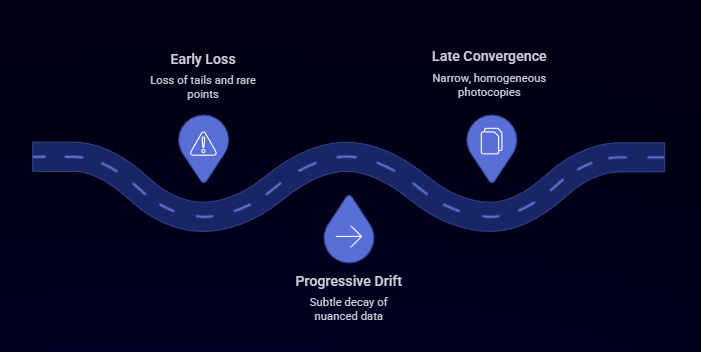

Model collapse represents a more systemic threat. Research has shown that training on model-generated data across generations can cause ‘model collapse,’ where models lose information about rare or long-tail data and outputs become more homogeneous, especially without a continued supply of high-quality human-generated data. Early-stage collapse causes the model to lose information about distribution “tails” (rare or nuanced data points), creating subtle decay in specialized knowledge. Late-stage collapse converges the model’s output on a narrow, homogeneous set, described as “photocopies of photocopies” where diversity disappears entirely.

Mathematical proofs demonstrate that, without constant refresh from authentic human data, model collapse is inevitable.

As the internet fills with AI slop, future model generations inadvertently ingest this low-quality training material, threatening the technology’s long-term viability.
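The feedback loop can be illustrated with a toy simulation. This is only a sketch under simplifying assumptions, not a reproduction of any published experiment: the "model" is a single Gaussian, each generation is fitted to a small sample drawn from the previous generation's fit, and the function name `simulate_collapse` and its parameters are hypothetical. The fitted spread tends to shrink over generations, which is the tail-loss effect described above in miniature.

```python
import random
import statistics


def simulate_collapse(generations=200, sample_size=20, seed=0):
    """Repeatedly fit a Gaussian to samples drawn from the previous fit.

    Toy assumption: each "model" is just a mean and a standard deviation,
    and no fresh human data is ever mixed back in.
    """
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0  # generation 0 stands in for the human data distribution
    history = [sigma]
    for _ in range(generations):
        # The next generation only ever sees output from the current one.
        samples = [rng.gauss(mu, sigma) for _ in range(sample_size)]
        mu = statistics.fmean(samples)
        sigma = statistics.pstdev(samples)  # maximum-likelihood estimate
        history.append(sigma)
    return history


history = simulate_collapse()
print(f"std at generation 0:   {history[0]:.6f}")
print(f"std at generation 200: {history[-1]:.6f}")
```

Small samples make the shrinkage fast here. Larger samples slow it down, but in this toy setup they do not reverse it, since nothing ever restores the variance that was lost.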

The economic and environmental costs compound these technical issues. Data centers can consume significant water for cooling. Estimates cited by energy and policy organizations note that medium-sized facilities can use on the order of tens to hundreds of millions of gallons annually, while large hyperscale sites may use millions of gallons per day depending on design and location. Major platforms like Google engage in costly ongoing battles against mass-produced, low-value content to prevent search results from becoming useless for informational queries.

Critics note that the resource investment produces questionable returns when a significant share of the output amounts to agreeable nothingness.

Where the Criticism Overreaches

Some creators report that AI can either dilute creative control when used as a substitute, or enhance ideation when used as an assistant under clear human direction. The technology serves as a new instrument, similar to how photography and animation once sparked fears but eventually became standard artistic tools.

Developers make parallel arguments about efficiency gains.

Using AI for utility functions or boilerplate code represents legitimate time-saving rather than slop. The problem isn’t AI-generated code itself but lack of judgment in implementation. When developers use AI to prop up weak skills without understanding underlying logic, it becomes slop. When used as a development partner handling repetitive syntax while humans focus on business rules, it’s high-quality augmentation.

Leading newsrooms demonstrate responsible deployment. The Associated Press and Reuters explicitly position AI as an assistant to journalists, not a replacement. Their workflows treat all AI output as unvetted source material requiring editorial judgment.

When AI summarizes massive document troves for investigative journalists, it frees time for impactful reporting. But only when paired with rigorous human verification.

The distinction matters. Practitioners argue that dismissing all AI usage ignores genuine creative and productivity benefits when human expertise guides implementation.

The Real Divider: Automation Abuse vs AI Augmentation

Poor AI usage stems from an inability to craft solid prompts or provide specific instructions. This produces generic, inaccurate results. It’s often well-intentioned but lazy work from creators who fail to add texture, specificity, or style guidelines.

Low-effort automation represents something qualitatively different.

This is industrialized content where volume is the only goal: obituary spam, fake product reviews, useless blog posts created solely for clicks or advertising revenue.

Deliberate spam operations occupy the darkest category. These are the product of coordinated human decisions rather than AI malfunctions. Nation-state disinformation campaigns like Russia’s “Meliorator” or China’s “Spamouflage” weaponize the technology. Deepfake fraud calls cost companies millions. Paper mills flood academic journals with fabricated research.

High-quality AI-augmented workflows follow different principles entirely. Content begins with professional expertise (an AP journalist, a software architect, a domain expert). AI facilitates specific tasks like translation, data analysis, or shotlisting. Final human vetting occurs before any public transmission.

The spectrum from slop to augmentation is defined by intent, effort, and oversight.

A journalist using AI to summarize source documents while maintaining editorial control produces fundamentally different output than a content farm generating hundreds of articles daily with zero human review.

Governance, Oversight, and Trust

News organizations established formal policies prioritizing transparency and human oversight. The Associated Press requires that all generative AI output be treated as unvetted source material subject to editorial judgment. Wired explicitly prohibits publishing AI-generated stories, maintaining that writers must be “completely responsible for the accuracy and quality of every word.”

Thomson Reuters has committed to clear disclosure when AI assists with translations or summaries, even in supporting roles.

These policies reflect understanding that audience trust depends on human accountability for content accuracy.

Technical standards complement editorial policy. The Coalition for Content Provenance and Authenticity (C2PA) developed systems that create secure, verifiable links between digital assets and their origins. Cryptographic signatures record creation and editing history, identifying whether content was captured by a camera or generated by an AI model.
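The core idea of a signed provenance record can be sketched in a few lines. This is a deliberately simplified illustration and not the C2PA format: real C2PA manifests follow a standardized structure and use certificate-based signatures, whereas this sketch uses a shared-secret HMAC from the Python standard library. The key, field names, and helper functions are all hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical demo key; real provenance systems use certificate-backed keys.
DEMO_KEY = b"demo-signing-key"


def make_manifest(content: bytes, origin: str, history: list[str]) -> dict:
    """Bind a content hash, an origin label, and an edit history under one signature."""
    claim = {
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "origin": origin,    # e.g. "camera" or "ai_model"
        "history": history,  # ordered list of edit descriptions
    }
    payload = json.dumps(claim, sort_keys=True).encode("utf-8")
    signature = hmac.new(DEMO_KEY, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_manifest(content: bytes, manifest: dict) -> bool:
    """Check that the claim is untampered and still matches the content bytes."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode("utf-8")
    expected = hmac.new(DEMO_KEY, payload, hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, manifest["signature"])
    content_ok = (
        hashlib.sha256(content).hexdigest() == manifest["claim"]["content_sha256"]
    )
    return signature_ok and content_ok


image = b"raw image bytes"
manifest = make_manifest(image, origin="ai_model", history=["generated", "cropped"])
print(verify_manifest(image, manifest))               # untouched content
print(verify_manifest(image + b"edited", manifest))   # content changed after signing
```

The sketch also shows the weakness of metadata-based provenance: the record only helps if it travels with the content and someone checks it.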

Google’s SynthID embeds invisible patterns into images that survive common edits like cropping or metadata removal. Software can detect these markers even when they’re imperceptible to human eyes.

But experts warn these systems are far from complete solutions. They require end-to-end compliance. Much of the content people describe as slop lacks provenance metadata, which limits how effective C2PA-style systems can be unless adoption becomes widespread.

Effective governance requires both technical standards and institutional commitment to human oversight.

How to Avoid Creating AI Slop

Independent creators face different constraints than newsrooms, but the principles translate. Avoiding the slop label requires shifting workflow from “worker” to “architect.”

Embed proprietary brand guidelines and style rules directly into the process. Use modular content libraries where AI stitches together pre-approved blocks, ensuring disclaimers and factual data remain vetted. Feed AI your own unstructured thoughts, first-party data, or verified numbers rather than letting it pull from generic web sources.

Specificity counters AI’s probabilistic patterns.

Vary sentence length dramatically, mixing short sentences with long ones. Use specific industry terminology and personal observations. Include at least three proper nouns and one concrete example per point. These tactics can help reduce the overly polished, rhythmic drone characteristic of generic AI text.
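One rough way to check rhythm is to measure how much sentence lengths vary in a draft. The sketch below is an assumption-laden heuristic, not an established metric: it splits on sentence-ending punctuation with a simple regular expression (which will misjudge abbreviations), and the function names are hypothetical.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Return the word count of each sentence, using a naive punctuation split."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def rhythm_report(text: str) -> dict:
    """Summarize sentence-length variation; a low spread suggests a monotone rhythm."""
    lengths = sentence_lengths(text)
    if not lengths:
        raise ValueError("text contains no sentences")
    return {
        "sentences": len(lengths),
        "mean_words": statistics.fmean(lengths),
        "stdev_words": statistics.pstdev(lengths),
        "shortest": min(lengths),
        "longest": max(lengths),
    }


draft = "Short one. This sentence is quite a bit longer than the first one. Tiny."
print(sentence_lengths(draft))
print(rhythm_report(draft))
```

A spread close to zero means every sentence is about the same length, which is one symptom of the drone described above. The number is a prompt for a human reread, not a verdict.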

Scan for banned phrases that signal low-effort generation. Eliminate “in today’s landscape,” “moreover,” and “furthermore.” These linguistic red flags mark content as machine-generated.
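A scan like this is easy to automate. The following is a minimal sketch: the phrase list contains only the three examples named above, and `find_banned_phrases` is a hypothetical helper, so extend both to match your own style guide.

```python
import re

# Only the phrases named in the text; add your own house-style entries.
BANNED_PHRASES = ["in today's landscape", "moreover", "furthermore"]


def find_banned_phrases(text, phrases=BANNED_PHRASES):
    """Return (phrase, character offset) pairs for each banned phrase found."""
    # Normalize curly apostrophes so "today’s" and "today's" both match.
    normalized = text.replace("\u2019", "'")
    hits = []
    for phrase in phrases:
        pattern = re.compile(r"\b" + re.escape(phrase) + r"\b", re.IGNORECASE)
        for match in pattern.finditer(normalized):
            hits.append((phrase, match.start()))
    return sorted(hits, key=lambda hit: hit[1])


draft = "Moreover, in today\u2019s landscape, teams ship faster."
for phrase, offset in find_banned_phrases(draft):
    print(f"offset {offset}: {phrase!r}")
```

A clean scan does not prove a draft is good. It only removes the most obvious tells before a human edit.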

Treat AI as a draft engine, not an infallible coworker.

Every statistic, quote, and person named requires verification through primary sources. AI functions as a text-completion tool, not an information tool. The final version must reflect a human’s messy, unique voice.

Track dwell time and bounce rate on AI-blended versus human-led assets. If engagement drops, that signals the content has slipped into the slop category and needs more human involvement.
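The comparison can be as simple as averaging the two metrics per content group. The sketch below uses made-up session data and hypothetical names and thresholds (`Session`, `needs_more_human_input`, a 20 percent dwell-time gap, a 10-point bounce-rate gap). Real analytics exports will have different fields, and the cutoffs should come from your own baseline.

```python
from dataclasses import dataclass
from statistics import fmean


@dataclass
class Session:
    group: str            # "ai_blended" or "human_led"
    dwell_seconds: float  # time spent on the page
    bounced: bool         # left without any further interaction


def summarize(sessions, group):
    """Average dwell time and bounce rate for one content group."""
    rows = [s for s in sessions if s.group == group]
    if not rows:
        raise ValueError(f"no sessions for group {group!r}")
    return {
        "avg_dwell": fmean([s.dwell_seconds for s in rows]),
        "bounce_rate": sum(s.bounced for s in rows) / len(rows),
    }


def needs_more_human_input(sessions, dwell_gap=0.2, bounce_gap=0.1):
    """Flag AI-blended content that clearly underperforms human-led content."""
    ai = summarize(sessions, "ai_blended")
    human = summarize(sessions, "human_led")
    dwell_worse = ai["avg_dwell"] < human["avg_dwell"] * (1 - dwell_gap)
    bounce_worse = ai["bounce_rate"] > human["bounce_rate"] + bounce_gap
    return dwell_worse or bounce_worse


# Made-up sample data, for illustration only.
sessions = [
    Session("ai_blended", 20.0, True),
    Session("ai_blended", 30.0, True),
    Session("human_led", 60.0, False),
    Session("human_led", 80.0, True),
]
print(summarize(sessions, "ai_blended"))
print(summarize(sessions, "human_led"))
print(needs_more_human_input(sessions))
```

With only a handful of sessions the difference could easily be noise, so treat the flag as a reason to look closer rather than as proof.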

Writers, developers, marketers, and educators all face the same fundamental requirement: maintain human judgment as the final authority on what gets published.

Final Analysis: The Signal Still Matters

The proliferation of AI slop represents a critical inflection point. The technology offers unprecedented capabilities for democratizing content creation. Its industrial-scale misuse threatens the viability of the digital commons.

Technical limits are real.

Hallucinations and the risk of model collapse mean unvetted synthetic media is structurally unreliable and potentially destructive to future development. The environmental costs are substantial. The economic sustainability remains questionable when a significant share of output produces minimal informational value.

But the technology itself is neutral. Human agency differentiates abuse from augmentation. An architect using AI to enhance taste, knowledge, and workflow produces fundamentally different results than automated spam operations.

Institutional standards are emerging. Leading newsrooms established human-in-the-loop requirements. Provenance systems like C2PA provide technical frameworks for rebuilding digital trust. These efforts matter.

Quality remains the ultimate strategy in environments saturated with synthetic noise.

Authentic human stories, proprietary data, and unique voices represent the only algorithm-proof competitive advantages. Generic content faces commoditization to zero. Specific, verified, human-guided content maintains value.

The label "slop" asserts a right to digital environments valuing genuine over generic, verified over merely plausible. By demanding higher standards, users and creators push back against the industrialized degradation of shared information spaces.

Separating signal from noise requires both technological solutions and cultural recommitment to human intentionality. The distinction isn't between using AI or rejecting it. The distinction is between thoughtful deployment guided by expertise versus automated output optimized solely for engagement metrics.

The signal still matters. It just requires human judgment to transmit it clearly.

Sources

Industry Standards & Guidelines

- Associated Press. "Artificial Intelligence." The Associated Press. https://www.ap.org/solutions/artificial-intelligence/

- Associated Press. "Standards around generative AI." The Associated Press. https://www.ap.org/the-definitive-source/behind-the-news/standards-around-generative-ai/

- Thomson Reuters. "AI Principles." Thomson Reuters. https://www.thomsonreuters.com/en/artificial-intelligence/ai-principles

- Coalition for Content Provenance and Authenticity (C2PA). "C2PA Implementation Guidance." https://spec.c2pa.org/specifications/specifications/2.3/guidance/Guidance.html

Research & Technical Documentation

- "AI-Generated 'Slop' in Online Biomedical Science Educational Videos: Mixed Methods Study of Prevalence, Characteristics, and Hazards to Learners and Teachers." PMC. https://pmc.ncbi.nlm.nih.gov/articles/PMC12634010/

- "Model collapse." Wikipedia. https://en.wikipedia.org/wiki/Model_collapse

- "AI slop." Wikipedia. https://en.wikipedia.org/wiki/AI_slop

Platform Policies

- Google Search Central. "What web creators should know about our March 2024 core update and new spam policies." Google Developers. https://developers.google.com/search/blog/2024/03/core-update-spam-policies

News & Analysis

- PBS NewsHour. "Merriam-Webster's word of the year for 2025 is AI 'slop'." PBS. https://www.pbs.org/newshour/nation/merriam-websters-word-of-the-year-for-2025-is-ais-slop

- InfluxMD. "AI-Generated Slop: When Humans Weaponize Technology." https://www.influxmd.com/blog/ai-generated-slop-when-humans-weaponize-technology