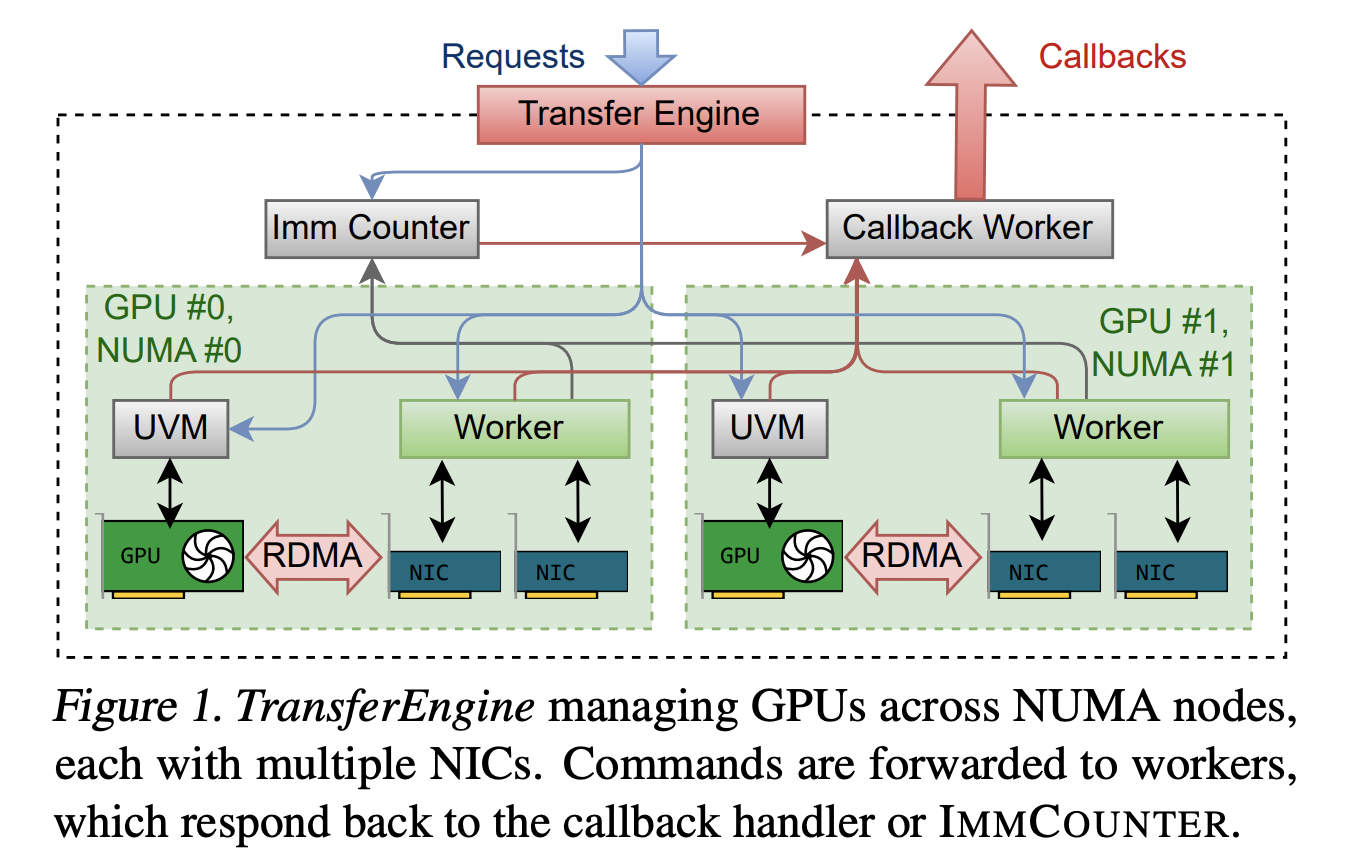

How can teams run trillion-parameter language models on existing mixed GPU clusters without costly new hardware or deep vendor lock-in? Perplexity’s research team has released TransferEngine and the surrounding pplx garden toolkit as open-source infrastructure for large language model systems. This provides a way to run models with up to 1 trillion

The post Perplexity AI Releases TransferEngine and pplx garden to Run Trillion Parameter LLMs on Existing GPU Clusters appeared first on MarkTechPost.