AI Model Cards for Beginners

The “nutrition label” for AI systems. The EU AI Act now mandates this documentation for high-risk AI. Learn what model cards are, how they satisfy three major regulatory frameworks, and how to build your first one in 30 days.

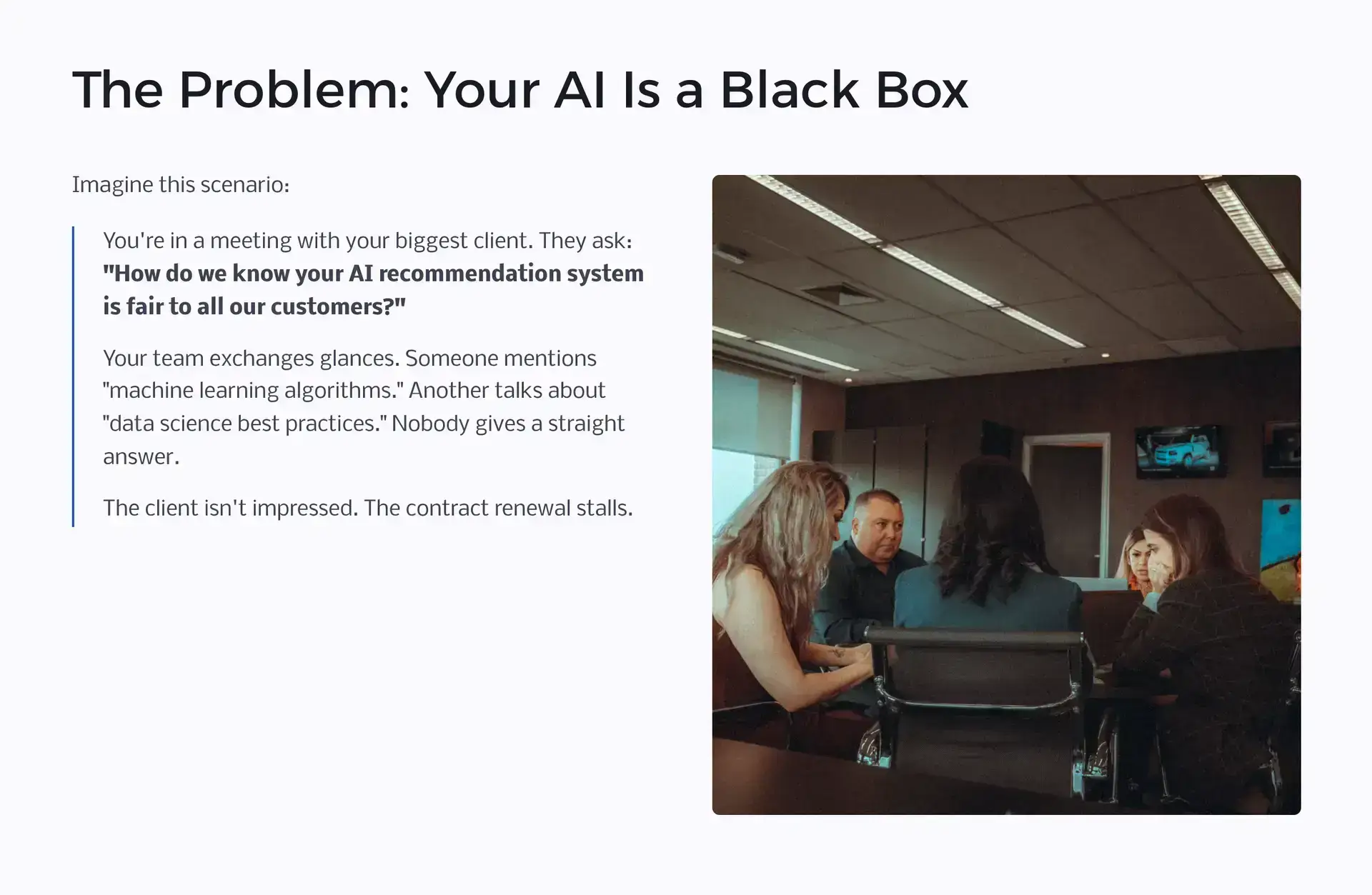

Your AI Is a Black Box to Everyone Who Matters

Most organizations cannot clearly explain how their AI systems work, what they excel at, or where they fall short. Not to customers. Not to regulators. Not even to their own leadership.

Picture this: You are in a renewal meeting with your largest client. They ask a direct question about your AI recommendation system: “How do we know it treats all our customers fairly?” Your team exchanges glances. Someone mentions “machine learning algorithms.” Another person references “data science best practices.” Nobody delivers a clear answer. The contract discussion stalls.

This scenario plays out across industries every day. The gap between what AI systems do and what organizations can explain about them creates real business risk: lost deals, compliance failures, and eroded trust.

Why This Is No Longer Optional

Model cards moved from best practice to legal requirement between 2024 and 2026. Here is the regulatory timeline driving urgency.

Regulatory Mandates Now in Effect

- EU AI Act (Annex IV, enforced Aug 2026): High-risk AI systems must have structured technical documentation covering 13 specific elements before deployment. Model cards satisfy this requirement. Fines: up to 3% of global annual turnover for non-compliance.

- ISO 42001 certification requirement: Organizations seeking ISO 42001 must maintain documented information about each AI system (Cl. 7.5). Without model cards or equivalent artifacts, certification auditors will flag gaps in Cl. 6.1, 8.1, and 8.4 as well.

- NIST AI RMF procurement requirements: US federal agencies are requiring NIST AI RMF alignment from vendors. GOVERN 1.1 and MEASURE 2.6 both call for documented AI system profiles. Model cards fulfill this at the system level.

- Enterprise procurement pressure: Fortune 500 procurement teams have added AI transparency questionnaires to vendor due diligence. “Can you provide a model card?” is now a standard question in enterprise sales cycles.

Not sure if your systems qualify as high-risk? Use the Model Card Depth Calculator (Section 9) to find out in under 2 minutes. Even systems that do not meet the high-risk threshold benefit from model card documentation for audit readiness and trust.

What Is a Model Card?

Think of it as a nutrition label for AI. Just as food labels list calories, ingredients, and allergen warnings, a model card states what matters about an AI system in plain language, for every reader from the people who built it to the regulators who audit it.

The concept was introduced by Mitchell et al. at Google in 2019. Their paper, “Model Cards for Model Reporting,” proposed a standardized way to document AI systems so that anyone, from executives to regulators to end users, can understand what a system does, how well it performs, and where its limits lie.

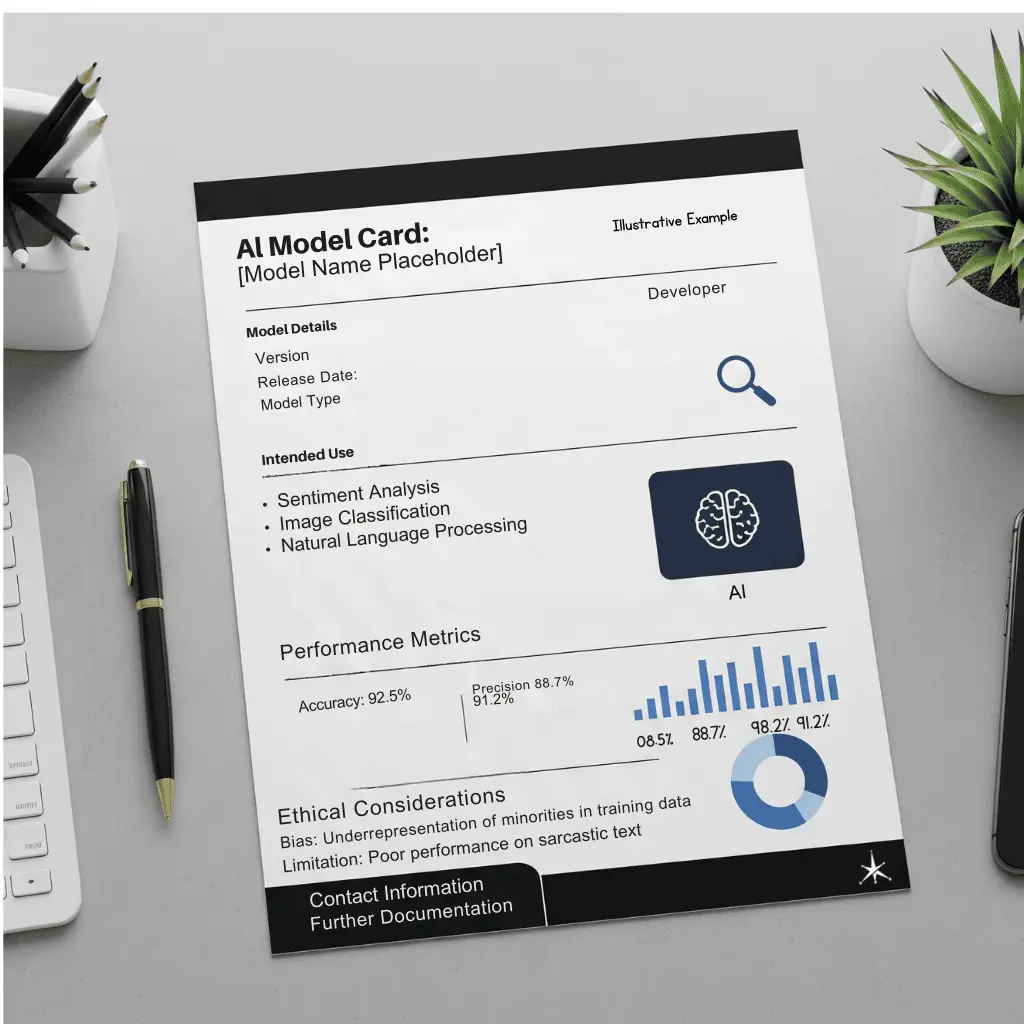

The 6 Essential Elements

Based on Google’s original Model Cards framework by Mitchell et al. (2019), every model card should cover these six areas.

01 System Identification

Name, version, owner, build team, and creation date. The “who, what, when” of the AI system.

02 Purpose and Usage

What business problem does it solve? Who should use it? What should it absolutely NOT be used for?

03 Performance Information

How well does the system work? Where does it excel? Where does it struggle? Include metrics and evaluation datasets.

04 Risk Assessment

What could go wrong? How are risks controlled? What bias testing has been performed, and what are the results?

05 Data Foundation

What data was used for training? What are its limitations? How current is it? Are there representation gaps?

06 Human Oversight

Who monitors this system? What is the review cadence? How do humans intervene if something goes wrong? What is the kill switch?
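To make the six elements concrete, here is a minimal Python sketch of a model card as a data structure. The class name, field names, and sample values are illustrative assumptions, not part of the Mitchell et al. framework or any specific toolkit. The point is only that each element becomes a field you can check for completeness.

```python
from dataclasses import dataclass, field, asdict
from datetime import date


@dataclass
class ModelCard:
    """Hypothetical container mirroring the six elements described above."""
    # 01 System Identification
    name: str
    version: str
    owner: str
    created: date
    # 02 Purpose and Usage
    intended_use: str
    out_of_scope_uses: list[str] = field(default_factory=list)
    # 03 Performance Information
    metrics: dict[str, float] = field(default_factory=dict)
    evaluation_datasets: list[str] = field(default_factory=list)
    # 04 Risk Assessment
    known_risks: list[str] = field(default_factory=list)
    bias_testing_summary: str = ""
    # 05 Data Foundation
    training_data_sources: list[str] = field(default_factory=list)
    data_limitations: list[str] = field(default_factory=list)
    # 06 Human Oversight
    monitor: str = ""
    review_cadence: str = ""
    shutdown_procedure: str = ""

    def missing_fields(self) -> list[str]:
        """Return the names of fields that are still empty."""
        return [k for k, v in asdict(self).items() if v in ("", [], {}, None)]


# Illustrative values only; a first draft usually starts mostly empty.
card = ModelCard(
    name="Credit Risk Scorer",
    version="1.0.0",
    owner="Sarah Chen, Head of Product Analytics",
    created=date(2025, 1, 15),
    intended_use="Rank commercial loan applications for manual review.",
)
print(card.missing_fields())
```

A draft like this shows at a glance which of the six areas still need input from the technical, business, or compliance side.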

Framework Alignment

Model cards map directly to requirements across three major regulatory and standards frameworks. Here is exactly where each one applies.

EU AI Act

| Requirement | What It Means for Model Cards | Key Articles |
|---|---|---|
| Risk Management System | Model cards document risk identification, assessment, and mitigation for each AI system | Art. 9 |
| Technical Documentation | Model cards satisfy the requirement for structured technical documentation of high-risk AI | Art. 11 |
| Data Governance | Training data documentation in model cards addresses data quality and representativeness requirements | Art. 10 |
| Human Oversight | Model card oversight sections document monitoring, escalation, and kill-switch procedures | Art. 14 |
| Technical Standards | Model cards provide the structured format needed for Annex IV documentation requirements | Annex IV |

NIST AI RMF

| Function | How Model Cards Support It | Reference |
|---|---|---|
| MAP | Model cards document intended context, users, and known risks during the mapping phase. MAP 1.1 establishes intended purpose and context of use; MAP 2.1 defines specific tasks and methods the AI system supports. | MAP 1.1, 2.1 |
| MEASURE | Performance metrics, fairness testing, and evaluation datasets recorded in model cards fulfill measurement requirements. MEASURE 2.6 covers safety and reliability; MEASURE 2.11 covers fairness and bias evaluation. | MEASURE 2.6, 2.7, 2.11 |
| MANAGE | Risk controls, limitations, and human oversight documented in model cards support risk management. MANAGE 1.3 establishes risk treatment options (mitigate, transfer, avoid, accept). | MANAGE 1.3, 2.2 |
| GOVERN | Model card review cadences, ownership, and escalation paths align with governance structure requirements. GOVERN 1.1 establishes organizational policies. | GOVERN 1.1, 1.4 |

ISO 42001

| Clause | Model Card Connection | Reference |
|---|---|---|
| Cl. 4 Context | Model cards document organizational context and stakeholder needs for each AI system | ISO 42001 Cl. 4.1, 4.2 |
| Cl. 6.1 Risk Assessment | Model cards document the OUTPUT of the risk assessment process (identified risks, mitigations, residual risk). The assessment process itself must be separate; model cards are the evidence artifact, not a substitute for the process. | ISO 42001 Cl. 6.1 |
| Cl. 7.5 Documentation | Model cards serve as the primary documented information for AI system management | ISO 42001 Cl. 7.5 |
| Cl. 8.1 Planning | Intended use and deployment constraints in model cards support operational planning | ISO 42001 Cl. 8.1 |
| Cl. 8.4 AI Impact | Ethical considerations and fairness testing map to AI system impact assessment | ISO 42001 Cl. 8.4 |
| Cl. 9.1 Monitoring | Human oversight and review cadences support performance evaluation requirements | ISO 42001 Cl. 9.1 |

Important: Annex IV lists 13 required documentation elements for high-risk AI systems. The table below maps each element to the corresponding model card section. If your system is NOT high-risk under Annex III, you are not legally required to follow Annex IV, though doing so is best practice.

| Annex IV Element | Model Card Section | Notes |
|---|---|---|
| 1. General description of the AI system | Section 1: System Identification | Name, version, purpose, developer, deployment context |
| 2. Intended purpose, persons responsible, interaction with other systems | Section 2: Purpose and Usage | Intended users, out-of-scope uses, system integrations |
| 3. Version of software and firmware | Section 1: System Identification | Version history and update log |
| 4. Description of all forms of deployment | Section 2: Purpose and Usage | API, embedded, SaaS, on-premise deployment notes |
| 5. Hardware requirements | Section 1: System Identification (extended) | Typically in technical appendix; reference in card |
| 6. Training, validation, and testing data | Section 5: Data Foundation | Source, size, date range, representation gaps |
| 7. Logging capabilities | Section 6: Human Oversight | Audit logging, monitoring frequency, log retention |
| 8. Performance metrics and accuracy | Section 3: Performance Information | Primary metrics, evaluation dataset, thresholds |
| 9. Cybersecurity measures | Section 4: Risk Assessment | Adversarial robustness, access controls, data security |
| 10. Description of changes to the system over its lifecycle | Section 1: System Identification (version history) | Change log with dates and rationale |
| 11. List of applicable harmonised standards applied | Section 4: Risk Assessment | ISO 42001, NIST AI RMF alignment notes |
| 12. Copy of the EU declaration of conformity | External document (reference in card) | Link or attach separately; reference in Section 6 |
| 13. Post-market monitoring plan | Section 6: Human Oversight | Review cadence, performance thresholds, escalation path |

ℹ Elements 5 and 12 are typically separate documents referenced in the model card rather than included inline. Your card should note where these documents are stored and who is responsible for them.
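The Annex IV mapping above can be turned into a simple coverage check. The sketch below copies the element-to-section mapping from the table and reports which elements remain open, given the card sections you have completed. The dictionary and function names are hypothetical, and section names follow the six elements listed earlier.

```python
# Mapping copied from the table above: Annex IV element number -> model card section.
ANNEX_IV_TO_SECTION = {
    1: "System Identification",
    2: "Purpose and Usage",
    3: "System Identification",
    4: "Purpose and Usage",
    5: "System Identification",   # extended; usually a technical appendix
    6: "Data Foundation",
    7: "Human Oversight",
    8: "Performance Information",
    9: "Risk Assessment",
    10: "System Identification",  # version history
    11: "Risk Assessment",
    12: "External document",      # declaration of conformity, referenced in the card
    13: "Human Oversight",
}


def uncovered_elements(completed_sections: set[str]) -> list[int]:
    """Return Annex IV element numbers whose card section is not yet complete."""
    return sorted(
        number
        for number, section in ANNEX_IV_TO_SECTION.items()
        if section not in completed_sections
    )


done = {"System Identification", "Purpose and Usage", "Data Foundation"}
open_items = uncovered_elements(done)
print(open_items)  # [7, 8, 9, 11, 12, 13]
```

A completed section only tells you the card has a place for the element. Whether the content is sufficient is still a judgment for your compliance reviewer.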

📐 When Multiple Frameworks Apply to You

If your system is high-risk under the EU AI Act AND you are pursuing ISO 42001 certification, both frameworks apply simultaneously. EU AI Act requirements are generally more prescriptive (Annex IV specifies 13 exact elements). ISO 42001 is a management system standard that requires documented information but does not specify every field. Satisfying Annex IV typically satisfies ISO 42001 Cl. 7.5 in full, while also addressing Cl. 6.1 and 8.4. Prioritize Annex IV compliance for the documentation structure, then map it to ISO clauses.

Who Benefits and How

Model cards create value for different stakeholders in different ways. Each role gets exactly what they need from the same document.

Executives

Risk visibility without technical complexity. Clear accountability and compliance posture at a glance.

- Board-ready AI risk summaries

- Named owners for every system

- Regulatory readiness status

- Incident escalation paths

Data Scientists

Reproducibility and institutional knowledge. No more reverse-engineering what past teams built.

- Training data provenance

- Performance baselines

- Architecture decisions logged

- Version history tracking

Compliance Officers

Audit-ready documentation that maps to regulatory requirements without chasing down engineers.

- Framework alignment evidence

- Bias audit results

- Risk treatment documentation

- Review cadence proof

Customers & Partners

Transparency and trust. Clear answers to “How does your AI work?” and “Is it fair?”

- Plain-language capabilities

- Known limitations disclosed

- Fairness testing results

- Ongoing monitoring proof

📌 Who Actually Builds Model Cards at Your Organization?

You do not need a dedicated AI documentation team. Most organizations split model card authorship across three existing roles, covering the technical, business, and compliance perspectives.

In smaller organizations, one person may wear multiple hats. A technical co-founder who also handles compliance can author the full card alone. What matters is that all three perspectives are covered, not that they come from separate people.

Real-World Examples

Leading organizations already publish model cards and use them as competitive advantages. Here is how they do it.

Google

Google publishes model cards for major AI systems including Gemma, documenting capabilities, limitations, and intended uses. Their Model Card Toolkit is open-source.

Synthesia

AI Video Company, ISO 42001 Certified

Synthesia was among the first AI video companies to achieve ISO 42001 certification. Model cards serve as key documentation in their governance framework and have become part of their enterprise sales proposition.

KPMG Australia

Early ISO 42001 Certification

KPMG Australia was among the first organizations globally to achieve ISO 42001 certification, with systematic AI documentation including model cards as evidence of management practices across their AI portfolio.

AWS SageMaker

Enterprise Platform Integration

Amazon Web Services built model card functionality directly into SageMaker, enabling automated generation, version tracking, and governance workflows at enterprise scale.

Hugging Face

Largest Open Model Card Ecosystem

Hugging Face hosts over 1 million models, with model cards built on a standardized template. Their system uses YAML metadata for machine-readable fields (license, language, datasets, evaluation metrics), a 20-section annotated template with 3 authorship roles (developer, sociotechnic, project organizer), and built-in widgets for evaluation results, CO2 emissions tracking, and base model lineage.

Regional Financial Services Firm

Mid-Market, 280 Employees, ISO 42001 Pathway

A US-based commercial lender with 280 employees operates three AI systems: a credit risk model, a document classification tool, and a fraud detection algorithm. After a client RFP requested “AI transparency documentation,” the compliance team spent three weeks building their first model card for the credit risk model using TechJacks templates. Drafting the card revealed a documentation gap: the training data cutoff had never been recorded. The gap was fixed before the audit, and the enterprise contract was signed.

Tags: High-Risk under EU AI Act Annex III; NIST MAP + MEASURE

Implementation Roadmap

A practical 30-day plan to go from zero to your first completed model card. Start with one system, build the muscle, then scale.

Audit and Select

Week 1 (Days 1-7)

Identify your AI systems. Pick the one that generates the most customer questions or carries the highest business risk if it fails. Start there, not with everything at once.

Assemble the Team

Week 2 (Days 8-14)

Bring together three perspectives: someone who understands the technology, someone who understands the business purpose, and someone who can write clearly for non-technical readers. One person rarely covers all three well, so plan for collaboration.

Embed model card creation into your AI development lifecycle: draft the card in Week 1 of any new AI project, update it at each major milestone, and require a sign-off on the card as a gate before production deployment. This makes documentation continuous rather than a last-minute audit scramble.
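The sign-off gate described above can be expressed as a small check in a release script. This is a sketch under stated assumptions: `CardStatus` and `can_deploy` are hypothetical names, and how you store sign-offs (ticket, registry, spreadsheet) is up to your process.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CardStatus:
    """Hypothetical record of where a system's model card stands."""
    system: str
    drafted: bool
    signed_off_by: Optional[str]  # named approver, or None if not yet signed


def can_deploy(status: CardStatus) -> bool:
    """Allow production deployment only if the card is drafted and signed off."""
    has_signer = bool(status.signed_off_by and status.signed_off_by.strip())
    return status.drafted and has_signer


pending = CardStatus("fraud-detection", drafted=True, signed_off_by=None)
approved = CardStatus("credit-risk", drafted=True, signed_off_by="Sarah Chen")
print(can_deploy(pending), can_deploy(approved))  # False True
```

Wiring a check like this into the deployment pipeline keeps the card from becoming an afterthought.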

Document

Week 3 (Days 15-21)

Work through the 6-section framework. Approximate information is more valuable than no information. You can refine over time. Most organizations discover they already have much of the data scattered across teams and systems.

Validate and Scale

Week 4 (Days 22-30)

Test the model card with someone unfamiliar with your AI system. If they can understand it and explain it back, the documentation is working. Then create your template and process for the next system.

Common Questions

Direct answers to the questions executives and practitioners ask most about model cards.

How long does it take to create a model card?

An initial model card for a single AI system typically takes 2-4 weeks of focused collaboration between technical, business, and compliance teams. The first card takes the longest because you are establishing templates and processes. Subsequent cards can often be completed in 1-2 weeks once the framework is in place.

What if we use third-party AI?

You still need model cards for third-party AI. Your card documents how you use the system, what data you feed it, your performance requirements, and your risk controls. The EU AI Act holds deployers accountable regardless of who built the system.

Ask your vendor these 10 questions:

- What is the model’s training data composition and cutoff date?

- What performance metrics are publicly documented, and on which evaluation datasets?

- What demographic groups were included in bias and fairness testing?

- What are the system’s documented out-of-scope uses?

- Who is the named responsible person for this model’s performance and updates?

- What is the model retraining and version update schedule?

- How are incidents (errors, bias events, outages) reported and tracked?

- Do you have an EU AI Act Annex IV-compliant technical documentation package?

- Will you notify us when the model is significantly retrained or updated?

- What contractual protections exist if the model’s performance degrades materially?

If a vendor refuses to answer any of the first five questions, treat that as a risk signal. Document their refusal in your model card’s risk assessment section.
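If you track vendor responses in a spreadsheet or script, the rule above is easy to automate. The sketch below assumes you record answers as a mapping from question number (in the order listed above) to whether the vendor gave a usable answer. The function name is hypothetical.

```python
def unanswered_core_questions(answered: dict[int, bool]) -> list[int]:
    """Return which of questions 1-5 the vendor did not answer.

    Questions missing from the mapping are treated as unanswered.
    """
    return [q for q in range(1, 6) if not answered.get(q, False)]


# Example: the vendor skipped question 3 (demographic groups in fairness testing).
responses = {1: True, 2: True, 3: False, 4: True, 5: True, 6: True}
gaps = unanswered_core_questions(responses)
print(gaps)  # [3]
```

Any non-empty result is worth recording in the card's risk assessment section.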

Are model cards legally required?

The EU AI Act (Articles 9, 11, and Annex IV) mandates technical documentation for high-risk AI systems, which includes model card-equivalent information. NIST AI RMF recommends systematic documentation across all AI risk tiers. ISO 42001 certification requires documented AI management practices. Even where not explicitly mandated, model cards satisfy documentation requirements across multiple frameworks simultaneously.

Will a model card expose our proprietary information?

No. Model cards document what your AI does and how well it performs, not proprietary algorithms, training techniques, or trade secrets. The focus is on capabilities, limitations, intended uses, and risk controls. Google, NVIDIA, and other leaders publish model cards publicly without exposing competitive advantages.

What benefits do organizations report?

Organizations report four benefits: faster regulatory audit preparation; shorter enterprise sales cycles where buyers request AI transparency documentation; quicker incident response, because system specifications and responsible parties are already documented; and stronger competitive positioning as AI governance maturity becomes a differentiator in procurement decisions.

How do we keep model cards up to date?

Build model card updates into your development lifecycle. Trigger updates when: significant model retraining occurs, performance metrics shift by more than your threshold (e.g. 5%), new data sources are added, or risk assessments change. Schedule quarterly reviews regardless, and annual full audits. Tools like the TensorFlow Model Card Toolkit and AWS SageMaker Model Cards can automate portions of this process.
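The update triggers listed above can be checked with a few lines of code. This sketch makes two assumptions you should adjust: the performance threshold is read as a relative change against the documented baseline, and "quarterly" is approximated as 90 days. All names are hypothetical.

```python
from datetime import date


def update_reasons(
    baseline: float,
    current: float,
    last_review: date,
    today: date,
    *,
    retrained: bool = False,
    new_data_sources: bool = False,
    risk_changed: bool = False,
    threshold: float = 0.05,
    review_interval_days: int = 90,
) -> list[str]:
    """Return the reasons, if any, that the model card needs an update."""
    reasons = []
    if retrained:
        reasons.append("significant retraining")
    # Relative shift against the documented baseline (an assumption, see above).
    if baseline != 0 and abs(current - baseline) / abs(baseline) > threshold:
        reasons.append("performance shift")
    if new_data_sources:
        reasons.append("new data sources")
    if risk_changed:
        reasons.append("risk assessment changed")
    if (today - last_review).days >= review_interval_days:
        reasons.append("quarterly review due")
    return reasons


# Accuracy dropped from 0.90 to 0.84, about a 6.7% relative shift.
reasons = update_reasons(0.90, 0.84, date(2025, 1, 1), date(2025, 3, 1))
print(reasons)  # ['performance shift']
```

An empty list means no trigger fired. The scheduled review still applies once the interval has passed.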

⚠ Common Model Card Mistakes to Avoid

- Treating it as a one-time deliverable. Model cards must be updated when the model is retrained, performance thresholds shift, or new data sources are added.

- Writing only for technical readers. Executives, legal teams, and customers all read model cards. If a non-engineer cannot understand the “intended use” section, rewrite it.

- Skipping the “out-of-scope uses” field. This is the single most legally protective section. If the card does not say what the system should NOT do, any misuse becomes your liability.

- Assuming fairness testing across 4 groups is sufficient. Test for the demographic groups most relevant to your specific use case and operating context. Financial services needs different groups than healthcare.

- Leaving “owner” blank or set to a team. The owner must be a named individual. “Data Science Team” is not accountable. “Sarah Chen, Head of Product Analytics” is.
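Some of these mistakes can be caught mechanically before a reviewer ever reads the card. The sketch below checks a card stored as a plain dictionary. The key names and the keyword heuristic for spotting team names are assumptions for illustration, so expect to tune them.

```python
# Words that suggest the owner field names a group rather than a person (heuristic).
TEAM_WORDS = ("team", "department", "group", "committee")


def lint_card(card: dict) -> list[str]:
    """Return a list of common problems found in a model card dictionary."""
    problems = []
    owner = str(card.get("owner", "")).strip()
    if not owner:
        problems.append("owner is blank")
    elif any(word in owner.lower().split() for word in TEAM_WORDS):
        problems.append("owner looks like a team, not a named individual")
    if not card.get("out_of_scope_uses"):
        problems.append("out-of-scope uses are not listed")
    if not card.get("last_updated"):
        problems.append("no last-updated date, so staleness cannot be checked")
    return problems


weak = {"owner": "Data Science Team"}
solid = {
    "owner": "Sarah Chen, Head of Product Analytics",
    "out_of_scope_uses": ["Fully automated loan denial"],  # illustrative entry
    "last_updated": "2025-03-01",
}
print(lint_card(weak))
print(lint_card(solid))  # []
```

Checks like these do not replace human review. They cannot tell whether the intended-use section is readable to a non-engineer.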

Model Card Depth Calculator

Not every AI system needs the same documentation depth. Answer up to three yes/no questions (which ones you are asked depends on your earlier answers) to map your system to a documentation tier with its required sections, review cadence, and framework obligations.

Does your organization use this AI system to make or influence decisions that affect real people?

This includes: hiring or HR screening, credit or loan decisions, insurance pricing, healthcare triage or diagnosis, content moderation, benefit eligibility, education placement, law enforcement scoring, or customer pricing. It also includes systems that feed into human decisions in these areas (not just fully automated ones).

ℹ Not sure? If your system outputs a score, recommendation, or classification that a human then acts on in any of these domains, answer Yes.

Could incorrect outputs cause financial, physical, or legal harm?

Denied loans, misdiagnosis, wrongful termination, safety incidents, discriminatory outcomes, or regulatory penalties.

Is this system classified as high-risk under EU AI Act Annex III?

Biometrics, critical infrastructure, education access, employment, essential services, law enforcement, migration/border, or administration of justice.

Is this system customer-facing or used in regulated operations?

Product recommendations, chatbots, automated reporting, financial calculations, supply chain optimization, or public-facing content generation.

Does this system operate in a domain covered by the EU AI Act or similar regulation?

Financial services, healthcare, education, employment, law enforcement, critical infrastructure, or consumer protection.

Does this system access sensitive data or integrate with critical business systems?

PII, financial data, trade secrets, production databases, authentication systems, or infrastructure controls.

Critical: Full Annex IV Documentation

This system requires the highest level of documentation. EU AI Act Annex IV mandates specific technical documentation elements. Non-compliance carries penalties up to 3% of global annual turnover.

Required Sections (All 6)

- System Identity and Purpose

- Training Data and Methodology

- Performance Metrics and Evaluation

- Ethical Considerations and Bias Testing

- Limitations and Known Failure Modes

- Human Oversight and Escalation

Additional Requirements

- Bias audit with disaggregated metrics

- Human oversight plan (Art. 14)

- Risk management system (Art. 9)

- Data governance documentation (Art. 10)

- Incident reporting protocol (Art. 73)

- Conformity assessment evidence

High Priority: Detailed Model Card

This system carries significant risk exposure through people-impact, regulatory scope, or both. Full documentation protects the organization from liability and accelerates audit readiness.

Required Sections (5 of 6)

- System Identity and Purpose

- Performance Metrics and Evaluation

- Ethical Considerations and Bias Testing

- Limitations and Known Failure Modes

- Human Oversight and Escalation

- Training Data (recommended if available)

Additional Requirements

- Fairness testing across protected groups

- Escalation path documented

- Performance thresholds defined

- Data provenance (if you built the model)

- Third-party vendor documentation request

Standard-Plus: Model Card with Privacy Focus

This system influences decisions about people without direct harm potential, but the people-impact means privacy, fairness, and transparency documentation is essential. Reputational and trust risks drive the documentation scope.

Required Sections (5 of 6)

- System Identity and Purpose

- Performance Metrics and Evaluation

- Ethical Considerations and Bias Testing

- Limitations and Known Failure Modes

- Human Oversight and Escalation

- Training Data (if applicable)

Additional Requirements

- Data provenance and PII handling documented

- Consent and opt-out mechanisms described

- Fairness testing for affected populations

- Privacy impact assessment reference

Standard: Recommended Documentation

This system benefits from model card documentation even without strict regulatory requirements. Documentation builds customer trust, speeds incident response, and prepares you for regulatory changes that are coming.

Required Sections (4 of 6)

- System Identity and Purpose

- Performance Metrics and Evaluation

- Limitations and Known Failure Modes

- Human Oversight (owner + escalation)

- Ethical Considerations (recommended)

- Training Data (if available)

Recommended Actions

- Named system owner documented

- Performance baselines established

- Customer-facing transparency statement

- Vendor documentation on file

Lightweight: Basic Internal Documentation

Internal-only tools with no direct people impact or sensitive data access still benefit from basic documentation. The effort is minimal and prevents knowledge loss when team members leave or systems change hands.

Required Sections (3 of 6)

- System Identity and Purpose

- Limitations and Known Failure Modes

- Ownership (who maintains this)

- Performance Metrics (if measured)

- Other sections as needed

Recommended Actions

- Named owner in your AI inventory

- Known limitations written down

- Integration points documented

All Documentation Tiers at a Glance

| Tier | Sections | Review Cadence | Bias Audit | External Audit | Framework Scope |
|---|---|---|---|---|---|
| Critical | 6 of 6 | Quarterly + triggered | Required | Annual | EU AI Act + NIST + ISO |
| High | 5 of 6 | Semi-annual + triggered | Required | Recommended | EU AI Act + NIST + ISO |
| Std-Plus | 5 of 6 | Semi-annual | Required | Recommended | NIST + ISO |
| Standard | 4 of 6 | Annual + major changes | Optional | Not required | NIST + ISO |
| Lightweight | 3 of 6 | Annual | Optional | Not required | ISO |

ℹ Tier defensibility: These classifications are based on EU AI Act Annex III, NIST AI RMF risk guidance, and ISO 42001 Cl. 6.1 requirements. If your AI Governance Committee determines a different tier is appropriate based on organizational context, document your reasoning as a formal risk acceptance record. The decision tree provides a starting point, not a final legal determination.
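For teams that want to apply the tiers across an AI inventory, the summary table can be encoded as data. The `TIERS` values below are copied from the table. The routing in `pick_tier` is an assumed reconstruction from the six questions and the tier descriptions, not the calculator's actual logic, so treat it as a starting point and confirm the branching with your governance committee.

```python
from typing import NamedTuple


class Tier(NamedTuple):
    sections: int
    review_cadence: str
    bias_audit: str
    external_audit: str
    frameworks: str


# Values copied from the "All Documentation Tiers at a Glance" table.
TIERS = {
    "Critical": Tier(6, "Quarterly + triggered", "Required", "Annual", "EU AI Act + NIST + ISO"),
    "High": Tier(5, "Semi-annual + triggered", "Required", "Recommended", "EU AI Act + NIST + ISO"),
    "Std-Plus": Tier(5, "Semi-annual", "Required", "Recommended", "NIST + ISO"),
    "Standard": Tier(4, "Annual + major changes", "Optional", "Not required", "NIST + ISO"),
    "Lightweight": Tier(3, "Annual", "Optional", "Not required", "ISO"),
}


def pick_tier(
    affects_people: bool,
    could_harm: bool = False,
    annex_iii: bool = False,
    customer_facing: bool = False,
    regulated_domain: bool = False,
    sensitive_access: bool = False,
) -> str:
    """Map yes/no answers to a tier name (ASSUMED branching, see lead-in)."""
    if affects_people:
        if not could_harm:
            return "Std-Plus"
        return "Critical" if annex_iii else "High"
    if customer_facing:
        return "High" if regulated_domain else "Standard"
    return "Standard" if sensitive_access else "Lightweight"


tier = pick_tier(affects_people=True, could_harm=True, annex_iii=True)
print(tier, TIERS[tier].review_cadence)  # Critical Quarterly + triggered
```

If your committee overrides the result, record the reasoning as a risk acceptance record, as noted above.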

Templates and Tools

Get started with ready-made templates built by TechJacks Solutions. Each one maps to the frameworks covered in this article.

Model Card Policy Template

Organizational policy defining when model cards are required, who is responsible, and what standards to follow.

Model Card Development Procedure

Step-by-step procedure for creating, reviewing, and maintaining model cards across your AI portfolio.

Model Card Assessment Checklist

Quality assurance checklist to verify your model cards meet regulatory requirements and internal standards.

Free AI Governance Toolkit

Quick-start checklist, risk decision tree, regulatory mapping, and more. Everything you need to launch governance today.

AI Use Case Tracker

Track every AI system in your organization with a 40-field inventory. The foundation for knowing which systems need model cards.

AI Governance Hub

The complete governance ecosystem: frameworks, committee setup, lifecycle management, and 15+ linked resources.

Where to Go Next

Model cards are one piece of a complete AI governance program. These resources help you build the rest.