AI Governance Hub Guide & Resources | Tech Jacks


AI Governance Hub

From Strategy to Implementation — Built from 130+ Authoritative Sources Across the Hub

Derrick D. Jackson | CISSP, CRISC, CCSP | Updated March 2026

Most governance frameworks give you principles.

We give you operations.

78% of businesses (McKinsey, 2024) use AI across functions. Only 28% have active CEO involvement in AI strategy. The gap between “we have an AI policy” and “we actually govern AI” is where organizations fail audits, face penalties, and lose trust.

| Principle | Operation |
|---|---|
| “Establish governance” | 8-stage committee with 120-day rollout + RACI matrix |
| “Manage risk” | 5×5 risk matrix + Shadow AI detection + vendor due diligence |
| “Comply with regulations” | Stage-by-stage ISO 42001 + NIST AI RMF + EU AI Act mapping |
| “Assign accountability” | Named roles per activity with decision tollgates |

Your Governance Ecosystem

Seven lanes of governance — each with dedicated resources, tools, and guidance.

Your AI Governance Roadmap

Can’t start with a formal charter? Start by inventorying what you already have.

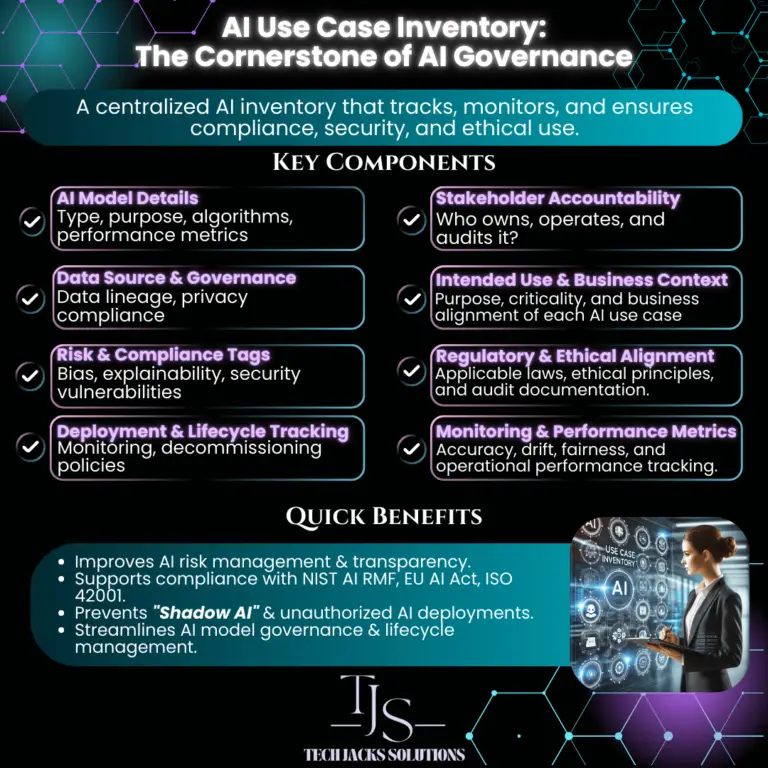

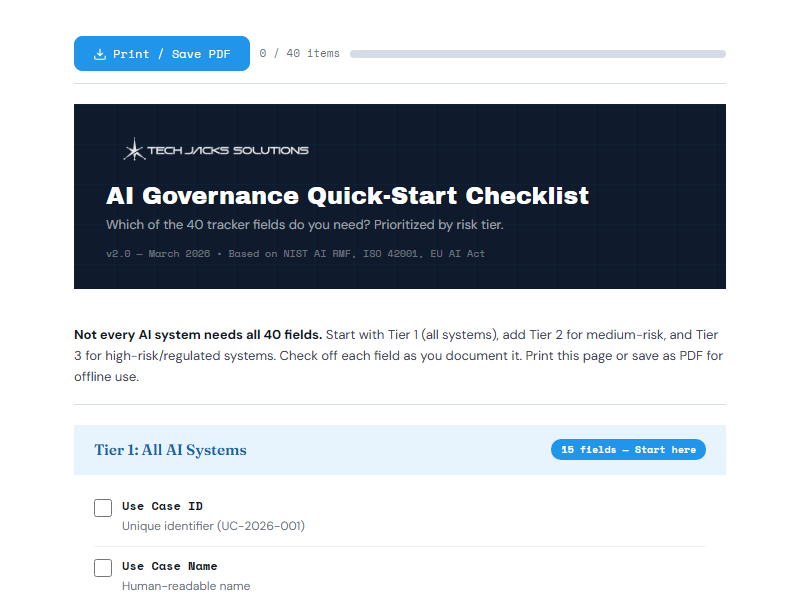

Inventory & Visibility

Start here if AI is already in use at your organization. Before you can govern AI, you need to know what's running — and research shows 71% of employees (Software AG, 2024) believe productivity gains are worth the risks of using unauthorized AI tools. That's Shadow AI, and it's your biggest blind spot.

Our AI Use Case Inventory framework defines 8 key components every tracker needs, and our 40-field tracking template covers everything from data access permissions to EU AI Act risk tier classification. Most organizations discover 2-3x more AI usage than they expected during their first inventory.

The goal isn't to block AI adoption — it's to gain visibility. Start with your highest-risk departments (HR, finance, customer-facing operations), document what's running, who owns it, and what data it touches. Then work outward.
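A minimal sketch of how an inventory row and a "highest-risk departments first" work order might look, assuming a tiny hypothetical subset of fields (`name`, `department`, `owner`, `data_touched`, `sanctioned`). This is not the 40-field template, and the department labels are illustrative stand-ins for HR, finance, and customer-facing operations.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Assumption: illustrative labels for the highest-risk starting departments.
HIGH_RISK_DEPARTMENTS = {"HR", "Finance", "Customer Operations"}


@dataclass
class AIUseCase:
    """One inventory row (a hypothetical subset, not the 40-field template)."""
    name: str
    department: str
    owner: Optional[str] = None          # None = nobody has claimed the system yet
    data_touched: List[str] = field(default_factory=list)
    sanctioned: bool = False             # False = found outside approved channels (Shadow AI)


def inventory_order(use_cases: List[AIUseCase]) -> List[AIUseCase]:
    """High-risk departments first; within each group, unowned systems first."""
    return sorted(
        use_cases,
        key=lambda uc: (uc.department not in HIGH_RISK_DEPARTMENTS, uc.owner is not None, uc.name),
    )


def documentation_gaps(use_cases: List[AIUseCase]) -> List[str]:
    """Names of entries still missing an owner or any documented data access."""
    return [uc.name for uc in use_cases if uc.owner is None or not uc.data_touched]


inventory = [
    AIUseCase("Marketing copy assistant", "Marketing", owner="j.doe",
              data_touched=["campaign briefs"], sanctioned=True),
    AIUseCase("Resume screener", "HR", data_touched=["applicant CVs"]),
    AIUseCase("Invoice triage bot", "Finance", owner="a.lee", data_touched=["invoices"]),
]
print([uc.name for uc in inventory_order(inventory)])
# ['Resume screener', 'Invoice triage bot', 'Marketing copy assistant']
print(documentation_gaps(inventory))
# ['Resume screener']
```

Sorting on a tuple key keeps the rule readable: department risk decides first, and an unowned system in a high-risk department surfaces at the top of the worklist.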

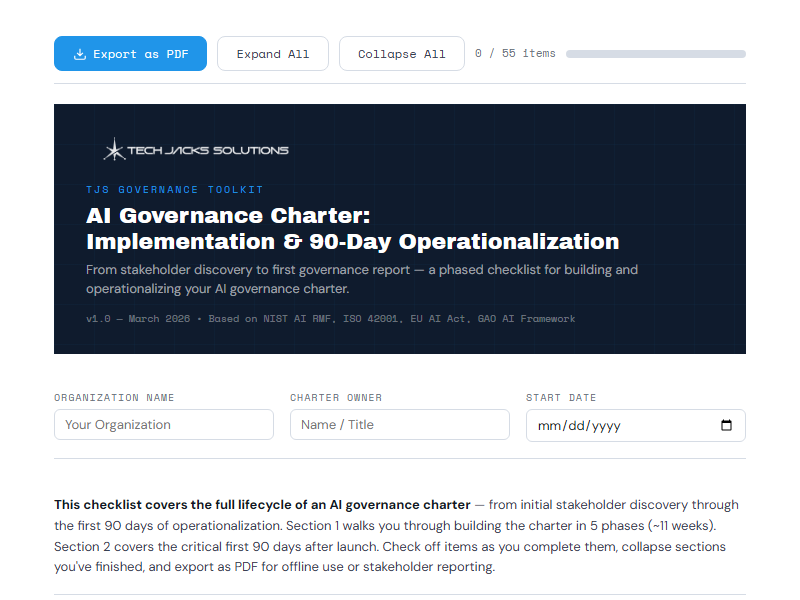

Governance Charter

The charter is your organization's commitment to responsible AI — it establishes who has authority, what the scope covers, and how decisions will be made. Without it, governance is just good intentions with no enforcement mechanism.

Our charter guide walks through 5 foundational pillars with a 90-day operationalization roadmap: Days 1-30 for drafting and stakeholder alignment, Days 31-60 for approval and communication, Days 61-90 for embedding into operations. ISO 42001 Clause 5.2 requires a documented AI policy, and the charter is how you deliver it.
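As a small illustration, the three 30-day phases of the charter roadmap can be encoded as a lookup so a program office can tell which focus applies on any given day. The function name `charter_phase` is hypothetical; the phase boundaries come from the roadmap described above.

```python
# Phases of the 90-day charter roadmap: (first day, last day, focus).
CHARTER_PHASES = [
    (1, 30, "Drafting and stakeholder alignment"),
    (31, 60, "Approval and communication"),
    (61, 90, "Embedding into operations"),
]


def charter_phase(day: int) -> str:
    """Return the roadmap focus for a given day of the charter rollout."""
    for first, last, focus in CHARTER_PHASES:
        if first <= day <= last:
            return focus
    raise ValueError(f"day {day} is outside the 90-day roadmap")


print(charter_phase(45))  # Approval and communication
```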

The charter also establishes your governance committee's authority boundaries — critical for avoiding the "committee that recommends but can't enforce" trap that undermines most governance programs.

8-Stage Committee Implementation

This is the operational backbone of your governance program. Our proprietary 8-stage framework takes you from executive mandate to continuous monitoring in 120 days — with a 30% buffer built in for the reality of enterprise change management.
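The text does not say how the 30% buffer relates to the 120 days. A worked sketch, assuming the buffer is added on top of planned work (total = work × 1.3), shows how much time is left for the stages themselves. The helper `split_buffer` is hypothetical.

```python
from typing import Tuple


def split_buffer(total_days: float, buffer_rate: float) -> Tuple[float, float]:
    """Split a schedule that already includes a buffer into (planned work, buffer).

    Assumes the buffer sits on top of planned work: total = work * (1 + buffer_rate).
    """
    work = total_days / (1 + buffer_rate)
    return work, total_days - work


work, buffer = split_buffer(120, 0.30)
print(f"{work:.1f} planned days + {buffer:.1f} buffer days")
# 92.3 planned days + 27.7 buffer days
```

Under the other possible reading (30% of the 120 days held in reserve), the plan would instead schedule 84 working days and keep 36 in reserve, so it is worth confirming which convention your program uses.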

Each stage maps simultaneously to ISO 42001 clauses, NIST AI RMF functions, and EU AI Act articles. This triple alignment is a core TJS differentiator. Stage-by-stage deliverables include RACI matrices, risk registers, evaluation criteria, audit schedules, and incident response procedures — not theoretical concepts, but templates you can fill in and use.

The framework also includes decision tollgates between stages — hard go/no-go checkpoints where the committee reviews standardized artifacts before advancing. This prevents the common failure mode of rushing to deployment without completing foundational governance work.
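A go/no-go tollgate can be sketched as a check that every required artifact is both complete and committee-approved. Which artifacts a given stage transition requires is an assumption here; the artifact names reuse deliverables listed above, and `Artifact` and `tollgate_decision` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple


@dataclass
class Artifact:
    name: str
    complete: bool
    approved_by_committee: bool


def tollgate_decision(required: Set[str], submitted: List[Artifact]) -> Tuple[str, List[str]]:
    """GO only when every required artifact is both complete and committee-approved."""
    ready = {a.name for a in submitted if a.complete and a.approved_by_committee}
    missing = sorted(required - ready)
    decision = "GO" if not missing else "NO-GO"
    return decision, missing


# Hypothetical gate requirements for one stage transition.
required = {"RACI matrix", "Risk register", "Evaluation criteria"}
submitted = [
    Artifact("RACI matrix", complete=True, approved_by_committee=True),
    Artifact("Risk register", complete=True, approved_by_committee=False),
]
print(tollgate_decision(required, submitted))
# ('NO-GO', ['Evaluation criteria', 'Risk register'])
```

Returning the missing list alongside the decision gives the committee a concrete remediation list rather than a bare rejection.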

Acceptable Use Policy

Someone at your organization is already using ChatGPT to draft customer communications. Marketing is running AI-generated campaigns. Legal might be reviewing contracts with AI assistance. An Acceptable Use Policy defines what's allowed, what's prohibited, and how you'll enforce the boundaries.

Our AUP implementation guide includes a 90-day phased rollout, sample prohibited-use language, and a risk classification system that rates real tools (ChatGPT, Claude, Copilot, Midjourney) by organizational risk level. It also includes intake forms for requesting new AI tool approvals — so innovation isn't blocked, just governed.

The key insight: a good AUP doesn't just list rules. It classifies AI tools into risk tiers (Low, Medium, High, Prohibited) and applies proportionate controls to each tier. A spell checker doesn't need the same oversight as a credit decisioning model.
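Proportionate controls by tier can be sketched as a simple mapping applied to each intake request. The four tier names come from the text; the specific controls per tier and the `evaluate_request` helper are hypothetical, and the tool-to-tier assignments in the usage lines are illustrative only.

```python
from enum import Enum
from typing import Dict, List


class Tier(Enum):
    LOW = "Low"
    MEDIUM = "Medium"
    HIGH = "High"
    PROHIBITED = "Prohibited"


# Hypothetical controls per tier; a real AUP defines its own.
CONTROLS: Dict[Tier, List[str]] = {
    Tier.LOW: ["General AUP rules apply"],
    Tier.MEDIUM: ["Manager approval", "No confidential data in prompts"],
    Tier.HIGH: ["Committee approval via intake form", "Human review of outputs", "Usage logging"],
    Tier.PROHIBITED: [],
}


def evaluate_request(tool: str, tier: Tier) -> dict:
    """Turn an intake request into an allow/deny decision plus the controls that apply."""
    allowed = tier is not Tier.PROHIBITED
    return {"tool": tool, "tier": tier.value, "allowed": allowed, "controls": CONTROLS[tier]}


print(evaluate_request("Spell checker", Tier.LOW))
print(evaluate_request("Credit decisioning model", Tier.HIGH))
```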

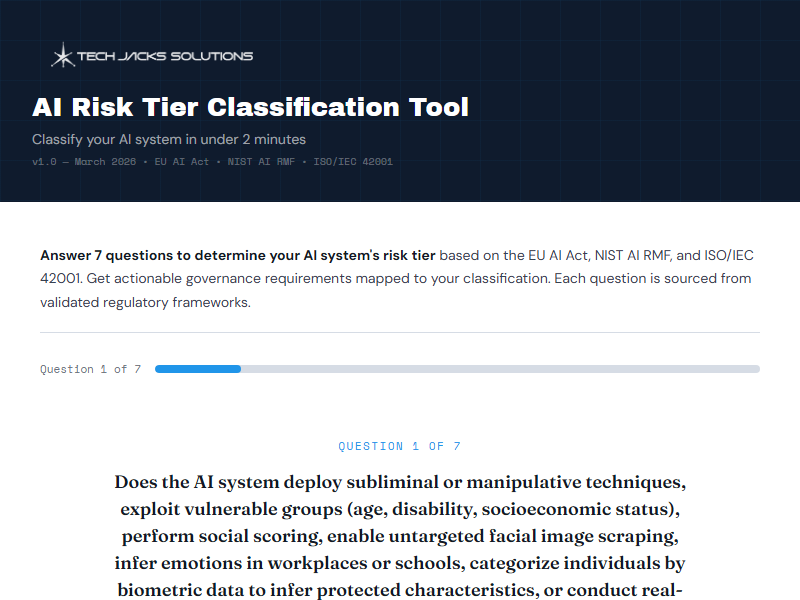

AI Risk Assessment & Register

You know what AI exists — now assess how dangerous it is. For each inventoried system, evaluate: who is using it, what data it accesses, what it's integrated into, what permissions it holds, and what regulatory classification applies under the EU AI Act.

Build a risk register using a 5×5 scoring matrix (likelihood × impact) and map risk tiers to proportionate governance controls. A system processing millions of SSNs (Social Security Numbers or other national IDs) for years is fundamentally different from an internal chatbot answering HR questions, and your governance intensity should reflect that difference.

This step is what separates real governance from checkbox compliance. The risk assessment determines which AI systems get lightweight oversight and which ones need full tollgates, mandatory documentation, third-party validation, and continuous monitoring.
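A minimal sketch of the 5×5 scoring step and the tier-to-oversight mapping, assuming hypothetical score cut-offs (15+ High, 8 to 14 Medium, below 8 Low). The Low and High control lists follow the text; the Medium row and the example ratings are assumptions.

```python
from typing import Dict, List


def risk_score(likelihood: int, impact: int) -> int:
    """5x5 matrix: likelihood and impact are each rated 1 (lowest) to 5 (highest)."""
    if not (1 <= likelihood <= 5 and 1 <= impact <= 5):
        raise ValueError("likelihood and impact must be between 1 and 5")
    return likelihood * impact


def risk_tier(score: int) -> str:
    """Map a 1-25 score to a tier. The cut-offs here are hypothetical."""
    if score >= 15:
        return "High"
    if score >= 8:
        return "Medium"
    return "Low"


# Low and High controls follow the text; the Medium row is an assumed middle ground.
OVERSIGHT: Dict[str, List[str]] = {
    "Low": ["Lightweight oversight"],
    "Medium": ["Decision tollgates", "Mandatory documentation"],
    "High": ["Full tollgates", "Mandatory documentation",
             "Third-party validation", "Continuous monitoring"],
}

# Hypothetical register entries: (system, likelihood, impact).
register = [
    ("Internal HR FAQ chatbot", 2, 2),
    ("National ID processing pipeline", 4, 5),
]
for system, likelihood, impact in register:
    score = risk_score(likelihood, impact)
    tier = risk_tier(score)
    print(f"{system}: score {score}, tier {tier}, controls {OVERSIGHT[tier]}")
```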

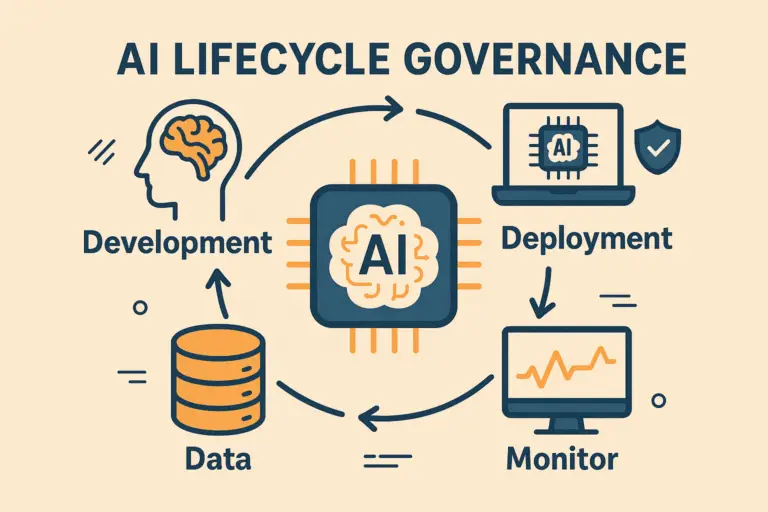

AI Lifecycle Framework

Now that you know your risk posture, adopt lifecycle controls proportionate to what you found. The 7-stage AI lifecycle — from Planning & Design through Retirement & Decommissioning — provides the ongoing governance engine that keeps your AI systems safe, compliant, and effective over time.

Each lifecycle stage includes committee oversight and decision tollgates. A low-risk internal tool might pass through tollgates with minimal documentation. A high-risk customer-facing system triggers full conformity assessment, bias testing, explainability review, and human-in-the-loop validation before advancing.

The lifecycle framework is iterative — feedback from monitoring (Stage 6) triggers reassessment of earlier stages. Models drift, regulations evolve, business contexts change. The framework ensures your governance adapts rather than becoming stale documentation that nobody reads.
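The tollgate and feedback behavior can be sketched as a small state transition function. Only the stages the text names are labeled; calling Stage 6 "Monitoring" is an assumption, and `next_stage` and `tollgate_checks` are hypothetical helpers. The high-risk checks are the ones listed above.

```python
from typing import List, Optional

MONITORING, RETIREMENT = 6, 7
# Only stages named in the text are labeled; the Stage 6 name is assumed.
STAGE_NAMES = {1: "Planning & Design", MONITORING: "Monitoring",
               RETIREMENT: "Retirement & Decommissioning"}

HIGH_RISK_CHECKS = ["Conformity assessment", "Bias testing",
                    "Explainability review", "Human-in-the-loop validation"]
LOW_RISK_CHECKS = ["Minimal documentation"]


def tollgate_checks(high_risk: bool) -> List[str]:
    """Evidence the committee expects at each tollgate, scaled to risk."""
    return HIGH_RISK_CHECKS if high_risk else LOW_RISK_CHECKS


def next_stage(current: int, tollgate_passed: bool,
               reassess_from: Optional[int] = None, retire: bool = False) -> int:
    """Return the stage a system moves to after its current tollgate review."""
    if current == RETIREMENT:
        raise ValueError("system is already retired")
    if current == MONITORING:
        if reassess_from is not None:
            # Drift, a regulatory change, or a new business context loops back.
            if not 1 <= reassess_from < MONITORING:
                raise ValueError("reassessment must return to an earlier stage")
            return reassess_from
        # Monitoring continues until the committee approves retirement.
        return RETIREMENT if retire and tollgate_passed else MONITORING
    # Stages 1-5: hard go/no-go; a failed tollgate keeps the system where it is.
    return current + 1 if tollgate_passed else current


print(next_stage(3, tollgate_passed=False))                  # 3
print(next_stage(6, tollgate_passed=True, reassess_from=2))  # 2
print(STAGE_NAMES[next_stage(6, tollgate_passed=True, retire=True)])  # Retirement & Decommissioning
```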

The TJS 8-Stage AI Governance Committee Framework

AI Governance for Your Role

RACI Key: R = Responsible | A = Accountable | C = Consulted | I = Informed
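A common RACI convention is that each activity has exactly one Accountable role and at least one Responsible role. The sketch below checks a matrix against that convention; the activities, roles, and the `raci_problems` helper are hypothetical.

```python
from typing import Dict, List

# Hypothetical RACI matrix: activity -> {role: letter}.
RACI: Dict[str, Dict[str, str]] = {
    "Approve AI use case": {"Committee Chair": "A", "Risk Lead": "R", "Legal": "C", "CISO": "I"},
    "Maintain AI inventory": {"CISO": "A", "IT Ops": "R", "Risk Lead": "C"},
    "Run bias testing": {"Data Science": "R", "Risk Lead": "C"},
}


def raci_problems(matrix: Dict[str, Dict[str, str]]) -> List[str]:
    """Flag activities without exactly one Accountable or without any Responsible."""
    problems = []
    for activity, roles in matrix.items():
        letters = list(roles.values())
        accountable = letters.count("A")
        if accountable != 1:
            problems.append(f"{activity}: needs exactly one Accountable, found {accountable}")
        if "R" not in letters:
            problems.append(f"{activity}: no Responsible role assigned")
    return problems


for problem in raci_problems(RACI):
    print(problem)
# Run bias testing: needs exactly one Accountable, found 0
```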

While 78% of businesses use AI across functions, only 28% (McKinsey, 2024) report active CEO involvement in shaping AI strategy. This leadership gap can lead to missed opportunities and underperformance. McKinsey's State of AI 2025 report links direct CEO involvement in AI governance to stronger EBIT (earnings before interest and taxes) results. Reframing AI governance as an enabler of innovation rather than a compliance burden can make all the difference.

You’ve probably seen this before. A shiny new technology comes along, promising to change everything, and compliance is left scrambling. Someone’s using ChatGPT to review contracts. Marketing is pushing out AI-generated campaigns. IT just rolled out “AI-powered” tools without looping anyone in. The rules seem to change every other month.

Your employees are already using AI tools you don’t know about. They’re feeding company data into ChatGPT, running models through third-party APIs, and building “quick experiments” that somehow ended up in production. You need technical solutions that give you visibility into what’s actually running.
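One common visibility technique is scanning web proxy logs for traffic to AI services. A minimal sketch, assuming a simplified log format of `<timestamp> <user> <destination-host>` and a hypothetical watchlist and allowlist; real proxy logs, domain lists, and sanctioned endpoints will differ.

```python
from collections import Counter
from typing import Iterable

# Hypothetical watchlist and allowlist; tune both to your environment.
AI_SERVICE_DOMAINS = {"openai.com", "chatgpt.com", "claude.ai", "anthropic.com", "gemini.google.com"}
SANCTIONED_HOSTS = {"api.openai.com"}  # e.g. an API account approved through AUP intake


def _matches(host: str, domains: set) -> bool:
    """True if host is one of the domains or a subdomain of one."""
    return any(host == d or host.endswith("." + d) for d in domains)


def find_shadow_ai(log_lines: Iterable[str]) -> Counter:
    """Count requests per (user, host) to AI services that are not sanctioned."""
    hits: Counter = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) < 3:
            continue  # skip malformed lines
        user, host = parts[1], parts[2].lower()
        if _matches(host, AI_SERVICE_DOMAINS) and host not in SANCTIONED_HOSTS:
            hits[(user, host)] += 1
    return hits


logs = [
    "2026-03-02T09:14:00 alice chatgpt.com",
    "2026-03-02T09:15:10 alice chatgpt.com",
    "2026-03-02T09:20:42 bob api.openai.com",
    "2026-03-02T10:01:05 carol claude.ai",
    "2026-03-02T10:03:00 carol intranet.example.com",
]
for (user, host), count in find_shadow_ai(logs).most_common():
    print(user, host, count)
# alice chatgpt.com 2
# carol claude.ai 1
```

The output is a lead list for the inventory, not an enforcement action: each hit is a conversation about whether the use should be documented, approved, or stopped.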

When your AI system makes a discriminatory hiring decision, who gets sued? The CEO? The data scientist? The vendor? Legal precedent for AI liability is practically nonexistent, insurance carriers are still figuring out coverage, and your existing risk frameworks weren’t designed for systems that learn and change after deployment.

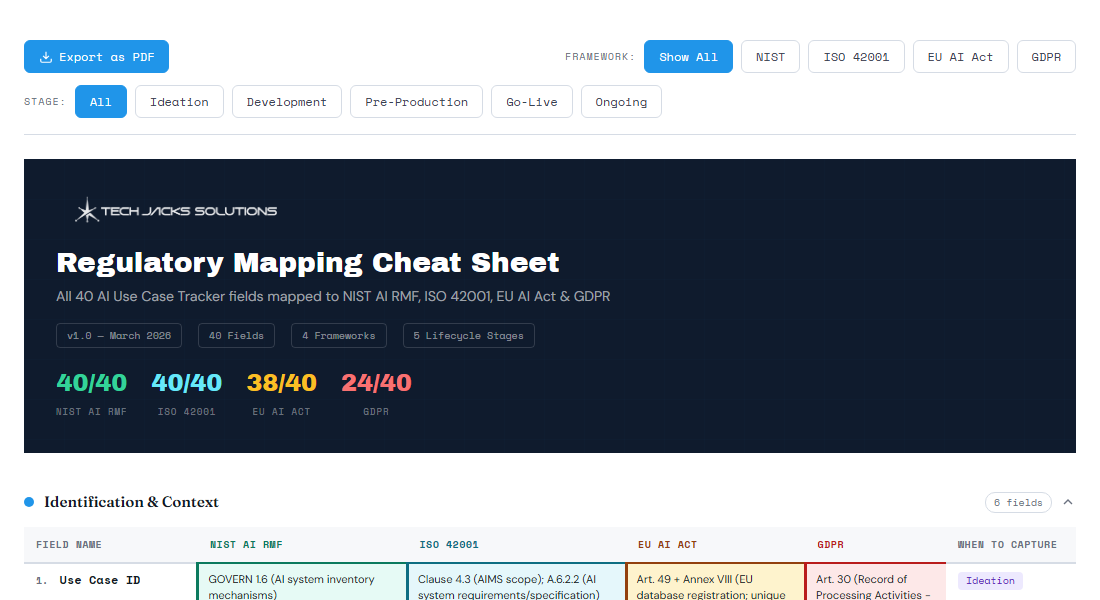

Framework Comparison

Which framework fits your organization?

| Framework | Scope | Mandatory? | Key Focus | Best For | TJS Coverage |
|---|---|---|---|---|---|
| EU AI Act | EU market AI systems | Mandatory | Risk classification, prohibited uses | Any org deploying AI in the EU | Full Guide |
| NIST AI RMF 1.0 | US voluntary framework | Voluntary | Govern, Map, Measure, Manage | US orgs, federal contractors | Stage-mapped |
| ISO/IEC 42001 | International certifiable standard | Voluntary | AI Management System (AIMS) | Orgs seeking certification | Resource Center |
| OECD AI Principles | 38+ member countries | Voluntary | Trustworthy AI, human rights | Policy-level alignment | Referenced |
| IEEE Ethically Aligned Design (EAD) | Global ethical design guidance | Voluntary | Ethical design of autonomous systems | Technical implementation | Referenced |
| CSA AI Governance | Cloud/enterprise security | Voluntary | Org security responsibilities | Enterprise security teams | Core source |
| India MeitY 7 Sutras | India AI systems | Voluntary + DPDPA Mandatory | Innovation-first governance, data protection, sector regulation | Orgs operating in or serving India | Full Hub |
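To turn the table into a first-pass shortlist, a sketch can map organizational profile flags to frameworks and list mandatory obligations first. The frameworks and obligations come from the table; the flag names and the `shortlist` helper are hypothetical, and the result is a starting point for legal review, not a determination of applicability.

```python
from typing import List, Set, Tuple

# (profile flag, framework, obligation); flag names are hypothetical.
RULES = [
    ("eu_market", "EU AI Act", "Mandatory"),
    ("us_or_federal", "NIST AI RMF 1.0", "Voluntary"),
    ("certification", "ISO/IEC 42001", "Voluntary"),
    ("policy_alignment", "OECD AI Principles", "Voluntary"),
    ("technical_design", "IEEE EAD", "Voluntary"),
    ("security_team", "CSA AI Governance", "Voluntary"),
    ("india", "India MeitY 7 Sutras", "Voluntary + DPDPA Mandatory"),
]


def shortlist(profile: Set[str]) -> List[Tuple[str, str]]:
    """Return (framework, obligation) pairs matching the profile, mandatory obligations first."""
    picks = [(framework, obligation) for flag, framework, obligation in RULES if flag in profile]
    # sorted() is stable, so table order is kept within each group.
    return sorted(picks, key=lambda pick: "Mandatory" not in pick[1])


print(shortlist({"us_or_federal", "india"}))
# [('India MeitY 7 Sutras', 'Voluntary + DPDPA Mandatory'), ('NIST AI RMF 1.0', 'Voluntary')]
```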

AI Governance Toolkit

Practical tools derived from 130+ primary sources across all hub articles — not opinions.

The 7-Stage AI Lifecycle

Committee oversight operates across every stage — with decision tollgates at each transition.

◆ = Decision Tollgate — Go/No-Go checkpoint requiring committee review of standardized artifacts

AI Governance Deep Dives

Latest regulation and governance analysis from our AI News Hub intelligence pipeline.
